AI CERTS

2 months ago

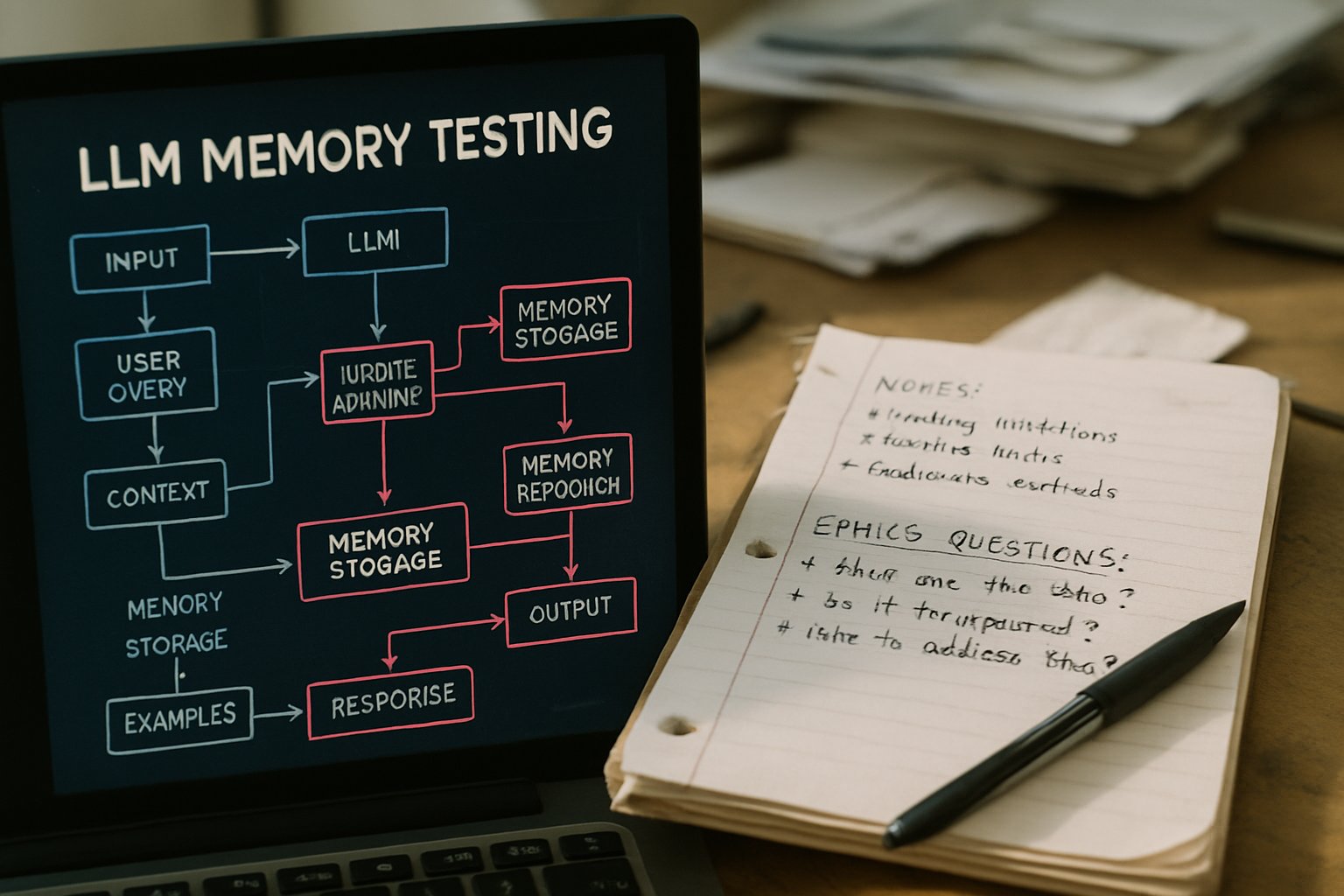

Memvid’s AI Bully Stunt Reveals LLM Memory Testing Challenges

Memvid's campaign to hire a 'professional bully' for chatbots doubles as live LLM Memory Testing that executives can reference in sales decks.

Business Insider broke the story on March 11, 2026.

The stunt also highlights rising industry interest in reliable long-term context retention.

This article examines the strategy, risks, and lessons for teams pursuing efficient LLM Memory Testing.

Stunt Highlights Memory Gaps

The advertised job pays $100 per hour for an eight-hour session.

Participants must stream their screen while provoking popular chatbots.

Additionally, organizers will splice memorable glitches into social clips for promotion.

The process resembles structured memory testing used by research labs, yet the rhetoric is deliberately edgy.

Nevertheless, Memvid insists the spectacle surfaces authentic pain points.

A founder told Business Insider, “People constantly have to repeat themselves to chatbots.”

Those repeats illustrate costly productivity drag.

Memvid hopes the footage will dramatize its claimed 60% retrieval accuracy boost.

The stunt transforms mundane annoyances into viral evidence.

However, deeper technical context explains why these failures persist.

Inside Startup Product Pitch

Unlike traditional RAG pipelines, the company stores everything in a single .mv2 Smart Frame file.

The company reports that retrieval latency stays below 17 milliseconds.

The vendor also advertises 15-fold compression and up to 90% cost savings.

Critics still seek independent benchmarks before accepting those performance numbers.

- 157 documents ingested per second

- Sub-17 millisecond search latency

- +60% retrieval accuracy versus baseline RAG

- 80-93% infrastructure cost reduction

Leadership frames these figures as evidence that robust memory reduces cloud spend.

Their core value proposition speaks directly to teams budgeting for recurring LLM Memory Testing cycles.

Yet the spectacular job listing invites immediate public critique.

Engineers want proof that flashy marketing aligns with disciplined architecture.

The pitch promises speed and thrift.

Nevertheless, safety professionals emphasize red-teaming standards over slogans.

Red-Teaming Industry Best Practices

Major labs already employ internal red-teams that simulate adversaries.

Anthropic, Microsoft, and OpenAI publish guidelines for structured challenge design.

Moreover, best practice documents warn about tester fatigue and emotional toll.

Memvid's stunt echoes that tradition but adds theatrical flair.

Experts argue paid one-day sessions capture only narrow slices of failure modes.

By contrast, sustained LLM Memory Testing across weeks surfaces long-tail regressions.

Worker protection also matters.

Therefore, successful programs offer counseling, escalation channels, and clear content policies.

Rigorous red-teaming demands steady process, not just viral jobs.

Verifying vendor claims, in turn, requires an evidence-driven approach.

Evaluating Startup Vendor Promises

Technical buyers scrutinize any claim of 60% retrieval accuracy uplift.

Consequently, they request reproducible notebooks, open benchmarks, and raw logs.

RAG baselines vary widely across datasets, hardware, and prompt design.
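A quick calculation shows why the baseline matters so much. The sketch below assumes the 60% figure is a relative uplift; the reported claim does not say whether it is relative or measured in percentage points. The function name and the baseline values are illustrative assumptions, not Memvid data.

```python
def apply_relative_uplift(baseline_accuracy: float, uplift: float) -> float:
    """Return accuracy after a relative uplift, capped at 1.0 (100%)."""
    if not 0.0 <= baseline_accuracy <= 1.0:
        raise ValueError("baseline_accuracy must be between 0 and 1")
    return min(1.0, baseline_accuracy * (1.0 + uplift))


# Hypothetical baselines; none of these numbers come from the vendor.
for baseline in (0.30, 0.50, 0.70):
    improved = apply_relative_uplift(baseline, 0.60)
    print(f"baseline {baseline:.2f} -> {improved:.2f}")
```

Under this assumption, a weak 30% baseline only reaches 48%, while a 70% baseline cannot improve by 60% at all without exceeding 100%. The same headline number therefore means very different things depending on the starting point.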

Furthermore, single-file approaches may sacrifice easy incremental updates in exchange for compactness.

Teams planning intensive memory testing will need sandbox environments before production rollout.

Independent labs could perform side-by-side LLM Memory Testing under equal loads.

Such studies would generate objective critique beyond marketing clips.
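A side-by-side comparison of this kind can be sketched in a few lines. The example below is a minimal harness, not any vendor's tooling: the two retriever functions, the document IDs, and the query set are hypothetical stand-ins, and recall@k is used as one possible accuracy metric.

```python
import time
from statistics import mean
from typing import Callable, Dict, List, Set

Retriever = Callable[[str, int], List[str]]


def recall_at_k(retrieved: List[str], relevant: Set[str]) -> float:
    """Fraction of relevant document IDs that appear in the retrieved list."""
    if not relevant:
        return 0.0
    return len(set(retrieved) & relevant) / len(relevant)


def evaluate(retriever: Retriever, queries: Dict[str, Set[str]], k: int = 3) -> Dict[str, float]:
    """Run every query through one retriever; average recall and latency."""
    recalls, latencies_ms = [], []
    for query, relevant in queries.items():
        start = time.perf_counter()
        retrieved = retriever(query, k)
        latencies_ms.append((time.perf_counter() - start) * 1000)
        recalls.append(recall_at_k(retrieved, relevant))
    return {"mean_recall": mean(recalls), "mean_latency_ms": mean(latencies_ms)}


# Toy stand-ins for the two systems under test (hypothetical).
def baseline_retriever(query: str, k: int) -> List[str]:
    return ["doc1", "doc9", "doc8"][:k]


def candidate_retriever(query: str, k: int) -> List[str]:
    return ["doc1", "doc2", "doc7"][:k]


# Each query maps to the set of document IDs judged relevant (made up here).
QUERIES = {"refund policy": {"doc1", "doc2"}, "user name": {"doc1"}}

for name, fn in (("baseline", baseline_retriever), ("candidate", candidate_retriever)):
    print(name, evaluate(fn, QUERIES))
```

The key design choice is that both systems see the same queries, the same relevance labels, and the same value of k, so any difference in the reported numbers comes from the retrievers rather than the test setup.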

Transparent methodology remains the gold standard.

Workforce welfare is the next concern.

Worker Welfare And Concerns

Stress testing chatbots often involves crafting hateful or traumatic prompts.

Therefore, testers may encounter disturbing content repeatedly.

Occupational psychologists classify that exposure as potential secondary trauma.

Guidelines suggest rotation schedules, debrief sessions, and mental health support.

However, the 'professional bully' label could publicly downplay those risks.

Ethicists urge employers to supply counseling hotlines and explicit escalation paths.

Additionally, legal teams must secure informed consent before recording or distributing footage.

Any robust LLM Memory Testing program should document these safeguards transparently.

Protecting testers sustains program effectiveness.

Meanwhile, the market has already begun to deliver its verdict.

Market Reaction And Critique

Social feeds embraced the prank-like concept immediately.

Yet many engineers offered pointed critique of the terminology and methodology.

Some praised creative outreach that surfaces memory testing pain quickly.

Others warned the 'professional bully' frame distracts from serious evaluation.

Investors appear intrigued by low infrastructure claims but wary of unverified benchmarks.

Competing vector database vendors responded by highlighting their own mature audit tooling.

Analysts predict continued consolidation around memory solutions that prove measurable reliability.

For practitioners, disciplined LLM Memory Testing remains the decisive buying criterion.

Public opinion mixes excitement with skepticism.

Therefore, enterprise architects must weigh implications carefully.

Implications For Enterprise Teams

Enterprise adoption depends on stable recall, predictable cost, and governance alignment.

Teams already plan quarterly memory testing audits for compliance reporting.

Integrating a single-file memory layer could simplify stack maintenance.

However, migration risk grows if a proprietary format locks data inside opaque binaries.

Enterprises should demand contractual service-level objectives covering latency and accuracy.
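A service-level objective of this kind can be checked mechanically during a pilot. The sketch below is one possible approach, assuming the team logs per-query latency and a correct/incorrect flag; the thresholds and sample values are hypothetical and do not come from any contract or vendor.

```python
import math
from typing import List


def percentile(values: List[float], pct: float) -> float:
    """Nearest-rank percentile of a non-empty list, with pct in (0, 100]."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def meets_slo(latencies_ms: List[float], correct_flags: List[bool],
              max_p95_ms: float, min_accuracy: float) -> bool:
    """True when both the p95 latency and the accuracy targets are met."""
    p95 = percentile(latencies_ms, 95)
    accuracy = sum(correct_flags) / len(correct_flags)
    return p95 <= max_p95_ms and accuracy >= min_accuracy


# Made-up pilot measurements for illustration only.
latencies = [12.0, 14.5, 15.1, 16.8, 40.2]
correct = [True, True, True, False, True]

print(meets_slo(latencies, correct, max_p95_ms=50.0, min_accuracy=0.75))
print(meets_slo(latencies, correct, max_p95_ms=17.0, min_accuracy=0.75))
```

Checking a tail percentile rather than the average matters here: four of the five sample queries finish under 17 milliseconds, yet the single slow query is enough to miss a 17 millisecond p95 target.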

Additionally, leaders can upskill staff via the AI Prompt Engineer Essential™ certification.

Structured training complements ongoing LLM Memory Testing and model validation workflows.

Therefore, procurement teams must craft pilot scopes that mirror real production chat flows.

Successful rollouts balance innovation, safety, and skills.

Decision makers should watch the initial bully sessions but wait for audited reports.

Conclusion

Memory drives trust in conversational systems.

However, real-world tests still expose stubborn gaps.

The 'professional bully' experiment dramatizes those gaps for both investors and engineers.

Independent critique will clarify real performance value.

Nevertheless, rigorous LLM Memory Testing across time, domains, and workloads remains mandatory.

Enterprises should validate vendor metrics, demand clear safeguards, and protect testers from harm.

Structured programs supported by certified staff are likely to outperform one-off stunts.

Teams that combine disciplined LLM Memory Testing with continuous skill building can unlock durable AI value.

Act now: schedule a pilot, review ethical guardrails, and pursue specialized credentials for competitive advantage.
