AI CERTS


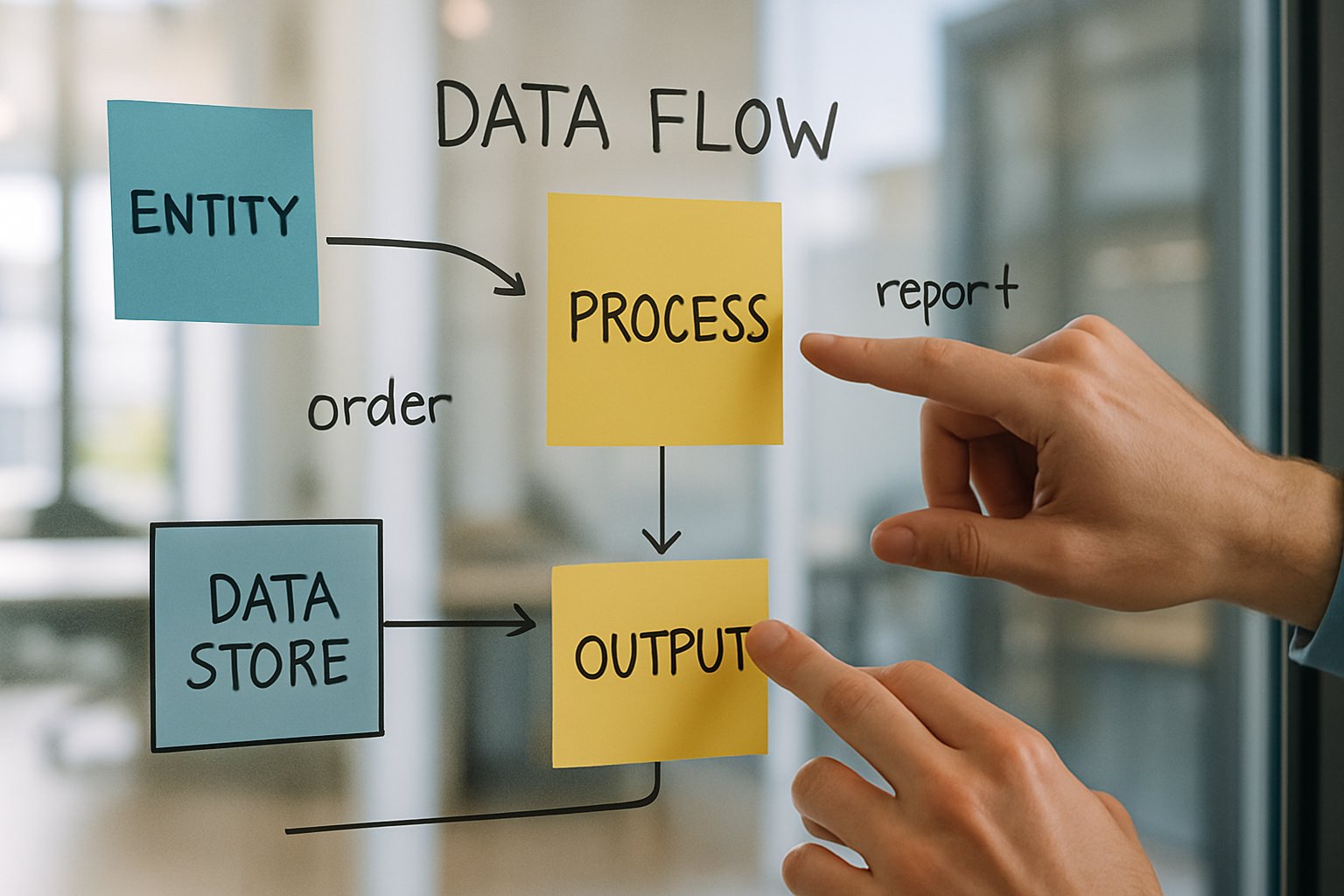

Data Quality Governance for Agentic AI Pipelines

Without rigorous controls, agent workflows can drift, expose secrets, or even delete critical systems.

McKinsey reports that 62% of enterprises already experiment with autonomous systems, yet governance maturity lags.

Deloitte found only 21% possess a robust agentic oversight model.

Therefore, practitioners need pragmatic guidance that blends policy, observability, and secure engineering.

This article explores the evolving playbook, highlighting regulations, adoption gaps, lifecycle guardrails, observability, reliability integration, and pipeline security best practices.

Along the way, we reference certifications that can upskill professionals for this emerging mandate.

Regulation Shapes New Stakes

Singapore stunned observers in January 2026 with the Model Governance Framework for Agentic AI.

Released at the World Economic Forum, the document introduces risk tiers, human accountability, and mandatory technical controls.

Moreover, it is the first framework to spotlight continuous Data Quality Governance for autonomous agents.

Regulators elsewhere quickly took notice.

Officials at Singapore's Infocomm Media Development Authority (IMDA) stressed that organizations can adopt such systems confidently once data risks are bounded.

Meanwhile, cloud vendors echoed that message.

Google’s Gemini Enterprise Agent Platform touts an agent gateway, lineage capture, and policy enforcement aligned with the new rules.

In contrast, academic voices argue for deeper transparency, citing the MIT AI Agent Index, which shows limited public safety disclosures.

Nevertheless, the regulatory drumbeat is pushing the market toward auditable data controls.

Early policy moves elevate Data Quality Governance from optional to essential.

Consequently, enterprises must reassess readiness before scaling deployments.

Enterprise adoption trends reveal why that reassessment cannot wait.

Enterprise Adoption Gap Widens

Market appetite for agents keeps accelerating, yet governance readiness lags.

McKinsey, Deloitte, and MIT studies together paint a consistent picture.

- McKinsey: 62% experiment with agentic AI; data quality cited as competitive edge.

- Deloitte: 74% plan moderate deployment within two years; only 21% have mature governance.

- MIT/BCG: Adoption climbed to 35% in 2023, with a further 44% of organizations planning to adopt.

In contrast, only a fraction of respondents track lineage metrics in real time.

Consequently, executives fear reputational damage from uncontrolled data drift.

Moreover, the AI Agent Index confirms limited public safety evidence from most vendors.

Therefore, boards increasingly request concrete Data Quality Governance metrics before approving new projects.

These numbers expose a yawning capability gap.

Consequently, organizations need operational guardrails that embed quality checks into every agent cycle.

The next section examines those data lifecycle guardrails in detail.

Data Lifecycle Guardrails Matter

Effective guardrails start with a living inventory of the data assets that agent runtimes can access.

Alation and similar vendors recommend assigning stewards, SLAs, and quality grades to each source.
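As a minimal sketch of such an inventory entry, the Python below models one data asset with a steward, a freshness SLA, and a quality grade. The field names, the A-to-D grading scale, and the sample assets are assumptions made for illustration; they are not taken from Alation or any other vendor's product.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass(frozen=True)
class DataAsset:
    """One entry in the inventory of data assets that agents may read."""
    name: str
    steward: str               # accountable owner (hypothetical field)
    freshness_sla: timedelta   # maximum tolerated age of the data
    quality_grade: str         # "A" (best) to "D" (worst), a hypothetical scale


def assets_allowed_for_agents(inventory, minimum_grade="B"):
    """Return the assets whose grade is at least as good as minimum_grade."""
    # Single-letter grades sort alphabetically, so "A" <= "B" means A is good enough.
    return [asset for asset in inventory if asset.quality_grade <= minimum_grade]


inventory = [
    DataAsset("orders", "sales-data-team", timedelta(hours=1), "A"),
    DataAsset("legacy_crm_export", "unassigned", timedelta(days=7), "D"),
]
print([asset.name for asset in assets_allowed_for_agents(inventory)])  # ['orders']
```

Keeping the grade on the asset itself lets a runtime filter sources before an agent ever plans against them.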

Moreover, every agent decision should be traceable through immutable lineage metadata.

IBM researchers warn that stale feature stores can silently sabotage forecasting workloads.

Meanwhile, policy engines lose effectiveness when metadata schemas grow inconsistent across teams.

McKinsey argues that real-time validation, anomaly detection, and drift checks move Data Quality Governance from periodic to continuous.

Consequently, teams attach freshness and completeness scores directly to the datasets passed into planning loops.
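One way to compute such scores is sketched below. The scoring formulas are assumptions chosen for simplicity (completeness as the share of required values present, freshness as a linear decay toward a maximum age); real deployments may define them differently.

```python
from datetime import datetime, timedelta, timezone


def completeness(rows, required_fields):
    """Fraction of required field values that are present (not None)."""
    total = len(rows) * len(required_fields)
    if total == 0:
        return 0.0
    present = sum(
        1 for row in rows for field in required_fields if row.get(field) is not None
    )
    return present / total


def freshness(last_updated, max_age, now=None):
    """1.0 for data updated just now, falling linearly to 0.0 at max_age."""
    now = now or datetime.now(timezone.utc)
    age = now - last_updated
    return min(1.0, max(0.0, 1.0 - age / max_age))


# Fixed clock so the example is reproducible.
now = datetime(2026, 1, 1, 12, 0, tzinfo=timezone.utc)
rows = [{"id": 1, "price": 9.5}, {"id": 2, "price": None}]
scores = {
    "completeness": completeness(rows, ["id", "price"]),
    "freshness": freshness(now - timedelta(minutes=30), timedelta(hours=1), now=now),
}
print(scores)  # {'completeness': 0.75, 'freshness': 0.5}
```

The resulting dictionary can travel with the dataset, so a planning loop can refuse inputs that fall below a chosen threshold.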

Policy enforcement further hardens the lifecycle.

Identity, least privilege, and runtime policy engines deny unsafe queries before damage occurs.
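A deny-by-default check of this kind can be sketched in a few lines. The agent names, actions, and grant table below are hypothetical; a production policy engine would load its rules from a managed store rather than a module-level dictionary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    agent_id: str
    action: str     # e.g. "read", "write", "delete"
    resource: str   # e.g. a table name


# Hypothetical grants: each agent identity gets only the pairs it needs.
GRANTS = {
    "forecast-agent": {("read", "sales"), ("read", "inventory")},
    "billing-agent": {("read", "invoices"), ("write", "invoices")},
}


def is_allowed(request):
    """Deny by default; allow only explicitly granted (action, resource) pairs."""
    return (request.action, request.resource) in GRANTS.get(request.agent_id, set())


print(is_allowed(Request("forecast-agent", "read", "sales")))    # True
print(is_allowed(Request("forecast-agent", "delete", "sales")))  # False
print(is_allowed(Request("unknown-agent", "read", "sales")))     # False
```

Because unknown identities and ungranted actions both fall through to a denial, a new agent has no access until someone grants it explicitly.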

Nevertheless, many organizations overlook write-back controls, leaving systems vulnerable to silent corruption.

Robust lifecycle controls turn abstract principles into daily engineering tasks.

Therefore, observability must complement these controls for rapid incident response.

The following section focuses on that observability layer.

Observability And Action Logs

Once data flows, incidents are inevitable; observability makes them recoverable.

Google, IBM, and several startups now bundle telemetry collectors with agent platforms.

Furthermore, immutable action logs record prompts, tool calls, responses, and downstream effects in append-only stores.
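To illustrate how an append-only log can make tampering detectable, the sketch below chains each entry to the previous one with a SHA-256 hash. This is one common technique, assumed here for illustration; the article does not say which mechanism any vendor uses, and the event fields are hypothetical.

```python
import hashlib
import json


class ActionLog:
    """In-memory append-only log whose entries are linked by hashes."""

    GENESIS = "0" * 64

    def __init__(self):
        self._entries = []

    def append(self, event):
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        payload = json.dumps(event, sort_keys=True)
        digest = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
        # Store a snapshot so later changes to the caller's dict do not leak in.
        self._entries.append(
            {"event": json.loads(payload), "prev": prev_hash, "hash": digest}
        )

    def verify(self):
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = self.GENESIS
        for entry in self._entries:
            payload = json.dumps(entry["event"], sort_keys=True)
            expected = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
            if entry["prev"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


log = ActionLog()
log.append({"agent": "forecast-agent", "tool": "sql_query", "status": "ok"})
log.append({"agent": "forecast-agent", "tool": "write_report", "status": "ok"})
print(log.verify())  # True
```

A real deployment would persist entries to write-once storage; the hash chain only detects changes, it does not prevent them.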

Those logs integrate with SIEM dashboards, bringing Pipeline Security and compliance teams into the same view.

Consequently, red-teamers can replay sessions to locate root causes within minutes.

Additionally, integration with incident chat channels ensures alerts reach human responders immediately.

However, observability only delivers value when paired with alerting thresholds tied to Data Quality Governance metrics.

For example, a spike in schema errors should trigger circuit breakers before runtimes write faulty records.
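A circuit breaker of this kind can be sketched as a sliding window over recent validation results. The window size and error-rate threshold below are illustrative assumptions, and the class name is hypothetical.

```python
from collections import deque


class SchemaErrorBreaker:
    """Blocks writes once the recent schema-error rate exceeds a threshold."""

    def __init__(self, window=100, max_error_rate=0.05):
        self.results = deque(maxlen=window)  # True means the record failed validation
        self.max_error_rate = max_error_rate
        self.open = False                    # open breaker = writes are blocked

    def record(self, failed):
        self.results.append(failed)
        window_full = len(self.results) == self.results.maxlen
        rate = sum(self.results) / len(self.results)
        if window_full and rate > self.max_error_rate:
            self.open = True  # stays open until a human resets it

    def allow_write(self):
        return not self.open


breaker = SchemaErrorBreaker(window=10, max_error_rate=0.2)
for _ in range(10):
    breaker.record(False)
print(breaker.allow_write())  # True
for _ in range(3):
    breaker.record(True)      # 3 of the last 10 records now fail validation
print(breaker.allow_write())  # False
```

Requiring a manual reset is a deliberate choice in this sketch: it forces a person to inspect the faulty source before the agent resumes writing.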

Meanwhile, supervisory agents can veto any action that violates policy, acting as runtime auditors.

Comprehensive telemetry closes feedback loops quickly.

In turn, engineering teams must own those loops alongside reliability teams.

The next section explores that cultural convergence.

DevOps, SRE Converge Here

Traditional DevOps already tracks release velocity, uptime, and incident response.

However, autonomous Agents introduce novel failure modes that require more granular service-level objectives.

SRE leaders therefore extend error budgets to cover hallucinations, goal drift, and data freshness breaches.
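The arithmetic behind an error budget carries over directly to agent-specific failures. In the sketch below, a "bad event" could be a hallucinated answer or a response built on stale data; the SLO target and the counts are made-up numbers for illustration.

```python
def error_budget_remaining(slo_target, total_events, bad_events):
    """Fraction of the error budget left; a negative value means it is overspent."""
    if not 0.0 < slo_target < 1.0:
        raise ValueError("slo_target must be strictly between 0 and 1")
    if total_events <= 0:
        raise ValueError("total_events must be positive")
    allowed_bad = (1.0 - slo_target) * total_events
    return 1.0 - bad_events / allowed_bad


# Hypothetical SLO: 99% of agent responses use data within its freshness SLA.
# 10,000 responses allow 100 breaches; 40 breaches so far leaves 60% of the budget.
remaining = error_budget_remaining(slo_target=0.99, total_events=10_000, bad_events=40)
print(round(remaining, 2))  # 0.6
```

When the remaining fraction approaches zero, a team following standard SRE practice would slow agent rollouts until quality recovers.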

Moreover, many teams map Data Quality Governance metrics directly into existing SRE dashboards.

A single red dashboard tile can now represent stale embeddings, unauthorized writes, or Pipeline Security violations.

Consequently, runbooks integrate rollback steps for both code and prompt versions.

Furthermore, canary deployments let teams observe agent behavior under limited blast radius.
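One simple way to limit blast radius is to route a fixed percentage of sessions to the new agent version. The sketch below uses deterministic hash-based bucketing, which is an assumption about how routing might be done rather than a description of any particular platform; the session identifiers are hypothetical.

```python
import hashlib


def use_canary(session_id, canary_percent):
    """Route a stable share of sessions (0 to 100 percent) to the canary agent."""
    digest = hashlib.sha256(session_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100   # stable bucket in the range 0..99
    return bucket < canary_percent


sessions = [f"session-{i}" for i in range(1000)]
canary_share = sum(use_canary(s, 5) for s in sessions) / len(sessions)
print(f"{canary_share:.1%} of sessions hit the canary")
```

Hashing the session identifier keeps each session on the same version for its whole lifetime, which makes before-and-after comparisons easier to interpret.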

Meanwhile, post-incident reviews examine training data defects alongside infrastructure outages.

Blending agent metrics with reliability practice can shorten recovery times considerably.

Nevertheless, operational controls still fail without dedicated security defenses.

We now turn to those defenses within the pipeline itself.

Securing Critical Pipelines

Pipeline Security extends beyond perimeter firewalls.

Identity isolation prevents shadow agents from impersonating production tasks.

Additionally, API gateways apply row-level and field-level policies, filtering restricted records and stripping sensitive fields before data reaches external calls.
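The gateway filtering described above can be sketched as a single pass over outgoing records. The sensitive field names and the region rule are hypothetical examples of policy, not values from any real gateway product.

```python
SENSITIVE_FIELDS = {"ssn", "email"}   # hypothetical fields to strip
ALLOWED_REGIONS = {"EU"}              # hypothetical row-level rule


def filter_for_external_call(rows):
    """Drop rows outside the allowed regions and strip sensitive fields."""
    return [
        {key: value for key, value in row.items() if key not in SENSITIVE_FIELDS}
        for row in rows
        if row.get("region") in ALLOWED_REGIONS
    ]


rows = [
    {"id": 1, "region": "EU", "email": "a@example.com", "total": 40},
    {"id": 2, "region": "US", "email": "b@example.com", "total": 75},
]
print(filter_for_external_call(rows))  # [{'id': 1, 'region': 'EU', 'total': 40}]
```

Applying both rules at the gateway means an agent never holds data it is not permitted to forward.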

IBM researchers advocate sandboxed execution to prevent accidental file deletions or secret leaks.

Furthermore, write-back transactions must pass reconciliation checks against source-of-truth systems.

Those checks add a final quality buffer when all else fails.
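A reconciliation check might look like the sketch below, which compares a proposed write-back with the current source-of-truth record. The version counter and the notion of immutable fields are assumptions introduced for this example.

```python
def reconcile(proposed, source_of_truth, immutable_fields):
    """Return the reasons to reject a proposed write-back (empty list = accept)."""
    current = source_of_truth.get(proposed["id"])
    if current is None:
        return ["record does not exist in source of truth"]
    problems = []
    if proposed["version"] != current["version"]:
        problems.append("stale write: record changed since the agent read it")
    for field in immutable_fields:
        if proposed.get(field) != current.get(field):
            problems.append(f"immutable field changed: {field}")
    return problems


source_of_truth = {42: {"id": 42, "version": 7, "customer_id": "C-1", "status": "open"}}
good = {"id": 42, "version": 7, "customer_id": "C-1", "status": "closed"}
bad = {"id": 42, "version": 6, "customer_id": "C-9", "status": "closed"}

print(reconcile(good, source_of_truth, ["customer_id"]))  # []
print(reconcile(bad, source_of_truth, ["customer_id"]))
```

Returning the full list of problems, rather than stopping at the first, gives reviewers more context when an agent's write is rejected.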

Data Quality Governance frameworks recommend pairing these controls with continuous penetration testing.

Moreover, time-bounded credentials reduce exposure if tokens leak during supply-chain attacks.
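The idea of a time-bounded credential can be illustrated with a signed token that carries its own expiry. The token format, the five-minute lifetime, and the hard-coded key below are assumptions for demonstration only; a production system would use an established token standard and a managed secret.

```python
import hashlib
import hmac

SECRET = b"demo-only-secret"  # placeholder; never hard-code real keys


def issue_token(agent_id, now, ttl_seconds=300):
    """Create a token of the form agent_id:expiry:signature."""
    expires = int(now) + ttl_seconds
    message = f"{agent_id}:{expires}".encode("utf-8")
    signature = hmac.new(SECRET, message, hashlib.sha256).hexdigest()
    return f"{agent_id}:{expires}:{signature}"


def is_valid(token, now):
    """Accept only tokens with a correct signature that have not yet expired."""
    try:
        agent_id, expires, signature = token.rsplit(":", 2)
        expires_at = int(expires)
    except ValueError:
        return False
    message = f"{agent_id}:{expires}".encode("utf-8")
    expected = hmac.new(SECRET, message, hashlib.sha256).hexdigest()
    return hmac.compare_digest(signature, expected) and now < expires_at


token = issue_token("forecast-agent", now=1_000)
print(is_valid(token, now=1_100))  # True: still inside the 300-second window
print(is_valid(token, now=1_400))  # False: expired, so a leaked copy is useless
```

Passing the clock in as a parameter keeps the example deterministic; real code would read the current time from the system.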

Consequently, security teams treat pipeline observability, lineage, and policy engines as a single integrated surface.

Layered Pipeline Security reduces blast radius across multi-agent workflows.

Consequently, professionals need new skills to design and audit such layers.

The next section outlines available upskilling paths.

Upskilling For Future Readiness

Governance demands cross-functional fluency spanning policy, architecture, and incident response.

Therefore, organizations invest heavily in targeted training.

Professionals can enhance their expertise with the AI Data™ certification.

The curriculum covers lineage modeling, real-time validation, and secure pipeline design tied to Data Quality Governance KPIs.

Moreover, many programs emphasize hands-on labs that integrate SRE tooling with agent control planes.

Additionally, communities of practice share playbooks and reusable policy templates.

Consequently, peer collaboration accelerates organizational learning curves.

Skilled professionals accelerate trustworthy deployments.

Nevertheless, a strategic outlook remains essential.

The conclusion synthesizes the lessons and offers a final call to action.

Conclusion

Agentic adoption is no longer hypothetical; production rollouts are underway across many sectors.

However, incidents show that autonomy without robust Data Quality Governance courts unacceptable risk.

Organizations must inventory assets, validate continuously, log immutably, and weave controls into SRE and Pipeline Security practices.

Moreover, regulatory frameworks like IMDA’s blueprint and vendor control planes provide helpful starting points.

Nevertheless, sustained success hinges on people who understand policy and packet captures equally.

Therefore, consider enrolling in the AI Data™ program and embed Data Quality Governance deeply into every agent roadmap.

Take the next step now and future-proof your operations.

Disclaimer: Some content may be AI-generated or assisted and is provided ‘as is’ for informational purposes only, without warranties of accuracy or completeness, and does not imply endorsement or affiliation.