AI CERTS

5 months ago

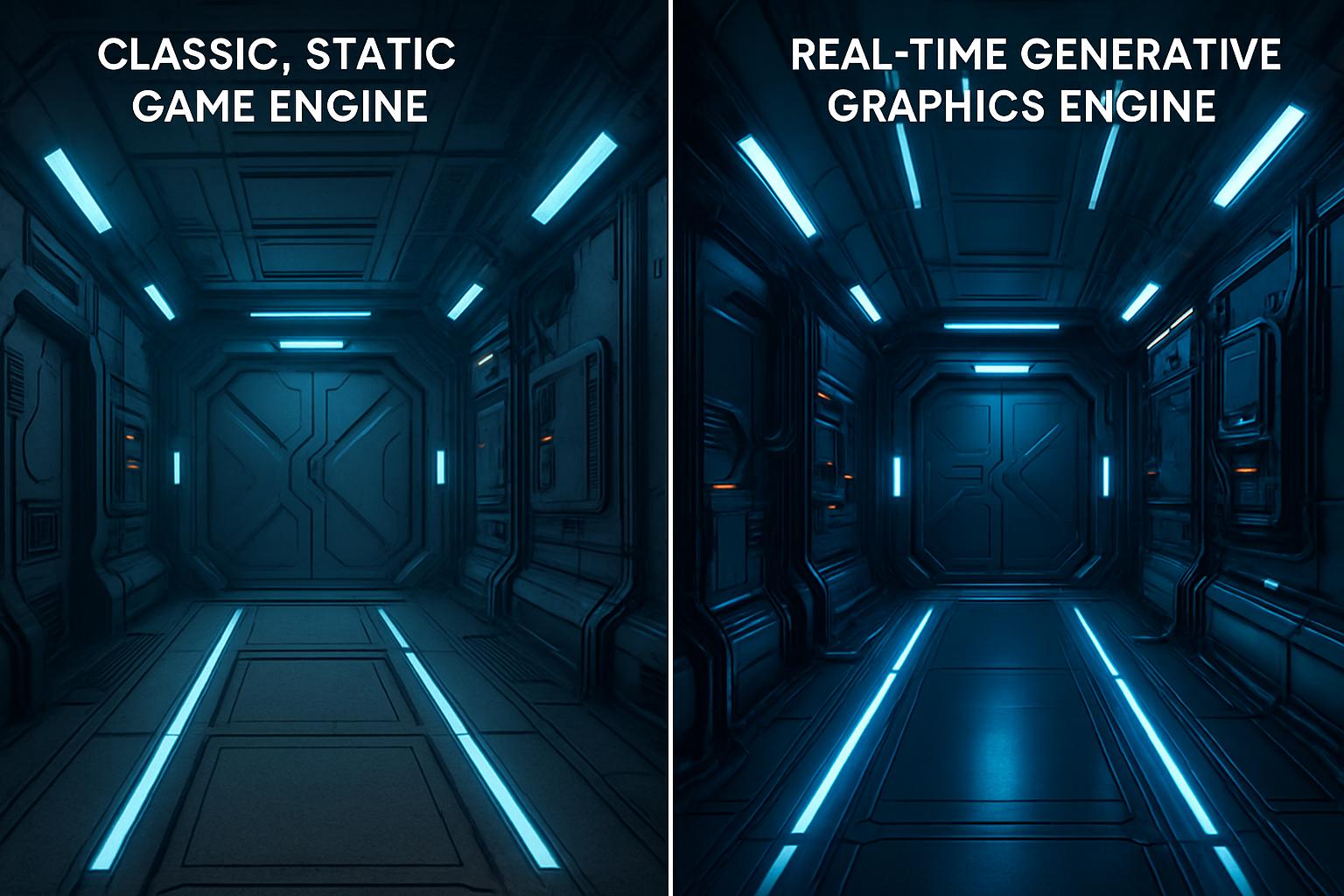

Google’s Real Time Generative GameNGen Breakthrough

Unlike conventional pipelines, GameNGen draws each frame directly from a learned probability distribution. It also preserves game state, player actions, and environment dynamics for minutes without collapse. These traits position the technology as a fresh alternative to established engines built around rasterization or ray tracing. Investors and studios, meanwhile, are scanning the results for competitive advantage. This analysis covers the architecture, performance metrics, and business implications of Google’s advance, and shows where opportunity lies and where skepticism remains.

Early Origins Of GameNGen

GameNGen emerged from a simple research question: could a diffusion network replace a traditional game engine? Researchers first trained a reinforcement learning agent on VizDoom to gather ten million action-frame pairs. Those traces then seeded a Stable Diffusion v1.4 model fine-tuned for next-frame prediction. The project thus combines policy learning with image synthesis in a tight loop. The revised paper appeared on arXiv in August 2024 and later featured as an ICLR 2025 poster in April 2025. The authors framed the work as the first real-time generative proof of concept for a 3D shooter. In contrast, earlier efforts like NVIDIA’s GameGAN targeted 2D arcade scenes.

These origins reveal careful dataset curation and a serious compute commitment. The bigger story, however, lies in how the pieces fit together.

Architecture And Core Tricks

The architecture follows a two-stage pipeline that balances speed with fidelity. First, past frames and recent actions enter an encoder that maps them into latent channels. Second, a denoising U-Net predicts the next latent, which a decoder turns into a visible frame. During training, the team injects controlled Gaussian noise into the context frames. This detail matters because autoregressive sampling feeds the model’s own outputs back as inputs, so small errors compound and the rollout often diverges quickly; training on corrupted context teaches the model to correct its own drift over long sequences. The real-time requirement also forced aggressive optimisation: only four DDIM steps are used per frame, giving twenty frames per second on one TPU. The researchers note that single-step distillation could push this to fifty frames per second, at some cost in quality. Additionally, they feed action tokens directly into the diffusion input, so the model responds reliably to keyboard and mouse input. Compared with classic neural rendering pipelines, this fused conditioning helps maintain temporal coherence. Importantly, the system still behaves like a full game engine, with the rules held implicitly in the weights.
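
The noise augmentation and the feedback loop it protects can be illustrated with a toy sketch. This is not the authors' code. The names `corrupt_context`, `rollout`, and `toy_predictor`, and the values `CONTEXT_LEN` and `MAX_NOISE`, are hypothetical choices made for illustration; frames are flat lists of floats rather than latent tensors, and the predictor merely stands in for the denoising U-Net.

```python
import random
from collections import deque

CONTEXT_LEN = 4   # hypothetical context length, not the paper's value
MAX_NOISE = 0.7   # hypothetical upper bound on the corruption strength


def corrupt_context(frames, rng, max_noise=MAX_NOISE):
    """Training-time augmentation: add Gaussian noise to every context frame.

    One noise level is sampled per call. A real model could also be given
    this level as an input (an assumption in this sketch).
    """
    level = rng.uniform(0.0, max_noise)
    noisy = [[px + rng.gauss(0.0, level) for px in frame] for frame in frames]
    return noisy, level


def rollout(predict_next, first_frames, actions):
    """Autoregressive generation: each predicted frame becomes new context."""
    context = deque(first_frames, maxlen=CONTEXT_LEN)
    recent_actions = deque(maxlen=CONTEXT_LEN)
    generated = []
    for action in actions:
        recent_actions.append(action)
        frame = predict_next(list(context), list(recent_actions))
        generated.append(frame)
        context.append(frame)  # the oldest frame drops out automatically
    return generated


def toy_predictor(context, recent_actions):
    """Stand-in for the denoiser: shift the last frame by the latest action."""
    return [px + recent_actions[-1] for px in context[-1]]


rng = random.Random(0)
start = [[0.0, 0.0, 0.0] for _ in range(CONTEXT_LEN)]
noisy, level = corrupt_context(start, rng)            # what training would see
frames = rollout(toy_predictor, start, actions=[1, 0, -1, 1])
print(len(frames), frames[-1])
```

In this sketch the corruption is applied only when building training examples, while `rollout` runs on clean, self-generated context. The intent is that a model trained on `noisy` context has already seen imperfect inputs by the time its own slightly wrong frames re-enter the loop.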

The design blends proven diffusion strengths with novel conditioning tricks. However, performance numbers ultimately decide practical relevance.

Performance Metrics And Benchmarks

Empirical data supports the excitement around the prototype. At inference, the network sustains around twenty frames per second while simulating DOOM levels. PSNR reaches 29.4 dB, roughly matching moderate JPEG compression. Human raters could scarcely tell synthetic sequences from real gameplay, scoring only slightly better than chance. The authors also showed stability for over five minutes, surpassing prior neural rendering attempts. Training, however, demanded 128 TPU-v5e chips across 700,000 steps, underlining high entry costs. To hit the real-time target, engineers used only four denoising iterations, reducing latency to about 50 milliseconds per frame, which corresponds to the twenty frames per second above. In contrast, earlier diffusion pipelines often require thirty or more steps. Fewer steps raise throughput and lower energy per frame. Another experiment explored single-step sampling, giving 50 FPS at lower fidelity. Trade-offs between speed and clarity therefore remain central.
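
The arithmetic behind these figures is easy to check. The sketch below uses the standard PSNR definition, 10·log10(MAX² / MSE), together with the frame rates and latency quoted above. The function names are hypothetical, and images are represented as flat lists of pixel values with an assumed 8-bit maximum of 255.

```python
import math


def fps_from_latency(latency_ms):
    """Frames per second achievable when each frame takes latency_ms."""
    return 1000.0 / latency_ms


def latency_from_fps(fps):
    """Per-frame time budget in milliseconds for a target frame rate."""
    return 1000.0 / fps


def psnr(reference, generated, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized images."""
    mse = sum((r - g) ** 2 for r, g in zip(reference, generated)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


def relative_rmse(psnr_db):
    """Root-mean-square error as a fraction of the full intensity range."""
    return 10.0 ** (-psnr_db / 20.0)


print(fps_from_latency(50.0))   # 50 ms per frame, the four-step figure
print(latency_from_fps(50.0))   # budget implied by the 50 FPS single-step figure
print(relative_rmse(29.4))      # error level implied by the reported PSNR
```

Read this way, a PSNR of 29.4 dB corresponds to a root-mean-square pixel error of about 3.4% of the full intensity range, and the 50 FPS single-step variant leaves a budget of 20 milliseconds per frame.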

These numbers illustrate dramatic strides over past simulators. Still, scalability beyond DOOM will test the approach next.

Opportunities For Game Development

Studios constantly seek faster content pipelines. A real-time generative simulator could automate level prototyping without complex asset creation. QA teams might record thousands of playthroughs by letting agents play through the simulated environment. Indie developers could remix visual styles quickly by fine-tuning the decoder for different graphics palettes. Potential business advantages include lower art budgets and richer procedural experiences. Researchers also see alignment with live-ops models, where environments evolve daily, and with cloud streaming trends, as rendering becomes model inference rather than GPU rasterization. Professionals can enhance their expertise with the AI Government Specialist™ certification.

- Rapid scene iteration with real-time generative content

- Built-in support for stylized neural rendering

- Lower client hardware requirements due to server inference

- Data-driven balancing via continuous retraining loops

These possibilities could reshape production economics. Nevertheless, every paradigm shift brings new risks, discussed next.

Current Limitations And Risks

No technology arrives without caveats. First, training GameNGen consumed significant energy and compute, limiting independent replication. Second, diffusion models still hallucinate objects, risking gameplay glitches after extended sessions. Noise augmentation mitigates autoregressive drift but does not solve it. The approach also inherits legal questions around asset IP, because the weights embed DOOM textures and level layouts. Conventional engines separate code from copyrighted art; the real-time generative pipeline blurs that boundary. Cybersecurity teams also note that model weights cannot currently enforce multiplayer fairness, opening cheating vectors. Consequently, regulators may demand audits before commercial launch.

Risk factors could delay mainstream adoption. However, a comparison with earlier projects shows where progress truly stands.

Comparisons With Earlier Work

NVIDIA’s 2020 GameGAN recreated Pac-Man using a GAN paired with memory modules. However, it lacked first-person perspective and realistic graphics. World Models compressed frames from car-racing and VizDoom tasks into latent codes but did not aim for visually faithful, real-time play at 20 FPS. In contrast, GameNGen’s real-time generative diffusion tackles a three-dimensional shooter with active mouse aiming. Visual sharpness and temporal consistency also exceed earlier neural rendering studies. The underlying game engine logic is richer too, covering doors, ammo, and damage states without explicit scripting. The project therefore marks both a quantitative and a qualitative leap.

Historical context highlights the pace of innovation. Next, we turn to future research directions and market impact.

Roadmap And Industry Impact

The authors plan to open-source additional evaluation code, yet full weights may remain private for now. Meanwhile, studios are experimenting with hybrid pipelines that pair a classical game engine for physics with a real-time generative module for visuals. Chip vendors see opportunities to bundle diffusion accelerators into next-generation hardware. Regulators are evaluating environmental footprints and pushing for carbon-aware training schedules. DOOM fans already envision community maps rendered through personalized graphics styles. Skill sets will broaden as a result: engineers must understand model tuning alongside shader programming, which makes professional upskilling essential.

Market watchers expect pilot integrations within two years. Nevertheless, sustainable scaling will dictate long-term success.

Google’s GameNGen demonstrates a striking union of diffusion science and interactive gaming. It shows that high-fidelity neural rendering can reach playable speeds today, and it places real-time generative systems on the strategic roadmap for tool vendors, studios, and chip makers. Yet heavy training footprints, legal ambiguities, and drift risk temper immediate enthusiasm. Even so, the trajectory from Pac-Man emulators to DOOM simulators signals rapid capability growth. Professionals should monitor code releases, benchmark updates, and regulatory guidance as they plan investments. Finally, explore certifications and research forums to build fluency before the next wave of neural engines arrives.