AI CERTS

5 days ago

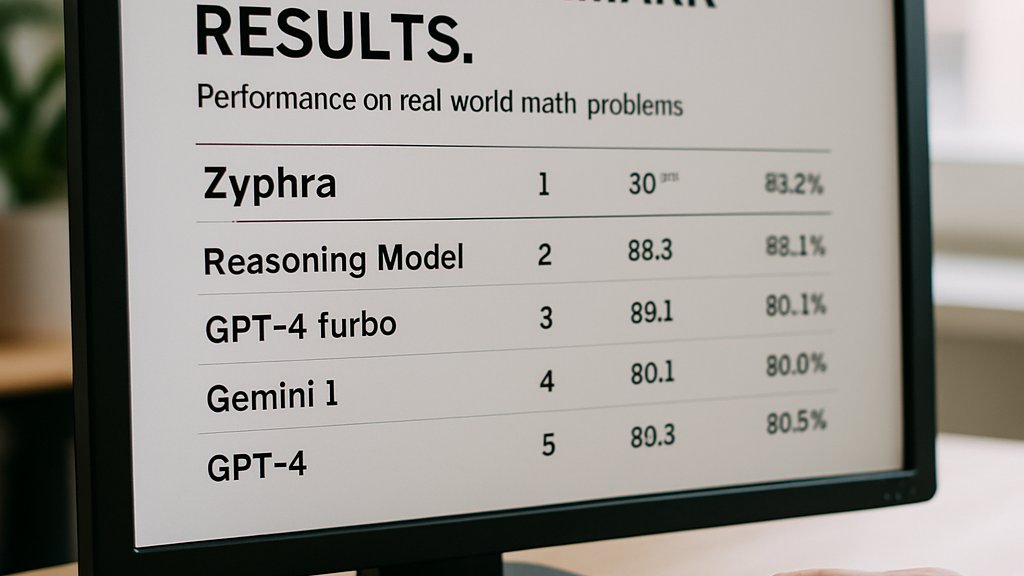

Zyphra Reasoning Model Tops Math Benchmarks on AMD Instinct MI300

Industry professionals need clear context before integrating Zyphra's ZAYA1-8B release into their roadmaps.

This article dissects architecture, hardware, benchmarks, licensing, and strategic implications.

It offers an evidence-based view for technical decision makers.

Throughout, the Zyphra Reasoning Model is compared against reported Claude and GPT scores.

Readers will also find certification resources to strengthen their cloud AI expertise.

Release Signals Market Shift

Zyphra framed the release as proof of high intelligence density per parameter.

Moreover, executives claimed the model "punches above its weight" on real workloads.

Early coverage from HPCWire and BuildFastWithAI echoed that narrative, yet maintained caution.

In contrast, independent researchers requested raw evaluation traces before endorsing performance leadership.

Visibility of the Zyphra Reasoning Model grew rapidly because its weights shipped under the permissive Apache-2.0 license.

Therefore, enterprises can begin controlled experiments without lengthy legal negotiations.

These launch dynamics created excitement and scrutiny alike.

Subsequently, architecture details drew deeper interest.

Architecture Highlights Explained Clearly

ZAYA1-8B uses a sparse mixture-of-experts transformer with 32 expert blocks.

A router selects two experts per token.

Consequently, only about 760 million of the model's 8.4 billion parameters stay active for any given token.

This design delivers high capability with moderate inference cost.
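The routing step described above can be sketched in a few lines. This is a minimal illustration only: the expert count (32) and the two-experts-per-token rule come from the article, while the softmax gate, the renormalised weights, and the names `route_token` and `softmax` are assumptions borrowed from common mixture-of-experts practice, not Zyphra's actual router.

```python
import math
import random

NUM_EXPERTS = 32  # expert count quoted in the article
TOP_K = 2         # experts selected per token, per the article


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(gate_logits, top_k=TOP_K):
    """Pick the top_k experts for one token.

    Returns [(expert_index, weight), ...] with weights renormalised to sum
    to 1. Renormalising over the selected experts is an assumed convention.
    """
    probs = softmax(gate_logits)
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    chosen = ranked[:top_k]
    norm = sum(probs[i] for i in chosen)
    return [(i, probs[i] / norm) for i in chosen]


# Hypothetical gate scores for a single token (random stand-ins).
rng = random.Random(0)
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
selected = route_token(logits)
for expert_index, weight in selected:
    print(f"expert {expert_index}: weight {weight:.3f}")
```

Only the two selected experts run their feed-forward computation for that token, which is why the active parameter count stays far below the total.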

Furthermore, Zyphra layered Compressed Convolutional Attention to shrink memory footprints.

The Zyphra Reasoning Model also embeds reinforcement learning objectives aligned with extended reasoning rollouts.

Such changes target sustained token throughput during long Markovian RSA-driven sessions, the test-time compute regime behind the headline scores.

- Sparse mixture-of-experts routing for compute efficiency

- Compressed Convolutional Attention for long contexts

- Residual scaling tuned for stability

- Router load balancing improvements reducing variance

Collectively, these components aim to maximize intelligence density.

However, benchmark evidence determines real-world value.

Benchmark Claims Scrutinized Carefully

Zyphra published headline scores on math, coding, and knowledge tasks.

On HMMT math the company reported 89.6, edging out Claude 4.5 Sonnet’s 88.3.

Moreover, the model scored 65.8 on LiveCodeBench, approaching GPT-5-High territory despite its much smaller size.

Analysts noted those figures relied on aggressive Markovian RSA test-time compute budgets.

- HMMT score: 89.6 with 40k tokens

- AIME score: 92.0 after 5.5M token exploration

- GPQA-Diamond: 71.0 baseline

- MMLU-Pro: 74.2 baseline

Independent testers have not yet reproduced every figure under identical conditions.

Nevertheless, early community runs confirm respectable baseline accuracy without extra compute.

Consequently, the Zyphra Reasoning Model may still deliver strong everyday performance.

These provisional results warrant cautious optimism.

Next, hardware choices explain training feasibility.

Hardware Partnership Contextual Depth

Training occurred exclusively on AMD Instinct MI300 hardware within an IBM Cloud cluster.

Pensando Pollara networking linked nodes, supplying 400 Gbps fabric throughput.

Furthermore, Zyphra collaborated with AMD engineers to optimize ROCm kernels for mixture-of-experts routing.

Emad Barsoum of AMD called the project evidence of a viable CUDA alternative.

Consequently, enterprises exploring heterogeneous hardware can reference the open training stack.

The Zyphra Reasoning Model demonstrates that frontier-class capability no longer demands Nvidia exclusivity.

Each AMD Instinct MI300 GPU provides 192 GB of HBM, accommodating large expert matrices comfortably.

Moreover, pairing AMD Instinct MI300 with Pensando networking cuts latency during distributed MoE training.

Hardware openness could reshape procurement strategies.

Meanwhile, licensing terms influence adoption pace.

Open Access Implications Discussed

Zyphra released weights on Hugging Face under Apache-2.0, inviting scrutiny and downstream innovation.

Developers can spin up serverless endpoints on Zyphra Cloud within minutes.

Therefore, startups may prototype solutions powered by the Zyphra Reasoning Model without capital-intensive licenses.

Licensing matters because open weights enable fine-tuning for domain-specific math tasks.

Nevertheless, a mixture-of-experts architecture still requires hosting the full 8.4-billion-parameter weight set, even though only a fraction is active per token.
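A back-of-the-envelope calculation shows what that hosting requirement means in practice. The parameter counts and the 192 GB HBM figure are taken from the article; the two-bytes-per-parameter precision (bf16 or fp16) is an assumption, and the estimate ignores KV cache, activations, and runtime overhead.

```python
TOTAL_PARAMS = 8.4e9     # full parameter count quoted in the article
ACTIVE_PARAMS = 760e6    # active parameters per token, per the article
HBM_PER_GPU_GB = 192     # AMD Instinct MI300 HBM capacity, per the article
BYTES_PER_PARAM = 2      # ASSUMPTION: weights stored in bf16/fp16

# Memory needed just to hold the weights, in decimal gigabytes.
weights_gb = TOTAL_PARAMS * BYTES_PER_PARAM / 1e9

# Share of parameters that actually participate in each token's forward pass.
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS

# Share of a single GPU's HBM consumed by the weights alone.
hbm_share = weights_gb / HBM_PER_GPU_GB

print(f"weights: {weights_gb:.1f} GB")
print(f"active fraction: {active_fraction:.3f}")
print(f"share of one GPU's HBM: {hbm_share:.4f}")
```

Under these assumptions the weights occupy roughly 16.8 GB, so memory is sized by the total parameter count while per-token compute tracks the roughly 9% that is active.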

- No licensing fees or usage caps

- Permissive redistribution clauses

- Compatible with AMD Instinct MI300 clusters

Professionals can enhance expertise with the AI Cloud Architect™ certification.

Such openness accelerates independent benchmarking and specialized deployments.

Consequently, practical guidance becomes the immediate priority.

Practical Takeaways Moving Forward

Technology leaders should weigh efficiency metrics against deployment constraints.

Moreover, the Zyphra Reasoning Model yields high reasoning density with limited active parameters.

Teams running AMD Instinct MI300 infrastructure can replicate training experiments thanks to released scripts.

Cloud users on CUDA stacks can still deploy the model despite training differences.

To maximize value, however, match test-time compute budgets to your latency needs.

When pursuing advanced math applications, evaluate whether Markovian RSA overhead aligns with service level objectives.
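One rough way to check that alignment is to convert a token budget into wall-clock time. In the sketch below, the 40k and 5.5M token budgets are the figures reported alongside the HMMT and AIME scores; treating them as per-problem budgets, the throughput of 1,000 tokens per second, and the 60-second objective are all hypothetical values chosen only for illustration.

```python
def rollout_seconds(token_budget, tokens_per_second):
    """Estimated wall-clock time to generate token_budget tokens."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return token_budget / tokens_per_second


def fits_slo(token_budget, tokens_per_second, slo_seconds):
    """True if the estimated rollout time stays within the latency objective."""
    return rollout_seconds(token_budget, tokens_per_second) <= slo_seconds


ASSUMED_TOKENS_PER_SECOND = 1_000.0  # HYPOTHETICAL aggregate decode throughput
ASSUMED_SLO_SECONDS = 60.0           # HYPOTHETICAL latency objective

budgets = {
    "HMMT-style budget (40k tokens)": 40_000,
    "AIME-style budget (5.5M tokens)": 5_500_000,
}

for label, tokens in budgets.items():
    seconds = rollout_seconds(tokens, ASSUMED_TOKENS_PER_SECOND)
    ok = fits_slo(tokens, ASSUMED_TOKENS_PER_SECOND, ASSUMED_SLO_SECONDS)
    print(f"{label}: {seconds:.0f} s, within SLO: {ok}")
```

At the assumed throughput, the smaller budget takes about 40 seconds while the larger one takes about 5,500 seconds, so the heavier regime suits offline or batch workloads rather than interactive services.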

- Benchmark baseline accuracy without extended compute.

- Profile memory on AMD Instinct MI300 or equivalent GPUs.

- Tune mixture-of-experts router thresholds for workload balance.

- Iterate context window sizes to manage cost.
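The first checklist item can start from a very small harness. The sketch below measures exact-match accuracy for any callable that maps a prompt to an answer; `exact_match_accuracy`, `normalize`, and the toy `toy_generate` stand-in are hypothetical names, and a real run would replace the stand-in with a call into whichever inference stack hosts the model.

```python
from typing import Callable, Iterable, Tuple


def normalize(answer: str) -> str:
    """Light normalisation so trivial formatting differences do not count as errors."""
    return answer.strip().lower()


def exact_match_accuracy(
    generate: Callable[[str], str],
    items: Iterable[Tuple[str, str]],
) -> float:
    """Fraction of (prompt, reference) pairs the generator answers exactly."""
    pairs = list(items)
    if not pairs:
        raise ValueError("need at least one evaluation item")
    correct = sum(
        normalize(generate(prompt)) == normalize(reference)
        for prompt, reference in pairs
    )
    return correct / len(pairs)


def toy_generate(prompt: str) -> str:
    """HYPOTHETICAL stand-in for a model call; answers two of three prompts."""
    canned = {"2+2": "4", "3*3": " 9 "}
    return canned.get(prompt, "")


sample = [("2+2", "4"), ("3*3", "9"), ("10/4", "2.5")]
accuracy = exact_match_accuracy(toy_generate, sample)
print(f"baseline exact-match accuracy: {accuracy:.3f}")
```

Running the same harness with and without extended test-time compute, on the same items, keeps the comparison reproducible and easy to share.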

Additionally, integrate governance, reproducibility, and documentation processes early.

The Zyphra Reasoning Model benefits from community verification, so share evaluation artifacts openly.

Subsequently, track leaderboard updates to monitor independent replication efforts.

In summary, careful benchmarking, hardware selection, and open collaboration unlock this model’s full promise.

Therefore, start small-scale pilots, then scale once findings match internal benchmarks.

Zyphra’s latest release illustrates an emerging path toward compact yet capable reasoning agents.

Furthermore, open weights and released training scripts, paired with AMD Instinct MI300 proof points, signal broader ecosystem flexibility.

Nevertheless, independent benchmarks must validate every headline before production reliance.

The Zyphra Reasoning Model offers opportunity, provided teams respect test-time compute constraints and reproducibility needs.

Readers can also explore the AI Cloud Architect™ certification mentioned earlier to formalize cloud AI proficiency and stay competitive.

Act now, begin measured experiments, and share findings with the community.
