AI CERTS

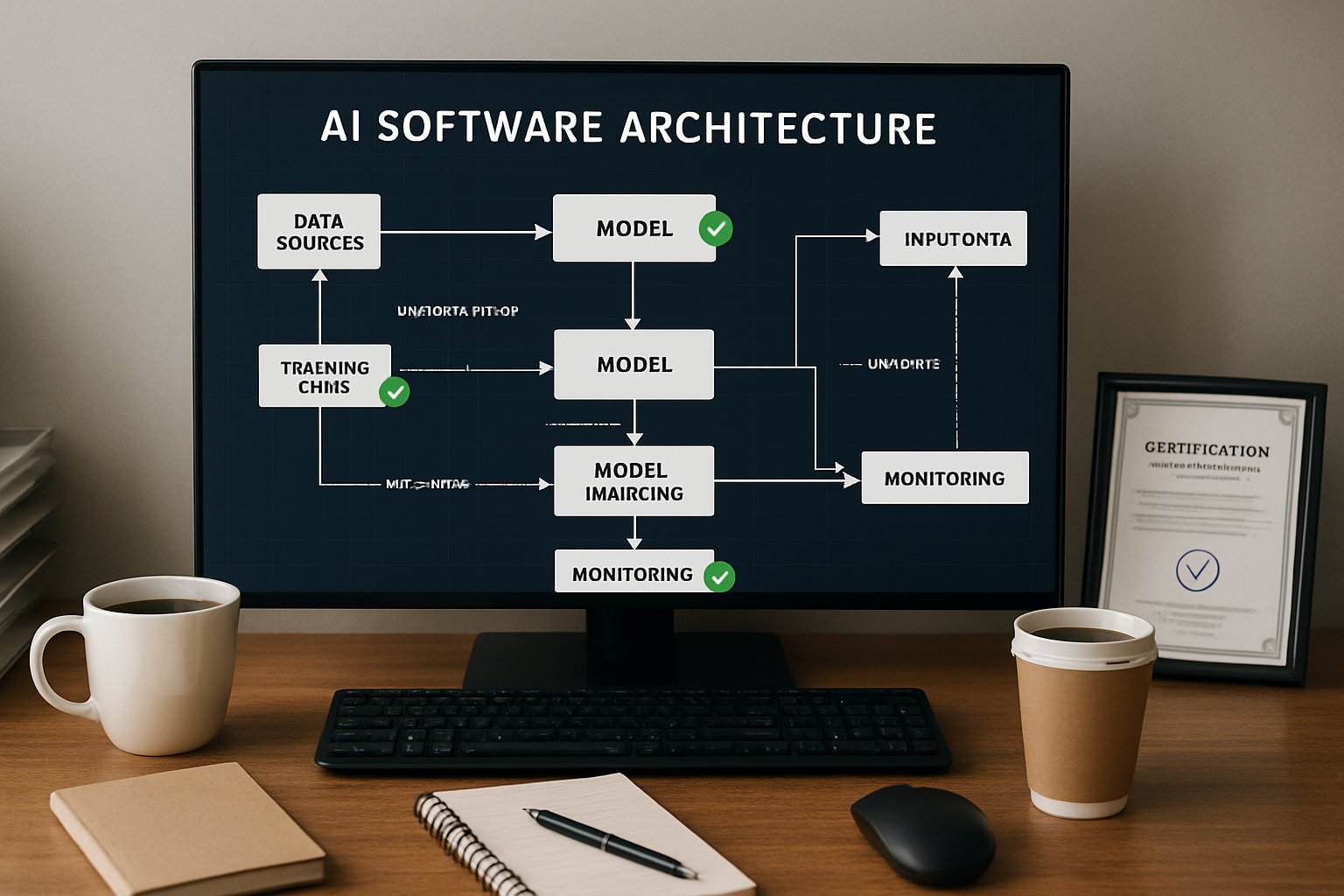

G5 Labs Guides AI Software Architecture Refactors

This article unpacks market trends, emerging guardrails, and G5 Labs’ playbook for machine-speed refactors. Readers will learn why proactive governance matters and how incremental steps protect long-term value.

Market Forces Driving Refactors

The MLOps market reached USD 2.43 billion in 2025, and analysts expect USD 56.60 billion by 2035, a compound annual growth rate (CAGR) of roughly 37 percent. Cloud vendors increasingly bundle agent skills, drift scores, and policy kits with new platforms. ThoughtWorks warns that unchecked agents accelerate drift from the planned design structure.
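As a quick sanity check on the growth figure, the sketch below recomputes the compound annual growth rate from the two market sizes quoted above. The function name `cagr` is our own, and the ten-year span assumes the 2025 and 2035 values are year-end figures.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the compound annual growth rate as a fraction (0.37 means 37 percent)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Market sizes quoted in the article, in USD billions.
rate = cagr(start_value=2.43, end_value=56.60, years=10)
print(f"Implied CAGR: {rate:.1%}")
```

The result lands close to the 37 percent the analysts cite, so the two endpoints and the growth rate are consistent with each other.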

G5 Labs sees clients adopt agents for routine coding tasks. Few organizations, however, update documentation or dependency maps at the same speed. As a result, invisible coupling multiplies until outages force emergency fixes.

These market signals point to growing urgency. Leadership should budget time for architectural care before problems compound.

The figures show revenue potential for quality tools. Fast growth also amplifies risk, which the next section examines.

Risks Of Agent Drift

LLMs can generate syntactically valid code yet ignore architectural context unless guided. Each automated merge may break layering rules, expose secrets, or duplicate logic. Shift-Up researchers reported lower drift when machine-readable constraints were in place. Without guardrails, defects propagate at machine speed.

Security experts link ungoverned agent output to production safety incidents. Small violations accumulate until incident metrics spike. Meanwhile, regulatory auditors increasingly demand evidence that design intent matches deployed binaries.

ThoughtWorks concludes that deterministic checks combined with LLM evaluation reduce drift. Teams therefore need verified loops that block non-compliant code changes.
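One form a deterministic check can take is a scan of each proposed diff for secret-like strings before merge. The sketch below is illustrative only: the three patterns and the function names `find_secrets` and `merge_allowed` are assumptions made for this example, and production scanners ship far larger rule sets.

```python
import re

# Hypothetical rule set for illustration; not taken from any specific scanner.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "hardcoded_password": re.compile(r"(?i)\bpassword\s*=\s*['\"][^'\"]+['\"]"),
}


def find_secrets(diff_text: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every added line that matches a rule."""
    findings = []
    for number, line in enumerate(diff_text.splitlines(), start=1):
        # Inspect only added lines; skip the "+++ b/file" header of a unified diff.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((number, name))
    return findings


def merge_allowed(diff_text: str) -> bool:
    """Block the merge when any added line looks like a secret."""
    return not find_secrets(diff_text)


example_diff = "\n".join([
    "+++ b/app/settings.py",
    "+password = 'hunter2'",
    "-password = 'old-value'",
    "+timeout_seconds = 30",
])
print(find_secrets(example_diff))
print(merge_allowed(example_diff))
```

Because the check is a pure function of the diff, it gives the same verdict on every run, which is what makes it suitable as a blocking gate alongside any LLM-based review.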

Drift erodes trust and velocity. However, suitable tooling can reverse the trend, as the next section explains.

Guardrails And Tooling Options

Modern guardrails encode architecture as data, enabling continuous verification. Vendors also package conformance rules as GitHub Actions so that checks run inside the developer's existing workflow.

- Static analyzers: ArchUnit checks layer and dependency rules in Java code, Spectral lints API descriptions, and similar libraries catch violations early.

- Architecture intelligence: Archyl scores drift and proposes automated pull requests.

- C4 and ADR pipelines: Shift-Up converts diagrams and decisions into executable policies.
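To make "architecture as data" concrete, the sketch below stores a layering policy in a dictionary and checks a module's imports against it with Python's standard `ast` module. The layer names and the `ALLOWED_DEPENDENCIES` policy are hypothetical, and none of the tools listed above is claimed to work this way internally.

```python
import ast

# Hypothetical policy: each layer maps to the set of layers it may import from.
ALLOWED_DEPENDENCIES = {
    "api": {"service"},
    "service": {"domain"},
    "domain": set(),
}


def imported_top_level_modules(source: str) -> set[str]:
    """Collect the top-level package name of every absolute import in the source."""
    modules = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            modules.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            modules.add(node.module.split(".")[0])
    return modules


def layer_violations(layer: str, source: str) -> list[str]:
    """List imports that the policy forbids for code living in the given layer."""
    allowed = ALLOWED_DEPENDENCIES[layer]
    violations = []
    for module in sorted(imported_top_level_modules(source)):
        # Only imports of other known layers are governed; other packages are ignored.
        if module in ALLOWED_DEPENDENCIES and module != layer and module not in allowed:
            violations.append(f"{layer} must not import {module}")
    return violations


domain_source = "import api.handlers\nfrom service import orders\nimport json\n"
print(layer_violations("domain", domain_source))
```

Keeping the policy as plain data means the same dictionary could be generated from a diagram or decision record and reviewed in a pull request like any other change.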

Each toolchain aligns with AI Software Architecture best practices. Teams also track DORA metrics, such as deployment frequency and change failure rate, to show ROI.
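Two of the DORA metrics, deployment frequency and change failure rate, can be derived from a simple deployment log. The sketch below assumes a hypothetical `Deployment` record with a flag for whether the release caused an incident; real pipelines would pull these fields from their own CI and incident systems.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Deployment:
    day: date
    caused_incident: bool  # Hypothetical field: set when a release triggered an incident.


def deployments_per_week(deployments: list[Deployment], period_days: int) -> float:
    """Average number of deployments per seven-day window over the period."""
    if period_days <= 0:
        raise ValueError("period_days must be positive")
    return len(deployments) / (period_days / 7)


def change_failure_rate(deployments: list[Deployment]) -> float:
    """Fraction of deployments that caused an incident; 0.0 when there were none."""
    if not deployments:
        return 0.0
    return sum(d.caused_incident for d in deployments) / len(deployments)


log = [
    Deployment(date(2025, 3, 3), caused_incident=False),
    Deployment(date(2025, 3, 10), caused_incident=True),
    Deployment(date(2025, 3, 17), caused_incident=False),
    Deployment(date(2025, 3, 24), caused_incident=False),
]
print(deployments_per_week(log, period_days=28))
print(change_failure_rate(log))
```

Comparing these numbers before and after guardrails are introduced is one way to tie architectural work to delivery outcomes.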

Guardrails convert tribal knowledge into testable artifacts. Consequently, developers gain freedom within clearly bounded space.

Strong guardrails reduce future surprises. Meanwhile, G5 Labs integrates them into client roadmaps, discussed next.

G5 Labs Strategic Approach

G5 Labs offers fractional-CTO guidance grounded in MIT research culture. Their advisors couple business goals with technical execution. Moreover, partnerships with AWS, Google, and Anthropic accelerate cloud onboarding.

The consultancy positions AI Software Architecture as a continuous discipline, not a one-off project. They emphasize measurable baselines, incremental refactoring, and enforced safety constraints.

Clients often begin with a workshop that maps objectives against the current state of the design. G5 Labs then proposes a sprint plan aligned with the client's risk appetite.

This strategic framing aligns incentives across teams. Therefore, execution clarity improves, setting up the detailed roadmap.

Stepwise Refactor Roadmap

Martin Fowler notes that small moves enable large change. Following that doctrine, G5 Labs recommends the sequence below.

- Baseline analysis: collect metrics, build C4 diagrams, and inventory code violations.

- Policy authoring: turn diagrams and ADRs into machine-readable rules.

- CI integration: enforce rules on every merge, blocking unsafe diffs.

- Incremental refactoring: apply extract-service or split-phase patterns with test coverage.

- MLOps alignment: link model pipelines to the refreshed structure and monitor safety signals.

- Verification loop: update metrics, close gaps, and document intentional deviations.
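The baseline, CI, and verification steps above can be sketched as a single comparison between three sets: the violations recorded at baseline, the violations found now, and the deviations the team has documented as intentional. The function names and the "source->target" violation labels are assumptions made for this example.

```python
def verification_report(
    current: set[str],
    baseline: set[str],
    accepted_deviations: set[str],
) -> dict[str, set[str]]:
    """Classify violations relative to the baseline and the documented deviations."""
    return {
        # Violations that are neither inherited from the baseline nor documented.
        "new": current - baseline - accepted_deviations,
        # Baseline violations that no longer occur.
        "fixed": baseline - current,
        # Documented deviations that no longer occur and can be retired.
        "stale_deviations": accepted_deviations - current,
    }


def merge_allowed(report: dict[str, set[str]]) -> bool:
    """Block the merge only when it introduces a new, undocumented violation."""
    return not report["new"]


baseline = {"api->db", "ui->db"}
accepted = {"ui->db", "batch->ui"}
current = {"ui->db", "service->ui"}

report = verification_report(current, baseline, accepted)
print(report)
print(merge_allowed(report))
```

This ratchet design tolerates inherited violations until they are fixed while refusing new ones, which lets a team enforce rules on every merge without first clearing the whole backlog.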

This roadmap embeds AI Software Architecture thinking into daily routines. Furthermore, each step produces quantifiable evidence of progress.

Structured increments curb risk while sustaining momentum. Consequently, teams avoid big-bang failures and preserve morale.
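The split-phase pattern named in the roadmap separates code that does two jobs into sequential phases joined by an intermediate data structure. The sketch below is a hypothetical order-pricing example, not code from G5 Labs; the final assertion acts as the characterization test that keeps the increment safe.

```python
from dataclasses import dataclass


def order_price_before(order_line: str, price_list: dict[str, int]) -> int:
    """Original form: parsing and pricing are tangled in one function."""
    parts = order_line.split()
    product_price = price_list[parts[0].split("-")[1]]
    return int(parts[1]) * product_price


@dataclass(frozen=True)
class Order:
    """Intermediate structure handed from the parsing phase to the pricing phase."""
    product_id: str
    quantity: int


def parse_order(order_line: str) -> Order:
    """Phase one: turn the raw text line into a structured order."""
    sku, quantity = order_line.split()
    return Order(product_id=sku.split("-")[1], quantity=int(quantity))


def price_order(order: Order, price_list: dict[str, int]) -> int:
    """Phase two: price a structured order without knowing the text format."""
    return order.quantity * price_list[order.product_id]


prices = {"chair": 40}
line = "furniture-chair 3"

# Characterization test: the refactored phases must match the original behavior.
assert price_order(parse_order(line), prices) == order_price_before(line, prices)
```

After the split, a change to the input format touches only `parse_order`, and a change to pricing rules touches only `price_order`.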

Skills And Certification Path

Sustained success demands capable talent. Engineers deepen their expertise through research papers, community forums, and formal programs such as the AI Engineer™ certification.

The course, built with MIT faculty input, covers architecture patterns, secure coding, and operational safety. Its labs require hands-on design exercises and incremental refactoring.

Graduates report faster promotion cycles and improved audit outcomes. Consequently, organizations gain validated skill sets aligned with strategic goals.

Certified staff reinforce guardrail adoption. Therefore, leadership should budget training alongside tooling investments.

Key Takeaways And Outlook

Market data confirms explosive growth for production AI. However, unchecked drift threatens reliability and compliance. Guardrails, incremental refactoring, and skilled engineers counter that danger.

G5 Labs merges MIT rigor with cloud partnerships to deliver structured roadmaps. Additionally, emerging tools ease policy enforcement and metric tracking within AI Software Architecture pipelines.

These insights spotlight an urgent agenda. Nevertheless, disciplined action positions firms for sustainable advantage.

Conclusion

Effective AI Software Architecture demands continuous attention as agents proliferate. Guardrails, market-tested tools, and certified talent create a resilient foundation. Thoughtful design choices, secure coding habits, and documented safety policies complete the picture.

Consequently, leaders should adopt the outlined roadmap today. Explore the linked certification to elevate your team and ensure future-proof systems.

Disclaimer: Some content may be AI-generated or assisted and is provided ‘as is’ for informational purposes only, without warranties of accuracy or completeness, and does not imply endorsement or affiliation.