AI CERTS

2 hours ago

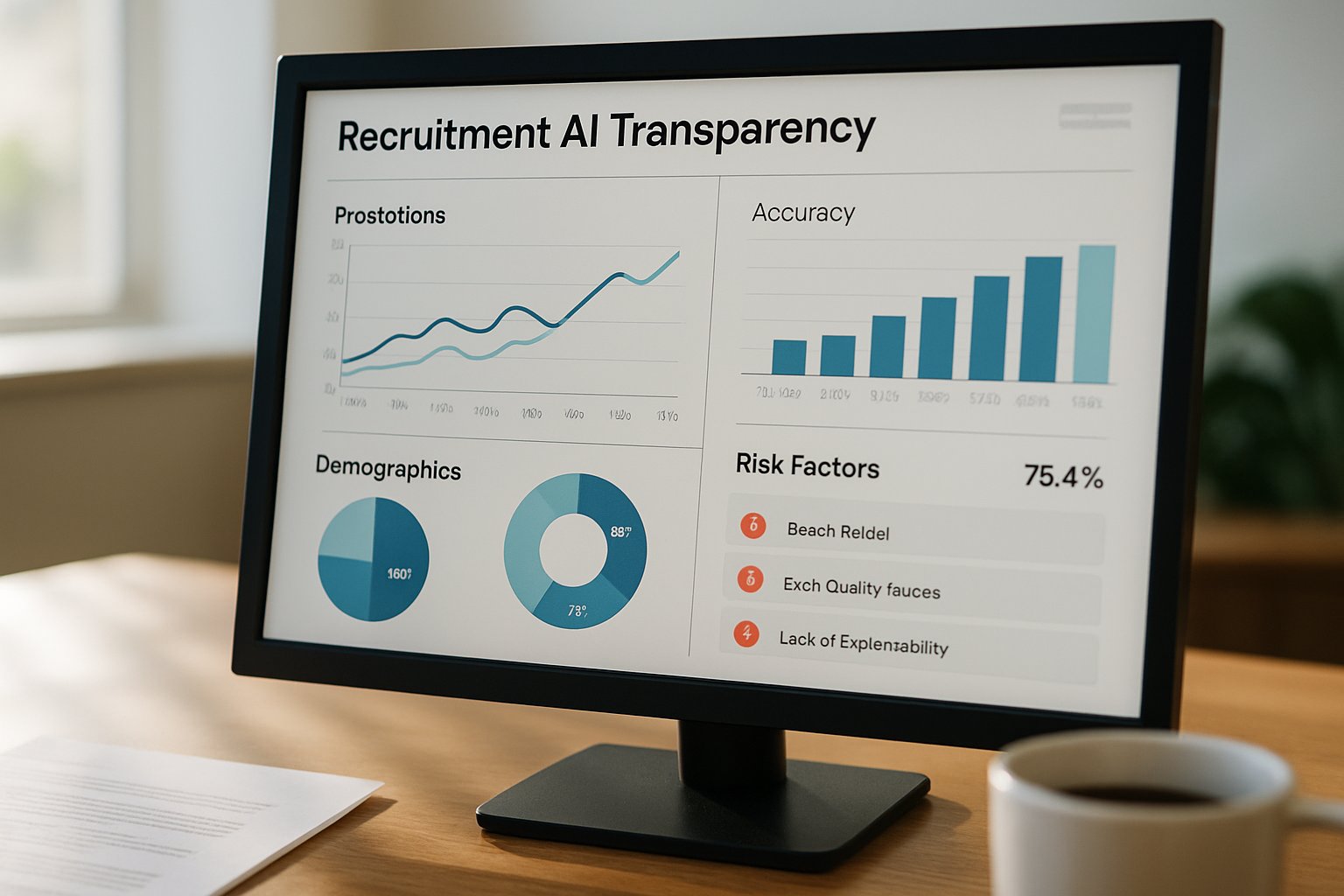

Closing the Recruitment AI Transparency Gap

Greenhouse’s latest multi-market survey sharpens that concern with hard numbers. Seventy percent of hiring managers applaud AI speed, yet only eight percent of candidates deem it fair. Consequently, the Trust deficit fuels an "AI doom loop" of mutual suspicion. Addressing that spiral demands credible Recruitment AI Transparency policies, robust oversight, and clear communication.

AI Doom Loop Explained

Daniel Chait, Greenhouse CEO, describes today’s stalemate as an AI doom loop. Recruiters trust speedier filters while candidates distrust unseen algorithms. Moreover, volume spikes drive teams toward additional automation, further distancing humans from decisions. Recruitment AI Transparency offers a release valve by realigning expectations on both sides.

The cycle thrives on speed and secrecy. However, transparency interrupts the loop and restores mutual confidence. Next, we locate the precise policy gap.

Recruitment AI Transparency Gap

Survey data pinpoints the sharpest perception rift. Only eight percent of candidates call algorithmic screening fair, yet seventy percent of managers feel empowered by it. Consequently, mistrust festers.

Greenhouse notes that 27 percent of respondents have never seen an employer AI policy. Visible statements reduce Bias concerns and narrow the Trust divide. Recruitment AI Transparency documentation should appear before application submission, not hidden within privacy footers.

Clear policies close perception gaps and curb reputational risk. Consequently, quantified evidence keeps leadership focused on progress milestones. The next section distills that evidence.

Data Reveals Trust Gap

Greenhouse commissioned 4,136 interviews across the United States, United Kingdom, Ireland, and Germany. The mixed sample included 2,900 candidates and 1,236 recruiting professionals. Consequently, the dataset offers a balanced snapshot.

- 70% of hiring managers say AI speeds better decisions.

- 8% of candidates believe AI improves fairness.

- 41% of U.S. job seekers attempt prompt injections.

- 91% of recruiters detect fraud or deepfakes.

These statistics underscore widening Trust fractures and rising fraud exposure. Recruitment AI Transparency therefore becomes a measurable objective rather than a philosophical ideal.

Numbers convert perception into urgency. Moreover, they illuminate how applicant tactics threaten data integrity. We now explore those tactics.

Candidate Tactics And Risks

Security teams observe prompt injections that embed hidden commands inside PDF résumés. Consequently, screening models may elevate unqualified profiles. Candidates also deploy deepfake videos to bypass identity verification.

Recruiters report identifying deception in 91 percent of recent cycles. Meanwhile, manual investigation slows Hiring timelines and can introduce unintentional Bias. Recruitment AI Transparency plus human review mitigates both pitfalls.

Fraud thrives when algorithms hide their criteria. Nevertheless, visible systems discourage manipulation and support honest applicants. Greenhouse bundles those visibility practices into three pillars.

Transparency Pillars For Hiring

Greenhouse outlines three actionable steps. First, disclose whenever AI screens, ranks, or messages applicants. Second, request small amounts of "good friction" data, such as assessments or verified IDs, to signal authenticity. Third, keep humans in the loop for every final Hiring decision.

Moreover, ISO 42001 style controls govern lifecycle risk. Professionals can enhance expertise through the AI+ Human Resources™ certification. Structured learning accelerates enterprise Recruitment AI Transparency programs.

These pillars translate abstract ethics into daily workflows. Consequently, they prepare organizations for incoming regulation. That regulatory wave is gathering momentum.

Regulatory Landscape Shifts

EEOC technical assistance confirms that AI tools remain subject to existing civil-rights tests. Meanwhile, Illinois restricts video interview analytics. Civil liberties groups accuse several vendors of algorithmic Bias and opaque practices. Therefore, organizations without clear Recruitment AI Transparency may face fines and public backlash.

Competitors such as HireVue and Eightfold publish selective audit summaries, yet independent validation is sparse. Consequently, buyers increasingly demand third-party attestations before signing contracts.

Regulators, critics, and buyers now share incentives for openness. In contrast, secrecy rapidly erodes market share. Leaders must act before mandates arrive.

Next Steps For Leaders

Executives should inventory every model impacting candidates across sourcing, scheduling, and assessment. Subsequently, draft plain-language notices describing purpose, data, and limitations. Engage counsel to test for Bias across protected classes. Furthermore, embed human reviewers before offers finalize. Finally, publish annual impact reports to maintain Recruitment AI Transparency over time.

Early action reduces compliance surprises and boosts Employer Brand Trust. Consequently, transparent programs also attract higher-quality candidates.

Greenhouse data reveals an industry at a tipping point where efficiency collides with perception. However, organizations that reveal algorithmic intent, verify identity, and keep humans engaged can escape the doom loop. Bias testing, candid policy pages, and continuous audit cycles transform opacity into opportunity.

Consequently, forward-thinking talent leaders should formalize these practices now. Enhance your capability with the linked certification and position your team for ethical, resilient growth.

Disclaimer: Some content may be AI-generated or assisted and is provided ‘as is’ for informational purposes only, without warranties of accuracy or completeness, and does not imply endorsement or affiliation.