AI CERTS

5 days ago

WordPress AI Crawler Blocking Trends Explained

Newsroom executives fret over lost licensing leverage and disappearing referral clicks. Developers struggle too, because troubleshooting opaque CDN or host behaviour consumes expensive engineering hours. This report unpacks how WordPress sites unknowingly block verified AI bots, why the trend is accelerating, and what to do next. Along the way, we assess robots.txt limitations, web scraping arms races, and the broader SEO impact. Ultimately, informed operators can regain control and decide whether AI systems quote or ignore their work.

Silent Blocking Trend

Publishers often assume that permitting crawlers in robots.txt guarantees AI visibility. However, default CDN and host settings can now override that file before requests ever reach WordPress. Cloudflare’s one-click shield, for example, ships with AI crawler deny rules enabled for new zones.

Managed WordPress hosts add another invisible layer. Investigators reproduced 429 errors for GPTBot while browsers received normal 200 responses on WP Engine. Therefore, the block originates at the platform, not inside the CMS.

Security plugins complicate matters further because some ship with blocklists that cover ClaudeBot, PerplexityBot, and GPTBot by default. Site owners who install them for spam defense may never realize they have also activated AI Crawler Blocking.

These silent changes create a misleading sense of openness. Nevertheless, understanding the technical layers clarifies where intervention is possible, leading to the next section.

Key Technical Layers

Three layers determine whether an AI bot reaches your content. Firstly, robots.txt provides advisory rules that compliant crawlers respect. Secondly, CDN or WAF enforcement uses IP fingerprinting, rate limits, and managed signatures to drop traffic. Thirdly, WordPress-level plugins can still veto individual user agents.
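The first layer can be checked in isolation. The sketch below uses Python's standard `urllib.robotparser` to ask whether a robots.txt file permits a given agent. The sample file contents and the `robots_allows` helper are assumptions made for illustration, and a pass here says nothing about the CDN or plugin layers.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real audit would load the live file.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def robots_allows(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the robots.txt text permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


for agent in ("GPTBot", "ClaudeBot", "PerplexityBot"):
    allowed = robots_allows(SAMPLE_ROBOTS_TXT, agent, "https://example.com/post/")
    print(f"{agent}: {'allowed' if allowed else 'disallowed'} by robots.txt")
```

Because robots.txt is advisory, this result describes only what a compliant crawler is asked to do, not what the network edge will actually serve.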

Moreover, some crawlers now spoof user agents or rotate IP ranges, ignoring robots.txt entirely. Consequently, network edge filtering becomes the decisive gatekeeper for AI Crawler Blocking.

In contrast, retrieval bots that cite live sources often need real-time access and identify themselves honestly. Training bots fetch massive archives once, so operators view them as cost centers rather than partners.

Layer awareness helps diagnose failures quickly. Proper mapping prevents accidental AI Crawler Blocking. Subsequently, evidence moves the debate from anecdotes to hard percentages.

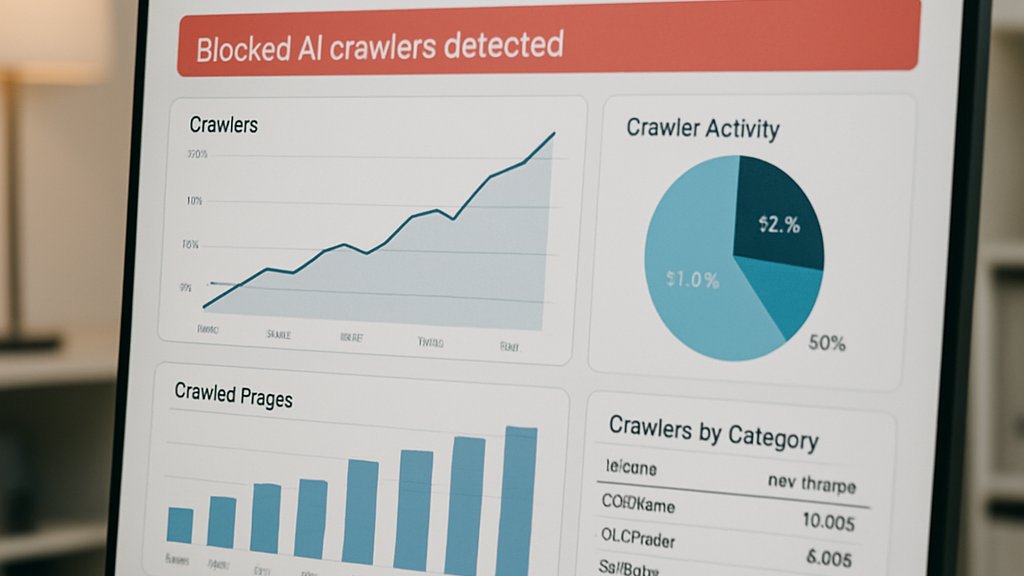

Data Driven Evidence

BuzzStream audited 100 leading UK and US news domains during April 2026 and reported the following:

- 79% block at least one training crawler
- 71% block at least one retrieval crawler
- Only 14% block every AI bot
- 18% allow all bots completely

Industry analysts now use AI Crawler Blocking as a benchmark for publisher assertiveness. Cloudflare simultaneously reported billions of denied bot requests after launching its default protection toggle. Meanwhile, PC Gamer covered claims that Perplexity used stealth crawlers to dodge such defenses.

These numbers underscore the rapid adoption of AI Crawler Blocking across high-traffic publishers. Therefore, WordPress administrators should consider the SEO impact of disappearing from conversational search.

Statistics reveal momentum, but legal and business motives drive much of the posture. Consequently, we examine those forces next.

Business And Legal

Publishers chase revenue, and referral clicks still fund newsrooms. However, AI answer engines surface full snippets, reducing user incentives to visit source sites. Harry Clarkson-Bennett summarized the stance: there is “almost no value exchange.”

Consequently, blocking crawlers strengthens negotiation leverage for licensing or litigation settlements. The New York Times and Chicago Tribune both filed suits alleging unauthorized copying by Perplexity.

Many publishers now treat AI Crawler Blocking as a tactical lever during licensing talks. AI companies counter that fragmented access hinders model quality and that a standardized framework is preferable. Cloudflare’s pay-per-crawl proposal attempts to monetize access while preserving publisher choice.

Nevertheless, unexpected platform defaults may block friendly retrieval bots, harming discoverability and hurting SEO. Balancing risk against visibility becomes a strategic decision.

Legal stakes amplify the need for clear audits. Therefore, practical detection methods are essential, as discussed in the following section.

Detection And Audits

A simple curl loop often exposes hidden barriers. Search Engine Land shared commands comparing browser and ClaudeBot responses within minutes.
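The same comparison can be sketched in Python instead of curl. This is not the Search Engine Land command set. The user-agent strings are shortened placeholders, and `fetch_status`, `compare_agents`, and `blocked_agents` are hypothetical helper names. Requests sent from your own machine only exercise user-agent-based rules, since an edge that verifies crawlers by IP may treat a spoofed agent differently from the real bot.

```python
import urllib.error
import urllib.request
from typing import Callable, Dict, List

# Placeholder user-agent strings (assumptions); each vendor publishes its exact string.
USER_AGENTS: Dict[str, str] = {
    "browser": "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 "
               "(KHTML, like Gecko) Chrome/124.0 Safari/537.36",
    "GPTBot": "Mozilla/5.0 (compatible; GPTBot/1.0)",
    "ClaudeBot": "Mozilla/5.0 (compatible; ClaudeBot/1.0)",
    "PerplexityBot": "Mozilla/5.0 (compatible; PerplexityBot/1.0)",
}


def fetch_status(url: str, user_agent: str, timeout: float = 10.0) -> int:
    """Return the HTTP status code the server sends for this user agent."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # 403, 429 and similar arrive as exceptions; the code is the signal.
        return err.code


def compare_agents(url: str,
                   fetch: Callable[[str, str], int] = fetch_status) -> Dict[str, int]:
    """Request the same URL once per user agent and collect the status codes."""
    return {name: fetch(url, agent) for name, agent in USER_AGENTS.items()}


def blocked_agents(statuses: Dict[str, int], baseline: str = "browser") -> List[str]:
    """List agents that received an error status while the baseline succeeded."""
    baseline_code = statuses.get(baseline)
    if baseline_code is None or baseline_code >= 400:
        return []  # The page fails for everyone, so nothing agent-specific is shown.
    return sorted(name for name, code in statuses.items()
                  if name != baseline and code >= 400)
```

Calling `blocked_agents(compare_agents("https://example.com/"))` against your own site would list the agents that were refused while the browser request succeeded. Connection failures are deliberately left uncaught so that network problems are not mistaken for blocks.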

Additionally, response headers reveal whether Cloudflare, a host WAF, or a plugin generated the block. Look for CF-Ray, Server, or x-anubis clues when evaluating web scraping defences.
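A rough way to triage those header clues is sketched below. The matching rules are assumptions based only on the hints named above: the exact Anubis header names are not specified here, so the code matches any header beginning with `x-anubis`, and `guess_block_layer` is a hypothetical helper. A CF-Ray header shows that Cloudflare is in the request path, which is a hint rather than proof that Cloudflare issued the block.

```python
from typing import Dict, List


def guess_block_layer(headers: Dict[str, str]) -> List[str]:
    """Return coarse hints about which layer answered, based on response headers."""
    lowered = {name.lower(): value.lower() for name, value in headers.items()}
    hints: List[str] = []
    if "cf-ray" in lowered or "cloudflare" in lowered.get("server", ""):
        hints.append("cloudflare")
    # Assumption: any header starting with "x-anubis" indicates an Anubis challenge.
    if any(name.startswith("x-anubis") for name in lowered):
        hints.append("anubis")
    if not hints and "server" in lowered:
        # No edge fingerprint found, so report whatever the Server header claims.
        hints.append("origin-or-host:" + lowered["server"])
    return hints or ["unknown"]
```

Pairing this with the status code from a blocked request narrows the search before anyone starts disabling plugins.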

Administrators should also disable security plugins temporarily, then retest to isolate layer interactions. Meanwhile, Cloudflare dashboards list every action under Security » Bots, including AI crawler rules.

If AI Crawler Blocking originates upstream, create explicit allow rules for verified IP ranges or agent strings. Document changes to maintain compliance and reduce future confusion.
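Before writing an allow rule, it helps to confirm that a request claiming to be a given bot really comes from that operator's published ranges. The sketch below uses the standard `ipaddress` module. The bot name and CIDR blocks are placeholders drawn from documentation-only address space, so real ranges must come from each crawler operator.

```python
import ipaddress
from typing import Dict, List

# Placeholder data (assumption): documentation-only ranges, not real crawler IPs.
VERIFIED_RANGES: Dict[str, List[str]] = {
    "ExampleBot": ["192.0.2.0/24", "198.51.100.0/25"],
}


def is_verified(bot: str, ip: str) -> bool:
    """Return True if ip falls inside one of the ranges listed for bot."""
    address = ipaddress.ip_address(ip)
    return any(address in ipaddress.ip_network(cidr)
               for cidr in VERIFIED_RANGES.get(bot, []))


print(is_verified("ExampleBot", "192.0.2.44"))      # inside the first range
print(is_verified("ExampleBot", "198.51.100.200"))  # outside the /25 block
```

An allow rule keyed on both the agent string and a range check like this is harder to abuse than one keyed on the agent string alone.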

Thorough audits turn guesswork into actionable insight. Subsequently, operators can craft proactive strategies instead of reactive patches.

Strategic Recommendations

Firstly, decide whether your growth strategy benefits from appearing in AI answers. If so, allowlist approved bots at the CDN and plugin levels while keeping robots.txt consistent with those rules.
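Consistency between the declared policy and actual behaviour can be checked mechanically. The sketch below assumes you already hold two mappings per bot: whether robots.txt allows it, and the HTTP status the edge returned for it. The function name `find_mismatches` and the sample values are hypothetical.

```python
from typing import Dict


def find_mismatches(robots_allowed: Dict[str, bool],
                    edge_status: Dict[str, int]) -> Dict[str, str]:
    """Report bots whose robots.txt policy disagrees with the observed response."""
    report: Dict[str, str] = {}
    for bot, allowed in robots_allowed.items():
        status = edge_status.get(bot)
        if status is None:
            continue  # No observation for this bot, so nothing to compare.
        reachable = status < 400
        if allowed and not reachable:
            report[bot] = f"robots.txt allows, but the edge returned {status}"
        elif not allowed and reachable:
            report[bot] = "robots.txt disallows, but the edge serves content"
    return report


# Illustrative values only.
print(find_mismatches({"GPTBot": True, "ClaudeBot": False},
                      {"GPTBot": 429, "ClaudeBot": 200}))
```

The first kind of mismatch is the silent block described earlier. The second means the advisory rule is the only thing standing between the bot and the content.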

Secondly, monitor traffic logs for spikes from unverified agents that ignore declared policies, signalling malicious web scraping. Rate limiting combined with cryptographic bot authentication proposals can mitigate those costs.
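Log monitoring of this kind can start small. The sketch below assumes access logs in the common "combined" format and counts requests per bot token and source IP, flagging pairs above a threshold. The regular expression, token list, and threshold are assumptions to adapt, and the script does not verify agents by itself.

```python
import re
from collections import Counter
from typing import Iterable, List, Tuple

# Assumes the combined log format; adjust the pattern for other layouts.
LOG_PATTERN = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]+\] "[^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)
AI_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def count_ai_hits(lines: Iterable[str]) -> Counter:
    """Count requests per (bot token, source IP) for known AI agent strings."""
    counts: Counter = Counter()
    for line in lines:
        match = LOG_PATTERN.match(line)
        if match is None:
            continue  # Skip lines that do not fit the expected format.
        agent = match["agent"].lower()
        for token in AI_TOKENS:
            if token.lower() in agent:
                counts[(token, match["ip"])] += 1
    return counts


def flag_spikes(counts: Counter, threshold: int) -> List[Tuple[str, str]]:
    """Return (token, ip) pairs whose request count exceeds the threshold."""
    return sorted(key for key, hits in counts.items() if hits > threshold)
```

Feeding the flagged IPs into a range check against the operator's published addresses separates genuine crawlers from impostors that merely borrow the agent string.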

Professionals can enhance expertise with the AI Sales™ certification. It teaches negotiation tactics for data licensing and platform deals.

Moreover, update monthly stakeholder reports to include AI Crawler Blocking status and the resulting SEO impact metrics.

These steps align technical controls with commercial goals. Consequently, organizations remain in command of their discoverability narrative.

Conclusion

Silent defaults have placed many WordPress sites behind unseen walls. However, targeted audits quickly reveal whether AI Crawler Blocking hampers visibility or protects revenue.

On its own, robots.txt is insufficient, but combined edge and plugin policies offer granular choice. Furthermore, the data shows the practice is already mainstream and affects SEO across industries. Effective governance also reduces unwanted web scraping traffic that strains infrastructure.

Therefore, review your stack today, adopt transparent policies, and capitalize on AI exposure intentionally. Explore the linked certification to strengthen negotiation skills and lead these conversations confidently.
