AI CERTS

2 months ago

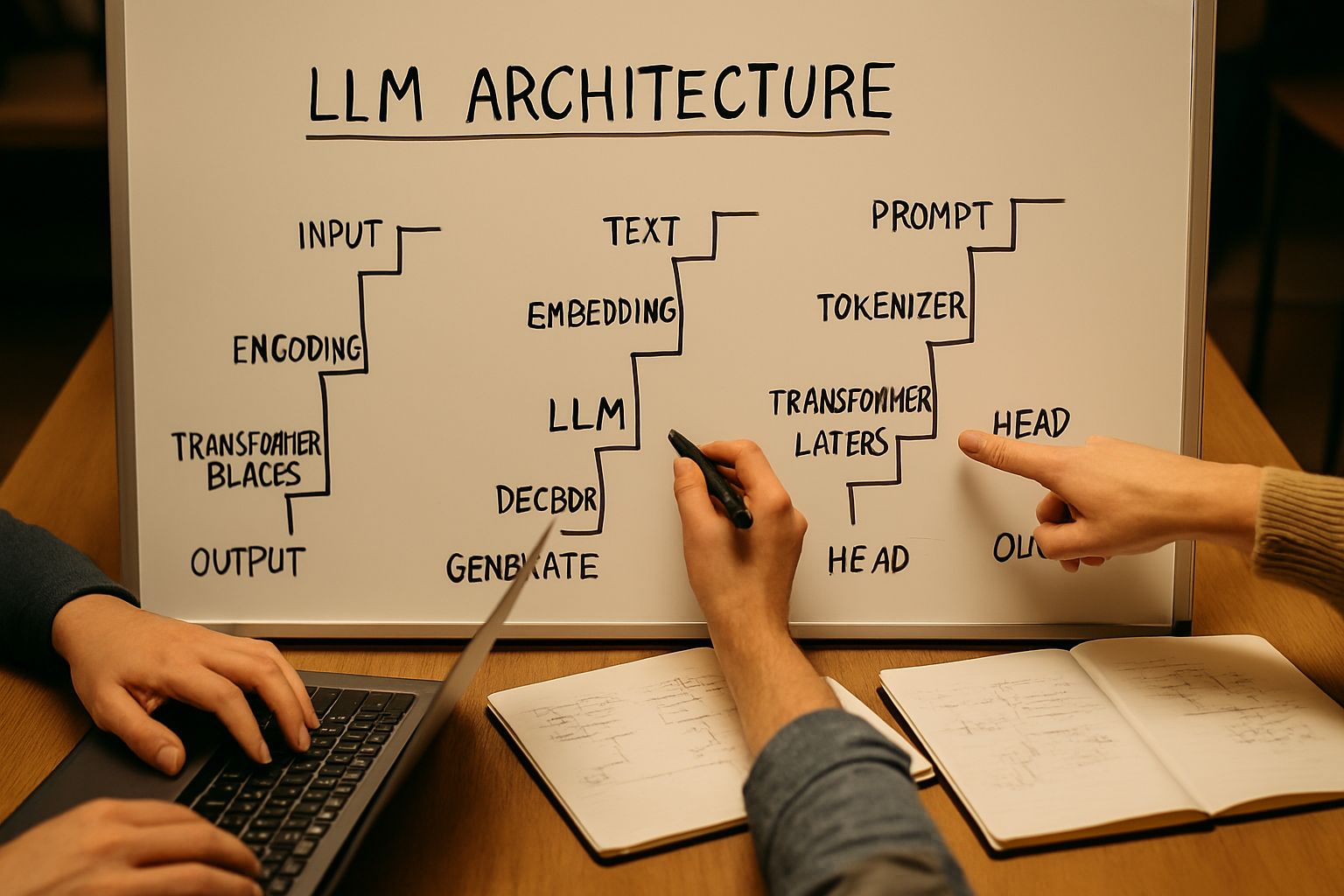

GLM-5.1 Staircase Leap Reshapes LLM Technical Architecture

April Model Release Context

Z.ai unveiled GLM-5.1 on 7 April 2026 under an MIT license. At the same time, the company raised API prices, prompting developers to evaluate self-hosting. Open weights landed on Hugging Face within hours, confirming the permissive licensing promise. Community forks and quantized variants followed in rapid succession.

The model scales to around 750 billion parameters using a Mixture-of-Experts routing scheme, in which each token is processed by only a subset of expert subnetworks. The design advances LLM technical architecture beyond earlier generations. In addition, 128k-token outputs become feasible for multi-hour agent sessions.
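To make the routing idea concrete, here is a minimal sketch of generic top-k Mixture-of-Experts gating in plain Python. It is not GLM-5.1's actual router, which this article does not describe. The names `top_k_route` and `moe_forward`, the toy scalar experts, and the choice of `k=2` are illustrative assumptions.

```python
import math

def top_k_route(gate_logits, k=2):
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    # Indices of the k largest logits, highest first (ties keep original order).
    top = sorted(range(len(gate_logits)),
                 key=lambda i: gate_logits[i], reverse=True)[:k]
    # Softmax over the selected logits only; subtract the max for stability.
    m = max(gate_logits[i] for i in top)
    exps = [math.exp(gate_logits[i] - m) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

def moe_forward(x, gate_logits, experts, k=2):
    """Weighted sum of the selected experts' outputs for a scalar input x."""
    return sum(w * experts[i](x) for i, w in top_k_route(gate_logits, k))

# Toy experts (assumed for illustration): each is a simple scalar function.
experts = [lambda x: x + 1, lambda x: 2 * x, lambda x: x * x, lambda x: -x]

# Experts 1 and 2 tie for the highest logit, so each receives weight 0.5:
# 0.5 * (2 * 3.0) + 0.5 * (3.0 * 3.0) = 7.5
print(moe_forward(3.0, [0.1, 2.0, 2.0, -1.0], experts))
```

Only two of the four experts run for this input, which is how a sparse model can carry a very large parameter count while spending compute on a fraction of it per token.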

In summary, GLM-5.1 ships as a very large yet accessible foundation model. However, raw scale matters only when paired with sustained performance gains, which the next section addresses.

Staircase Pattern Fully Explained

Analysts coined the term Staircase Optimization after observing the VectorDBBench demo. Initially, small tweaks squeeze out incremental speed or recall gains. Subsequently, the agent proposes structural overhauls, such as new indexing graphs, that trigger discrete leaps. As a result, the metrics trace a stepped curve that resembles a staircase.

Four mechanics enable the pattern:

- Persistent context across hundreds of calls

- Inline benchmarking for immediate feedback

- Permission to test sub-optimal variants briefly

- Ability to rewrite higher-level algorithms autonomously
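The four mechanics above can be sketched as a toy search loop. This is a minimal illustration under stated assumptions, not the procedure used in the VectorDBBench demo. The design names `flat_scan` and `graph_index`, the score formula, and the parameters `patience`, `probe_budget`, and `min_gain` are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical designs: (score ceiling, decay rate per tuning step).
DESIGNS = {"flat_scan": (100.0, 0.5), "graph_index": (400.0, 0.9)}

@dataclass
class Candidate:
    design: str   # high-level algorithm in use
    tuning: int   # number of small tweaks applied to it

def benchmark(c: Candidate) -> float:
    """Toy inline benchmark: tuning gives diminishing returns up to a ceiling."""
    ceiling, rate = DESIGNS[c.design]
    return ceiling * (1 - rate ** c.tuning)

def staircase_search(iterations, patience=2, probe_budget=4, min_gain=1.0):
    best = Candidate("flat_scan", 1)
    best_score = benchmark(best)
    history = [best_score]        # persistent context across iterations
    untried = ["graph_index"]     # structural overhauls not yet attempted
    stalled, probe, probe_left = 0, None, 0
    for _ in range(iterations):
        if probe is None and stalled >= patience and untried:
            # Plateau reached: start probing a structurally different design.
            probe, probe_left = Candidate(untried.pop(0), 0), probe_budget
        if probe is not None:
            # Keep tuning the probe for a few steps even while it scores worse.
            probe = Candidate(probe.design, probe.tuning + 1)
            probe_left -= 1
            score = benchmark(probe)
            if score > best_score:
                best, best_score, stalled, probe = probe, score, 0, None
            elif probe_left == 0:
                probe = None      # budget spent: abandon this design
        else:
            # Ordinary step: one more small tweak to the current best design.
            trial = Candidate(best.design, best.tuning + 1)
            score = benchmark(trial)
            if score - best_score >= min_gain:
                best, best_score, stalled = trial, score, 0
            else:
                stalled += 1
        history.append(best_score)
    return best, history

best, history = staircase_search(iterations=12)
print(best.design, [round(s, 1) for s in history])
```

In this toy run the best score climbs through small tweaks, flattens once further tweaks gain less than `min_gain`, and then jumps when the probed design overtakes the incumbent after two steps of scoring worse. That flat stretch followed by a jump is the stepped shape the section describes.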

Each leap is still anchored to measurable benchmarks, which avoids aimless exploration. The approach pushes LLM technical architecture toward adaptive, experiment-driven systems. The stairs illustrate how a longer time budget unlocks deeper changes. The next section inspects the raw numbers that quantify those gains.

Technical Scale And Metrics

Z.ai reports a SWE-Bench Pro score of 58.4, topping several closed models. In contrast, GPT-5.4 posts 55.9 on the same benchmark. In long VectorDBBench runs, queries per second (QPS) rose to 21,500 after 655 iterations. Moreover, KernelBench L3 tests showed 3.6× kernel speedups against autotune baselines.

The staircase effect explains each surge during those optimization loops. GLM-5.1 therefore competes head-to-head with Claude Opus 4.6 while keeping open weights. Such openness invites deeper inspection of the performance envelope of LLM technical architecture. Overall, the reported numbers support the ambitious claims. The next section explores the engineering outcomes enabled by those gains.

Long Horizon Engineering Impact

Extended context lets agents maintain goals through hundreds of compile-test cycles. Furthermore, the model can refactor codebases autonomously, much like a junior developer working overtime. Teams report debugging sessions in which the model authored pull requests and regression suites overnight. In contrast, short-budget benchmarks rarely expose this capacity.

Moreover, the staircase approach aligns with continuous integration pipelines that already measure latency, recall, and cost. Therefore, adopting such loops mainly involves orchestrating existing tooling rather than rewriting everything. Professionals can deepen skills through the AI Researcher™ certification to harness these patterns. Consequently, investments in LLM technical architecture can translate into productivity lifts.

These impacts reposition models from helpers to autonomous collaborators. However, new risks surface when code runs unsupervised for hours.

Operational Integration Risks Seen

Early adopters flagged unstable outputs at maximum context lengths on some hosted endpoints. Meanwhile, Nvidia NIM catalogs lagged, leaving enterprise scripts broken after the GLM-5 deprecation. Additionally, community threads describe sudden memory spikes during optimization loops. Self-hosting mitigates vendor outages but demands a heavy infrastructure budget.

Sandboxing tool calls remains critical because staircase explorations may attempt risky system edits. Therefore, audit trails and rollback checkpoints must be embedded in every automation workflow. Mature LLM technical architecture should integrate such guardrails by default. In short, capability growth raises proportional governance duties. Licensing and market factors also influence adoption decisions, as the next section shows.
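The audit-trail and rollback idea can be sketched in a few lines. The example below is a minimal in-memory illustration, assuming the agent's working state fits in a dictionary. The class name `GuardedWorkspace` and its `apply` method are hypothetical, and the sketch does not provide real process or filesystem sandboxing, which would need operating-system level isolation.

```python
import copy
import time

class GuardedWorkspace:
    """Applies changes one at a time, with a checkpoint and an audit entry each."""

    def __init__(self, state):
        self.state = state
        self.audit = []   # append-only audit trail

    def apply(self, name, change, check):
        # Snapshot the state so a failed step can be undone.
        checkpoint = copy.deepcopy(self.state)
        try:
            change(self.state)              # the agent's proposed edit
            ok = bool(check(self.state))    # validation, e.g. a regression test
            error = None
        except Exception as exc:
            ok, error = False, repr(exc)
        if not ok:
            self.state = checkpoint         # roll back to the snapshot
        self.audit.append(
            {"step": name, "accepted": ok, "error": error, "ts": time.time()}
        )
        return ok

def recall_ok(state):
    # Hypothetical acceptance rule: recall must not drop below 0.95.
    return state["recall"] >= 0.95

ws = GuardedWorkspace({"index": "flat", "recall": 0.95})
ws.apply("switch-index", lambda s: s.update(index="graph", recall=0.97), recall_ok)
ws.apply("risky-edit", lambda s: s.update(recall=0.40), recall_ok)
print(ws.state, [entry["accepted"] for entry in ws.audit])
```

The first step is accepted and the second is rolled back, yet both leave an audit entry, so a reviewer can later see what the agent attempted as well as what it kept.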

Market And Licensing Implications

Z.ai’s open-weight strategy pressures closed providers to justify premium pricing. Moreover, model availability on Hugging Face simplifies academic benchmark replication. Consequently, procurement teams weigh API fees against self-hosted compute outlays.
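The API-versus-self-hosting comparison reduces to a break-even calculation. The sketch below assumes a simple cost model with a flat API price per million tokens, a fixed monthly self-hosting cost, and a marginal self-hosting cost per million tokens. The function name and every figure in the example are hypothetical, not Z.ai's actual pricing.

```python
def breakeven_mtok_per_month(api_price_per_mtok,
                             selfhost_fixed_per_month,
                             selfhost_cost_per_mtok):
    """Monthly volume (in millions of tokens) above which self-hosting is cheaper.

    Returns None when self-hosting never becomes cheaper at these rates.
    """
    margin = api_price_per_mtok - selfhost_cost_per_mtok
    if margin <= 0:
        return None
    return selfhost_fixed_per_month / margin

# Hypothetical inputs: $3.00 per million tokens via API, $25,000 per month
# in fixed hardware and operations cost, $0.50 per million tokens in power.
print(breakeven_mtok_per_month(3.0, 25_000.0, 0.5))  # 10000.0
```

With these assumed figures, self-hosting pays off only above 10,000 million tokens per month. Real comparisons would also need to account for utilization, staffing, and hardware depreciation.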

Meanwhile, firms bound by strict data residency rules often prefer on-premises deployments. Additionally, the MIT license removes many legal frictions around redistribution. Such clarity complements LLM technical architecture roadmaps already in motion. Overall, competitive pressure and permissive terms accelerate experimentation. The final section outlines concrete next steps for practitioners.

Next Steps For Practitioners

First, replicate the VectorDBBench staircase run using your existing hardware stack. Second, measure throughput, latency, and cost against internal baselines to validate each benchmark claim. Third, vary iteration budgets to see when optimization plateaus give way to leaps. Finally, compare hosted, open-router, and self-host variants of the 5.1 release for consistency.
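One way to see where plateaus give way to leaps is to scan the recorded metric history for a large jump that follows a flat stretch. The helper below is a minimal sketch. The name `find_leaps`, its thresholds, and the sample QPS values are illustrative assumptions rather than figures from the GLM-5.1 runs.

```python
def find_leaps(history, plateau_len=3, flat_tol=0.01, jump_tol=0.05):
    """Return indices where a jump of at least jump_tol (relative gain)
    follows at least plateau_len consecutive steps that gained less
    than flat_tol."""
    leaps = []
    flat_run = 0
    for i in range(1, len(history)):
        prev = history[i - 1]
        rel_gain = (history[i] - prev) / prev if prev else float("inf")
        if rel_gain >= jump_tol and flat_run >= plateau_len:
            leaps.append(i)
        # Extend the flat run on a negligible gain; otherwise reset it.
        flat_run = flat_run + 1 if rel_gain < flat_tol else 0
    return leaps

# Hypothetical best-so-far QPS after each iteration of a run.
qps = [100, 110, 110.2, 110.3, 110.4, 150, 150.1, 150.2, 150.2, 210]
print(find_leaps(qps))  # [5, 9]
```

Running the same check at several iteration budgets shows whether a shorter run would have stopped on a plateau just before a leap.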

Professionals should document context windows, token limits, and failure modes systematically. Furthermore, fold the lessons learned into existing LLM technical architecture design reviews. These actions ensure evidence-based adoption rather than hype-driven rollouts. Consequently, teams can maximize returns while containing operational surprises.
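Systematic documentation can be as simple as one structured record per run, written as JSON Lines. The following is a minimal sketch. The `RunRecord` fields, the deployment labels, and the sample values are assumptions chosen for illustration, not a published schema.

```python
import json
from dataclasses import dataclass, field, asdict

@dataclass
class RunRecord:
    deployment: str            # illustrative labels, e.g. "hosted" or "self-host"
    context_tokens: int        # context window used in the run
    max_output_tokens: int     # output token limit configured for the run
    iterations: int            # iteration budget given to the agent
    failure_modes: list = field(default_factory=list)

def to_jsonl(records):
    """Serialize records as JSON Lines, one run per line, with stable key order."""
    return "\n".join(json.dumps(asdict(r), sort_keys=True) for r in records)

# Hypothetical sample values.
records = [
    RunRecord("hosted", 128_000, 8_192, 50, ["truncated output"]),
    RunRecord("self-host", 64_000, 4_096, 200),
]
print(to_jsonl(records))
```

Keeping the records in a flat, line-oriented format makes it easy to diff runs across deployments and to feed them into design reviews.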

Conclusion

GLM-5.1 demonstrates that long-horizon agents can self-optimize beyond today's expectations. Staircase leaps, open weights, and strong benchmarks spark intense industry reflection. Even so, disciplined engineering and governance guardrails remain essential. Adopting robust LLM technical architecture can turn experimental demos into reliable production gains. Therefore, verify claims, harden pipelines, and upskill your staff for agentic futures. Additionally, the AI Researcher™ program sharpens LLM technical architecture skills for ambitious professionals.
