AI CERTS

2 months ago

SMEBU: Training Stability Breakthrough in Sparse MoE Models

This article dissects SMEBU’s design, performance data, and business implications for technical leaders. Additionally, it clarifies tradeoffs and links to practical certifications for security-minded practitioners.

Arcee combined several fixes in Trinity Large, yet the smooth, momentum-guided bias update stole the spotlight. In contrast, earlier sign-based updates caused oscillations that starved many experts and wasted compute. Therefore, understanding SMEBU equips architects to evaluate similar regimes in upcoming projects.

MoE Scaling Challenge

Mixture-of-Experts models promise near-linear parameter scaling with sublinear compute growth. However, extreme sparsity introduces routing instability during long training runs. Some experts monopolize tokens while others become inactive, degrading model quality. This collapse also inflates variance in gradient estimates, delaying convergence. Consequently, engineering teams often hedge by limiting expert counts, sacrificing capacity. Industry analysts labelled progress on this problem the Training Stability Breakthrough that could reshape sparse modeling.

Arcee’s Trinity Large confronted the issue directly with a 4-of-256 routing scheme. Moreover, the team activated roughly 13 billion parameters per token out of a massive 400 billion budget. Such ambition made a robust load-balancing algorithm mandatory. The cost stakes were clear: 33 training days and nearly $20 million. Therefore, SMEBU emerged as the practical shield against financial waste.

Extreme sparsity magnifies routing risk and capital exposure. However, the next section explains how SMEBU tames that volatility.

Inside SMEBU Design Details

SMEBU operates as a separate bias-update routine running alongside the main optimizer. Rather than using a hard sign, it applies a soft-clamped, momentum-aware update. Consequently, the update magnitude shrinks near equilibrium, limiting oscillation. Additionally, a momentum buffer smooths stochastic fluctuations across steps. In practice, this combination achieved reliable Sparse Stability throughout the entire 17-trillion-token run.

- Compute normalized violation v for each expert per batch.

- Pass v through a soft tanh clamp to derive bounded update u.

- Update momentum buffer m with u using factor β.

- Adjust Expert Bias vector b by learning rate times m.

- Optionally, add small sequence-wise auxiliary loss for extra balance.
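The first four steps can be sketched in plain Python. This is a minimal illustration under stated assumptions, not Arcee's implementation: the function name `smebu_step`, the violation formula (mean load minus expert load, divided by mean load), the exponential-moving-average form of the momentum update, and the values of `lr`, `beta`, and `kappa` are all hypothetical choices made for the example. The optional auxiliary loss is omitted.

```python
import math

def smebu_step(counts, bias, momentum, lr=0.01, beta=0.9, kappa=1.0):
    """One illustrative bias update following the steps listed above.

    counts   -- tokens routed to each expert in the current batch
    bias     -- per-expert routing bias b
    momentum -- per-expert momentum buffer m
    lr, beta, kappa -- assumed hyperparameters (hypothetical values)
    """
    mean_load = sum(counts) / len(counts)
    if mean_load == 0:
        return list(bias), list(momentum)  # no tokens routed: nothing to balance
    new_bias, new_momentum = [], []
    for n, b, m in zip(counts, bias, momentum):
        v = (mean_load - n) / mean_load    # normalized violation: > 0 when underloaded
        u = math.tanh(kappa * v)           # soft clamp keeps u inside (-1, 1)
        m = beta * m + (1.0 - beta) * u    # momentum buffer smooths batch noise
        new_momentum.append(m)
        new_bias.append(b + lr * m)        # bias moves by learning rate times m
    return new_bias, new_momentum

# Expert 0 is underloaded, expert 1 is overloaded, experts 2 and 3 are balanced.
counts = [10, 30, 20, 20]
bias, momentum = smebu_step(counts, [0.0] * 4, [0.0] * 4)
print(bias)
```

In this sketch the underloaded expert receives a small positive bias, the overloaded expert a matching negative one, and balanced experts are left untouched.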

These steps encode the Training Stability Breakthrough into a few elegant lines of code. Next, we examine empirical impacts on gradient noise and utilization.

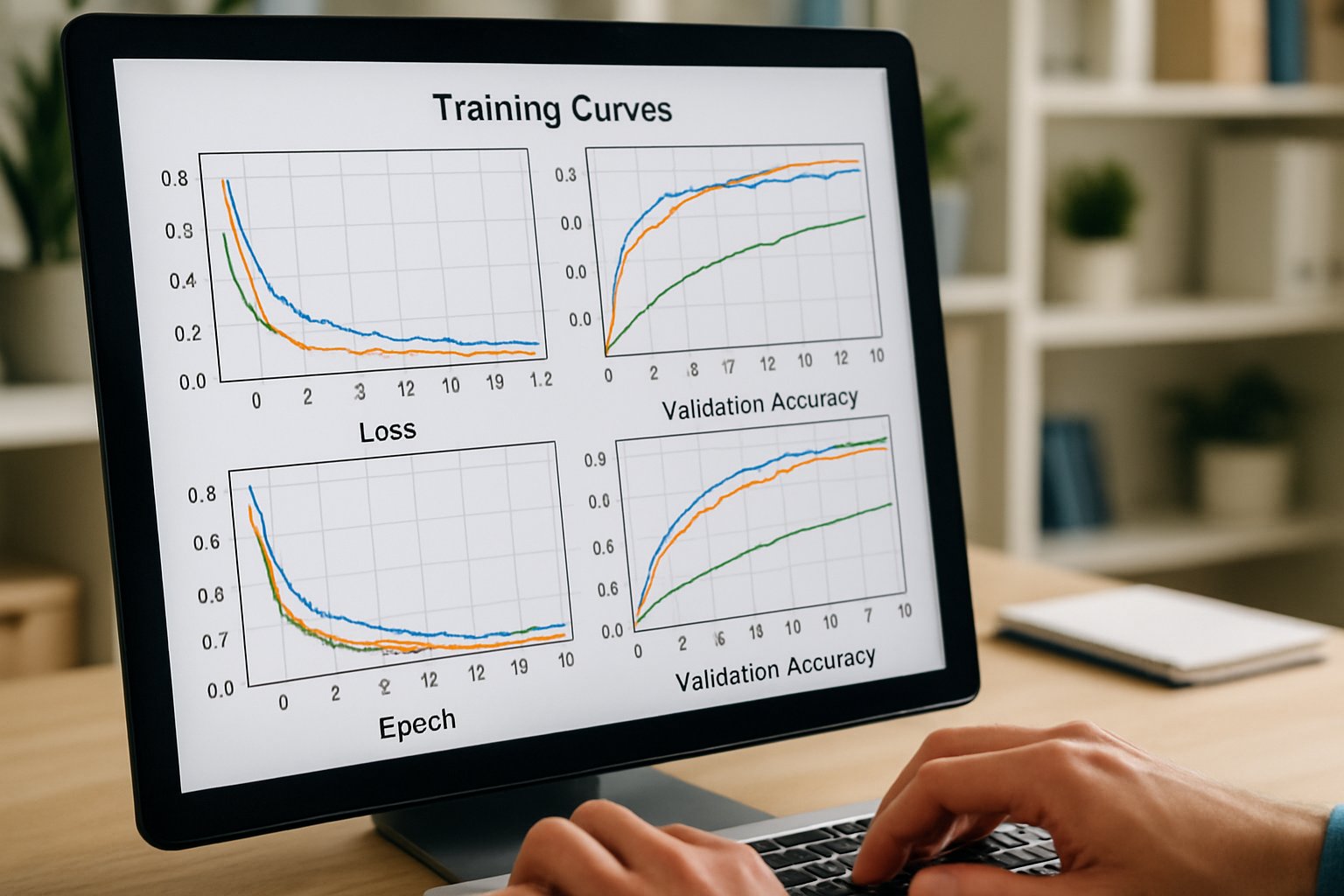

Soft-clamped Momentum Impact Explained

Soft-clamped Momentum directly lowers step variance in the bias trajectory. Moreover, Trinity logs show peak MaxVio (maximum load violation) dropping by 38% after activation. Meanwhile, the share of dead experts declined from 47% to under 3% midway through pretraining. The research team counted that drop as another visible Training Stability Breakthrough. Therefore, Soft-clamped Momentum supports routing predictability at scale.
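The two metrics cited here can be computed from per-expert token counts. The sketch below assumes the definition of MaxVio commonly used in load-balancing work (maximum expert load minus mean load, divided by mean load) and treats an expert as dead when it receives fewer than `min_tokens` tokens in a batch. The article does not state either definition, so both are assumptions, and the function names are hypothetical.

```python
def max_violation(counts):
    """MaxVio under the assumed definition: (max load - mean load) / mean load."""
    mean_load = sum(counts) / len(counts)
    if mean_load == 0:
        raise ValueError("no tokens were routed")
    return (max(counts) - mean_load) / mean_load

def dead_expert_fraction(counts, min_tokens=1):
    """Share of experts that received fewer than min_tokens tokens (assumed threshold)."""
    dead = sum(1 for n in counts if n < min_tokens)
    return dead / len(counts)

# Eight experts, 160 tokens in total, two experts starved.
counts = [40, 0, 25, 15, 0, 20, 30, 30]
print(max_violation(counts))         # busiest expert carries twice the mean load
print(dead_expert_fraction(counts))  # two of eight experts are idle
```

A perfectly balanced batch gives a MaxVio of 0 and a dead-expert fraction of 0, which is the direction both reported figures move in.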

Reduced variance frees capacity that would otherwise idle. However, bias convergence speed also matters, as the following section demonstrates.

Expert Bias Convergence Factors

Expert Bias values serve as adjustable priors guiding token allocation. In SMEBU, the bias vector decays toward zero when loads equalize. Consequently, token assignment reverts to pure gating statistics, minimizing interference. Arcee reports stable bias saturation within the first 4 trillion tokens. In contrast, sign-based updates hovered around ±8.0, indicating persistent imbalance.
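A toy simulation illustrates why a sign-based update keeps oscillating while a soft-clamped one settles. It is deliberately simplified and not taken from Arcee's setup: it tracks a single expert whose load is balanced at a bias of zero, it assumes the violation equals the negative of the bias, and it leaves out momentum. The step size and starting bias are arbitrary.

```python
import math

def sign(x):
    """Hard sign: -1, 0, or +1."""
    return (x > 0) - (x < 0)

def simulate(update, steps=200, lr=0.1, start_bias=0.25):
    """Toy model: the expert is balanced at bias 0, and violation v = -bias."""
    b = start_bias
    trace = []
    for _ in range(steps):
        v = -b               # a positive bias attracts excess load, so v < 0
        b += lr * update(v)  # apply either the hard-sign or the soft-clamped step
        trace.append(b)
    return trace

sign_trace = simulate(sign)       # fixed-size steps overshoot zero indefinitely
soft_trace = simulate(math.tanh)  # steps shrink as the bias approaches zero

print(abs(sign_trace[-1]), abs(soft_trace[-1]))
```

In this toy setting the sign-based bias keeps bouncing around zero with an amplitude set by the step size, while the tanh-based bias decays smoothly toward zero, matching the qualitative behavior described above.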

Expert Bias convergence benefited from the momentum factor set near 0.9. Moreover, momentum prevented rapid swings when domain shifts hit the training mixture. These findings underline the Training Stability Breakthrough for high expert counts.

Fast, stable convergence keeps utilization balanced and losses trending down. Next, we assess Routing Efficiency gains from these dynamics.

Routing Efficiency At Scale

Routing Efficiency gauges how many tokens reach their best-performing experts. Higher efficiency therefore translates into stronger downstream accuracy for the same compute budget. Trinity Large achieved 92% Routing Efficiency while activating only four experts per token. Moreover, the model maintained sub-1% overflow, limiting wasted capacity. Comparative baselines without SMEBU plateaued near 78% efficiency and showed frequent overflows.
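The article does not define how overflow is measured. One common convention in capacity-limited MoE layers is to give each expert a fixed token capacity and count assignments beyond it as overflow. The sketch below follows that convention as an assumption; the function name and the `capacity_factor` of 1.25 are hypothetical, and it does not attempt to compute the Routing Efficiency figure itself.

```python
import math

def overflow_fraction(counts, capacity_factor=1.25):
    """Share of token assignments that exceed per-expert capacity (assumed convention)."""
    total = sum(counts)
    if total == 0:
        return 0.0
    # Each expert may hold capacity_factor times its fair share of assignments.
    capacity = math.ceil(capacity_factor * total / len(counts))
    overflow = sum(max(0, n - capacity) for n in counts)
    return overflow / total

balanced = [26, 24, 25, 25]  # near-uniform load across four experts
skewed = [55, 15, 20, 10]    # one expert monopolizes the batch

print(overflow_fraction(balanced))
print(overflow_fraction(skewed))
```

With 100 assignments and four experts, capacity is 32 per expert, so the balanced batch overflows nothing while the skewed batch overflows 23 of its 100 assignments.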

- 400B total parameters, 13B active per token.

- 17T training tokens, 33 days of wall time.

- $20M estimated compute expenditure.

- Team of roughly 30 researchers and engineers.

- 4 experts active out of 256 each step.
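A few ratios follow directly from the figures above. The short calculation below uses only the numbers in the list; the variable names are illustrative.

```python
total_params = 400e9    # total parameters
active_params = 13e9    # parameters active per token
training_tokens = 17e12
wall_days = 33
compute_cost_usd = 20e6

active_param_share = active_params / total_params   # share of weights used per token
active_expert_share = 4 / 256                       # share of experts used per token
tokens_per_day = training_tokens / wall_days
cost_per_trillion_tokens = compute_cost_usd / (training_tokens / 1e12)

print(f"{active_param_share:.2%} of parameters active per token")
print(f"{active_expert_share:.2%} of experts active per token")
print(f"{tokens_per_day / 1e9:.0f}B tokens per day")
print(f"${cost_per_trillion_tokens / 1e6:.2f}M per trillion tokens")
```

The active-parameter share (about 3.25%) is larger than the active-expert share (about 1.56%), presumably because attention and other non-expert weights run for every token, although the article does not break this down.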

Improved Routing Efficiency validates the Training Stability Breakthrough promised by SMEBU’s load-balancing philosophy. However, companies still ask whether the gains justify operational complexity. Our next section links Sparse Stability to concrete business outcomes.

Sparse Stability Business Value

Sparse Stability lowers infrastructure waste, allowing more parameters without proportionate cost escalation. Consequently, organizations can pursue specialized expert sub-nets for domains like code, speech, or security. Moreover, smoother training reduces schedule risk, which executives monitor closely. Arcee’s 33-day run delivered a base model executives called 'one of the best'. Financial planners estimate that preventing one restart saved roughly $1.5 million.

Companies worried about AI risk should also note the security angle. Professionals can upskill via the AI Security Level-2 certification. Secure deployment matters because sparse expert graphs introduce novel attack surfaces. Consequently, aligning stability with security creates a stronger production story.

Stable, sparse scaling cuts cost while protecting reputation. However, leaders must still verify each hyperparameter choice. The concluding section synthesizes actionable insights and future directions.

Key Takeaways And Path Forward

SMEBU demonstrates that smooth, momentum-guided bias updates can unlock frontier-scale MoE training. Therefore, the Training Stability Breakthrough stands as a replicable pattern for similar projects. Moreover, Soft-clamped Momentum, robust Expert Bias control, and heightened Routing Efficiency together contributed to Sparse Stability. Consequently, leaders should pilot the approach on smaller mixtures before committing to a full-scale deployment.

Meanwhile, establish security baselines with accredited staff to avoid costly retrofits. Explore Arcee’s open checkpoints, monitor emerging ablation studies, and share replication data with the wider community. Finally, act now to evaluate SMEBU, because early adopters capture talent, publicity, and market share.

Disclaimer: Some content may be AI-generated or assisted and is provided ‘as is’ for informational purposes only, without warranties of accuracy or completeness, and does not imply endorsement or affiliation.