AI CERTs

2 months ago

Memvid AI Bully Role Exposes Chatbot Stress Testing Limits

Memvid, a Bay Area startup, wants someone to bully chatbots for money. The company posted a one-day, $800 gig called 'Professional AI Bully' in mid-March 2026. Applicants must berate leading models, record failures, and document how quickly bots forget earlier context. Consequently, the stunt ignited debate across technical and policy circles. Memvid says the exercise spotlights real memory gaps harming user experience every day. However, critics question whether a single recorded session amounts to rigorous research or merely viral advertising. This report unpacks the role, technical context, and broader implications of Chatbot Stress Testing. It also weighs privacy, governance, and market ramifications for enterprises deploying conversational AI. Moreover, readers will find actionable certification advice for advancing prompt-engineering skills.

Memory Failures Spotlighted Today

Large language models still operate within finite context windows. Once a conversation exceeds the token budget, earlier exchanges fall out of the window, and users end up repeating instructions. Memvid’s founders argue that these lapses erode trust and slow adoption. Their unusual Chatbot Stress Testing pitch turns invisible frustration into visible evidence.
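To make the mechanism concrete, here is a minimal Python sketch of how a fixed token budget can push an early instruction out of view. It is an illustration only: `count_tokens` and `fit_to_window` are hypothetical helpers, the word-count "tokenizer" is a crude stand-in for a real one, and actual providers may truncate or compress history differently.

```python
def count_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def fit_to_window(messages: list[str], budget: int) -> list[str]:
    """Keep the most recent messages whose combined token count fits the budget."""
    kept: list[str] = []
    used = 0
    # Walk backwards from the newest message and stop at the first one that does not fit.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = [
    "Always answer in French please",
    "What is the capital of Spain",
    "And what about the capital of Italy",
    "Now list three museums in Rome",
]

visible = fit_to_window(history, budget=20)
dropped = [m for m in history if m not in visible]
print("Dropped:", dropped)
```

With a 20-token budget, the opening instruction about answering in French no longer fits, which is the kind of lapse that forces a user to repeat it.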

Omar, the CEO, told Business Insider that people "constantly repeat themselves" with chatbots. A persistent memory layer, by contrast, could recall those details automatically. Memvid's upcoming product, Kora, aims to provide that retrieval function across providers. The firm then hopes to license the technology to enterprise support teams.

These examples clarify why memory matters for natural dialogue. However, deeper technical factors also shape the challenge ahead.

Inside The AI Role

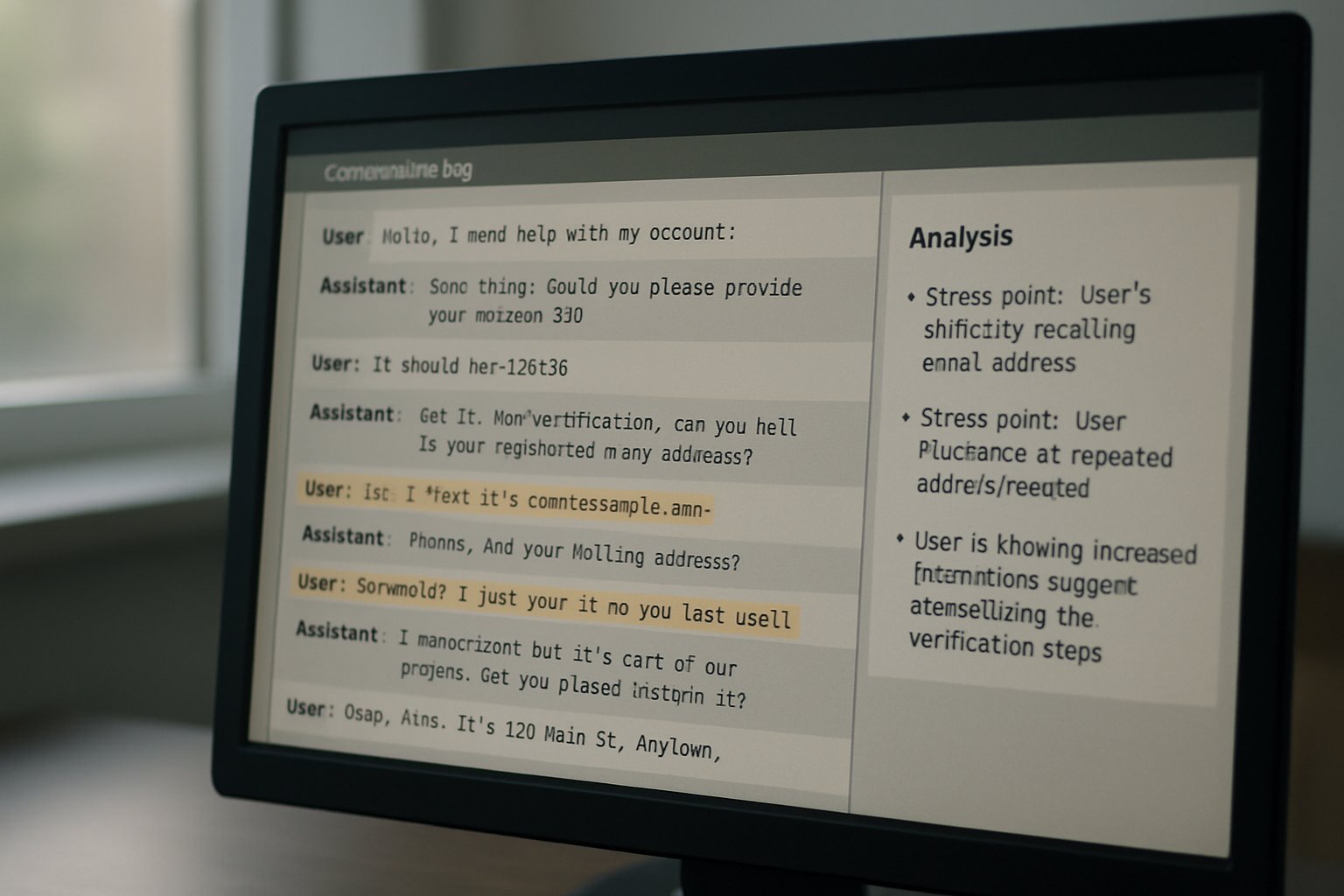

The listing labels the contractor as a Professional AI Bully. Responsibilities center on extended dialogues with at least three mainstream chatbots. The worker must provoke, confuse, and correct the bots to find the point at which they stop retaining earlier context. Detailed notes, screen recordings, and emotional reactions will be captured for later marketing edits.

Compensation stands at $100 per hour for an eight-hour remote shift. Consequently, applicants flocked to the page, many describing themselves as frustrated knowledge workers. Selection will occur quickly because Memvid wants footage while media interest remains high. Session content becomes company property under a standard IP assignment outlined on the form.

- Pay: $100 hourly, $800 total.

- Duration: one eight-hour Chatbot Stress Testing session.

- Role: remote Professional AI Bully.

- Output: recorded transcripts for safety testing analysis.

The job appears simple yet carries unusual contractual hooks. Technical experts, meanwhile, question the later research value of the data.

Technical Context Window Limits

Current models support context windows ranging from roughly 8k to 2M tokens. However, practical use rarely nears the upper extreme because token costs escalate quickly. As a result, long customer chats still drop early details, which users perceive as amnesia. Memvid’s Kora forwards concise interaction summaries back into prompts to create synthetic recall.
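The general idea of feeding summaries back into prompts can be sketched in a few lines of Python. This is not Kora's implementation, which has not been published; `SummaryMemory` and `summarize` are hypothetical names, and the summarizer below simply truncates each turn where a real system would likely call a model.

```python
from dataclasses import dataclass, field


def summarize(turn: str, max_words: int = 6) -> str:
    # Placeholder summarizer (assumption): keep only the first few words of a turn.
    return " ".join(turn.split()[:max_words])


@dataclass
class SummaryMemory:
    max_recent: int = 2                               # turns kept verbatim
    summary: list[str] = field(default_factory=list)  # condensed older turns
    recent: list[str] = field(default_factory=list)   # most recent turns

    def add_turn(self, turn: str) -> None:
        self.recent.append(turn)
        # Move turns that overflow the verbatim window into the running summary.
        while len(self.recent) > self.max_recent:
            oldest = self.recent.pop(0)
            self.summary.append(summarize(oldest))

    def build_prompt(self, user_message: str) -> str:
        parts = []
        if self.summary:
            parts.append("Summary of earlier conversation: " + " | ".join(self.summary))
        parts.extend(self.recent)
        parts.append(user_message)
        return "\n".join(parts)


memory = SummaryMemory(max_recent=2)
for turn in [
    "User: my order number is 4412",
    "Bot: thanks, noted",
    "User: it arrived damaged",
]:
    memory.add_turn(turn)

prompt = memory.build_prompt("User: what was my order number?")
print(prompt)
```

The trade-off in this design is that recall depends entirely on what the summarizer keeps: a detail dropped at summary time is as lost as one that fell out of the context window.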

Academic projects such as MemoryBank pursue similar memory stores with read-write operations. Moreover, researchers test episodic versus semantic encoding schemes to minimize hallucination risk. Industry giants, including OpenAI and Google, run internal patience-test benches that measure recall at varying conversation lengths. Chatbot Stress Testing still demands human observation because subtle failure signals evade automated metrics.
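A recall probe at varying conversation lengths might look like the following Python sketch. Everything here is an assumption for illustration: the toy bot, the `recall_at_lengths` helper, and the "code word" probe are invented, and nothing is known publicly about how vendors structure their internal benches.

```python
from typing import Callable

Bot = Callable[[list[str]], str]
PREFIX = "My code word is "


def make_windowed_bot(window: int) -> Bot:
    """Toy bot (hypothetical) that only sees the last `window` messages."""
    def bot(messages: list[str]) -> str:
        for message in messages[-window:]:
            if message.startswith(PREFIX):
                return message[len(PREFIX):]
        return "I don't know"
    return bot


def recall_at_lengths(bot: Bot, fact: str, filler_counts: list[int]) -> dict[int, bool]:
    """Plant a fact, pad with filler turns, then check whether the bot still recalls it."""
    results: dict[int, bool] = {}
    for n in filler_counts:
        messages = [PREFIX + fact]
        messages += [f"Filler message {i}" for i in range(n)]
        messages.append("What is my code word?")
        results[n] = bot(messages) == fact
    return results


results = recall_at_lengths(make_windowed_bot(window=5), "heron", [0, 3, 4, 10])
print(results)
```

A probe like this only scores exact recall of a planted fact. It says nothing about tone, partial recall, or confident wrong answers, which is where human observation still matters.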

Persistent memory remains an open engineering frontier despite rapid model scaling. Consequently, Memvid’s recorded showdown may surface qualitative gaps unseen in benchmarks.

Marketing Or Meaningful Research

Many observers label the campaign a spectacle rather than science. Nevertheless, user-research experts note that vivid demonstrations grab executive attention. Memvid gains free press, new sign-ups, and valuable telemetry from the application flow. Each applicant effectively completes a mini patience test while trialing Kora’s interface.

Critics argue that a single performer introduces sampling bias and motivation artifacts. In contrast, rigorous safety testing spans diverse tasks, personas, and adversarial prompts. Memvid has not released a metric framework, which makes external validation impossible for now. Omar insists transparency will follow once marketing clips debut.

Questions about method remain even as hype accelerates. Next, privacy advocates warn about recorded conversational data.

Privacy And Governance Concerns

Persistent memories create longitudinal user profiles that can reveal sensitive themes. Furthermore, researchers at the Center for Democracy & Technology (CDT) urge strict purpose limitation and verifiable deletion policies. Memvid claims recordings will portray only the hired AI bully, yet bot outputs might include personal data. Comprehensive safety testing protocols usually redact such information before publication.
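As a rough illustration of the redaction step, the Python sketch below masks email addresses and phone-like numbers in a transcript line. The patterns and the `redact` helper are assumptions made for this example; they are deliberately simple and would miss names, addresses, and many other identifiers that a production pipeline needs to handle.

```python
import re

# Simplified patterns (assumptions): real pipelines use broader detectors.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like digit runs with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    return text


line = "Bot: I have you on file as jane.doe@example.com or +1 415 555 0100."
print(redact(line))
```

Pattern-based redaction is best treated as a first pass, with a human review of anything destined for public release.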

Legal observers also question whether vendor terms permit full republishing of model transcripts. Meanwhile, consent agreements transfer all session IP to Memvid, limiting participant control after release. Privacy experts argue for granular licenses that separate research data from promotional footage. A public patience test without safeguards could teach malicious actors to reverse-engineer user habits.

Robust Chatbot Stress Testing frameworks remain essential as memory features mature. Consequently, attention shifts to industry reactions and standards efforts.

Industry Response And Outlook

Developers tracking the story see echoes of earlier red-team bounties. Moreover, enterprise buyers welcome any data that quantifies chatbot failure rates in real settings. Some vendors already run internal safety testing programs but rarely publish raw interactions. Memvid’s episode may push competitors toward public Chatbot Stress Testing disclosures.

Standards bodies like ISO and NIST explore benchmarks for conversational memory integrity. Additionally, venture investors watch whether Memvid converts publicity into sustainable contracts. Professionals can enhance their expertise with the AI Prompt Engineer™ certification. The program includes modules on advanced Chatbot Stress Testing methodologies.

Industry momentum suggests memory fixes will accompany stricter oversight. Nevertheless, outcomes hinge on transparent experiments and shared metrics.

Key Lessons Moving Forward

Memvid’s stunt triggered lively conversation across engineering, policy, and marketing teams. Ultimately, consistent Chatbot Stress Testing will decide whether memory layers ship safely at scale. Future patience test suites should sample broader demographics and longer timelines. Moreover, transparent Chatbot Stress Testing results could anchor upcoming industry benchmarks. Vendors may, in turn, open similar bounties that go beyond a single AI bully performance. Professionals interested in shaping these standards should explore the certification mentioned above. Start your own Chatbot Stress Testing journey today and contribute to safer conversational AI.
