AI CERTs

2 months ago

MongoDB’s Unified Platform Reshapes AI Data Retrieval

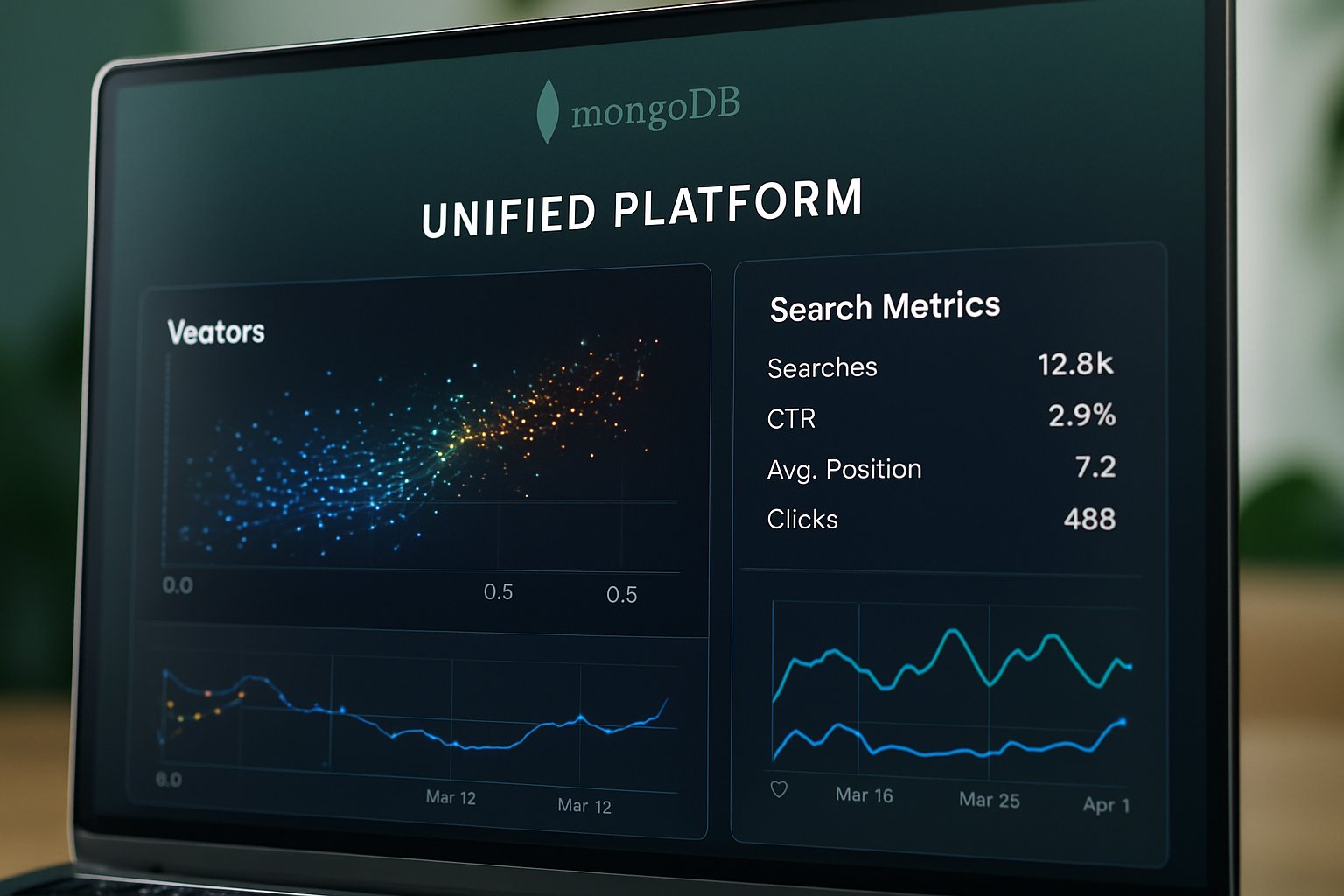

Generative AI projects often stall when teams juggle multiple data services: engineering velocity drops and governance weakens. MongoDB now proposes a different path. The company has spent 18 months folding advanced retrieval, embedding, and reranking directly into its core document store. This deep integration creates a Unified Platform for operational and AI workloads and promises fewer moving parts for Retrieval-Augmented Generation (RAG) pipelines. Investors, developers, and competitors are watching closely. The February 2025 Voyage AI acquisition and the January 2026 Automated Embedding preview headline the shift. Meanwhile, hyperscaler partnerships with AWS, Azure, and Google Cloud keep Atlas available wherever builders need it. In contrast, specialist vector databases race to maintain performance advantages. This article unpacks the market context, technical details, and strategic stakes. Readers will learn why database consolidation matters, where innovation still flourishes, and how search quality could decide enterprise adoption.

Market Forces Rapid Shift

Spending on vector databases is growing quickly: MarketsandMarkets expects revenue to approach USD 8.9 billion by 2030. Therefore, investors view retrieval tooling as the next cloud gold rush. However, customers dislike running yet another specialized store, because operational complexity raises cost, governance risk, and latency variance. Consequently, consolidation waves have begun across the software stack. MongoDB is riding that wave by positioning itself as a Unified Platform for AI retrieval. Moreover, the company already serves millions of developers, giving it a distribution advantage. Industry analyst firms note that 70 percent of Fortune 100 companies use MongoDB today. These adoption numbers give management confidence to challenge pure-play vector peers. In contrast, startups like Pinecone and Weaviate argue that focus yields lower latency. Nevertheless, boardrooms usually bet on integrated roadmaps when transformation risk is high. This market dynamic frames every MongoDB product decision.

Section summary: Spending is rising fast, and integration pressure favors vendors offering a single control plane. The key milestones that follow show how strategy turned into shipped code.

Timeline Milestones Drive Innovation

Understanding the rollout sequence clarifies technical progress.

- May 2, 2024: The Atlas Vector Search integration with Amazon Bedrock reached general availability.

- Nov 19, 2024: Azure OpenAI guidance made RAG prototypes easier.

- Feb 24, 2025: MongoDB acquired Voyage AI for $220 million.

- Jan 15, 2026: Automated Embedding entered public preview.

- Jan 2026: Voyage-4 model family and reranking APIs were announced.

Moreover, each event reduced external plumbing. For example, Automated Embedding lets teams store raw documents while the database generates the vectors automatically. The launch cadence suggests that innovation is now baked into the release pipeline rather than gated behind labs. These milestones demonstrate momentum. However, product depth matters more than press releases.

Section summary: Rapid releases show execution discipline. Therefore, technical capabilities deserve a closer inspection.

Unified Database Search Strategy

MongoDB positions Atlas as the application runtime, data store, and retrieval engine. Consequently, the Unified Platform message centers on three features. First, Vector Search uses the mongot engine with HNSW indexing for millisecond similarity queries. Second, Automated Embedding calls Voyage models during writes and updates, eliminating external embedding loops. Third, the new reranking API refines top-k results, boosting retrieval precision for RAG prompts. Moreover, self-managed users can audit the pipeline because MongoDB released mongot under the SSPL. In contrast, closed-source managed vector services such as Pinecone are harder to inspect. Developers gain governance benefits because data never leaves the database boundary during preprocessing. Meanwhile, runtime latency stays acceptable for most enterprise SLAs. Voyage-4 models reportedly score within two points of OpenAI text-embedding-3 benchmarks. Therefore, teams can balance cost and quality without separate hosting contracts. Unified Platform advocates also highlight query syntax simplicity. One index definition covers keyword, vector, and hybrid search, reducing DSL switching. These design choices illustrate why MongoDB claims a differentiated approach.
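To make the first feature concrete, here is a minimal sketch of how a similarity query might be expressed as an aggregation pipeline. The `$vectorSearch` stage and the `vectorSearchScore` metadata field come from the Atlas Vector Search documentation; the index name `vector_index`, the fields `embedding` and `title`, and the helper function itself are hypothetical, and the sketch assumes the caller already holds a query vector.

```python
from typing import Any, Dict, List, Optional


def build_vector_search_pipeline(
    query_vector: List[float],
    index_name: str = "vector_index",  # hypothetical index name
    path: str = "embedding",           # hypothetical field that stores vectors
    limit: int = 5,
    num_candidates: int = 100,
    metadata_filter: Optional[Dict[str, Any]] = None,
) -> List[Dict[str, Any]]:
    """Build an aggregation pipeline for an approximate nearest-neighbor query."""
    if num_candidates < limit:
        raise ValueError("num_candidates must be >= limit")

    stage: Dict[str, Any] = {
        "index": index_name,
        "path": path,
        "queryVector": query_vector,
        "numCandidates": num_candidates,  # candidates examined by the ANN search
        "limit": limit,                   # documents actually returned
    }
    if metadata_filter:
        stage["filter"] = metadata_filter  # optional pre-filter on indexed fields

    return [
        {"$vectorSearch": stage},
        # Expose the similarity score next to a (hypothetical) title field.
        {"$project": {"title": 1, "score": {"$meta": "vectorSearchScore"}}},
    ]


pipeline = build_vector_search_pipeline([0.12, -0.03, 0.88], limit=3)
```

With a driver such as PyMongo, the resulting list would typically be passed to `collection.aggregate(pipeline)`. Widening `num_candidates` relative to `limit` generally trades latency for recall.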

Section summary: Embedding, retrieval, and reranking live together, turning the database into a full AI retrieval hub. Next, we examine the technical features in more detail.

Technical Features In Software

Automated Embedding attaches an autoEmbed flag to any Atlas Vector Search index. Subsequently, the service intercepts insert and update events, batches content, and calls Voyage models asynchronously. Therefore, developers avoid creating change-stream pipelines and scheduled jobs. Moreover, automatic retries handle transient failures, which keeps production uptime predictable. Reranking operates as a second query stage; it accepts the initial candidate set and returns a reordered list. Meanwhile, heuristic fallbacks maintain answer quality if the model times out. The database stores both dense vectors and metadata in the same document, which simplifies backup policies. In contrast, external model services require copying sensitive data into separate systems. Performance benchmarks shared by MongoDB show 10k QPS on commodity hardware with a median latency of 10 milliseconds. Nevertheless, specialized vector engines may still lead on tail latency for high-frequency trading scenarios. Unified Platform evangelists argue the trade-off is acceptable for mainstream workloads.
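The batch, retry, and fallback behavior described above can be illustrated with a small sketch. This is not MongoDB's implementation: every function name here is hypothetical, `embed_fn` and `rerank_fn` are stand-ins for model calls, and the loop runs synchronously for simplicity where the real service is described as asynchronous.

```python
import time
from typing import Callable, Iterator, List, Sequence

Vector = List[float]
EmbedFn = Callable[[Sequence[str]], List[Vector]]  # stand-in for a model call


def batched(items: Sequence[str], size: int) -> Iterator[Sequence[str]]:
    """Yield consecutive slices of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def embed_with_retry(texts, embed_fn: EmbedFn, max_attempts=3,
                     base_delay=0.5, sleep=time.sleep) -> List[Vector]:
    """Call embed_fn, retrying on TimeoutError with exponential backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            return embed_fn(texts)
        except TimeoutError:
            if attempt == max_attempts:
                raise  # give up after the final attempt
            sleep(base_delay * 2 ** (attempt - 1))
    raise ValueError("max_attempts must be at least 1")


def embed_documents(docs: Sequence[str], embed_fn: EmbedFn,
                    batch_size=2, **retry_options) -> List[Vector]:
    """Embed documents batch by batch, preserving input order."""
    vectors: List[Vector] = []
    for batch in batched(docs, batch_size):
        vectors.extend(embed_with_retry(batch, embed_fn, **retry_options))
    return vectors


def rerank_with_fallback(candidates: Sequence[str], rerank_fn) -> List[str]:
    """Second stage: reorder candidates, keeping first-stage order on timeout."""
    try:
        return rerank_fn(candidates)
    except TimeoutError:
        return list(candidates)
```

Injecting `sleep` as a parameter keeps the backoff testable without real delays. The fallback shown here simply preserves the first-stage order, which is one plausible heuristic among several.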

Section summary: Internal automation and near-native latency showcase practical innovation. We now turn to risks and competitive pressures shaping adoption.

Competitive Landscape And Risks

Competition remains fierce even with MongoDB's momentum. Pinecone, Weaviate, and Redis tout lower tail latency and memory-efficient indexes. However, those players lack the broad operational feature set of a mature database. Furthermore, some architects worry about vendor lock-in if retrieval, models, and orchestration reside in one Unified Platform. Licensing debates add friction. The SSPL increases transparency yet differs from permissive open source norms. Consequently, risk-averse teams may hesitate until commercial terms stabilize. Analysts also warn that better retrieval reduces hallucinations but never eliminates generation errors. Therefore, guardrails, evaluation suites, and human review remain essential. Search ranking quality can still drift when documents evolve rapidly. Nevertheless, MongoDB's hyperscaler partners give customers exit options if latency floors become unacceptable.
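One common way to watch for the ranking drift mentioned above is to track recall@k against a small labeled query set. The sketch below is an illustrative assumption rather than anything MongoDB ships; the query IDs, document IDs, and function names are all hypothetical.

```python
from typing import Dict, List, Set


def recall_at_k(retrieved: List[str], relevant: Set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top-k results."""
    if k <= 0:
        raise ValueError("k must be positive")
    if not relevant:
        raise ValueError("relevant set must not be empty")
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def mean_recall_at_k(runs: Dict[str, List[str]],
                     labels: Dict[str, Set[str]], k: int) -> float:
    """Average recall@k over every labeled query."""
    scores = [recall_at_k(runs[query], labels[query], k) for query in labels]
    return sum(scores) / len(scores)


# Hypothetical ranked results and relevance labels for two queries.
runs = {"q1": ["a", "b", "c"], "q2": ["x", "y", "z"]}
labels = {"q1": {"a", "c"}, "q2": {"z"}}
score = mean_recall_at_k(runs, labels, k=2)
```

Re-running the same labeled set after large document updates, and comparing the score with an earlier baseline, gives a simple signal for when human review is needed.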

Section summary: Performance rivals and legal nuances temper enthusiasm. Yet customer benefits often outweigh these concerns, which we explore next.

Customer Impact And Benefits

Enterprises adopting the Unified Platform report shorter delivery cycles for AI pilots. For instance, a global pharma firm ingested 12 million research abstracts in one week without external pipelines. Additionally, governance officers praised the fact that no sensitive records left the core database. Furthermore, internal cost models showed 35 percent savings compared with a two-service architecture. Key advantages include:

- Reduced MLOps staffing because embeddings generate automatically.

- Lower data egress fees since search happens near storage.

- Faster iteration due to single query language across CRUD and retrieval.

Moreover, teams can boost leadership credibility by pairing deployments with recognised credentials. Professionals can enhance their expertise with the AI Executive Essentials™ certification. Consequently, staff align technical delivery with executive priorities.

Innovation continues as Voyage releases multimodal models for images and code. MongoDB intends to reach general availability for Automated Embedding on Atlas later this year. Subsequently, the company will expose managed Voyage models through consumption-based endpoints. Moreover, deeper Bedrock and Vertex AI integrations are on the near horizon. CEO Dev Ittycheria has stated that a Unified Platform remains the guiding principle for all product groups. Meanwhile, specialist vendors may partner with orchestrators to retain niche advantages. Industry watchers expect market consolidation as enterprises favor integrated offerings over piecemeal software stacks. Therefore, we anticipate stronger hybrid retrieval features, cross-cluster embedding sharing, and real-time reranking improvements.

Section summary: MongoDB is marrying retrieval features with broad ecosystem support, pointing toward accelerated feature delivery. With context set, we can now extract final lessons for technical leaders.

Conclusion And Next Steps

MongoDB's 18-month sprint has redefined expectations for an enterprise data layer. The company now offers a Unified Platform that blends operational storage with AI retrieval out of the box. Meanwhile, competitors push on latency and openness, ensuring healthy innovation for customers. Nevertheless, adoption trends suggest that teams value simplicity and governance above marginal speed gains. This reality favors a Unified Platform strategy anchored by automated embedding, vector retrieval, and integrated models. Therefore, forward-looking leaders should evaluate total cost, lock-in risk, and skill development plans. Explore technical previews, read benchmark disclosures, and secure executive buy-in. Finally, consider formal upskilling paths, including the AI Executive Essentials™ certification, to keep talent aligned with rapid product evolution.

Disclaimer: Some content may be AI-generated or assisted and is provided ‘as is’ for informational purposes only, without warranties of accuracy or completeness, and does not imply endorsement or affiliation.