AI CERTS

2 days ago

Scientific AI Gains Stability With UPenn Mollifier Layers

UPenn's Mollifier Layers address a persistent bottleneck for simulation, biology, and climate workloads: computing stable high-order derivatives inside neural PDE solvers. Early benchmarks show dramatic memory savings without sacrificing accuracy. This report unpacks the method, key results, and future implications for data-driven science.

Why Derivatives Often Struggle

Inverse PDEs demand gradients, Hessians, and sometimes fourth-order terms. Recursive autodiff must differentiate back through the network once per derivative order, which multiplies memory costs and amplifies rounding noise. Noisy experimental data makes the instability worse and degrades solution quality.

In contrast, the analytic derivatives of a mollifier kernel (a smooth, localized averaging function) remain smooth and bounded. Therefore, convolving network outputs with the kernel's derivative yields derivative estimates that resist noise. The UPenn authors saw this mathematical property as a chance to streamline learning.
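To make the noise argument concrete, here is a minimal one-dimensional sketch. It is not the authors' code: it uses the textbook bump function as the mollifier, applies it to raw noisy samples of sin(x) rather than to network outputs, and compares against central finite differences as a stand-in for naive differentiation. The grid spacing, kernel radius, noise level, and all function names are assumptions chosen for illustration.

```python
import math
import random


def bump(t):
    """Unnormalised standard mollifier: exp(-1 / (1 - t^2)) for |t| < 1, else 0."""
    if abs(t) >= 1.0:
        return 0.0
    return math.exp(-1.0 / (1.0 - t * t))


def bump_prime(t):
    """Closed-form derivative of bump(t); smooth and bounded everywhere."""
    if abs(t) >= 1.0:
        return 0.0
    return bump(t) * (-2.0 * t) / (1.0 - t * t) ** 2


def derivative_weights(radius, h):
    """Stencil for u' on samples spaced h apart; kernel width eps = radius * h."""
    eps = radius * h
    ts = [j / radius for j in range(-radius, radius + 1)]
    mass = sum(bump(t) for t in ts) * h  # discrete integral of bump(x / eps)
    # d/dx [bump(x / eps) / mass] = bump_prime(x / eps) / (eps * mass);
    # the extra factor h is the quadrature weight of the convolution sum.
    return [bump_prime(t) * h / (eps * mass) for t in ts]


def mollified_derivative(u, radius, h):
    """Estimate u' at interior samples as the discrete convolution (phi_eps' * u)."""
    w = derivative_weights(radius, h)
    out = []
    for i in range(radius, len(u) - radius):
        # weight index k is offset j = k - radius, paired with u[i - j]
        out.append(sum(w[k] * u[i - (k - radius)] for k in range(len(w))))
    return out


# Assumed test setup: noisy samples of sin(x) on [0, 2*pi).
random.seed(0)
h, radius, sigma = 0.01, 30, 0.01
xs = [i * h for i in range(629)]
noisy = [math.sin(x) + random.gauss(0.0, sigma) for x in xs]

interior = xs[radius:len(xs) - radius]
est = mollified_derivative(noisy, radius, h)
err_mollified = max(abs(e - math.cos(x)) for e, x in zip(est, interior))

# Central differences on the same noisy samples, for comparison.
fd = [(noisy[i + 1] - noisy[i - 1]) / (2 * h)
      for i in range(radius, len(xs) - radius)]
err_fd = max(abs(d - math.cos(x)) for d, x in zip(fd, interior))

print("max error, mollified derivative:", err_mollified)
print("max error, central differences: ", err_fd)
```

The stencil is computed once and reused at every grid point, and its weights never exceed a fixed bound, so the noise in the samples is averaged rather than divided by a small step size.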

These pain points define the engineering stakes, and an efficient remedy was missing until now. The next section examines the proposed one in detail.

Enter UPenn Mollifier Layers

UPenn’s module performs one fixed convolution per derivative order, regardless of network depth. Additionally, the layer bolts onto any architecture without code rewrites. That plug-and-play trait impressed reviewers at TMLR, which accepted the paper in March 2026.
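The "one fixed convolution per derivative order" idea can be sketched as a small class that precomputes one stencil per order and applies it to any output array. This is an illustrative assumption, not the published layer: a truncated Gaussian stands in for the mollifier because its derivatives have a simple closed form through Hermite polynomials, and the class name, `sigma`, `cutoff`, and the 1D setting are all hypothetical.

```python
import math


def hermite(n, t):
    """Probabilists' Hermite polynomial He_n(t), via He_{k+1} = t*He_k - k*He_{k-1}."""
    prev, cur = 1.0, t
    if n == 0:
        return prev
    for k in range(1, n):
        prev, cur = cur, t * cur - k * prev
    return cur


class GaussianDerivativeStencils:
    """Precomputes one convolution stencil per requested derivative order."""

    def __init__(self, sigma, h, orders=(1, 2, 4), cutoff=6.0):
        self.radius = int(round(cutoff * sigma / h))  # truncate kernel at cutoff * sigma
        self.weights = {n: self._stencil(n, sigma, h) for n in orders}

    def _stencil(self, n, sigma, h):
        w = []
        for j in range(-self.radius, self.radius + 1):
            t = j * h / sigma
            phi = math.exp(-0.5 * t * t) / (sigma * math.sqrt(2.0 * math.pi))
            # n-th derivative of the Gaussian: (-1)^n He_n(t) / sigma^n * phi
            w.append((-1) ** n * hermite(n, t) / sigma ** n * phi * h)
        return w

    def apply(self, u, order):
        """Discrete convolution of the order-th derivative stencil with samples u."""
        w, r = self.weights[order], self.radius
        return [sum(w[k] * u[i - (k - r)] for k in range(len(w)))
                for i in range(r, len(u) - r)]


# Assumed check: derivatives of sin(x) on a uniform grid.
h, sigma = 0.01, 0.2
xs = [i * h for i in range(700)]
u = [math.sin(x) for x in xs]

layer = GaussianDerivativeStencils(sigma, h)
inner = xs[layer.radius:len(xs) - layer.radius]

err1 = max(abs(a - math.cos(x)) for a, x in zip(layer.apply(u, 1), inner))
err2 = max(abs(a + math.sin(x)) for a, x in zip(layer.apply(u, 2), inner))
err4 = max(abs(a - math.sin(x)) for a, x in zip(layer.apply(u, 4), inner))
print(err1, err2, err4)
```

The cost of `apply` depends only on the stencil length and the number of samples, not on how `u` was produced, which is the property the article attributes to the layer. The residual error here is dominated by smoothing bias, which shrinks as `sigma` shrinks.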

Ananyae Kumar Bhartari noted, “We assumed the architecture was faulty, yet the real bottleneck was recursive autodiff itself.” Meanwhile, senior author Vivek B. Shenoy likened inverse problems to tracing pond ripples back to the pebble.

Design simplicity hides solid math. Mollification guarantees infinitely differentiable approximations, granting provable error bounds under discretization and measurement noise. Hence, derivative stability improves by design.
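For readers who want the underlying facts, the following are standard textbook statements about mollifiers in one dimension. They are not the specific bounds proved in the paper, which are not reproduced here; the symbols (kernel φ, width ε, Lipschitz constant L, noise bound δ) are introduced only for this illustration.

```latex
% Standard mollifier and its rescaling (C normalises the integral to 1)
\varphi(x) =
\begin{cases}
  C \exp\!\left(-\dfrac{1}{1 - x^2}\right), & |x| < 1,\\[4pt]
  0, & |x| \ge 1,
\end{cases}
\qquad
\varphi_\varepsilon(x) = \frac{1}{\varepsilon}\,\varphi\!\left(\frac{x}{\varepsilon}\right),
\qquad
\int \varphi_\varepsilon(x)\,dx = 1 .

% Smoothness: every derivative moves onto the kernel
\frac{d^n}{dx^n}\,(\varphi_\varepsilon * u) = \varphi_\varepsilon^{(n)} * u .

% Smoothing bias for an L-Lipschitz function u
\bigl|(\varphi_\varepsilon * u)(x) - u(x)\bigr|
  \le \int \varphi_\varepsilon(y)\,\bigl|u(x - y) - u(x)\bigr|\,dy
  \le L\,\varepsilon .

% Noise amplification for a perturbation \eta with |\eta| \le \delta
\bigl|(\varphi_\varepsilon^{(n)} * \eta)(x)\bigr|
  \le \delta\,\bigl\|\varphi_\varepsilon^{(n)}\bigr\|_{L^1}
  = \delta\,\varepsilon^{-n}\,\bigl\|\varphi^{(n)}\bigr\|_{L^1} .
```

The last two lines show the trade-off that kernel tuning has to balance: a wider kernel suppresses noise in the n-th derivative by a factor of ε⁻ⁿ but increases smoothing bias.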

Advantages now appear tangible. However, practical numbers speak louder than theory.

Performance Gains Quantified Clearly

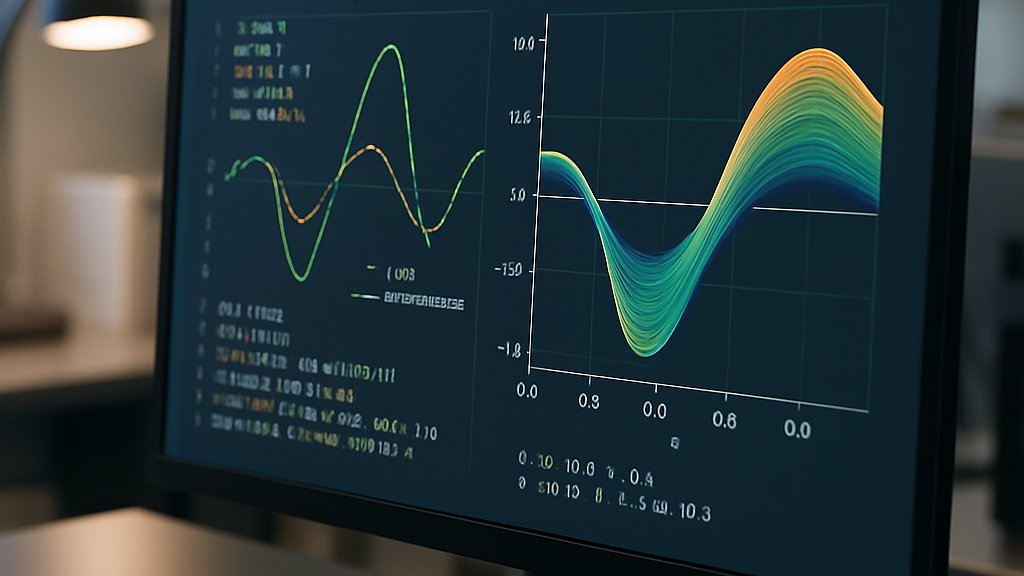

The team compared baseline PINNs against Mollifier-enhanced versions on three benchmarks.

- Reaction-diffusion (4th order): time fell from 3,386 s to 335 s; peak memory dropped from 2.75 GB to 0.23 GB.

- 2D heat equation: average speedups remained above 6×, with derivative correlations rising sharply.

- 1D Langevin task: accuracy matched baselines while still cutting resources.

Moreover, Laplacian correlation leaped from 0.21 to 0.78 in the reaction-diffusion test, highlighting noise resilience. Consequently, stability gains translate into real scientific wins.
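The ratios implied by the reaction-diffusion figures above can be checked with a few lines of arithmetic. The numbers are taken from the benchmark list; only the variable names are invented.

```python
# Reaction-diffusion benchmark figures quoted in the article.
baseline_time_s, mollifier_time_s = 3386.0, 335.0
baseline_mem_gb, mollifier_mem_gb = 2.75, 0.23

speedup = baseline_time_s / mollifier_time_s
memory_ratio = baseline_mem_gb / mollifier_mem_gb

print(f"speedup: {speedup:.1f}x")                # speedup: 10.1x
print(f"memory reduction: {memory_ratio:.1f}x")  # memory reduction: 12.0x
```

On this benchmark the run is roughly 10 times faster and uses roughly 12 times less peak memory.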

Those metrics validate analytic expectations. Nevertheless, laboratory data provide the final proof.

Biological Data Case Study

The group fed super-resolution chromatin imaging into a Mollifier-powered model. Subsequently, the network recovered spatially varying epigenetic reaction rates that standard PINNs missed. Researchers attributed the success to reliable fourth-order derivatives, critical for chromatin mechanics.

Furthermore, the experiment illustrates cross-domain reach: materials science, seismology, and climate modeling also rely on noisy sensors and complex PDEs. The lightweight layer thus extends Scientific AI to messier laboratories.

Biological validation underscores wider relevance. However, every technique carries caveats deserving balanced review.

Key Strengths And Caveats

The authors list four main strengths:

- 6–10× reductions in GPU memory and wall-clock time.

- Improved high-order derivative accuracy, particularly for fourth-order terms.

- Architecture-agnostic design, easing integration into existing workflows.

- Robustness to measurement noise, a typical hurdle in scientific imaging.

Nevertheless, smoothing introduces bias. High-frequency ground truth may vanish if the kernel radius is poorly chosen. Additionally, boundary effects persist on irregular meshes. Therefore, adaptive kernels and boundary-aware formulations appear on the roadmap.
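The smoothing bias can be quantified for a single sinusoid. Convolving cos(kx) with a symmetric kernel only rescales its amplitude, so the attenuation factor shows directly how much of a given frequency survives. The sketch below assumes the textbook bump kernel and a hypothetical helper named `attenuation`; the kernel used in the paper may differ.

```python
import math


def bump(t):
    """Unnormalised standard mollifier: exp(-1 / (1 - t^2)) for |t| < 1, else 0."""
    if abs(t) >= 1.0:
        return 0.0
    return math.exp(-1.0 / (1.0 - t * t))


def attenuation(k, eps, n=2000):
    """Amplitude factor applied to cos(k x) by convolution with the width-eps bump kernel.

    Approximates the integral of phi_eps(y) * cos(k y) dy with a uniform sum over the support.
    """
    ts = [(2 * i - n) / n for i in range(n + 1)]  # t = y / eps in [-1, 1]
    weights = [bump(t) for t in ts]
    num = sum(w * math.cos(k * eps * t) for w, t in zip(weights, ts))
    return num / sum(weights)


k = 10.0  # assumed spatial frequency of the true signal
for eps in (0.05, 0.2, 0.5, 2.0):
    print(f"eps = {eps:4.2f}  amplitude kept = {attenuation(k, eps):+.3f}")
```

A kernel much narrower than the signal's wavelength leaves the amplitude almost untouched, while a kernel spanning several wavelengths removes the component almost entirely. That is the failure mode behind the warning about poorly chosen radii.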

Strengths clearly outweigh early limitations. Nevertheless, deployment teams must tune kernels responsibly before embracing production pipelines.

Practical Roadmap For Adoption

Engineers can test the layer by replacing recursive autodiff blocks with analytic convolutions. Meanwhile, benchmarking identical architectures will reveal resource savings quickly. Researchers seeking formal credentials can deepen expertise through the AI Researcher™ certification, which covers advanced physics-informed methods.
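A side-by-side benchmark only needs a harness that records wall-clock time and peak memory for each variant. The sketch below is a generic, standard-library version under clear assumptions: `profile`, `retain_all`, and `stream` are hypothetical names, the two workloads are placeholders rather than PINN training steps, and `tracemalloc` tracks Python heap allocations only, so GPU memory would have to be read from the training framework's own counters.

```python
import time
import tracemalloc


def profile(fn, *args):
    """Return (result, seconds, peak_bytes) for one call of fn(*args)."""
    tracemalloc.start()
    start = time.perf_counter()
    result = fn(*args)
    seconds = time.perf_counter() - start
    _, peak = tracemalloc.get_traced_memory()  # (current, peak) in bytes
    tracemalloc.stop()
    return result, seconds, peak


def retain_all(n):
    """Placeholder workload that keeps every intermediate buffer alive."""
    buffers = [[float(i)] * 1000 for i in range(n)]
    return sum(b[0] for b in buffers)


def stream(n):
    """Placeholder workload with the same result that holds one buffer at a time."""
    total = 0.0
    for i in range(n):
        buf = [float(i)] * 1000
        total += buf[0]
    return total


out_retain, t_retain, peak_retain = profile(retain_all, 200)
out_stream, t_stream, peak_stream = profile(stream, 200)

print(f"retain_all: {t_retain:.4f} s, peak {peak_retain / 1e6:.2f} MB")
print(f"stream:     {t_stream:.4f} s, peak {peak_stream / 1e6:.2f} MB")
```

In a real comparison the two callables would be one training step of the baseline model and one of the mollifier-enhanced model, run on identical data and repeated enough times to average out timing noise.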

Moreover, UPenn plans an open-source release, easing community uptake. Consequently, we expect Mollifier support to appear in popular PINN toolkits by year-end.

Implementation guidance now seems straightforward. Even so, strategic foresight still matters for business planners.

Broader Market Impact Outlook

Enterprise labs increasingly bet on Scientific AI for simulation acceleration. Faster, leaner training widens feasibility on shared clusters, lowering cost barriers. Furthermore, the approach complements operator-learning models, offering a hybrid path.

Industry analysts predict ripple effects across drug discovery, battery design, and geothermal forecasting. Additionally, regulators auditing model safety may favor transparent analytic derivatives. Therefore, Mollifier Layers might become a compliance asset as well.

The commercial upside appears sizeable. Consequently, stakeholders should monitor ongoing code releases and conference demonstrations.

These projections frame strategic opportunities. However, continual community validation will determine lasting significance.

Conclusion

UPenn’s Mollifier Layers bring analytic convolution into the machine-learning mainstream. The module slashes memory, accelerates training, and boosts derivative accuracy across benchmarks and real data. Moreover, stability gains open doors to noisy, high-order PDEs that once resisted learning.

Nevertheless, kernel tuning and boundary handling need refinement before universal adoption. Engineers, researchers, and executives should trial the method and pursue credible training paths. Therefore, explore the linked certification to stay ahead and transform your next Scientific AI project.
