AI CERTS

2 days ago

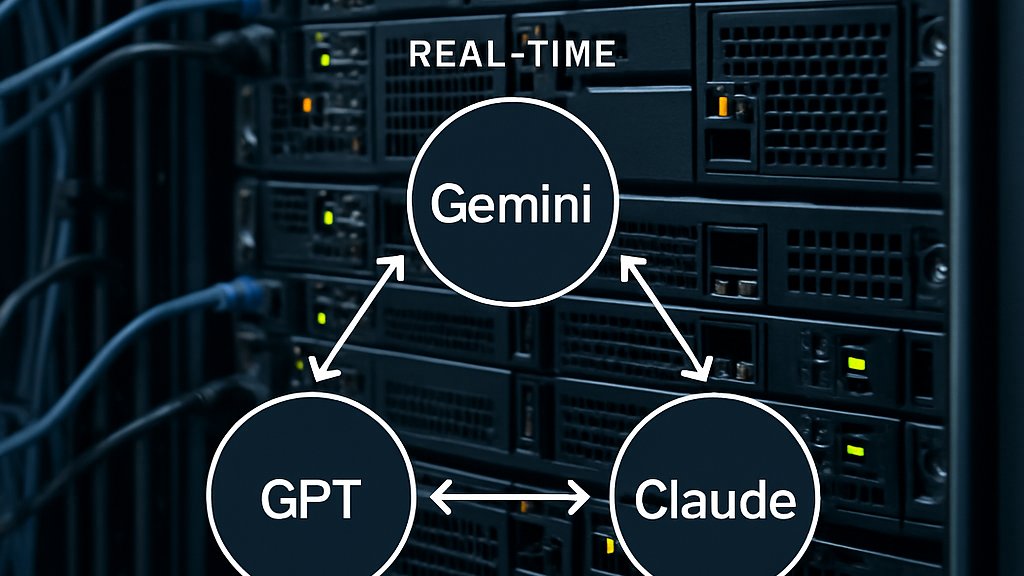

Sakana’s RL Model Orchestration Boosts Multi-LLM Performance

Sakana's reinforcement-learning (RL) routing scheme lifts benchmark accuracy and, on certain benchmarks, cuts token consumption roughly sixfold. As a result, enterprise architects see an emerging orchestration layer above individual models. However, questions linger about governance, vendor lock-in, and reproducibility. This article dissects the research, product, and market implications for technical leaders, and maps future work and certification opportunities for practitioners. Read on for a concise, data-driven overview.

Why Routing Matters Now

Sakana positions its Conductor as a project manager for disparate language models. Traditionally, teams relied on static pipelines or on a single large model for every request. Yet varied tasks benefit from the specialized strengths of different models. For example, GPT-5 excels at planning, while Claude Sonnet often writes cleaner Python.

However, orchestrating multiple vendors by hand produces brittle code and rising latency. Sakana therefore trained a small agent to learn routing decisions directly from end-to-end rewards. The reinforcement-learning setup favors concise workflows that minimize expensive calls, so downstream systems can scale without exploding budgets.

Learned routing reframes performance, cost, and flexibility as a single optimization problem. That framing sets the stage for the technical details ahead.

Inside Sakana's Conductor

The Conductor is built on Qwen2.5-7B and has only seven billion parameters. It writes natural-language instructions that specify which worker to call and what context to share with it. Each instruction follows a simple schema: model_id, visibility_mask, and sub_prompt. The controller may also list itself as a worker, which enables recursive refinement. Importantly, the policy is trained with RL fine-tuning rather than supervised imitation.
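To make the three-field schema concrete, here is a minimal sketch of how such an instruction might be represented and applied. It assumes that visibility_mask is one boolean per earlier step, deciding which prior outputs the worker may see; Sakana's actual encoding may differ, and the `Instruction` class, the `build_context` helper, and the model IDs are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instruction:
    """One routing step: which worker to call, what it may see, and what to ask."""
    model_id: str                      # worker to call (may be the Conductor itself)
    visibility_mask: tuple[bool, ...]  # assumed: one flag per earlier step's output
    sub_prompt: str                    # natural-language task for the worker


def build_context(instr: Instruction, history: list[str]) -> str:
    """Join only the prior outputs the mask exposes, then append the sub-prompt."""
    if len(instr.visibility_mask) != len(history):
        raise ValueError("visibility_mask must have one entry per prior step")
    visible = [out for out, show in zip(history, instr.visibility_mask) if show]
    return "\n\n".join(visible + [instr.sub_prompt])


# Hypothetical workflow: a planning step, a coding step, then a self-review step.
history = ["Plan: parse input, sort, dedupe.", "def solve(xs): return sorted(set(xs))"]
review = Instruction(
    model_id="conductor",           # the controller listing itself as a worker
    visibility_mask=(False, True),  # hide the plan, show only the code
    sub_prompt="Review the code above for bugs.",
)
print(build_context(review, history))
```

Masking context per step keeps prompts short, which matters when every shared token counts against the reward.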

Training used proximal policy optimization (PPO) with a reward equal to task accuracy minus token cost, so the agent learns to pick cheaper models when they are good enough. Worker pools varied per episode to avoid overfitting to any one vendor. Sakana also released TRINITY, an evolutionary coordinator that explores discrete role assignments without gradient updates.
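The sketch below illustrates both ideas on a toy problem, under heavy assumptions. The reward has the "accuracy minus token cost" shape described above, with a made-up weight `LAMBDA`. Every worker name, accuracy figure, and token count is invented for illustration. The search is a plain truncation-selection evolutionary loop over role assignments: it shows how a gradient-free coordinator can work, but it is not TRINITY's actual algorithm. PPO training is not shown.

```python
import random

# Hypothetical per-role accuracy of each worker (illustrative numbers, not Sakana's data).
ACCURACY = {
    "plan":   {"big-planner": 0.95, "coder": 0.70, "small-open": 0.60},
    "code":   {"big-planner": 0.80, "coder": 0.92, "small-open": 0.55},
    "review": {"big-planner": 0.90, "coder": 0.85, "small-open": 0.80},
}
TOKENS = {"big-planner": 3_000, "coder": 1_200, "small-open": 300}  # tokens per call
WORKERS = list(TOKENS)
ROLES = list(ACCURACY)
LAMBDA = 0.00002  # assumed weight on token cost; raising it favors cheaper workers


def reward(assignment: dict[str, str]) -> float:
    """Mean accuracy minus LAMBDA times total tokens for a role -> worker mapping."""
    accuracy = sum(ACCURACY[r][w] for r, w in assignment.items()) / len(assignment)
    tokens = sum(TOKENS[w] for w in assignment.values())
    return accuracy - LAMBDA * tokens


def mutate(assignment: dict[str, str], rng: random.Random) -> dict[str, str]:
    """Reassign one randomly chosen role to a randomly chosen worker."""
    child = dict(assignment)
    child[rng.choice(ROLES)] = rng.choice(WORKERS)
    return child


def evolve(generations: int = 200, pop_size: int = 8, seed: int = 0) -> dict[str, str]:
    """Evolve role assignments by selection and mutation only; no gradients are used."""
    rng = random.Random(seed)
    pop = [{r: rng.choice(WORKERS) for r in ROLES} for _ in range(pop_size)]
    for _ in range(generations):
        pop.sort(key=reward, reverse=True)
        survivors = pop[: pop_size // 2]  # keep the fitter half unchanged
        pop = survivors + [mutate(rng.choice(survivors), rng) for _ in survivors]
    return max(pop, key=reward)


print(evolve())
```

With these invented numbers the highest-reward assignment uses the large planner for planning, the coder for coding, and the cheap open model for review: the cheap model's slightly lower review accuracy costs less than the extra tokens would. That is the "cheaper when acceptable" behavior the reward is designed to encourage.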

Both coordinators point to Model Orchestration, the policy-driven management of multiple agents, as an emerging research frontier. Next, benchmark numbers quantify the impact.

Benchmark Numbers Explained Clearly

Sakana evaluated the system on reasoning, coding, and math suites. With the Conductor, LiveCodeBench pass@1 rose from 78.4% to 83.9%. GPQA-Diamond improved by 5.4 absolute points to 87.5%. AIME math accuracy reached 76.2%, up from 71.0%.

VentureBeat reported an average score of 77.27% across diverse tasks. Token usage tells an even stronger story: baseline mixtures consumed about 11,203 tokens per answer, whereas the learned workflow used about 1,820, a reduction of roughly 84% (about sixfold). Because the RL reward explicitly penalized token waste, organizations can expect significant savings at scale.
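The savings arithmetic is easy to reproduce. The sketch below uses the two reported per-answer token counts and adds an assumed blended price of $10 per million tokens and an assumed volume of one million requests. Real invoices price input and output tokens differently, so treat the dollar figure as illustrative only.

```python
BASELINE_TOKENS = 11_203   # reported baseline mixture, tokens per answer
CONDUCTOR_TOKENS = 1_820   # reported learned workflow, tokens per answer


def token_savings(baseline: int, learned: int, price_per_million: float, requests: int):
    """Return (fractional reduction, ratio baseline/learned, dollars saved)."""
    reduction = 1 - learned / baseline
    ratio = baseline / learned
    saved = (baseline - learned) * requests * price_per_million / 1_000_000
    return reduction, ratio, saved


# Assumed: one blended price of $10 per million tokens, one million requests.
reduction, ratio, saved = token_savings(BASELINE_TOKENS, CONDUCTOR_TOKENS, 10.0, 1_000_000)
print(f"{reduction:.1%} fewer tokens, {ratio:.1f}x smaller, ${saved:,.0f} saved")
# prints "83.8% fewer tokens, 6.2x smaller, $93,830 saved"
```

A ratio of about 6.2 is large, but it falls short of a full order of magnitude, which would be a factor of ten.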

Key Metrics In Detail

- LiveCodeBench: 83.9% vs 78.4% single best

- GPQA-Diamond: 87.5% vs 82.1%

- AIME25: 93.3% reported peak

- Average token count: 1,820 vs 11,203 baseline

- TRINITY LiveCodeBench: 86.2% pass@1

These figures, as reported by Sakana, suggest that small coordinators can outperform much larger stand-alone models. At face value, Model Orchestration translates benchmark wins into tangible business metrics. The benefits become clearer when viewed through an enterprise lens, which we examine next.

Benefits For Enterprise Teams

Cost, latency, and quality drive most production decisions, and Model Orchestration can deliver wins on all three at once. First, fewer tokens directly lower API invoices. Second, parallel worker calls can shorten tail latency in composite workflows. Third, heterogeneous pools mix closed and open LLM endpoints, which reduces vendor dependence and enables graceful degradation during outages. For decision makers, Model Orchestration also simplifies vendor negotiations.
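Two of these latency and resilience points can be sketched with Python's standard `asyncio`. The sketch assumes independent subtasks can run concurrently, and that each closed endpoint has an open fallback. `call_worker` is a hypothetical stand-in that sleeps rather than calling a real API, and the endpoint names are invented.

```python
import asyncio


async def call_worker(name: str, delay: float, fail: bool = False) -> str:
    """Hypothetical stand-in for an LLM API call; a real client would go here."""
    await asyncio.sleep(delay)
    if fail:
        raise ConnectionError(f"{name} unavailable")
    return f"{name}: ok"


async def call_with_fallback(primary, fallback, timeout: float) -> str:
    """Try the primary endpoint; on timeout or connection error, degrade to the fallback."""
    try:
        return await asyncio.wait_for(primary(), timeout)
    except (asyncio.TimeoutError, ConnectionError):
        return await fallback()


async def fan_out() -> list[str]:
    # Independent subtasks run concurrently, so wall-clock time tracks the slowest
    # one rather than the sum. The per-call timeout caps how long a stalled vendor
    # can delay the whole request.
    return await asyncio.gather(
        call_with_fallback(lambda: call_worker("closed-a", 0.01),
                           lambda: call_worker("open-a", 0.01), timeout=0.5),
        call_with_fallback(lambda: call_worker("closed-b", 0.01, fail=True),
                           lambda: call_worker("open-b", 0.01), timeout=0.5),
        call_with_fallback(lambda: call_worker("closed-c", 1.0),
                           lambda: call_worker("open-c", 0.01), timeout=0.05),
    )


print(asyncio.run(fan_out()))
# prints ['closed-a: ok', 'open-b: ok', 'open-c: ok']
```

Here the second closed endpoint fails outright and the third stalls past its timeout. Both requests still complete on open fallbacks instead of blocking the whole workflow.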

- Automatic vendor selection per request

- Configurable cost ceilings via reward shaping

- Transparent audit logs for each subcall

- Recursive self-review for high-risk outputs
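Reward shaping only nudges a trained policy toward cheaper behavior. Many teams will also want a hard ceiling enforced at runtime. The sketch below shows that complementary guard, not Sakana's mechanism. It assumes each subcall's token cost is known or estimated before the call is made. `TokenBudget` and `BudgetExceeded` are hypothetical names.

```python
class BudgetExceeded(Exception):
    """Raised when a subcall would push a request past its token ceiling."""


class TokenBudget:
    """Hard per-request token ceiling, checked before each subcall is made."""

    def __init__(self, ceiling: int):
        self.ceiling = ceiling
        self.used = 0

    def charge(self, model_id: str, tokens: int) -> None:
        projected = self.used + tokens
        if projected > self.ceiling:
            raise BudgetExceeded(f"{model_id} would push usage to {projected} > {self.ceiling}")
        self.used = projected


budget = TokenBudget(ceiling=2_000)
budget.charge("planner", 900)
budget.charge("coder", 800)
try:
    budget.charge("reviewer", 500)  # 2,200 > 2,000, so this subcall is refused
except BudgetExceeded as err:
    print("blocked:", err)
print(budget.used)  # 1700
```

A refused subcall could fall back to a cheaper worker or return the best answer so far. Which policy fits depends on the workload.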

Professionals can deepen relevant skills through the AI Developer™ certification, giving teams the internal expertise to integrate orchestrators responsibly. Enterprises thus obtain measurable savings without sacrificing accuracy. However, new risks emerge and deserve equal attention.

Risks And Open Questions

Autonomous routing complicates governance because subcalls may target closed models. Auditability mechanisms must therefore surface complete call graphs to compliance officers. Sakana says its Fugu product logs every interaction, yet independent reviews remain limited. Reproducibility also suffers when evaluations depend on proprietary endpoints, and poorly governed Model Orchestration could obscure data flows.
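A complete call graph only needs each subcall to record its parent. Given that, reviewers can rebuild the tree of who called whom, including recursive self-review. This is a minimal sketch, assuming hypothetical field names. It does not reproduce Fugu's actual log format. It emits JSON Lines because many log pipelines ingest that format.

```python
import json
from typing import Optional


class CallGraphLog:
    """Append-only record of subcalls; parent links let auditors rebuild the graph."""

    def __init__(self) -> None:
        self.records: list[dict] = []

    def record(self, model_id: str, parent: Optional[int], tokens: int, vendor: str) -> int:
        call_id = len(self.records) + 1
        self.records.append({"id": call_id, "parent": parent, "model_id": model_id,
                             "vendor": vendor, "tokens": tokens})
        return call_id

    def children(self, call_id: int) -> list[int]:
        """IDs of subcalls made directly by the given call."""
        return [r["id"] for r in self.records if r["parent"] == call_id]

    def to_jsonl(self) -> str:
        """One JSON object per line, ready to forward to a log pipeline."""
        return "\n".join(json.dumps(r, sort_keys=True) for r in self.records)


log = CallGraphLog()
root = log.record("conductor", None, 120, "self-hosted")
log.record("planner-model", root, 900, "vendor-a")
code = log.record("coder-model", root, 700, "vendor-b")
log.record("conductor", code, 150, "self-hosted")  # recursive self-review of the code
print(log.children(root))  # [2, 3]
print(log.to_jsonl())
```

Recording the vendor on every line lets compliance staff see at a glance which external providers touched a given request.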

Security teams also worry about the broader attack surface that dynamic composition creates, and data may traverse multiple jurisdictions, complicating residency policies. Nevertheless, Sakana promises configurable guardrails and per-vendor encryption.
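One simple guardrail filters the worker pool before the router sees it. That way, even a learned policy cannot send data to a non-compliant endpoint. This sketch assumes each endpoint carries region and encryption metadata. The `Endpoint` fields, the `allowed_pool` helper, and the endpoint names are hypothetical, not Sakana's configuration options.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    model_id: str
    region: str      # assumed metadata: where requests are processed
    encrypted: bool  # assumed metadata: whether per-vendor encryption is enabled


def allowed_pool(pool: list[Endpoint], allowed_regions: set[str],
                 require_encryption: bool = True) -> list[Endpoint]:
    """Keep only endpoints inside permitted jurisdictions (and encrypted, if required)."""
    return [
        e for e in pool
        if e.region in allowed_regions and (e.encrypted or not require_encryption)
    ]


POOL = [
    Endpoint("closed-a", "us", True),
    Endpoint("closed-b", "eu", True),
    Endpoint("open-c", "eu", False),
]
print([e.model_id for e in allowed_pool(POOL, {"eu"})])  # ['closed-b']
```

Filtering up front is simpler to audit than checking each routing decision afterward. The cost is a smaller pool, which may reduce the accuracy and price gains the router can find.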

Balancing performance and oversight will shape adoption curves. The competitive context further influences those curves, as the next section shows.

Competitive Landscape Snapshot Today

Several startups and open projects pursue similar goals. Microsoft's AutoGen, LMSYS's RouteLLM, and various Mixture-of-Agents frameworks illustrate growing interest. However, few offerings combine peer-reviewed results with a commercial API. As a result, enterprise buyers tend to perceive Sakana as a first mover.

Competitive pressure will likely push incumbents to adopt learned routing quickly, and competitors are already testing evolutionary search and pure RL approaches. Meanwhile, open communities experiment with smaller coordinators run through llamafile or built on Mistral models, and many view Model Orchestration as a democratizing force. We therefore expect rapid iteration in both research and product spheres.

Market momentum underscores the importance of practical guidance. The final section outlines concrete steps for interested teams.

Practical Next Steps Forward

Start by piloting Fugu Mini against an internal baseline, measuring accuracy, latency, and token costs across representative workloads. Then adjust reward weights to match budget constraints. In parallel, integrate audit logging into existing SIEM pipelines to track subcalls. An internal hackathon can quickly give staff hands-on exposure to Model Orchestration.
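A pilot comparison needs little more than a shared harness that runs both systems over the same workload. The sketch below assumes each system is a callable that returns an answer and a token count. `baseline` and `orchestrated` are hypothetical stubs with invented numbers, to be replaced with real clients. Exact-match scoring is a simplifying assumption.

```python
import statistics
import time
from typing import Callable

ANSWERS = {"2+2": "4", "3*3": "9", "10-7": "3"}
WORKLOAD = list(ANSWERS.items())  # (prompt, expected answer) pairs


def baseline(prompt: str) -> tuple[str, int]:
    """Hypothetical stand-in for the current production setup."""
    return ANSWERS[prompt], 1_200


def orchestrated(prompt: str) -> tuple[str, int]:
    """Hypothetical stand-in for the orchestrator under test; misses one item."""
    return ("0" if prompt == "10-7" else ANSWERS[prompt]), 300


def evaluate(system: Callable[[str], tuple[str, int]],
             workload: list[tuple[str, str]]) -> dict:
    """Report exact-match accuracy, median latency, and mean tokens per request."""
    correct, latencies, tokens = 0, [], []
    for prompt, expected in workload:
        start = time.perf_counter()
        answer, used = system(prompt)
        latencies.append(time.perf_counter() - start)
        tokens.append(used)
        correct += answer == expected
    return {
        "accuracy": correct / len(workload),
        "p50_latency_s": statistics.median(latencies),
        "mean_tokens": statistics.fmean(tokens),
    }


for name, system in [("baseline", baseline), ("orchestrated", orchestrated)]:
    print(name, evaluate(system, WORKLOAD))
```

Comparing accuracy lost against tokens saved on real workloads gives a concrete basis for choosing how heavily the reward should weight cost.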

Engage security teams early to review data residency and encryption settings, and negotiate vendor SLAs that cover sudden model deprecations. Where domain-specific metrics matter, fine-grained RL retraining can align routing policies with them. Professionals seeking deeper competence can pursue the AI Developer™ credential, whose certification projects require building and evaluating orchestration pipelines and so reinforce practical skills.

These steps convert research insights into measurable business value. Consequently, early movers can secure an operational advantage.

Sakana's Conductor and TRINITY show that small policy agents can master complex multi-model environments, and the reported benchmarks reveal compelling accuracy and cost curves. Nevertheless, governance and security demands require equal innovation, so practitioners should pilot carefully, log exhaustively, and negotiate robust SLAs. Model Orchestration will likely emerge as a standard layer in enterprise stacks, and certifications can accelerate internal readiness and strengthen hiring signals. Act today and explore orchestrated architectures before competitors seize the advantage.

Disclaimer: Some content may be AI-generated or assisted and is provided ‘as is’ for informational purposes only, without warranties of accuracy or completeness, and does not imply endorsement or affiliation.