AI CERTS

6 days ago

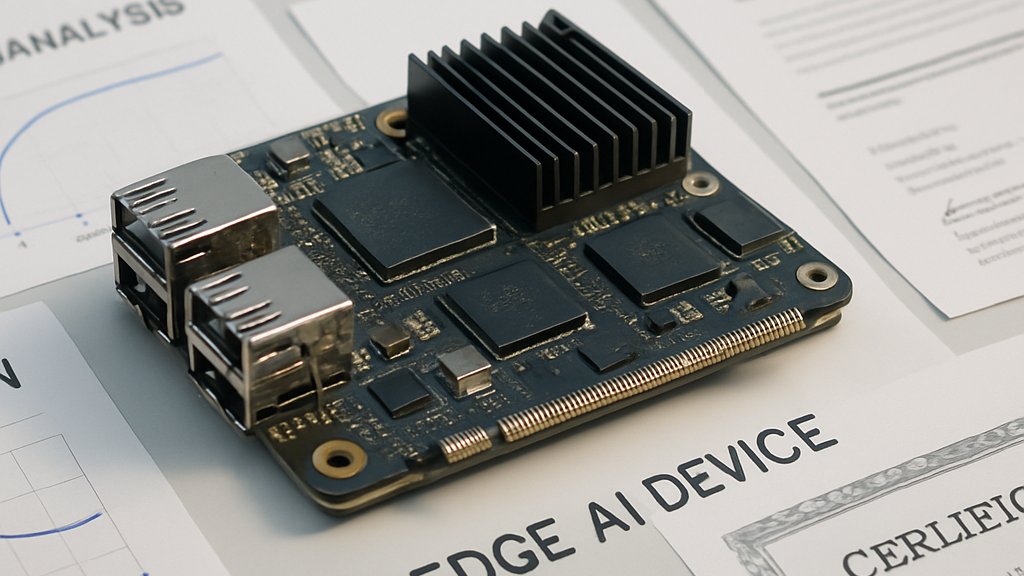

Nota and NPU Partners Expand Edge AI Commercialization

Understanding the strategic logic behind these partnerships requires unpacking partner motives, technical promises, and commercial risks. This article breaks down that puzzle for engineering managers and corporate strategists, and it highlights certification pathways that future-proof professional skill sets. Every insight connects directly to published press releases and analyst numbers, so decision makers can separate marketing rhetoric from measurable impact.

Partnership Momentum Builds Fast

Nota first signaled intent with a memorandum signed at Computex 2025 alongside DEEPX. Subsequently, formal contracts followed with FuriosaAI, LG AI Research, SiMa.ai, and most recently Mobilint. Each agreement licenses NetsPresso and commits the partners to joint NPU performance validation while exploring distribution of the packaged Nota Vision Agent. Consequently, software revenue scales alongside hardware volume instead of depending on discrete seat licenses.

Mobilint stands out because the two firms will co-target traffic, surveillance, and smart-city video workloads. In contrast, FuriosaAI focuses on high-throughput data-center inference using its Renegade card. Meanwhile, SiMa.ai positions the stack for robotics and other physical systems needing tight latency budgets. Collectively, the deals show Nota chasing horizontal reach rather than exclusive silicon allegiance. Industry analysts describe the approach as "pick-and-shovel" for Edge AI gold seekers. Nevertheless, delivery risk grows with every added toolchain.

These alliances illustrate aggressive scaling of partnerships. However, technical integration depth determines ultimate revenue realization. Next, examine the market conditions driving such urgency.

Market Drivers And Challenges

Edge inference spending continues climbing at double-digit CAGR across industrial, automotive, and public safety sectors. Mordor Intelligence pegs edge hardware at roughly USD 30.7 billion for 2026. Moreover, several reports forecast mid-teens growth for specialized NPU shipments through 2034. Therefore, vendors cannot rely on raw compute alone; software differentiation becomes essential.

Model Optimization sits at the center because compressed networks unlock deployment on power-limited devices. Nota claims NetsPresso shrinks weights by up to 90 percent without accuracy loss for many tasks. Nevertheless, experts request independent benchmarks before accepting those numbers. Customers also weigh privacy, latency, and total cost savings versus fully cloud approaches.

- Regulatory push for on-prem data residency

- Escalating GPU shortages in cloud regions

- Rising energy prices pressuring TCO models

- Demand for real-time computer vision analytics

Consequently, integrators view Edge AI as insurance against volatile cloud economics. However, fragmentation across toolchains remains a barrier.

These forces explain the rush toward integrated stacks. The following section dissects the technology choices enabling that integration.

Technology Stack Explained Clearly

An NPU executes neural math using highly parallel, low-precision compute units. Meanwhile, NetsPresso provides automated Model Optimization through pruning, quantization, and distillation workflows. Furthermore, the platform analyzes operator graphs, then generates Edge AI runtime code optimized for each device. Outputs travel through partner SDKs such as FuriosaAI’s compiler or SiMa.ai’s Palette.
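To make two of those techniques concrete, here is a minimal sketch of magnitude pruning followed by symmetric int8 quantization on a toy weight list. It uses generic textbook formulations and is not NetsPresso's API or algorithm; every function name and the sample weights are hypothetical, and distillation is left out because it requires a training loop.

```python
# Illustrative only: generic magnitude pruning and symmetric int8 quantization.
# NOT NetsPresso's API or method; all names and values are hypothetical.

def magnitude_prune(weights, sparsity):
    """Zero out the smallest-magnitude weights (a `sparsity` fraction of them)."""
    k = int(len(weights) * sparsity)  # number of weights to drop
    if k == 0:
        return list(weights)
    # Indices ordered by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def quantize_int8(weights):
    """Symmetric per-tensor quantization to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map int8 codes back to approximate float values."""
    return [v * scale for v in q]


weights = [0.82, -0.05, 0.31, 0.02, -0.64, 0.11, -0.01, 0.47]  # toy values
pruned = magnitude_prune(weights, sparsity=0.5)
q, scale = quantize_int8(pruned)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(pruned, restored))

print("pruned:   ", pruned)
print("int8 code:", q)
print("max round-trip error:", max_error)
```

In this toy case half the weights become zero and the rest fit in one byte each instead of four. Real toolchains apply such steps per layer and then re-measure accuracy, which is why the accuracy delta on the buyer's own dataset matters.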

Consider Renesas RZ/V2H boards running a crowd-counting model optimized by Nota. The detection variant reaches 43.1 FPS while consuming modest memory. Heatmap generation sustains 24.4 FPS on the same silicon while counting up to 5,000 people. These reported figures suggest that compression translates into field performance, although no uncompressed baseline is quoted here for comparison.
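For readers who think in latency rather than throughput, the quoted frame rates convert to an average per-frame time with simple arithmetic. The sketch below assumes frames are processed one at a time; with pipelining or batching, end-to-end latency can be higher than this figure. The function name is illustrative.

```python
def frame_time_ms(fps):
    """Average time per frame in milliseconds for a given throughput."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


# Throughput figures quoted in the article for the Renesas RZ/V2H example.
reported_fps = {"detection": 43.1, "heatmap": 24.4}
frame_times = {name: frame_time_ms(fps) for name, fps in reported_fps.items()}

for name, ms in frame_times.items():
    print(f"{name}: {ms:.1f} ms per frame")
```

That works out to roughly 23.2 ms per frame for detection and 41.0 ms for heatmap generation.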

Professionals can enhance their expertise with the AI Network Security™ certification. Moreover, certified teams often navigate hardware-software co-design hurdles more smoothly.

This stack shows how optimization and silicon cooperation deliver measurable speedups. Next, we explore competitive dynamics shaping partner selection.

Competitive Landscape In Flux

The market already features heavyweight stacks from NVIDIA, Qualcomm, and open-source consortiums. In contrast, Nota pursues an "agnostic glue" model that dodges vendor lock-in. Nevertheless, GPU incumbents bundle mature compilers and extensive Edge AI ecosystem tools. Consequently, Nota must prove that paired Model Optimization lowers cost per inference.

Geography adds another lens. Most announced partners operate from Korea or the United States, reflecting concentrated silicon innovation. FuriosaAI, Mobilint, and LG AI Research strengthen Seoul’s claim as an accelerator hotbed. Meanwhile, SiMa.ai brings Silicon Valley reach and robotics expertise. Analysts expect Chinese entrants to seek similar alliances once export controls loosen.

Competitive pressures will intensify across regions. However, partner diversity remains Nota’s primary hedge.

The next section details customer decision factors.

Customer Implications And Actions

Enterprise architects evaluating integrated stacks face three recurring questions. Firstly, what accuracy delta accompanies Model Optimization on their workload and dataset? Secondly, how mature is the combined toolchain regarding documentation, debugging, and CI/CD hooks? Thirdly, what are the licensing terms once pilots graduate to production?

- Accuracy drop at fixed latency

- Energy per inference under real traffic

- Deployment portability across accelerators

- Vendor support service-level agreements
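The first two checklist items can be turned into a small comparison script for pilot results. This is a sketch under stated assumptions: the `PilotResult` fields, the candidate names, and every number are hypothetical placeholders, not measurements of any vendor's product.

```python
from dataclasses import dataclass


@dataclass
class PilotResult:
    name: str
    accuracy: float        # task metric on the buyer's own dataset, 0..1
    p95_latency_ms: float  # measured under representative traffic
    avg_power_w: float     # average board power during the run
    inferences: int        # inferences completed during the run
    duration_s: float      # wall-clock length of the run

    def energy_per_inference_j(self):
        # Energy (J) = power (W) x time (s), divided by the work done.
        return self.avg_power_w * self.duration_s / self.inferences


def best_within_budget(baseline_accuracy, results, latency_budget_ms):
    """Among candidates meeting the latency budget, pick the smallest accuracy drop."""
    eligible = [r for r in results if r.p95_latency_ms <= latency_budget_ms]
    if not eligible:
        return None
    return min(eligible, key=lambda r: baseline_accuracy - r.accuracy)


# Hypothetical pilot numbers for illustration only.
results = [
    PilotResult("stack_a", 0.91, 38.0, 8.0, 12000, 600.0),
    PilotResult("stack_b", 0.93, 61.0, 6.5, 9000, 600.0),
    PilotResult("stack_c", 0.89, 22.0, 5.0, 15000, 600.0),
]
choice = best_within_budget(0.94, results, latency_budget_ms=50.0)
print(choice.name, f"{choice.energy_per_inference_j():.2f} J per inference")
```

Here `stack_b` is the most accurate candidate but misses the latency budget, so the comparison falls to the remaining two. Fixing latency first and then ranking by accuracy drop keeps vendors from trading one metric against the other.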

Moreover, vertical use cases such as intelligent transportation demand rigorous safety certifications. Mobilint already markets its MLA400 boards toward those deployments, enabling deterministic video analytics. Consequently, operators in Korea’s smart-city pilots represent attractive lighthouse customers. Edge AI promises latency below 50 milliseconds, crucial for incident detection.
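A latency target such as 50 milliseconds is usually checked against a tail percentile rather than an average, since incident detection cares about the slowest frames. The sketch below uses the nearest-rank percentile method; the sample latencies are hypothetical and the helper name is illustrative.

```python
import math


def percentile_nearest_rank(samples, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


# Hypothetical per-frame latencies in milliseconds from a pilot run.
latencies_ms = [31, 29, 35, 42, 33, 30, 48, 36, 34, 55]
budget_ms = 50

p50 = percentile_nearest_rank(latencies_ms, 50)
p95 = percentile_nearest_rank(latencies_ms, 95)
meets_budget = p95 <= budget_ms

print(f"p50={p50} ms, p95={p95} ms, meets {budget_ms} ms budget: {meets_budget}")
```

In this made-up run the median sits comfortably under the budget while the 95th percentile does not, which is the kind of gap a pilot should surface before production.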

These evaluation steps reduce post-deployment surprises. Next, we project the roadmap for 2026 and beyond.

Strategic Outlook For 2026

Nota intends to deepen joint go-to-market motions with every listed partner. Subsequently, NetsPresso updates will target large language models following the LG collaboration. Moreover, rumors suggest additional agreements with European automotive suppliers. Edge AI inference inside vehicles aligns well with compressed transformer models.

Independent benchmarking will likely become the decisive credibility driver. Therefore, watchers should look for MLPerf or academic conference papers detailing reproducible results. Meanwhile, Mobilint plans domestic manufacturing expansions to secure supply resilience. Korea offers tax incentives for such facilities, further concentrating the ecosystem.

Consequently, the coming year could validate Nota’s agnostic platform thesis. However, competition will react quickly if early pilots demonstrate significant cost savings.

Nota’s multi-partner campaign highlights growing demand for practical Edge AI outside hyperscale facilities. Moreover, NetsPresso’s compression pipeline could offer a clear productivity boost for hardware teams if its claims hold up under independent testing. FuriosaAI, Mobilint, and SiMa.ai now race to showcase quantifiable customer wins. Consequently, independent metrics will determine whether compressed models truly unlock mainstream Edge AI projects.

Korea could emerge as a showcase region if smart-city pilots meet ambitious performance targets. Nevertheless, buyers must scrutinize toolchain maturity, licensing clauses, and support guarantees. Professionals seeking an advantage should pursue the AI Network Security™ certification mentioned earlier and deepen hardware-software fluency. Explore the resources, run your benchmarks, and accelerate your next Edge AI rollout today.

Disclaimer: Some content may be AI-generated or assisted and is provided ‘as is’ for informational purposes only, without warranties of accuracy or completeness, and does not imply endorsement or affiliation.