AI CERTS

2 months ago

Anthropic Debuts Code Review With AI Coding Agents

Anthropic has launched Code Review, a Claude Code feature that dispatches teams of AI Coding Agents to review pull requests. Questions about accuracy, cost, and security followed immediately. This article dissects Anthropic’s release, examines early metrics, and compares it with existing review solutions. It also outlines best practices for integrating the service into modern software development pipelines while controlling spend.

Anthropic Review Tool Debut

Anthropic announced Code Review in a detailed blog post and press interviews. The feature is available as a research preview for Claude Team and Enterprise customers. Availability is limited, but interested teams can request activation in the Claude dashboard.

Unlike single-pass linters, the system spawns a squad of AI Coding Agents that specialize in security, logic, and performance. Anthropic positions the release as a higher-signal alternative to its existing open-source GitHub Action.

Access remains gated, but understanding the multi-agent process explains why the company stresses depth over speed.

Multi-Agent Process Explained

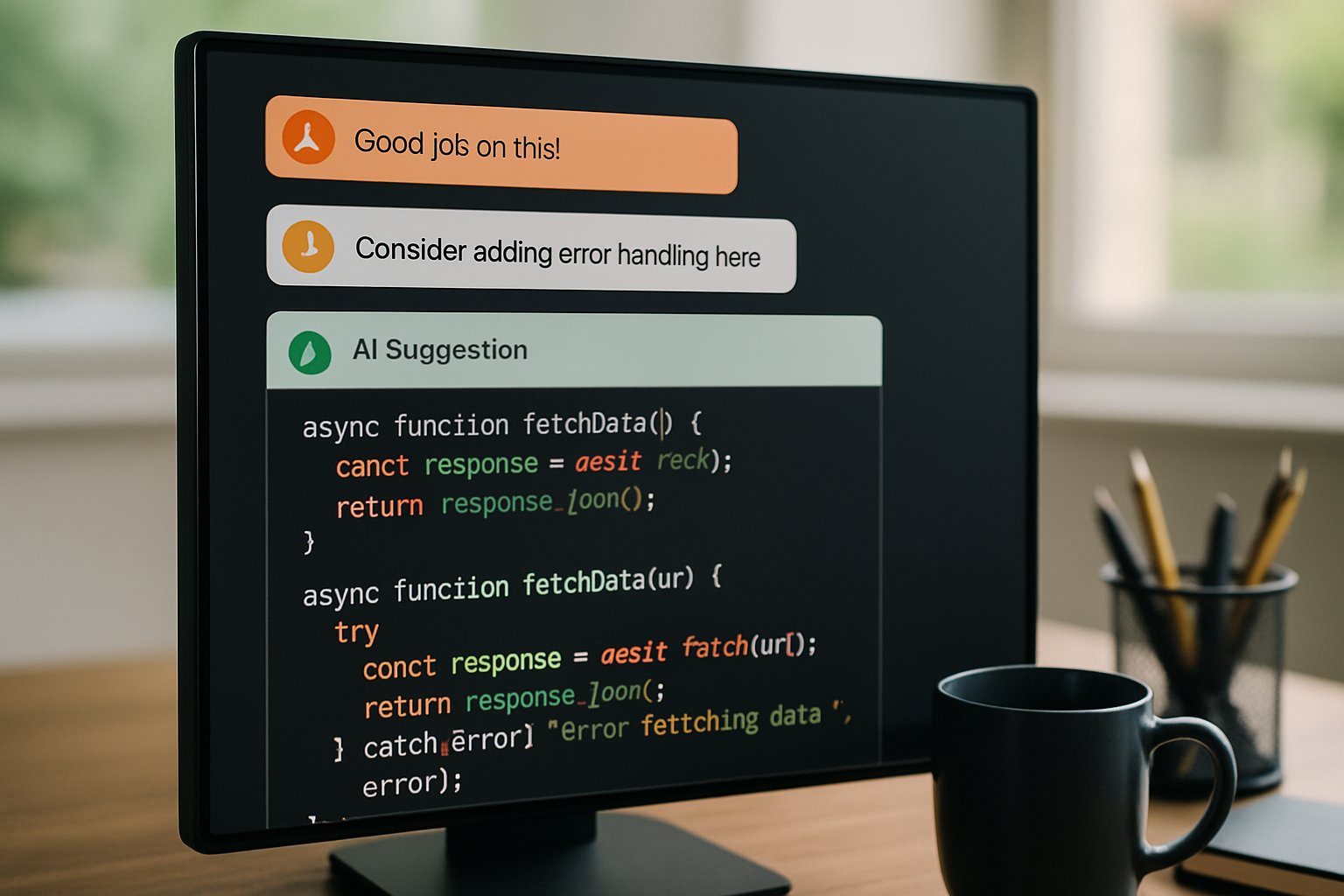

When a pull request is opened, Claude Code dispatches several coordinated reviewer agents, each examining the diff from a different angle.

One agent hunts security flaws, another validates business logic, and a third checks stylistic consistency. Additionally, a meta-agent ranks findings and filters false positives before posting a unified summary alongside inline comments.

As a result, engineers receive a concentrated set of findings instead of dozens of noisy line items. Anthropic claims this structure keeps the false-positive rate under one percent. Because the agents cross-check one another's findings, the design also aims to reduce the hallucinations that often plague single-pass LLM tools.
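To make the fan-out and fan-in pattern concrete, here is a minimal Python sketch. It is not Anthropic's implementation: the three reviewer functions are hypothetical stand-ins that use trivial string checks where the real product uses LLM agents, and the `Finding` type, the 1-3 severity scale, and the `min_confidence` threshold are illustrative assumptions. What it does show is the shape described above: specialised reviewers examine the same diff in parallel, and a meta step discards low-confidence findings, merges duplicates, and ranks the rest before anything is posted.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    line: int          # 1-based line number within the diff's added lines
    category: str      # which specialist produced it
    message: str
    severity: int      # hypothetical scale: 3 = serious, 1 = cosmetic
    confidence: float  # 0.0-1.0, how sure the reviewer is


# Hypothetical stand-ins for specialised LLM reviewers. Each inspects the
# added lines of a diff from one angle and returns its own findings.
def security_reviewer(lines):
    return [Finding(i, "security", "eval() on dynamic input", 3, 0.9)
            for i, text in enumerate(lines, 1) if "eval(" in text]


def logic_reviewer(lines):
    return [Finding(i, "logic", "comparison to None with ==", 2, 0.7)
            for i, text in enumerate(lines, 1) if "== None" in text]


def style_reviewer(lines):
    return [Finding(i, "style", "line longer than 100 characters", 1, 0.4)
            for i, text in enumerate(lines, 1) if len(text) > 100]


REVIEWERS = [security_reviewer, logic_reviewer, style_reviewer]


def meta_review(findings, min_confidence=0.5):
    """Drop low-confidence findings, keep one per (line, category), rank by severity."""
    best = {}
    for f in findings:
        if f.confidence < min_confidence:
            continue  # treated as a likely false positive
        key = (f.line, f.category)
        if key not in best or f.confidence > best[key].confidence:
            best[key] = f
    return sorted(best.values(), key=lambda f: (-f.severity, f.line))


def review_diff(added_lines):
    # Fan out: every reviewer examines the same diff concurrently.
    with ThreadPoolExecutor() as pool:
        batches = list(pool.map(lambda reviewer: reviewer(added_lines), REVIEWERS))
    # Fan in: the meta step merges and filters before anything is posted.
    return meta_review([f for batch in batches for f in batch])
```

Calling `review_diff(["x = eval(user_input)", "if x == None:"])` returns the security finding first and the logic finding second. A fixed confidence cutoff is only a crude proxy for the cross-checking Anthropic describes, in which agents challenge one another's findings before they are surfaced.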

Multi-agent orchestration underpins Code Review's promised precision, and Anthropic's early metrics show how that design performs in practice.

Performance Metrics Revealed

Anthropic shared internal numbers gathered from thousands of its own pull requests. For large pull requests exceeding 1,000 changed lines, agents surfaced findings in 84 percent of cases, averaging 7.5 issues each.

Small pull requests under 50 lines produced findings 31 percent of the time, averaging 0.5 issues. Engineers marked fewer than one percent of agent comments as incorrect, and a typical review completed within 20 minutes.

That signal density appeals to software development teams swamped by the volume of code that AI Coding Agents now submit.

- 200% rise in code output per Anthropic engineer over 12 months

- Share of pull requests receiving substantive review comments rose from 16% to 54% after deployment

- Average cost per review: $15–$25 based on token usage

These metrics suggest meaningful quality gains at the cost of some latency. The financial implications, however, deserve separate scrutiny.

Cost And Billing Details

Pricing is based on token consumption rather than a flat rate, so larger diffs produce larger bills.

Anthropic estimates that an average review costs between $15 and $25. Admin controls let organisations enable the feature per repository and set monthly spending caps.

Teams already using AI Coding Agents in continuous integration may process hundreds of pull requests a week, so charges add up quickly. Proponents counter that catching critical bugs early costs less than the outages those bugs would otherwise cause.
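Because billing tracks tokens, a rough projection helps size a spending cap before rollout. The Python sketch below is a back-of-the-envelope estimate, not Anthropic's billing formula: it assumes exactly one review per pull request and uses only the $15–$25 average range quoted above, since per-token rates are not given here. The function names and the three status labels are hypothetical.

```python
# Average per-review range from Anthropic's published estimate.
# Actual bills track token usage, so individual reviews can fall outside it.
LOW_COST_PER_REVIEW = 15.0
HIGH_COST_PER_REVIEW = 25.0


def monthly_spend_range(prs_per_week):
    """(low, high) projected monthly spend in dollars, assuming one review per PR."""
    reviews_per_month = prs_per_week * 52 / 12  # average weeks per month
    return (reviews_per_month * LOW_COST_PER_REVIEW,
            reviews_per_month * HIGH_COST_PER_REVIEW)


def cap_status(prs_per_week, monthly_cap):
    """Classify a monthly spending cap against the projected spend range."""
    low, high = monthly_spend_range(prs_per_week)
    if high <= monthly_cap:
        return "within cap"  # even the upper estimate fits
    if low <= monthly_cap:
        return "at risk"     # the cap sits inside the projected range
    return "over cap"        # even the lower estimate exceeds it
```

At 300 pull requests a week, for instance, the projection is about 1,300 reviews and $19,500 to $32,500 per month, so a $25,000 cap would sit inside the range and could be hit in a heavy month.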

Cost control hinges on disciplined adoption policies. Security considerations, in turn, influence whether leadership approves wide deployment.

Security And Risk Factors

February security disclosures exposed remote-execution vulnerabilities within earlier Claude Code builds. Anthropic patched those holes, yet cautious teams still demand strict sandboxing and supply-chain audits.

External researchers also point out that automated reviewers read proprietary code, which raises data-governance questions. These concerns extend beyond AI Coding Agents themselves to automation frameworks and LLM governance more broadly.

Anthropic does not let the agents merge code autonomously; human approvers remain the final gatekeepers.

Security diligence remains paramount despite promising accuracy results. Competitive dynamics also shape adoption timelines.

Competitive Market Outlook 2026

OpenAI, Google, and niche vendors already offer code analysis products. However, few deploy coordinated multi-agent verification at scale.

Industry analysts expect rivals to accelerate releases that bundle specialized AI Coding Agents for review duty within existing IDEs. Meanwhile, enterprises evaluating Copilot, Gemini, or Snyk must weigh depth, latency, and cost when selecting tooling.

Anthropic is aiming squarely at large organisations such as Uber and Salesforce that prioritise accuracy over raw throughput. That focus raises the pressure to prove real-world gains through independent benchmarks.

Competition will likely lower prices and improve model fidelity. Until then, careful implementation is critical for buyers.

Implementation Best Practices Guide

First, limit enablement to high-risk repositories before switching the feature on company-wide. Second, calibrate spending caps based on historical pull request sizes.
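The first two steps can be expressed as a simple gating policy. The Python sketch below is hypothetical throughout: the repository names, the 90th-percentile cutoff, and reserving $25 (the top of Anthropic's average range) per review are assumptions, and nothing here is a Claude or GitHub API. It derives a "large diff" threshold from historical pull request sizes with the standard library's `statistics.quantiles`, then decides whether a new pull request should be sent for automated review.

```python
from statistics import quantiles

# Hypothetical pilot scope: only repositories the team has tagged high-risk.
HIGH_RISK_REPOS = {"payments-service", "auth-gateway"}
# Budget reserved per review: top of the article's $15-$25 *average* range.
# Large diffs can cost more, which is why they get a manual check below.
RESERVED_COST_PER_REVIEW = 25.0


def large_diff_threshold(historic_sizes, percentile=90):
    """Lines-changed value at the given percentile (1-99) of past pull requests."""
    # quantiles(..., n=100) returns the 99 cut points for percentiles 1..99.
    return quantiles(historic_sizes, n=100)[percentile - 1]


def should_auto_review(repo, lines_changed, threshold, spent_this_month, monthly_cap):
    """Return (decision, reason) for one pull request under the pilot policy."""
    if repo not in HIGH_RISK_REPOS:
        return False, "outside pilot scope"
    if spent_this_month + RESERVED_COST_PER_REVIEW > monthly_cap:
        return False, "could exceed monthly cap"
    if lines_changed > threshold:
        # Cost scales with diff size, so outsized diffs need human sign-off first.
        return False, "unusually large diff; ask a human before spending"
    return True, "review"
```

Deriving the threshold from a team's own history, rather than a fixed line count, keeps the policy proportionate: a repository that routinely ships large refactors will not have every pull request held for approval.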

Third, build dashboards that correlate bugs caught by agents with incident metrics to demonstrate return on investment. Finally, train reviewers to triage agent comments efficiently so that parallel automation pipelines do not overload them.
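For the return-on-investment dashboard, one simple starting metric is the correlation between agent-caught bugs and production incidents over the same periods. The sketch below computes a Pearson coefficient in plain Python; the monthly series in the example are made-up placeholders, not data from Anthropic, and the function name is illustrative. A negative value is consistent with, but does not prove, a benefit: code volume, team changes, and release cadence can drive both series.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length, at least 2 points each")
    mean_x, mean_y = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("a constant series has no defined correlation")
    return cov / (spread_x * spread_y)


# Placeholder monthly figures for illustration only.
bugs_caught = [4, 7, 9, 12]  # agent findings fixed before merge
incidents = [6, 5, 3, 2]     # production incidents in the same months
print(round(pearson(bugs_caught, incidents), 2))
```

With only a few months of data the coefficient is noisy, so it works best as a prompt for investigating specific incidents rather than as standalone proof of return on investment.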

Professionals can enhance their expertise with the AI Developer™ certification.

These steps also suit teams already experimenting with AI Coding Agents for code generation, keeping generation and review workflows aligned.

Thoughtful rollout maximises benefit while minimising spend. In contrast, hasty adoption risks duplicated processes and budget surprises.

Final Thoughts

Anthropic’s Code Review illustrates how coordinated AI Coding Agents can harden software development without sacrificing much velocity. Early internal metrics point to few false positives and more substantive review feedback; whether that translates into fewer latent bugs in production remains to be measured.

Token costs, security posture, and integration complexity still need ongoing monitoring as LLM capabilities evolve. Leaders should therefore pilot the feature alongside existing automation scripts and measure defect reduction objectively.

Ready to deepen your mastery? Explore Anthropic’s documentation and consider credentials such as the AI Developer™ certification. Adopting AI Coding Agents responsibly now positions your team for future competitive advantage.

Disclaimer: Some content may be AI-generated or assisted and is provided ‘as is’ for informational purposes only, without warranties of accuracy or completeness, and does not imply endorsement or affiliation.