AI CERTS

2 months ago

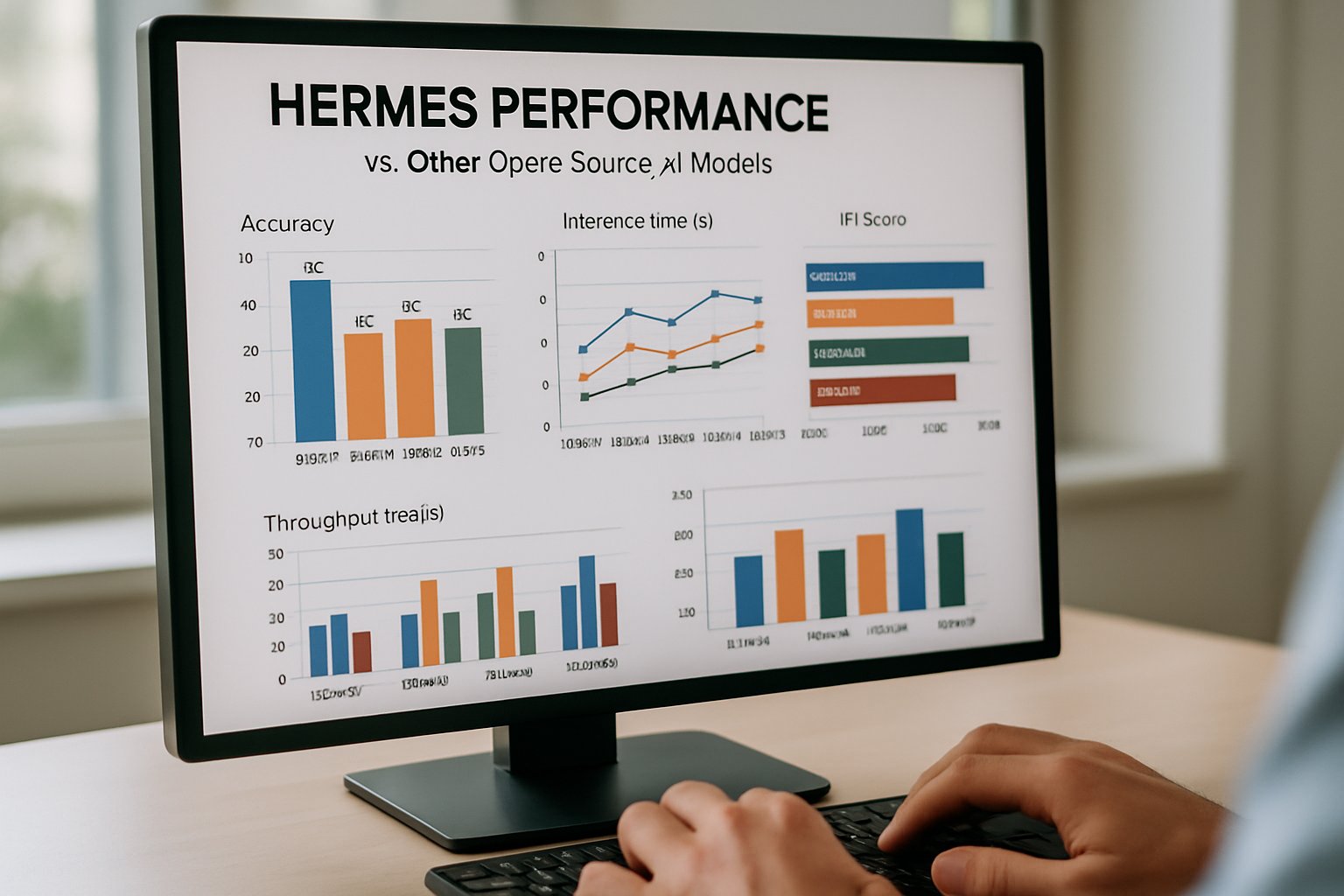

Hermes Performance Redefines Open AI Benchmarks

Origins Of Hermes Project

Nous Research introduced the Hermes 4 family in August 2025 and framed the release as a community milestone. The open-source lab stated that Hermes Performance would equal or exceed premium systems while granting users greater content freedom, in contrast to proprietary vendors that embed extensive refusal policies. The family spans multiple sizes, topping out at a 405 B parameter variant. Nous Research spent about 71,600 GPU hours on 192 NVIDIA B200 cards training the flagship model, a figure that illustrates the serious hardware behind the open promise. Ambition and transparency shaped early perception, but deeper evaluation requires hard numbers, which follow next.
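To put the training budget in perspective, the quoted GPU hours can be converted into rough wall-clock time. The sketch below is only back-of-the-envelope arithmetic: it assumes all 192 cards ran concurrently for the whole job with no downtime, which the article does not state, and the function name is illustrative.

```python
# Figures quoted in the article for the 405 B flagship model.
GPU_HOURS = 71_600
NUM_GPUS = 192


def wall_clock_days(gpu_hours: float, num_gpus: int) -> float:
    """Ideal wall-clock days, assuming every GPU is busy for the whole run."""
    hours_per_gpu = gpu_hours / num_gpus
    return hours_per_gpu / 24


hours_per_gpu = GPU_HOURS / NUM_GPUS
days = wall_clock_days(GPU_HOURS, NUM_GPUS)
print(f"{hours_per_gpu:.1f} hours per GPU, about {days:.1f} days")
```

Under that assumption the run works out to roughly 373 hours per card, or a little over two weeks of continuous training.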

These origin details make the project's goals clear, and the launch context sets up direct performance scrutiny.

Benchmarking Against Closed Giants

Independent analysts prioritize rigorous model comparison when judging new entrants. Nous Research accordingly evaluated Hermes 4 on common suites such as MATH-500, AIME, and MMLU. Sample scores show 93.8 % on MATH-500 for the 36 B Psyche build (Hermes 4.3), 1.7 points behind the 70 B baseline at 95.5 %. GPT-4o serves as the public yardstick, yet direct numbers differ across test harnesses. Hermes Performance appears competitive on several reasoning tasks while maintaining smaller parameter footprints, and industry observers note that such efficient scaling strengthens open-source adoption. Nevertheless, skeptics urge third-party replication before drawing firm conclusions.

- Hermes 4.3 36 B: 93.8 % MATH-500

- Hermes 4 70 B: 95.5 % MATH-500

- GPT-4o (publicly quoted): 96 %+ on selected math benchmarks

These figures suggest the gap is narrowing, but differences in methodology still complicate absolute ranking. Leaders must weigh context, not only raw percentages.

Decentralized Training Psyche Claims

Hermes 4.3 emerged from Psyche, a decentralized compute network, and Nous Research touts DisTrO coordination for gradient exchange across volunteer nodes. The team argues that Hermes Performance benefits from diverse hardware and lower capital barriers, whereas closed labs usually rely on centralized clusters. Psyche also seeks blockchain-backed audit trails for training steps. Such architecture supports open-source ideals but raises fresh operational questions. Even so, smaller firms could adopt similar federated pipelines to trim upfront expenses.

This look at infrastructure reveals innovation beyond model weights. Attention now shifts to how the model behaves during real interactions.

RefusalBench Results In Focus

Low refusal rates drive the headline claim of expanded content freedom. To quantify answers versus declines, Nous Research built RefusalBench. Hermes 4.3 answers 74.60 % of prompts in non-reasoning tests and 72.29 % in reasoning scenarios, whereas GPT-4o posts 17.67 % on the same chart and Claude Sonnet sits at 17.0 %. Hermes Performance maintains these rates while sustaining competitive reasoning accuracy. However, RefusalBench remains proprietary and peer validation is still limited, so enterprises should replicate the suite before adjusting moderation policies.
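For teams that want to run a comparable measurement on their own prompts, an answered-rate metric can be sketched as follows. This is a minimal illustration, not RefusalBench's actual method: the `Judged` record, its `refused` label (which would come from a human reviewer or a judge model), and the sample data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Judged:
    prompt_id: str
    refused: bool  # hypothetical label supplied by a reviewer or judge model


def answered_rate(results: list[Judged]) -> float:
    """Percentage of prompts the model answered rather than declined."""
    if not results:
        raise ValueError("no results to score")
    answered = sum(1 for r in results if not r.refused)
    return 100.0 * answered / len(results)


# Made-up sample: three answers and one refusal.
sample = [
    Judged("p1", refused=False),
    Judged("p2", refused=True),
    Judged("p3", refused=False),
    Judged("p4", refused=False),
]
rate = answered_rate(sample)
print(f"{rate:.2f} % answered")
```

How a response gets labeled as a refusal is the hard part, and different labeling rules can shift the resulting percentages considerably, which is one reason independent replication matters.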

Critics observe that addressing disallowed requests can amplify legal and reputational risk. Nevertheless, proponents argue that researchers gain richer datasets when obstacles vanish. These divergent views underline the balance between utility and safety. Consequently, robust governance frameworks become essential.

Safety And Misuse Debate

Increased answer rates trigger immediate safety scrutiny. Independent researchers caution that a model answering everything may reveal harmful instructions, while open-source advocates celebrate unfiltered exploration. Tommy Shaughnessy remarked that excessive guardrails “hurt innovation and usability.” Nevertheless, organizations remain accountable for downstream misuse.

Risk assessments must therefore include compliance checks, red-teaming, and continuous monitoring. Nous Research acknowledges these concerns and exposes internal reasoning inside <think> tags, so auditors can inspect chains of thought for problematic jumps. However, visible thoughts also expose sensitive reasoning that bad actors might exploit.
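An audit pipeline would first need to separate the visible reasoning from the final answer. The sketch below assumes the reasoning arrives as plain text wrapped in literal `<think>` and `</think>` tags inside the model output; the function name and the sample string are illustrative and not taken from Nous Research tooling.

```python
import re

# Non-greedy match so several separate <think> blocks are captured one by one.
THINK_RE = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_reasoning(output: str) -> tuple[list[str], str]:
    """Return (reasoning traces, final answer with the traces removed)."""
    traces = [t.strip() for t in THINK_RE.findall(output)]
    answer = THINK_RE.sub("", output).strip()
    return traces, answer


raw = "<think>\n2 + 2 is 4.\n</think>\nThe answer is 4."
traces, answer = split_reasoning(raw)
print(traces)
print(answer)
```

Keeping the traces separate lets a team log or review them for auditing while deciding independently whether end users should see them.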

These safety dilemmas cannot be ignored, and decision-makers must evaluate the trade-offs before full deployment.

Implications For Enterprise Adoption

Enterprises crave performant yet controllable language models. Hermes 4 is available in quantized GGUF builds that run on modest hardware, which reduces hosting bills and simplifies edge deployment. Open-source licensing fosters rapid customization, though Llama-derived terms may constrain some commercial uses. Multiple vendors (Chutes, Nebius, and Luminal) already host turnkey endpoints, and organizations can also self-host to retain data sovereignty. Professionals can enhance their expertise with the AI Developer™ certification. Certified teams can then manage fine-tuning and safeguard implementations more effectively.
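A rough way to judge whether a quantized build fits on given hardware is to estimate the memory taken by the weights alone. The sketch below is a simplification under stated assumptions: it ignores the KV cache, activations, and runtime overhead, and real GGUF quantization schemes typically use somewhat more than their nominal bits per weight. The function name is illustrative.

```python
def weight_memory_gib(params_billions: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GiB for a given parameter count and precision."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


# The 36 B size mentioned in the article, at three nominal precisions.
for bits in (16, 8, 4):
    print(f"36 B at {bits} bits: {weight_memory_gib(36, bits):.1f} GiB")
```

Moving from 16-bit to 4-bit weights cuts the estimate to about a quarter, which is the main reason quantized builds are attractive for self-hosting.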

These adoption pathways illustrate tangible value. However, strategic planning still demands holistic cost-benefit analysis.

Key Takeaways And Outlook

Hermes 4 shifts the competitive narrative through transparent design, strong reasoning, and radical content freedom. Nous Research shows that community-driven engineering can approach elite capability tiers. Nevertheless, open benchmarks, safety audits, and meticulous model comparison remain pending, so enterprises must validate Hermes Performance under their own workloads while enforcing robust governance. Meanwhile, decentralized training and visible reasoning point toward emerging best practices for trustworthy open-source AI.

The road ahead mixes opportunity with caution. Industry collaboration will determine whether open-weight titans like Hermes 4 mature into dependable production workhorses.

Hermes Performance continues to attract global attention. Furthermore, its trajectory will influence policy, procurement, and research norms. Leaders should follow upcoming benchmark replications, pursue relevant certifications, and join cross-industry safety initiatives.