AI CERTs

4 months ago

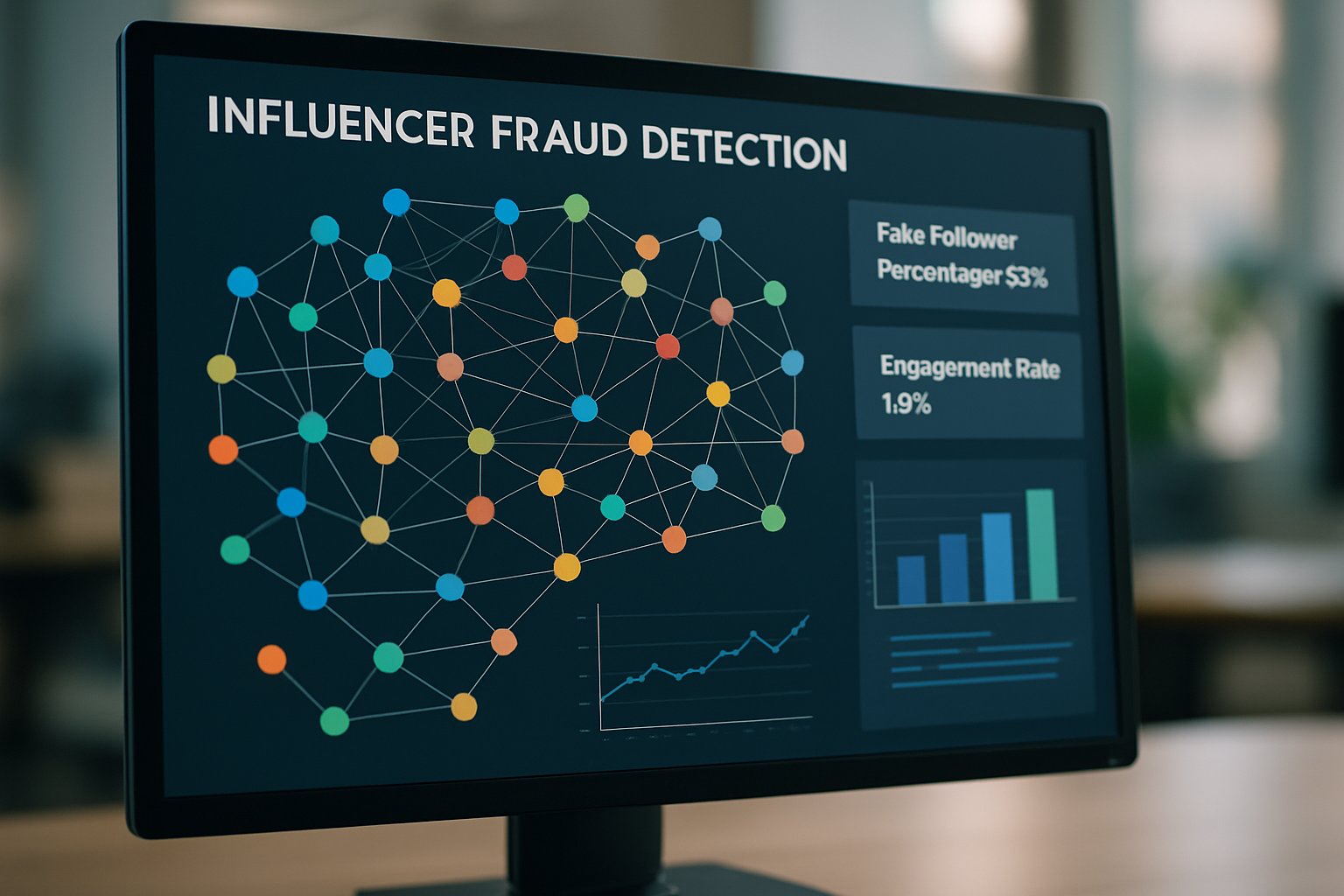

Influencer Fraud Detection Graphs Safeguard Brand Partnerships

AB InBev’s audit uncovered dormant audiences behind glossy engagement numbers.

Graph analysts showed one-third of paid creators barely mattered inside target communities.

Such surprises explain why brands now rely on Influencer Fraud Detection Graphs to vet partnerships.

These connected data models surface hidden rings of bots, impersonators, and engagement pods.

Consequently, marketing leaders see graph analytics as essential risk insurance in a market approaching $30 billion.

However, implementation demands technical rigor, clear workflows, and constant vigilance against adversarial adaptation.

Moreover, privacy limits and data silos complicate cross-platform analysis that graphs require.

This article unpacks core concepts, tools, limitations, and next steps for professionals responsible for brand safety.

Finally, it links resources and certifications that deepen practical knowledge.

Graphs Expose Fraud Rings

Fraud rarely manifests in isolated metrics.

Bots follow dozens of creators, comment together, and amplify each other’s reach within seconds.

In contrast, traditional spreadsheet filters ignore relational patterns, missing sophisticated coordination.

Graph databases model these relationships as nodes and edges, letting analysts traverse suspicious clusters in milliseconds.

Additionally, community-detection algorithms flag dense circles where accounts interact far more with each other than with outsiders.
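To make the "dense circle" idea concrete, the following minimal sketch scores a candidate group by the share of its interactions that stay inside the group. It does not run a community-detection algorithm; it only illustrates the kind of signal such algorithms look for. The account names, the interaction log, and the `internal_ratio` helper are all hypothetical.

```python
# Hypothetical interaction log: (source_account, target_account) pairs,
# for example one entry per comment or like. All names are illustrative.
interactions = [
    ("bot_a", "bot_b"), ("bot_b", "bot_c"), ("bot_c", "bot_a"),
    ("bot_a", "bot_c"), ("bot_b", "bot_a"), ("bot_c", "creator_x"),
    ("fan_1", "creator_x"), ("fan_2", "creator_x"), ("fan_1", "fan_2"),
]


def internal_ratio(group, edges):
    """Share of the group's interactions that stay inside the group."""
    members = set(group)
    internal = 0
    touching = 0
    for src, dst in edges:
        if src in members or dst in members:
            touching += 1
            if src in members and dst in members:
                internal += 1
    return internal / touching if touching else 0.0


suspect = ["bot_a", "bot_b", "bot_c"]
fans = ["fan_1", "fan_2"]

print(f"suspect group: {internal_ratio(suspect, interactions):.2f}")
print(f"fan group:     {internal_ratio(fans, interactions):.2f}")
```

In this toy data the suspect group keeps most of its activity internal, while the fans mostly interact with the creator. A real deployment would likely compute a comparable measure over clusters produced by a graph library or database.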

Influencer Fraud Detection Graphs pivot on this relational power, replacing blunt follower thresholds with contextual network scores.

Furthermore, graph visualizations give investigators clickable evidence that executives can understand during budget approvals.

Graphs transform scattered signals into coherent stories about fraud rings.

Therefore, brands gain tangible proof before rejecting risky creators, preventing wasted spend.

Market stakes are escalating, making loss prevention the next logical focus.

Market Stakes Are Rising

Industry surveys project the creator economy will reach up to $33 billion by 2025.

Yet reports cite fraud rates between 20% and 40%, draining more than a billion dollars yearly.

Moreover, brand safety teams note reputational fallout when fake audiences misalign with corporate values.

Neo4j claims graph deployments double fraud detection without raising false positives in select partner pilots.

However, those figures are vendor-reported rather than independently peer-reviewed.

- Up to 40% of influencers are flagged for suspicious activity, industry averages suggest.

- $1-2 billion in annual marketing spend is wasted on fake engagement, multiple reports estimate.

- One-third of AB InBev creators were deemed irrelevant after network analysis, Graphika reported.

Consequently, finance leaders now budget for Influencer Fraud Detection Graphs alongside traditional media audits.

The numbers show real money and reputation are at risk.

Therefore, the tooling conversation shifts from optional innovation to necessary protection.

Leading vendors illustrate how that protection works.

Leading Tools And Vendors

Neo4j and TigerGraph dominate enterprise graph infrastructure for fraud scenarios.

Additionally, social-specific platforms like HypeAuditor and CreatorIQ embed audience authenticity graphs for marketer use.

Graphika offers consultancy services that map influence dynamics and recommend healthier creator rosters.

Meanwhile, emerging providers such as PuppyGraph build integrations with Databricks to streamline large-scale analytics.

Each vendor couples dashboards with API access, easing integration into marketing workflows.

Integrating Influencer Fraud Detection Graphs into existing data lakes often needs only connector middleware and clear data contracts.

Furthermore, some vendors publish graph starter kits that visualize suspicious clusters within hours of data ingestion.

Similarly, marketplace startups bundle graphs with payment escrow to link outcomes to verified audiences.

Tool choice depends on budget, scale, and analyst expertise.

Moreover, compatibility with cloud stacks influences deployment timelines.

Understanding underlying methods clarifies those timeline expectations.

How Graph Methodologies Operate

Entity resolution links multiple social accounts back to single real-world identities using shared devices or payment methods.

Consequently, investigators see whole networks instead of isolated handles.
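One common way to implement this kind of linking is a union-find (disjoint-set) structure: any two accounts that share an identifier end up in the same cluster. The sketch below assumes hypothetical account handles and identifier strings; it is not taken from any vendor's implementation.

```python
from collections import defaultdict

# Hypothetical records: account handle -> identifiers observed for it.
accounts = {
    "@glam_daily": {"device:D1", "pay:P9"},
    "@glam_backup": {"device:D1"},
    "@style_hub": {"pay:P9"},
    "@real_chef": {"device:D7"},
}


def resolve_entities(records):
    """Group handles that share at least one identifier, transitively."""
    parent = {handle: handle for handle in records}

    def find(x):
        # Follow parent links to the cluster root, compressing the path.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    first_seen = {}  # identifier -> first handle observed with it
    for handle, identifiers in records.items():
        for ident in identifiers:
            if ident in first_seen:
                union(first_seen[ident], handle)
            else:
                first_seen[ident] = handle

    clusters = defaultdict(set)
    for handle in records:
        clusters[find(handle)].add(handle)
    return sorted((sorted(c) for c in clusters.values()), key=lambda c: c[0])


entities = resolve_entities(accounts)
for cluster in entities:
    print(cluster)
```

Here three handles collapse into one entity because one account shares a device with the second and a payment token with the third. Shared identifiers can also be innocent (a family tablet, an agency card), so clusters like this are leads for review rather than proof.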

Graph queries then search for motifs like reciprocal follow triangles or sudden simultaneous follower spikes.
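As an illustration of one such motif, the sketch below finds reciprocal follow triangles: three accounts that all follow each other. In production this would typically be a query in the graph database's own language; the plain-Python version here uses a hypothetical edge set purely to show the logic.

```python
from itertools import combinations

# Hypothetical directed follow edges: (follower, followed).
follows = {
    ("a", "b"), ("b", "a"),
    ("b", "c"), ("c", "b"),
    ("a", "c"), ("c", "a"),
    ("a", "d"),              # one-way follow, not reciprocal
    ("d", "e"), ("e", "d"),  # reciprocal pair, but no third member
}


def reciprocal_triangles(edges):
    """Return sorted triples of accounts that all follow each other."""
    # Keep only mutual (two-way) follow relationships.
    mutual = {}
    for src, dst in edges:
        if src != dst and (dst, src) in edges:
            mutual.setdefault(src, set()).add(dst)

    triangles = set()
    for node, neighbours in mutual.items():
        # Two mutual neighbours that are also mutual with each other
        # close a triangle with the current node.
        for u, v in combinations(sorted(neighbours), 2):
            if v in mutual.get(u, set()):
                triangles.add(tuple(sorted((node, u, v))))
    return sorted(triangles)


print(reciprocal_triangles(follows))  # [('a', 'b', 'c')]
```

A single triangle is unremarkable among real friends; the signal analysts usually care about is an unusually high count of such motifs around one creator.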

Subsequently, edge-weighting strategies prioritize suspicious links sharing IP addresses or payment tokens.
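A simple way to picture edge weighting is an additive score: each link starts at a base weight and gains weight for every suspicious signal it carries. The signal names and weight values below are assumptions chosen for illustration, not recommended settings.

```python
# Hypothetical weights: how much each shared signal raises suspicion.
SIGNAL_WEIGHTS = {"shared_ip": 2.0, "shared_payment_token": 3.0}

# Hypothetical links between accounts with the signals observed on each.
edges = [
    {"src": "acct_3", "dst": "acct_4", "signals": set()},
    {"src": "acct_1", "dst": "acct_2",
     "signals": {"shared_ip", "shared_payment_token"}},
    {"src": "acct_2", "dst": "acct_3", "signals": {"shared_ip"}},
]


def edge_weight(edge, base=1.0):
    """Base weight plus the weight of every known signal on the edge."""
    return base + sum(SIGNAL_WEIGHTS.get(s, 0.0) for s in edge["signals"])


# Review the most suspicious links first.
ranked = sorted(edges, key=edge_weight, reverse=True)
for edge in ranked:
    print(edge["src"], "->", edge["dst"], edge_weight(edge))
```

Teams would normally tune such weights against labeled cases rather than fixing them by hand.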

Moreover, Graph Neural Networks learn anomaly patterns by combining connection structure with profile features.

Academic studies report higher recall in detecting fake reviewers when GNNs augment traditional classifiers.

However, adversarial research warns attackers can add benign edges to dodge detection.

Influencer Fraud Detection Graphs therefore require continuous retraining and regular rule updates to stay resilient.

Explainability dashboards help analysts verify model rationale, building organizational trust in automated alerts.

Fake follower analysis metrics, such as growth velocity and geolocation mismatches, feed directly into node attributes.
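The sketch below shows how two such metrics might be computed and attached to a node. The definitions are assumptions made for illustration: growth velocity is taken as the largest single-day relative follower gain, and geolocation mismatch as the share of the audience outside the campaign's target country. Vendors may define both differently.

```python
def growth_velocity(daily_followers):
    """Largest single-day relative follower gain in the series."""
    best = 0.0
    for prev, cur in zip(daily_followers, daily_followers[1:]):
        if prev > 0:
            best = max(best, (cur - prev) / prev)
    return best


def geo_mismatch(audience_by_country, target_country):
    """Share of the audience located outside the target country."""
    total = sum(audience_by_country.values())
    if total == 0:
        return 0.0
    return 1.0 - audience_by_country.get(target_country, 0) / total


# Hypothetical creator node with feature attributes attached.
node = {"handle": "@example_creator"}
node["growth_velocity"] = growth_velocity([10_000, 10_100, 15_150, 15_200])
node["geo_mismatch"] = geo_mismatch({"US": 200, "BR": 300, "IN": 500}, "US")

print(node)
```

In this made-up series the follower count jumps by half in one day, and most of the sampled audience sits outside the target market. Either value alone can have a legitimate cause, such as a viral post or an international fan base.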

Graphs combine structural context with rich features for nuanced fraud scoring.

Nevertheless, defenders must expect an arms race with adaptive adversaries.

The next section translates methodology into practical brand workflows.

Implementation Playbook For Brands

Brands should embed vetting checkpoints before, during, and after campaigns.

Additionally, contracts should require limited data sharing so that results can be verified independently.

- Pre-contract: execute fake follower analysis plus graph scans to detect engagement pods early.

- In-campaign: monitor engagement source graphs and flag repeated bot interactions within minutes.

- Post-campaign: compare conversions against reach; investigate gaps using insights from Influencer Fraud Detection Graphs.

- Legal: insert audit clauses and performance-based payments to limit potential losses.
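The post-campaign checkpoint above can be sketched as a simple ratio check: creators whose conversion rate per person reached falls below a floor are queued for graph investigation. The campaign figures and the `min_rate` floor are invented for illustration; a sensible floor depends on product, channel, and campaign goal.

```python
# Hypothetical post-campaign results per creator.
campaign = [
    {"creator": "@alpha", "reach": 200_000, "conversions": 1_000},
    {"creator": "@beta", "reach": 500_000, "conversions": 250},
    {"creator": "@gamma", "reach": 80_000, "conversions": 480},
]


def flag_conversion_gaps(results, min_rate):
    """Return (creator, rate) pairs whose conversions per reach are too low."""
    flagged = []
    for row in results:
        rate = row["conversions"] / row["reach"] if row["reach"] else 0.0
        if rate < min_rate:
            flagged.append((row["creator"], rate))
    return flagged


# 0.002 (0.2%) is an assumed floor, not an industry benchmark.
to_investigate = flag_conversion_gaps(campaign, min_rate=0.002)
print(to_investigate)
```

A flag here only says that reach and outcomes diverge. The graph analysis is what helps decide whether the cause is a fake audience or simply a poor creative fit.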

Furthermore, teams should document escalation paths linking model alerts to human review within hours.

Such governance builds trust in AI-driven alerts and reduces knee-jerk cancellations based on single metrics.

Regular fake follower analysis refreshes keep dashboards aligned with evolving fraud tactics.

Structured playbooks turn technical capability into repeatable brand safeguards.

Consequently, leadership gains measurable ROI through fewer wasted impressions.

Remaining cognizant of tool limitations is still essential.

Limitations And Counter Measures

Graph models can misclassify legitimate fan communities as engagement pods, prompting unnecessary disputes.

In contrast, sophisticated fraudsters may infiltrate genuine communities, lowering anomaly scores enough to slip through.

Additionally, privacy rules restrict access to cross-platform device or IP metadata, limiting graph completeness.

Research shows GNNs suffer adversarial vulnerabilities where attackers add camouflage edges.
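The effect of camouflage edges is easy to see even on a simple density score, which stands in here for a trained GNN. In the sketch below, a hypothetical bot ring initially keeps all of its interactions internal; after it adds a few links to genuine accounts, its score drops under an assumed alert threshold.

```python
def internal_ratio(members, edges):
    """Share of edges touching the group that stay inside the group."""
    touching = [e for e in edges if e[0] in members or e[1] in members]
    if not touching:
        return 0.0
    internal = [e for e in touching if e[0] in members and e[1] in members]
    return len(internal) / len(touching)


# Hypothetical bot ring and the interactions among its members.
ring = {"b1", "b2", "b3"}
ring_edges = [("b1", "b2"), ("b2", "b3"), ("b3", "b1")]

# Camouflage: the ring starts interacting with genuine accounts.
camouflage = [("b1", "fan_1"), ("b2", "fan_2"),
              ("b3", "fan_3"), ("b1", "fan_4")]

THRESHOLD = 0.6  # assumed alert threshold, for illustration only

before = internal_ratio(ring, ring_edges)
after = internal_ratio(ring, ring_edges + camouflage)

print(f"before camouflage: {before:.2f} alert={before >= THRESHOLD}")
print(f"after camouflage:  {after:.2f} alert={after >= THRESHOLD}")
```

This is why a single structural score is rarely enough on its own: combining it with other evidence makes this kind of evasion more costly for the attacker.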

Transparent explainability panels build trust in AI by revealing which edges influenced each alert.

Nevertheless, Influencer Fraud Detection Graphs remain valuable when paired with continuous validation and human oversight.

Weekly fake follower analysis audits help calibrate thresholds and retrain models against fresh data.

Limitations call for balanced, layered defenses rather than blind automation.

Therefore, brands should treat graphs as augmentation, not substitution, for knowledgeable investigators.

Looking ahead, several trends will shape that balanced approach.

Future Outlook And Actions

Graph vendors plan large language model integrations that automatically explain anomalies in natural language.

Meanwhile, regulators explore disclosure mandates that could force platforms to share richer fraud-relevant metadata.

Consequently, smaller brands may access affordable graph insights through managed service offerings.

Professionals can expand skills through the AI Educator™ certification.

The program addresses graph basics, ethics, and stakeholder communication for confident rollouts.

Influencer Fraud Detection Graphs will likely merge with predictive conversion models, tightening performance-based payments.

Moreover, fake follower analysis will feed these models richer historical signals.

Therefore, practitioners should pilot small graph projects now, iterating before regulations mandate action.

The future favors transparent, explainable graph solutions that secure budgets and credibility.

Consequently, early adopters can set best practices the rest of the market will follow.

The conclusion consolidates these insights.

Graph analytics has shifted influencer vetting from educated guesswork to data-driven precision.

However, technical excellence alone cannot guarantee safe partnerships.

Brands must pair robust graphs with disciplined processes, documented review paths, and contractual safeguards.

Moreover, ongoing training ensures teams interpret anomalies correctly and avoid damaging legitimate relationships.

Certifications such as AI Educator™ broaden strategic context and align detection with wider business goals.

Consequently, early adopters can stretch budgets further and protect brand credibility in a competitive creator economy.

Take the next step by reviewing existing workflows, piloting small graph projects, and pursuing relevant professional development today.

Disclaimer: Some content may be AI-generated or assisted and is provided ‘as is’ for informational purposes only, without warranties of accuracy or completeness, and does not imply endorsement or affiliation.