AI CERTS


Why Sullivan & Cromwell’s Apology Over AI Hallucinations Signals a Global Wake-Up Call for AI Training

This is not just a legal story. It’s a business, technology, and workforce story, and a clear signal that AI adoption without structured training is no longer sustainable.

When AI “Hallucinates,” Reality Pays the Price

The controversy stems from a court filing in the matter of In re Prince Global Holdings Limited, where the firm admitted that its submission contained “inaccurate citations and other errors” produced by artificial intelligence tools. These errors included fabricated legal precedents, misquoted judgments, and even garbled text—classic symptoms of what is known as AI “hallucination.”

AI hallucinations occur when models generate information that appears credible but is factually incorrect or entirely made up. In high-stakes industries like law, where precision is paramount, such outputs can have serious consequences. The firm acknowledged that both its internal AI usage policies and standard review processes were not followed, allowing these inaccuracies to slip through.

The mistake was ultimately identified by opposing counsel, underscoring a critical point: AI errors are not always self-evident and often require human expertise to detect.

A Pattern, Not an Exception

While this case has grabbed headlines due to the stature of the firm involved, it is far from isolated. Legal experts have been tracking a growing number of incidents where AI-generated inaccuracies have made their way into court filings. In fact, hundreds of such cases have been documented in recent years, signaling a systemic issue rather than a one-off lapse.

Across the legal ecosystem, similar incidents have led to fines, reputational damage, and increased judicial scrutiny. Courts have even begun imposing sanctions on lawyers who rely on unverified AI-generated content.

This trend points to a deeper challenge: the rapid adoption of generative AI has outpaced the development of skills, governance frameworks, and accountability mechanisms needed to use it responsibly.

The Real Problem: Not AI, But Untrained AI Usage

It would be easy to blame the technology. But that would be missing the point.

The real issue is not that AI tools hallucinate; it’s that professionals are using them without adequate training, oversight, or understanding of their limitations.

Interestingly, Sullivan & Cromwell already had strict internal policies, including mandatory AI training modules and verification requirements before using AI outputs. Yet, in this case, those protocols were not followed.

This reveals a critical gap: having policies is not enough. Organizations need deeply embedded AI literacy, continuous training, and a culture of accountability to ensure compliance.

Why This Matters Beyond the Legal Industry

This incident is not confined to law firms. It reflects a universal challenge across industries, from finance and healthcare to marketing and cybersecurity.

AI is now being used to draft reports, generate insights, automate workflows, and support decision-making. But without proper training, professionals risk:

- Inaccurate outputs that appear convincing

- Overreliance on AI without verification

- Reputational and financial damage

- Compliance and regulatory violations

In sectors where accuracy is non-negotiable, the cost of such errors can be enormous.

The Urgent Need for Structured AI Training

The lesson here is clear: AI adoption must be accompanied by structured, role-based training.

This is where initiatives like the Authorized Training Partner (ATP) ecosystem by AI CERTs become critical. ATPs are designed to bridge the gap between AI tools and real-world application by equipping professionals with practical, industry-aligned skills.

Rather than just teaching how to use AI tools, ATP programs focus on:

- Understanding AI limitations like hallucinations

- Implementing verification and validation workflows

- Ensuring ethical and compliant AI usage

- Applying AI effectively within specific industries

In essence, ATPs don’t just teach AI—they teach responsible AI.

And as this case demonstrates, that distinction can make all the difference.

From Experimentation to Accountability

The Sullivan & Cromwell incident marks a turning point in how organizations view AI. The era of casual experimentation is giving way to one of accountability.

Businesses can no longer afford to treat AI as a plug-and-play solution. It requires the same rigor, governance, and training as any other critical system.

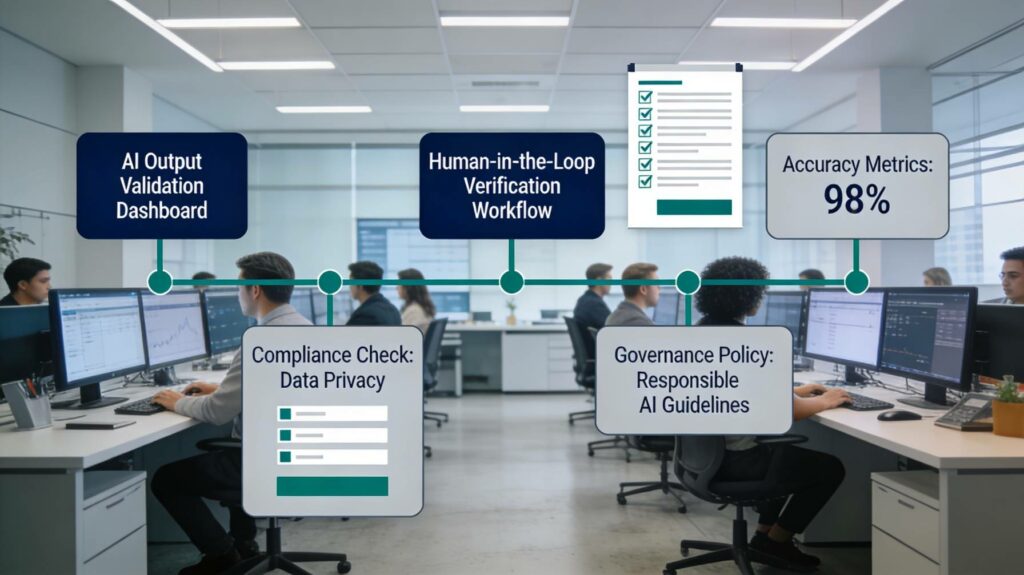

Forward-looking organizations are already taking steps to:

- Invest in AI literacy across teams

- Implement human-in-the-loop verification systems

- Adopt certified training programs

- Establish clear AI governance frameworks

Those who fail to do so risk not just operational errors but long-term credibility loss.
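A human-in-the-loop verification step like the one listed above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any firm's actual process: the citation pattern is deliberately simplified, the function names (`extract_citations`, `unverified_citations`, `ready_to_file`) are hypothetical, and the citation strings in the demo are placeholders rather than references to real cases. The idea is simply that a draft cannot move forward until a person has confirmed every citation it contains.

```python
import re

# Assumption: a deliberately simplified pattern covering a few U.S. reporter
# formats (e.g. "111 U.S. 222"). Real citation formats are far more varied.
CITATION_PATTERN = re.compile(r"\b\d{1,3} (?:U\.S\.|F\.2d|F\.3d|F\.4th) \d{1,4}\b")


def extract_citations(draft: str) -> list[str]:
    """Return the distinct citation-like strings found in the draft, sorted."""
    return sorted(set(CITATION_PATTERN.findall(draft)))


def unverified_citations(draft: str, verified: set[str]) -> list[str]:
    """List citations in the draft that no human reviewer has confirmed yet."""
    return [c for c in extract_citations(draft) if c not in verified]


def ready_to_file(draft: str, verified: set[str]) -> bool:
    """The gate: a draft passes only when every citation has been confirmed."""
    return not unverified_citations(draft, verified)


if __name__ == "__main__":
    # Placeholder citations for illustration only.
    draft = "The motion relies on 111 U.S. 222 and 999 F.3d 1234."
    verified_by_reviewer = {"111 U.S. 222"}

    print("Still needs review:", unverified_citations(draft, verified_by_reviewer))
    print("Ready to file:", ready_to_file(draft, verified_by_reviewer))
```

The check does not judge whether a citation is real; it only makes the human review step impossible to skip silently, which is the failure this incident exposed.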

A Wake-Up Call for Professionals

For individual professionals, the message is equally urgent.

AI is not replacing expertise, but it is reshaping what expertise looks like.

The professionals who will thrive in this new landscape are not those who simply use AI, but those who understand it deeply: its strengths, its limitations, and its risks.

In other words, AI proficiency is no longer optional. It is foundational.

The Future Belongs to the Trained

The apology from Sullivan & Cromwell is more than a legal correction; it is a signal to the global workforce.

AI is powerful, but it is not infallible. And without proper training, even the most prestigious institutions can falter.

As AI continues to integrate into every aspect of work, the organizations and professionals who invest in structured learning through programs like ATP will be the ones who lead, innovate, and succeed.

The rest may find themselves apologizing to more than just a courtroom.

FAQs

What are AI hallucinations in simple terms?

AI hallucinations are situations where an AI system generates false or misleading information that appears accurate. These outputs are not grounded in real data; they arise because language models predict plausible-sounding text rather than retrieve verified facts.

Why did Sullivan & Cromwell face criticism?

The firm submitted a court filing containing incorrect legal citations and fabricated references generated by AI. The AI output was not verified before submission, and the firm apologized to the court.

Are AI hallucinations common?

Yes, they are a known limitation of generative AI systems. Studies and real-world cases show that even advanced AI models can produce incorrect information if not properly guided and reviewed.

Can AI be safely used in professional work?

Yes, but only with proper training, verification processes, and human oversight. AI should be treated as an assistant, not a final authority.

What is the role of AI training programs like ATP?

AI training programs like ATP help professionals understand how to use AI responsibly, avoid risks like hallucinations, and apply AI effectively in real-world scenarios, ensuring both accuracy and compliance.