AI CERTs

3 months ago

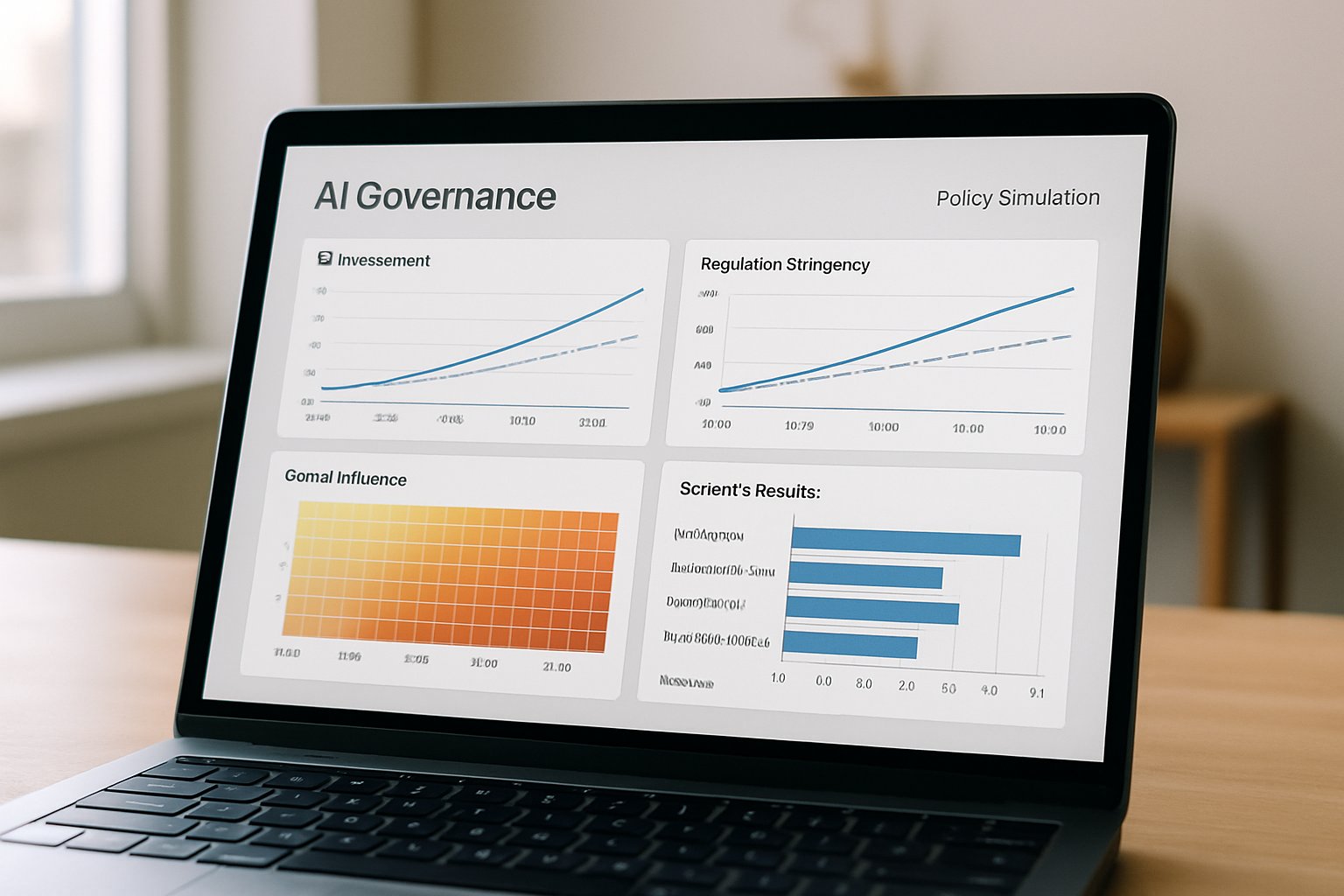

Algorithmic Policy Impact Simulators Reshape AI Governance

Policymakers face rising pressure to predict the outcomes of AI programs before citizens feel the consequences. In response, many agencies are experimenting with algorithmic policy impact simulators. These interactive tools replicate tax rules, identity checks, or supply chains inside virtual sandboxes, so officials can change parameters and immediately see the budget, equity, or security effects. Recent launches from GAO, NIST partners, and open-source communities underscore the momentum. However, critics warn that model bias, opacity, and compute costs hamper reliable adoption. This article explores how algorithmic policy impact simulators are shaping federal governance, covering their benefits, their limits, and practical next steps. It also shows where regulatory modeling overlaps with public sector AI risk frameworks.

Simulators Enter Federal Toolbox

Federal innovators have moved prototypes from labs to live dashboards within 12 months. For example, GAO’s ID Verification Controls Simulator visualizes tradeoffs among fraud detection, cost, and user burden. Meanwhile, open projects like PolicyEngine let researchers evaluate tax reforms across the United States, Canada, and the United Kingdom. Commercial vendors such as Simudyne tout agent-based digital twins capable of simulating millions of agents. As a result, algorithmic policy impact simulators now appear in budget hearings and procurement briefs. These developments show simulation moving quickly into the mainstream. Still, understanding the modeling foundations remains essential before scaling.

Core Policy Modeling Approaches

Three dominant techniques support most algorithmic policy impact simulators today. Microsimulation applies policy rules to household-level records, often synthetic ones, and produces distributional and fiscal outputs. Agent-based models create autonomous agents whose interactions reveal emergent system behavior. Digital twins replicate an entire program or economy, sometimes feeding live data into the virtual copy. Agencies choose among these approaches based on complexity, data access, and time constraints. Open-source frameworks like OpenFisca lower entry barriers for new country models.
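To make the microsimulation idea concrete, here is a minimal sketch in plain Python. It does not use OpenFisca or PolicyEngine; the `Household` record, the `flat_tax` rule, and every number are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Household:
    income: float  # annual gross income
    weight: float  # number of real households this record stands for


def flat_tax(income: float, rate: float, allowance: float) -> float:
    """Hypothetical rule: a flat rate on income above a tax-free allowance."""
    return max(0.0, income - allowance) * rate


def simulate_revenue(households, rate: float, allowance: float) -> float:
    """Apply the rule to every record and return the weighted total revenue."""
    return sum(h.weight * flat_tax(h.income, rate, allowance) for h in households)


# Tiny made-up population; a real model would load survey or administrative microdata.
population = [
    Household(income=20_000, weight=1_000),
    Household(income=50_000, weight=2_000),
    Household(income=120_000, weight=500),
]

baseline = simulate_revenue(population, rate=0.20, allowance=10_000)
reform = simulate_revenue(population, rate=0.20, allowance=15_000)
print(f"Baseline revenue: {baseline:,.0f}")
print(f"Reform revenue:   {reform:,.0f}")
print(f"Fiscal effect:    {reform - baseline:,.0f}")
```

The pattern is the same at any scale: a rule expressed as a function, a weighted population, and a comparison of aggregate results under two parameter sets.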

Key Federal Market Signals

- GAO released three public simulators since 2022, including the identity verification prototype.

- NIST’s AI RMF Playbook references simulation outputs in its Measure function.

- PolicyEngine GitHub shows weekly commits across multiple country models.

- Simudyne reports multi-million agent stress tests on AWS high-performance clusters.

Collectively, these signals confirm rising demand for transparent regulatory modeling within public sector AI initiatives. Consequently, tooling decisions now affect workforce hiring and cloud budgets. Agencies need clear benefit statements for leadership buy-in. The next section examines those advantages.

Benefits For Federal Agencies

Simulation promises quick, low-risk experimentation. Moreover, algorithmic policy impact simulators allow side-by-side scenario comparison without harming real users. Budget offices can test thousands of rule changes overnight. Equity teams can break results down by demographic segment, supporting civil rights compliance.
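A side-by-side comparison broken down by demographic segment could look roughly like the sketch below. The segment labels, weights, and benefit amounts are invented for illustration, and `mean_change_by_segment` is a hypothetical helper rather than part of any tool named in this article.

```python
from collections import defaultdict

# Hypothetical records: (segment, weight, benefit under baseline, benefit under reform)
records = [
    ("under_30", 400, 1_200.0, 1_500.0),
    ("under_30", 600, 800.0, 800.0),
    ("30_to_64", 1_500, 500.0, 650.0),
    ("65_plus", 700, 2_000.0, 1_900.0),
]


def mean_change_by_segment(rows):
    """Weighted average change (reform minus baseline) for each segment."""
    total_change = defaultdict(float)
    total_weight = defaultdict(float)
    for segment, weight, base, reform in rows:
        total_change[segment] += weight * (reform - base)
        total_weight[segment] += weight
    return {s: total_change[s] / total_weight[s] for s in total_change}


changes = mean_change_by_segment(records)
for segment, change in changes.items():
    print(f"{segment:>9}: {change:+.2f} per household")
```

A segment with a negative average, such as the oldest group in this made-up data, is the kind of result an equity team would want to examine before a reform goes live.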

Furthermore, regulatory modeling outputs integrate neatly with oversight dashboards, reinforcing transparency mandates. Public sector AI champions point to clear communication gains when interactive charts replace dense spreadsheets.

- Rapid what-if exploration saves months of manual analysis.

- Interactive visuals improve stakeholder engagement and understanding.

- Sensitivity analysis identifies hidden edge cases early.

- Stress testing strengthens program resilience against shocks.
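The sensitivity analysis mentioned in the list can be sketched as a one-at-a-time sweep: shift each input up and down by a fixed share and record how far the output moves. The cost model and its parameters below are assumptions made up for this example, not figures from any agency.

```python
def program_cost(p):
    """Hypothetical cost model: benefits paid out plus a fixed administrative cost."""
    return p["eligible"] * p["take_up"] * p["avg_benefit"] + p["fixed_admin"]


def one_at_a_time(model, baseline, shift=0.10):
    """Perturb each parameter by +/- shift; return the output swing relative to baseline."""
    base_out = model(baseline)
    swings = {}
    for name, value in baseline.items():
        low = model({**baseline, name: value * (1 - shift)})
        high = model({**baseline, name: value * (1 + shift)})
        swings[name] = (high - low) / base_out
    return swings


baseline_params = {
    "eligible": 1_000_000,       # people eligible (made-up)
    "take_up": 0.6,              # share who claim (made-up)
    "avg_benefit": 1_500.0,      # average payment (made-up)
    "fixed_admin": 100_000_000,  # administrative cost (made-up)
}

swings = one_at_a_time(program_cost, baseline_params)
for name, swing in sorted(swings.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name:>12}: {swing:.1%} output swing for a +/-10% input change")
```

One-at-a-time sweeps are cheap but ignore interactions between parameters, so they are best treated as a first screen rather than a full uncertainty analysis.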

Evidence dashboards also help congressional staff grasp fiscal impacts within minutes. Consequently, algorithmic policy impact simulators can accelerate evidence-based policymaking across agencies. Yet every advantage sits beside a caution, as the following section reveals.

Risks And Current Limits

Any model rests on assumptions that might not match reality, and overconfidence in its predictions invites flawed interventions. Data bias may propagate through synthetic populations and distort equity findings. Additionally, complex agent-based engines make internal logic hard to audit.

Governance teams must also weigh privacy, because administrative datasets can make individuals re-identifiable when combined. Critics flag resource burdens as well: high-performance clusters demand both money and skilled staff, so small bureaus sometimes rely on shared service providers or academic partners. Nevertheless, algorithmic policy impact simulators remain valuable when paired with transparent documentation and validation.

Moreover, model drift can appear when economic conditions shift abruptly, so continuous recalibration remains a non-negotiable maintenance task. These risks underline the need for strong standards. The next section describes emerging frameworks that mitigate these gaps.

Integration With Governance Frameworks

NIST’s AI Risk Management Framework offers a useful alignment map. Agencies can tag simulator outputs to Govern, Map, Measure, and Manage functions. Moreover, algorithmic policy impact simulators feed quantitative evidence into required Algorithmic Impact Assessments.
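One lightweight way to tag simulator outputs to the four functions is a small typed record, sketched below. Only the function names (Govern, Map, Measure, Manage) come from the framework. The artifact names and the function each one is assigned to are illustrative assumptions, not NIST guidance.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class RmfFunction(Enum):
    GOVERN = "Govern"
    MAP = "Map"
    MEASURE = "Measure"
    MANAGE = "Manage"


@dataclass(frozen=True)
class SimulatorArtifact:
    name: str
    function: RmfFunction


# Hypothetical artifacts and assignments, chosen only to show the structure.
artifacts = [
    SimulatorArtifact("assumption log and sign-off record", RmfFunction.GOVERN),
    SimulatorArtifact("affected-population breakdown", RmfFunction.MAP),
    SimulatorArtifact("back-test error report", RmfFunction.MEASURE),
    SimulatorArtifact("recalibration schedule", RmfFunction.MANAGE),
]


def group_by_function(items):
    """Return a mapping from function name to the artifact names tagged with it."""
    grouped = defaultdict(list)
    for item in items:
        grouped[item.function.value].append(item.name)
    return dict(grouped)


tagged = group_by_function(artifacts)
for function, names in tagged.items():
    print(f"{function}: {', '.join(names)}")
```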

Meanwhile, regulatory modeling teams often embed uncertainty bounds directly within dashboards. This practice supports the continuous monitoring demanded by public sector AI guidance. Professionals can enhance their expertise with the AI Security Level-1 certification. A workforce trained this way is better equipped to understand privacy threats and secure deployment patterns. Nevertheless, frameworks alone do not guarantee adoption; operational playbooks still matter.
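As one illustration of how uncertainty bounds for a dashboard figure might be produced, the sketch below computes a percentile bootstrap interval around a simulated average. The sample values are invented, and the percentile bootstrap is just one common choice; the article does not say which method agencies actually use.

```python
import random
import statistics


def bootstrap_interval(values, n_resamples=2_000, level=0.90, seed=0):
    """Percentile bootstrap interval for the mean of `values`."""
    rng = random.Random(seed)  # fixed seed so the dashboard figure is reproducible
    means = sorted(
        statistics.fmean(rng.choices(values, k=len(values)))
        for _ in range(n_resamples)
    )
    lower_idx = round((1 - level) / 2 * n_resamples)
    upper_idx = round((1 + level) / 2 * n_resamples) - 1
    return means[lower_idx], means[upper_idx]


# Hypothetical simulated gains per household under a reform.
sample = [120.0, 95.0, 143.0, 88.0, 110.0, 131.0, 102.0, 99.0, 125.0, 117.0]

low, high = bootstrap_interval(sample)
print(f"Mean gain: {statistics.fmean(sample):.1f} (90% interval {low:.1f} to {high:.1f})")
```

Showing the interval next to the point estimate makes it harder for a reader to mistake a simulated figure for a precise forecast.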

Standards provide structure for accountability. The final section outlines concrete steps for implementation.

Adoption Playbook Implementation Steps

Successful programs start with a clear decision boundary. Define whether the simulator informs budgeting, oversight, or exploratory research. Subsequently, choose transparent, open-source code when risk tolerance is low. Agencies should publish data dictionaries and assumption logs.

Furthermore, stakeholder workshops expose hidden normative tradeoffs before launch. Conduct back-testing against historical outcomes to calibrate accuracy. Therefore, algorithmic policy impact simulators deliver credible insights only after rigorous validation.
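Back-testing can start as simply as comparing past simulator outputs with what was later observed. The sketch below uses mean absolute percentage error as the accuracy measure. The metric choice and all figures are assumptions for illustration.

```python
def mean_absolute_percentage_error(predicted, actual):
    """Average of |predicted - actual| / |actual| across paired observations."""
    if len(predicted) != len(actual) or not actual:
        raise ValueError("predicted and actual must be non-empty and the same length")
    return sum(abs(p - a) / abs(a) for p, a in zip(predicted, actual)) / len(actual)


# Hypothetical yearly program costs in millions: simulated versus later observed.
simulated = [102.0, 98.0, 110.0, 120.0]
observed = [100.0, 100.0, 100.0, 125.0]

mape = mean_absolute_percentage_error(simulated, observed)
print(f"Back-test MAPE: {mape:.1%}")
```

An agency would set its own tolerance for this error before launch and recalibrate or withhold results when the back-test exceeds it.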

Privacy reviews must confirm that published outputs are protected by differential privacy or aggregation techniques. Meanwhile, governance boards tie simulator findings to procurement and monitoring workflows.
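For the differential privacy option, a minimal sketch is the Laplace mechanism applied to counts: noise with scale sensitivity / epsilon is added before release. The cell names, counts, and epsilon value are hypothetical, and this floating-point version is for illustration only. A production system should rely on a vetted privacy library.

```python
import random


def laplace_noise(scale, rng):
    """Draw Laplace(0, scale) noise as the difference of two exponential draws."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(true_count, epsilon, rng, sensitivity=1.0):
    """Release a count with Laplace noise of scale sensitivity / epsilon."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    return true_count + laplace_noise(sensitivity / epsilon, rng)


rng = random.Random(42)  # seeded only to make the demonstration repeatable

# Hypothetical counts of affected households per region.
cell_counts = {"region_a": 1_250, "region_b": 37, "region_c": 8}

released = {
    cell: private_count(count, epsilon=0.5, rng=rng)
    for cell, count in cell_counts.items()
}
for cell, value in released.items():
    print(f"{cell}: {value:.1f}")
```

Smaller epsilon values give stronger privacy but noisier figures, which matters most for small cells like the last region in this made-up example.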

These steps transform technical prototypes into lasting institutional capabilities. Consequently, agencies build trust while accelerating responsive public sector AI services. A disciplined playbook secures sustainability. The conclusion now distills overarching lessons.

Algorithmic policy impact simulators have moved from niche prototypes to core governance assets. Agencies gain rapid scenario testing, clearer equity analysis, and stronger public communication. Nevertheless, success depends on transparent models, rigorous validation, and sustainable resources. Aligning outputs with NIST’s AI RMF and adopting a structured playbook closes critical risk gaps. Professionals should consider the AI Security Level-1 certification to deepen technical governance skills. With these pieces in place, readers can accelerate trustworthy, data-driven decisions across regulatory modeling and public sector AI domains.