AI CERTS

Agent Architecture Drives Z.ai’s GLM-5-Turbo Strategy

Strategic Model Shift Explained

Z.ai kept the base GLM-5 model fully open, while GLM-5-Turbo ships only through commercial endpoints. Executives frame the split as necessary for sustainable innovation: open weights attract research mindshare, and proprietary upgrades monetize production deployments. Some open-source advocates see a step backwards. Nevertheless, strong demand for agentic throughput may justify the paywall.

Key launch milestones clarify the timing. The open GLM-5 weights appeared in February 2026. GLM-5-Turbo then rolled out via Z.ai’s API and OpenRouter in mid-March. Investing.com reported double-digit share gains immediately after the news broke. These financial signals underscore how the firm aligns product segmentation with shareholder expectations.

These strategic dynamics reveal an ongoing tension between openness and revenue. However, they also highlight why Agent Architecture now drives licensing decisions. Next, we explore the model’s core concepts.

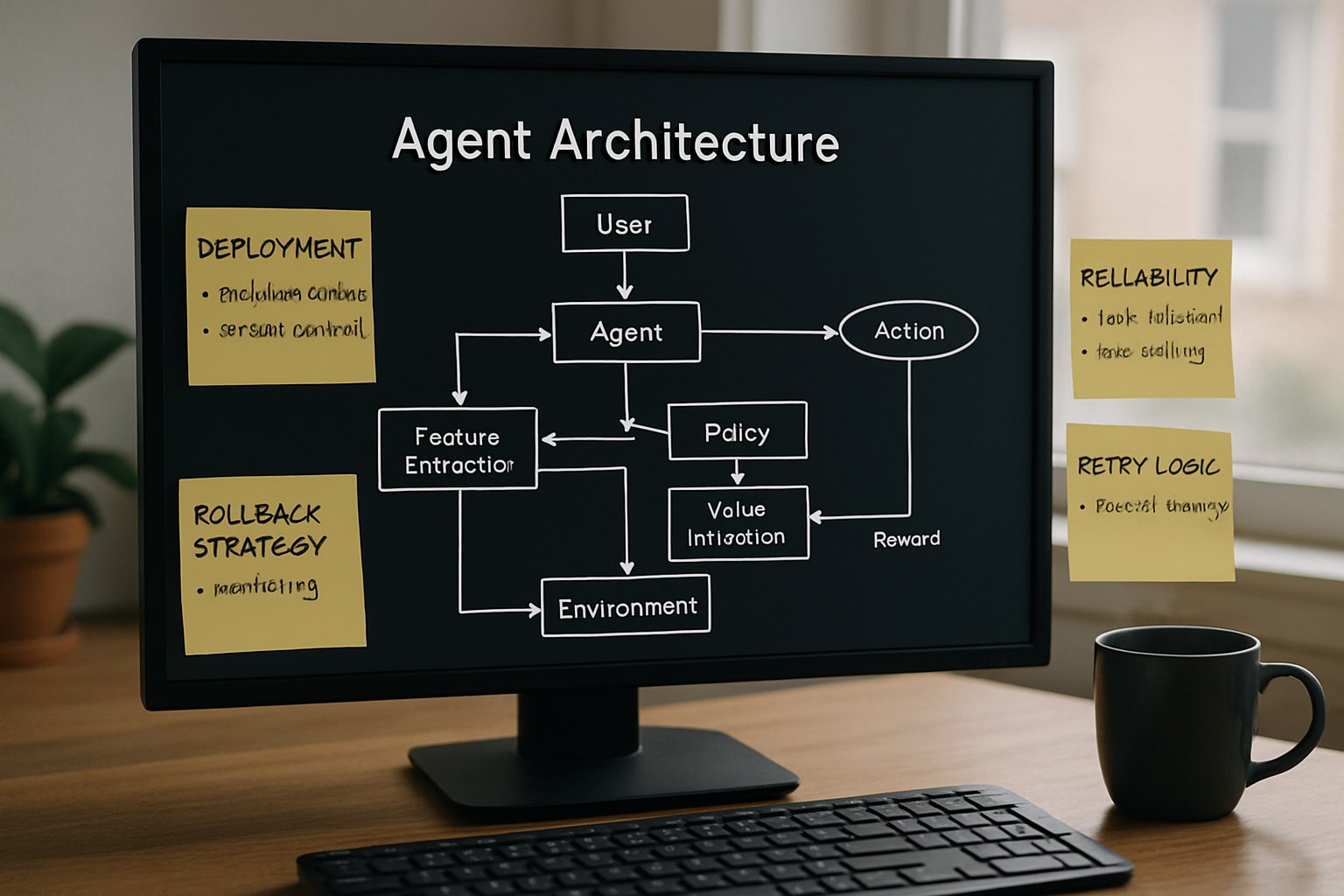

Agent Architecture Core Concepts

Execution-first models must perceive, plan, and act across long sessions. Therefore, Z.ai tuned GLM-5-Turbo for lower tool-call error rates and stable long-chain reasoning. The 200,000-token context window supports massive codebases and historical chat logs. Additionally, the 128,000-token maximum output lets workflows write entire documentation sets in one pass.
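To make these limits concrete, here is a minimal sketch of a pre-flight token budget check. It assumes the prompt and the generated output share the 200,000-token window, which the reporting does not confirm, and the function name `output_budget` is hypothetical. Actual token counts would have to come from the provider's tokenizer.

```python
CONTEXT_WINDOW = 200_000  # tokens, as reported for GLM-5-Turbo
MAX_OUTPUT = 128_000      # tokens, as reported for GLM-5-Turbo


def output_budget(prompt_tokens: int, shared_window: bool = True) -> int:
    """Return how many tokens may still be generated for a given prompt.

    shared_window=True assumes prompt and output share the context
    window (an assumption, not confirmed by the source).
    """
    if prompt_tokens < 0:
        raise ValueError("prompt_tokens must be non-negative")
    if prompt_tokens > CONTEXT_WINDOW:
        raise ValueError("prompt exceeds the context window")
    if not shared_window:
        return MAX_OUTPUT
    # The output cap applies until the prompt leaves less room than the cap.
    return min(MAX_OUTPUT, CONTEXT_WINDOW - prompt_tokens)
```

Under that assumption, a 50,000-token prompt still leaves the full 128,000-token output cap, while a 150,000-token prompt leaves room for only 50,000 generated tokens.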

Four foundational ideas shape the design:

- Mixture-of-Experts routing activates ~40B parameters per token, boosting efficiency.

- Tool-calling schemas cut the formatting error rate to roughly 0.67% in ZClawBench tests.

- Temporal awareness improves scheduled and persistent tasks across days.

- High throughput shortens end-to-end chain completion versus earlier endpoints.

Such traits embody modern Agent Architecture thinking. Furthermore, they aim to let agents operate with minimal human retry loops. These concepts set the stage for our technical deep dive.
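A formatting error rate like the one cited for tool calls can be measured in-house by validating each raw call against its declared schema. The sketch below is illustrative only: the tool registry, the `name`/`arguments` call shape, and both function names are assumptions, and it does not reproduce ZClawBench's methodology, which the source does not describe.

```python
import json

# Hypothetical tool registry: tool name -> required argument names and types.
TOOLS = {
    "read_file": {"path": str},
    "run_tests": {"target": str, "timeout_s": int},
}


def is_well_formed(raw_call: str) -> bool:
    """Check that a raw tool call is valid JSON naming a known tool
    with correctly typed required arguments."""
    try:
        call = json.loads(raw_call)
    except json.JSONDecodeError:
        return False
    if not isinstance(call, dict):
        return False
    name = call.get("name")
    args = call.get("arguments")
    if not isinstance(name, str) or name not in TOOLS:
        return False
    if not isinstance(args, dict):
        return False
    # Note: bool passes as int in Python; tighten this check if that matters.
    return all(isinstance(args.get(k), t) for k, t in TOOLS[name].items())


def format_error_rate(raw_calls: list[str]) -> float:
    """Fraction of calls that fail validation (0.0 for an empty list)."""
    if not raw_calls:
        return 0.0
    bad = sum(not is_well_formed(c) for c in raw_calls)
    return bad / len(raw_calls)
```

Running this over logged calls from a team's own prompt chains gives a number that is comparable across providers, even if it is not comparable to the vendor's benchmark.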

Technical Specs Overview Today

The following quantitative details matter when choosing an execution engine:

- Parameter family: 744B total, sparse MoE.

- Context length: 200K tokens.

- Max generation: 128K tokens.

- Reported throughput: 48–200 tokens/sec, deployment dependent.

- Marketplace pricing: $0.96/M input, $3.20/M output on OpenRouter at launch.

Provider differences drive the wide throughput spread. Adam Holter tracked 200+ TPS on optimized OpenRouter nodes, while VentureBeat saw 48 TPS on baseline infrastructure. Engineering leaders should therefore benchmark their actual stack.

The specs reflect deliberate compromises. A vast context demands memory, yet sparse routing keeps per-token compute moderate. Consequently, Agent Architecture scenarios benefit without blowing budgets. With the raw numbers reviewed, the performance evidence warrants scrutiny.
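The listed figures lend themselves to quick arithmetic. The sketch below, with hypothetical function names, estimates the cost of one request at the OpenRouter launch prices and the share of parameters active per token. Prices may have changed since launch, and the token counts in the example are illustrative.

```python
INPUT_USD_PER_M = 0.96   # OpenRouter launch price per million input tokens
OUTPUT_USD_PER_M = 3.20  # OpenRouter launch price per million output tokens
TOTAL_PARAMS_B = 744     # reported total parameters, in billions
ACTIVE_PARAMS_B = 40     # reported approximate active parameters per token


def request_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimated cost of a single request in US dollars."""
    total = input_tokens * INPUT_USD_PER_M + output_tokens * OUTPUT_USD_PER_M
    return total / 1_000_000


def active_fraction() -> float:
    """Share of total parameters activated for each token."""
    return ACTIVE_PARAMS_B / TOTAL_PARAMS_B
```

For example, a request with 200,000 input tokens and 10,000 output tokens would cost about $0.22 at these rates, and roughly 5.4% of the 744B parameters are active for any given token.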

Performance Claims Examined Thoroughly

Z.ai released ZClawBench to demonstrate superiority on OpenClaw agent tasks. The benchmark shows Turbo finishing chains faster than base GLM-5, with improved tool-call success. Nevertheless, external validation remains limited, and independent testers are still developing reproducible scripts.

Third-party metrics add nuance. VentureBeat clocked an eight-second completion for a sample chain. In contrast, some Reddit users reported intermittent empty responses under heavy load. Consequently, operational reliability correlates with hosting choices.

Throughput variability deserves emphasis. For instance, 48 TPS suffices for many enterprise queues, whereas latency-sensitive workloads such as algorithmic trading bots might demand 150+ TPS. Procurement teams should therefore request time-boxed trials from each provider.
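A rough way to see what these throughput figures mean for an agent chain is to convert tokens per second into wall-clock time. The sketch below assumes generation time scales linearly with output length and ignores tool execution and network overhead. The function names and the step sizes in the example are hypothetical.

```python
def generation_seconds(output_tokens: int, tokens_per_sec: float,
                       first_token_latency_s: float = 0.0) -> float:
    """Estimated time to generate output_tokens at a steady decode rate."""
    if tokens_per_sec <= 0:
        raise ValueError("tokens_per_sec must be positive")
    return first_token_latency_s + output_tokens / tokens_per_sec


def chain_seconds(step_output_tokens: list[int], tokens_per_sec: float,
                  first_token_latency_s: float = 0.0) -> float:
    """Estimated time for a sequential chain, one model call per step."""
    return sum(
        generation_seconds(n, tokens_per_sec, first_token_latency_s)
        for n in step_output_tokens
    )
```

Under this model, a ten-step chain that emits 400 tokens per step takes roughly 83 seconds at 48 TPS and about 27 seconds at 150 TPS, before counting any tool execution time.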

These mixed findings underline the need for more evidence. Yet, they also affirm Turbo’s promise for serious execution workloads. Next, we assess market reception.

Market Impact Analysis Summary

Financial analysts responded quickly. Investing.com recorded mid-teens percent intraday gains for Zhipu shares on March 16. Moreover, VentureBeat framed the move as a monetization litmus test for open-source leaders. Consequently, rival vendors watch pricing feedback keenly.

Enterprise buyers, meanwhile, compare GLM-5-Turbo against Claude, GPT-5.x, and Gemini. Pricing appears competitive for long-context tasks, although rising quotas complicate smaller teams’ budgets. Furthermore, the closed license may deter startups committed to fully open stacks.

Importantly, Z.ai bundled Turbo within its coding subscription tiers. This tactic encourages migration from generic chat endpoints to specialized agents. If adoption scales, subscription upgrades could outpace token sales as the main revenue driver.

The market response showcases confidence in Agent Architecture monetization. However, buyers still weigh operational risks, covered next.

Operational Risks And Mitigations

Provider variance presents the largest concern. Throughput, latency, and quality fluctuate across OpenRouter, Together, and Z.ai native endpoints. Therefore, teams should implement health checks and fallback routes.
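One possible shape for such a fallback route is sketched below. It is provider-agnostic: each provider is represented by a label and a caller-supplied function that sends a prompt and returns text, so no real client API is assumed. The names `call_with_fallback` and `AllProvidersFailed` are hypothetical. An empty response counts as unhealthy, mirroring the empty replies some users reported under load.

```python
from typing import Callable, Sequence

# (label, function that sends a prompt and returns the response text)
Provider = tuple[str, Callable[[str], str]]


class AllProvidersFailed(RuntimeError):
    """Raised when every provider in the route failed or returned nothing."""


def call_with_fallback(prompt: str,
                       providers: Sequence[Provider]) -> tuple[str, str]:
    """Try providers in order; return (label, response) from the first
    one that answers with non-empty text."""
    errors = []
    for label, send in providers:
        try:
            text = send(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers fallback
            errors.append(f"{label}: {exc!r}")
            continue
        if text and text.strip():
            return label, text
        errors.append(f"{label}: empty response")
    raise AllProvidersFailed("; ".join(errors))
```

In practice the `send` functions would wrap each endpoint's client with its own timeout, and the returned label can be logged to track how often traffic leaves the primary route.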

Licensing opacity poses another challenge. The closed-source stance hinders auditing and reproducibility. Nevertheless, enterprises can request private disclosures under NDA. Meanwhile, community efforts push for more transparent evaluation suites.

Cost control also matters. Quota changes occurred within days of launch. Consequently, finance teams should monitor invoices weekly.

Professionals can enhance their expertise with the AI Developer™ certification. This credential strengthens planning skills for resilient Agent Architecture deployments.

Addressing these risks secures smoother execution paths for modern agents. Finally, we list near-term verification priorities.

Provider Variance Issues Noted

Teams must collect first-token latency, tokens per second, and tool-call success metrics. Moreover, tests should mirror production prompt chains. Subsequently, dashboards can surface anomalies before customer impact.
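As a minimal sketch of how these three metrics might be collected, the code below times any iterable that yields streamed output. It assumes each yielded item is one token, which real streaming APIs do not guarantee, and it measures tokens per second end to end, including the wait for the first token. All names here are hypothetical.

```python
import time
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class StreamMetrics:
    first_token_latency_s: float
    tokens_per_sec: float
    token_count: int


def measure_stream(chunks: Iterable[str],
                   clock: Callable[[], float] = time.perf_counter) -> StreamMetrics:
    """Consume a token stream and record latency and throughput."""
    start = clock()
    first = None
    count = 0
    for _ in chunks:
        now = clock()
        if first is None:
            first = now  # timestamp of the first token's arrival
        count += 1
    end = clock()
    if first is None:
        raise ValueError("stream produced no tokens")
    elapsed = end - start
    tps = count / elapsed if elapsed > 0 else float("inf")
    return StreamMetrics(first - start, tps, count)


def tool_call_success_rate(succeeded: int, attempted: int) -> float:
    """Share of attempted tool calls that completed successfully."""
    if attempted <= 0:
        raise ValueError("attempted must be positive")
    return succeeded / attempted
```

The injectable `clock` parameter is a design choice that keeps the measurement testable without real delays. In production the default `time.perf_counter` applies.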

Future Verification Needs Highlighted

Independent labs should publish open datasets for cross-model agent benchmarking. Additionally, Z.ai could release partial eval logs to boost trust. Until then, cautious rollouts remain prudent.

These action steps close the operational discussion. However, broader conclusions await.

Conclusion

GLM-5-Turbo illustrates how Agent Architecture now shapes commercial AI strategy. Z.ai balanced openness and monetization by withholding Turbo’s weights while offering a generous context window and improved tool reliability. The model’s performance appears strong, although provider variance persists, so enterprises must benchmark, monitor costs, and demand transparency. When configured well, Turbo can support durable autonomous agents across demanding execution chains. Professionals seeking deeper mastery should consider the AI Developer™ certification mentioned above. Act now to fortify your next generation of intelligent systems.