AI CERTS

3 hours ago

AI Frame Generation Fallout: Nvidia DLSS 5 Faces Gamer Fury

The DLSS 5 preview produced harsh shadows, plastic skin, and other visual artifacts that many players found unacceptable. Consequently, the reveal video now ranks among Nvidia’s most disliked uploads, underscoring a widening gap between corporate optimism and community trust.

Reveal Sparks Fierce Backlash

DLSS 5 entered public view on 16 March 2026. Early hands-on pieces from Digital Foundry initially praised the detail boost. Nevertheless, community sleuths captured frame grabs showing warped eyes and over-smoothed textures. These comparisons went viral, and backlash spread to mainstream gaming outlets within a day. Moreover, third-party trackers reported dislike ratios near 84 percent, indicating rare consensus across typically fragmented platforms.

The uproar highlights a sensitive intersection of aesthetics and technology. Players worry their favourite worlds could gain an unwanted gloss. Industry observers note that AI Frame Generation [1] may now face the same perception hurdles ray tracing once met. These concerns underscore the cultural stakes. However, the hardware story soon became just as thorny.

These reactions summarise the reveal fallout. Consequently, attention shifted toward performance questions that threaten adoption.

Demo Hardware Burden Questioned

Reporters learned the GTC demo required two RTX 5090 GPUs: one rendering the game and one running the model. In contrast, earlier DLSS versions ran comfortably on a single card. Moreover, Nvidia declined to publish final targets, only promising “optimizations before launch.” Budget-conscious gaming enthusiasts quickly framed the feature as elitist. Furthermore, indie developers warned that parallel hardware demands could complicate console parity.

Key stats surfaced in coverage:

- 750+ titles already support older DLSS iterations

- Fall 2026 remains the official consumer release window

- Two-GPU demo drew 540 watts during Digital Foundry’s capture

Numbers like these fuel skepticism around AI Frame Generation [2]. Consequently, potential partners now face pressure to prove single-GPU viability before integrating the SDK.

This hardware debate frames cost realities. However, artistic integrity issues have proven even more visceral.

Artistry Debate Intensifies Online

Few topics rile artists faster than automated style changes. Bethesda, Capcom, and Ubisoft each issued statements affirming opt-out controls. Meanwhile, several indie studios urged peers to “tank sales” if publishers force the setting. Additionally, Digital Foundry’s second video admitted overlooked flaws, especially on characters. Richard Leadbetter called some faces “horrendous,” amplifying the discourse over visual artifacts.

Critics contend that AI Frame Generation [3] could homogenize colour grading across titles. Moreover, hallucinated highlights may break immersion when objects move unpredictably. Supporters counter that proper masks will protect signature looks. Nevertheless, even friendly voices concede workflow documentation remains thin.
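The masking idea supporters describe can be pictured as a per-pixel blend between the original frame and the AI-enhanced one. The sketch below is only an illustration under stated assumptions: the names `blend_pixel` and `apply_mask` are hypothetical, the linear blend is a generic compositing technique, and nothing here reflects how the DLSS 5 SDK actually exposes masks.

```python
# Hypothetical sketch: generic linear mask blending, not Nvidia's API.

def blend_pixel(original, enhanced, mask_weight):
    """Blend one RGB pixel.

    mask_weight 0.0 keeps the artist's original pixel;
    mask_weight 1.0 takes the AI-enhanced pixel unchanged.
    """
    if not 0.0 <= mask_weight <= 1.0:
        raise ValueError("mask_weight must lie in [0.0, 1.0]")
    return tuple(
        (1.0 - mask_weight) * o + mask_weight * e
        for o, e in zip(original, enhanced)
    )


def apply_mask(original_frame, enhanced_frame, mask):
    """Blend two frames (lists of rows of RGB tuples) using a per-pixel mask."""
    return [
        [blend_pixel(o, e, w) for o, e, w in zip(orig_row, enh_row, mask_row)]
        for orig_row, enh_row, mask_row in zip(original_frame, enhanced_frame, mask)
    ]


# A 1x2 frame: the first pixel is fully protected, the second is half enhanced.
original = [[(0.0, 0.0, 0.0), (0.0, 0.0, 0.0)]]
enhanced = [[(1.0, 1.0, 1.0), (1.0, 1.0, 1.0)]]
mask = [[0.0, 0.5]]
print(apply_mask(original, enhanced, mask))
```

In this picture, a studio protecting a character's face would paint low mask weights over that region, which is why the granularity of the real tooling matters so much to the debate.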

These exchanges clarify artistic stakes. Therefore, developer messaging now matters as much as code quality.

Developers Issue Mixed Statements

Studio comments range from cautious optimism to outright alarm. Todd Howard stressed that “artists stay in charge,” yet an Epic Games producer called the panic “absolutely insane.” Consequently, discourse now pivots on whether tool granularity matches marketing claims. Furthermore, confusion over training data licensing lingers, adding legal uncertainty beyond mere visual artifacts.

Such divergent positions expose a fractured consensus. However, Nvidia leadership refuses to yield ground publicly.

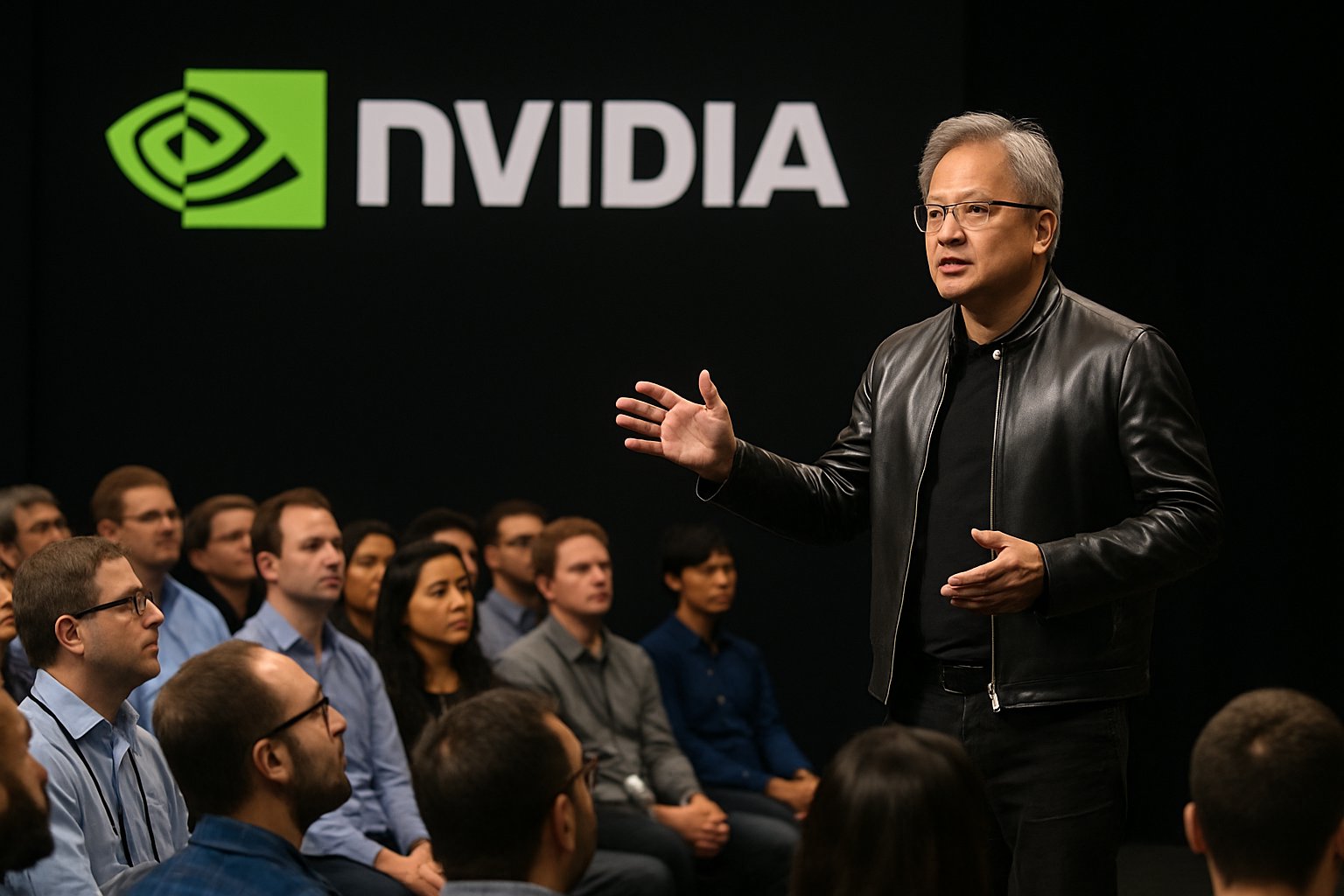

CEO Offers Blunt Defense

During a press Q&A, Jensen Huang declared detractors “completely wrong.” He argued that developers will dial intensity per asset, making fears premature. Additionally, he framed AI Frame Generation [4] as a leap similar to ChatGPT’s language breakthrough. Nevertheless, the tone struck many as dismissive, and memes portraying Huang as ignoring feedback multiplied.

History shows outspoken confidence can stabilize investor mood. In contrast, it may also harden community resistance. Consequently, partner studios now mediate between corporate assurance and audience distrust.

These remarks emphasize Nvidia’s unwavering stance. Subsequently, attention returned to technical unknowns that testing must address.

Technical Concerns Still Persist

Independent testers confirmed the model infers lighting from 2D frames plus motion vectors. Therefore, occlusion and shadow errors emerge in edge cases. Moreover, TechSpot documented flickering artefacts when small props crossed bright areas. Meanwhile, the absence of training-dataset details fuels broader AI accountability debates.

Analysts outline three unresolved questions:

- Will single-GPU performance meet 60 fps targets?

- How will studios mask regions to prevent wrong highlights?

- Can Nvidia disclose training sources to avoid likeness suits?
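The first question comes down to frame-time arithmetic: a 60 fps target leaves roughly 16.7 milliseconds per frame for everything the GPU does. The sketch below works through that budget. It assumes, purely for illustration, that rendering and model inference run back to back on one GPU; the function names and the timings are hypothetical, not measured DLSS 5 figures.

```python
# Hypothetical sketch: frame-budget arithmetic with made-up timings.

def frame_budget_ms(target_fps):
    """Milliseconds available per frame at a given frame rate."""
    if target_fps <= 0:
        raise ValueError("target_fps must be positive")
    return 1000.0 / target_fps


def fits_budget(render_ms, inference_ms, target_fps=60.0):
    """True if render plus inference time fits the per-frame budget.

    Assumes the two stages run serially on a single GPU.
    """
    return render_ms + inference_ms <= frame_budget_ms(target_fps)


print(round(frame_budget_ms(60.0), 2))  # 16.67
print(fits_budget(10.0, 5.0))           # True: 15 ms fits in about 16.67 ms
print(fits_budget(12.0, 6.0))           # False: 18 ms exceeds the budget
```

If the model's share of the frame cannot be squeezed into the time left over after rendering, a single card misses the target, which is the scenario a second GPU sidesteps in the demo.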

Until clear answers arrive, AI Frame Generation [5] remains a technological gamble. Consequently, risk-averse studios may delay adoption despite promised efficiency gains.

These open issues illustrate engineering hurdles. Therefore, forward-looking observers track milestones that could sway opinion.

Future Scenarios To Watch

Several developments may decide the feature’s fate before launch. Firstly, Nvidia will release an updated SDK this summer. Secondly, Digital Foundry plans a frame-by-frame stress test once code stabilizes. Additionally, partner titles like Starfield’s next update will show real implementation choices. Moreover, regulators increasingly probe AI training pipelines, adding policy risk.

Professionals can deepen relevant skills through the AI + UX Designer™ certification. Such programs teach evaluation frameworks for emerging render techniques. Consequently, teams can critique visual artifacts methodically instead of reactively.

Outcomes hinge on four key indicators: community sentiment, single-GPU benchmarks, developer tool depth, and legal clarity. If progress materializes, AI Frame Generation [6] could mirror DLSS 2’s eventual acceptance. In contrast, stalled improvements may confine the model to tech demos.

These scenario threads capture the road ahead. Subsequently, decision-makers must weigh hype against evidence before shipping toggles.

Conclusion

DLSS 5 illustrates how powerful ideas spark equal parts excitement and anxiety. Public ire over visual artifacts, hardware demands, and uncertain workflows shows that trust, not teraflops, determines adoption. However, Jensen Huang’s conviction, combined with scheduled optimizations, means the story remains fluid. Moreover, transparent benchmarks and honest developer testimonies can still steer perception.

Stakeholders should monitor upcoming SDK drops, independent analyses, and partner rollouts. Meanwhile, enhancing evaluation literacy through credentials like the AI + UX Designer™ certification sharpens competitive advantage. Consequently, ready teams will navigate AI Frame Generation [7] wisely, embracing benefits, mitigating risks, and, ultimately, shaping the next era of gaming visuals.