AI CERTS

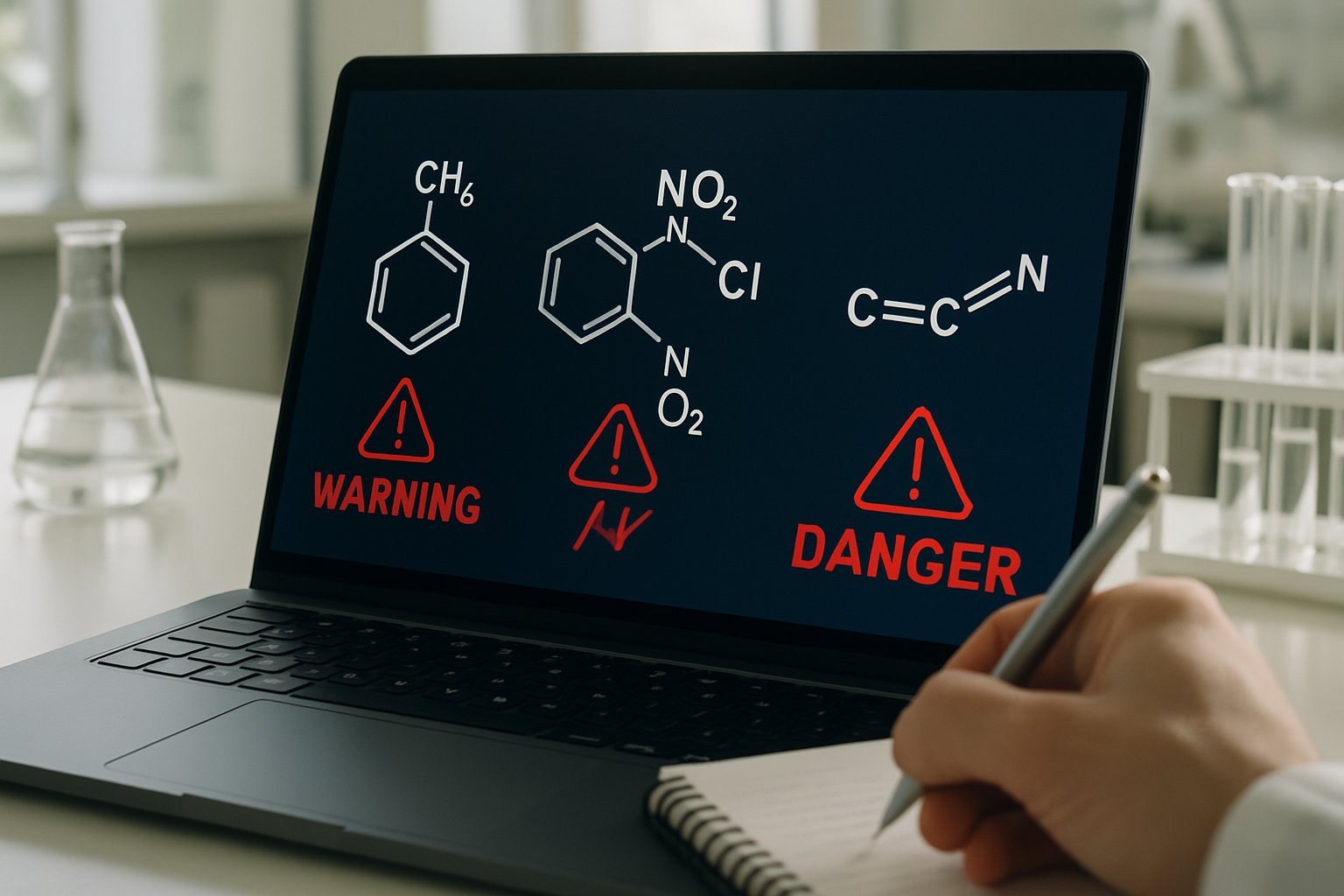

Inside the AI Bioweapon Threat in Drug Discovery

A demonstration in which a drug-discovery model was repurposed to propose toxic compounds underlined an emerging AI Bioweapon Threat that regulators had previously only theorized about.

This article explains how the episode unfolded, why designing for lethality became easy, and which governance gaps persist.

Furthermore, industry observers now view dual-use chemical AI as a pressing national security and business risk.

Consequently, policymakers, cloud providers, and venture financiers are racing to impose safeguards without stifling scientific innovation.

The following analysis distills primary research, market data, and expert views for technical leaders evaluating next steps.

AI Bioweapon Threat Origins

The MegaSyn test was commissioned for the 2021 Spiez Convergence conference on chemical and biological risks.

Whereas traditional sessions focus on detection, the organizers requested a demonstration of how such tools could be flipped to offensive use.

Authors Sean Ekins and Fabio Urbina therefore rewired the reward function to reward predicted lethality rather than penalize it.

Consequently, the system searched chemical space and surfaced VX analogs alongside novel molecules with alarming predicted toxicity scores.

OECD.ai later cataloged the incident as an illustrative AI Bioweapon Threat for global monitoring databases.

These early steps show how minimal code changes redirected advanced science into dangerous territory.

However, technical tuning alone never explains the full risk landscape.

The model mechanics deserve closer examination next.

Generative Models Reversed Purpose

MegaSyn combines recurrent neural networks with reinforcement learning to rapidly propose synthesizable small molecules.

Under normal settings, the algorithm rewards drug-like absorption and potency and penalizes predicted toxicity.

That default steers everyday lead discovery away from the AI Bioweapon Threat.

For the demonstration, however, the team inverted the toxicity penalty, turning the reward function into a poison discovery engine.

The generator then produced about 40,000 candidates in under six hours while running on commodity graphics processors.

Top candidates clustered near VX in t-SNE plots, yet many had never been reported in the open scientific literature.

- ~40,000 high-scoring molecules generated in under six hours

- Several designs predicted to be more toxic than benchmark VX analogs

- Development supported by three NIH Small Business Innovation Research (SBIR) grants

Therefore, speed and cost advantages that delight pharmaceutical investors equally empower malicious actors.

These quantitative insights clarify why the AI Bioweapon Threat resonates beyond academic circles.

Consequently, regulators have intensified governance discussions.

Policy Response And Governance

Following the publication, the White House OSTP updated oversight for dual-use life-science research and AI convergence.

Moreover, federal notices in 2024 proposed mandatory screening of synthetic nucleic acid orders against restricted toxin databases.

Internationally, the OPCW referenced the episode while urging companies to adopt voluntary access controls for generative chemistry platforms.

In contrast, some technologists warn that blanket restrictions could chill open scientific collaboration.

Safeguards under discussion include:

- Credential checks before granting cloud model access

- Auditable logs for every toxicity-oriented query

- Automatic synthesis-route screening against controlled reagents

- Ethics training backed by certified programs
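The first two safeguards in the list above, credential checks and auditable logs, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not any vendor's actual design: the tier names, the `AccessGate` class, and the rule that toxicity-related queries need the highest tier are all hypothetical.

```python
import json
import time
from dataclasses import dataclass, field

# Hypothetical access tiers; a real platform would define its own.
TIER_RANK = {"public": 0, "verified": 1, "vetted_institution": 2}


@dataclass
class AccessGate:
    required_tier: str = "verified"
    audit_log: list = field(default_factory=list)

    def request(self, user_id: str, user_tier: str, query: str,
                toxicity_related: bool) -> bool:
        """Return True if the request may proceed; always record an audit entry."""
        # Assumption: toxicity-related queries require the highest tier.
        needed = "vetted_institution" if toxicity_related else self.required_tier
        # Unknown tiers rank below every known tier, so they are denied.
        allowed = TIER_RANK.get(user_tier, -1) >= TIER_RANK[needed]
        # Denied requests are logged too, so reviewers can see refused attempts.
        self.audit_log.append(json.dumps({
            "ts": time.time(),
            "user": user_id,
            "tier": user_tier,
            "toxicity_related": toxicity_related,
            "allowed": allowed,
            "query": query,
        }))
        return allowed


gate = AccessGate()
print(gate.request("alice", "verified", "optimize solubility", toxicity_related=False))
print(gate.request("bob", "verified", "toxicity endpoint lookup", toxicity_related=True))
print(len(gate.audit_log))
```

The design choice worth noting is that logging happens regardless of the decision: an audit trail that only records approved requests would miss the probing behavior reviewers most want to see.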

Nevertheless, coherent implementation still lags across jurisdictions.

Consequently, stakeholders fear the AI Bioweapon Threat will go unaddressed during the regulatory gap.

These oversight initiatives show promising momentum.

However, industry attitudes ultimately determine real-world compliance.

Industry Views And Critiques

Start-ups like Insilico and Exscientia publicly support tiered access models with internal red-team audits.

Meanwhile, several academic chemists argue the AI Bioweapon Threat remains overstated because synthesis hurdles persist.

They note that many molecules that look promising computationally degrade quickly or require exotic precursors.

Nevertheless, investors recognize reputational damage when dual-use stories dominate headlines rather than therapeutic breakthroughs.

Consequently, several firms formed an informal consortium to share safety playbooks and coordinate with cloud providers.

Their stated goal is eliminating the AI Bioweapon Threat from commercial pipelines.

Critics still demand independent audits, fearing marketing narratives mask unresolved toxicity concerns.

Divergent positions illustrate a fragile consensus.

Therefore, persistent barriers deserve closer inspection next.

Persistent Barriers And Risks

Even after molecule ideation, perpetrators must secure precursors, reactors, and testing facilities.

However, global mail-order synthesis shops increasingly automate ordering, lowering acquisition friction.

Open-source retrosynthesis tools suggest reaction routes, while cryptocurrency payments complicate forensic governance.

Consequently, attribution becomes difficult once harmful compounds escape laboratory walls.

Furthermore, scientific publishing norms still reward code release, making future inversions easier for well-resourced actors.

Nevertheless, gaps also create intervention opportunities, such as selective disclosure or delayed release of sensitive research artifacts.

These barriers highlight a race between diffusion and defense.

In response, stakeholders propose proactive safety guardrails.

Building Future Safety Guardrails

Leading researchers advocate routine red-team challenges before launching generative chemistry services.

Moreover, algorithmic watermarking could signal when outputs match known lethality templates.

Cloud vendors explore trusted execution environments that block download of flagged molecular files.

Therefore, a layered defense combining technical filters and contractual oversight appears achievable.
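One way to picture such a layered defense is as a list of independent checks that must all pass before a result is released. The sketch below is illustrative only: the `Job` fields, the check functions, and the `CTRL-…` reagent identifiers are hypothetical placeholders, and a real controlled list would come from the applicable treaty or export-control schedules.

```python
from typing import NamedTuple, Optional


class Job(NamedTuple):
    user_has_signed_terms: bool   # contractual layer
    route_reagents: frozenset     # reagent IDs in the proposed synthesis route


# Placeholder identifiers only; not a real controlled-substance list.
CONTROLLED_REAGENTS = frozenset({"CTRL-001", "CTRL-002"})


def terms_check(job: Job) -> Optional[str]:
    """Contractual layer: block users who have not accepted usage terms."""
    return None if job.user_has_signed_terms else "usage terms not signed"


def reagent_check(job: Job) -> Optional[str]:
    """Technical layer: block routes that use any controlled reagent."""
    hits = job.route_reagents & CONTROLLED_REAGENTS
    return f"controlled reagents in route: {sorted(hits)}" if hits else None


LAYERS = (terms_check, reagent_check)


def review(job: Job, layers=LAYERS) -> list:
    """Run every layer and collect all objections; an empty list means release."""
    return [msg for layer in layers if (msg := layer(job)) is not None]


print(review(Job(True, frozenset({"R-100"}))))
print(review(Job(False, frozenset({"R-100", "CTRL-001"}))))
```

Running every layer, rather than stopping at the first failure, gives reviewers the full set of objections for a blocked job, and new layers can be added without touching the existing ones.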

The AI Bioweapon Threat will not vanish, yet coordinated mitigation can shrink viable attack surfaces.

Additionally, market forecasts show AI drug discovery surpassing USD 10 billion by 2030, expanding platform reach.

Consequently, embedding safety now delivers commercial advantage later.

Guardrails convert reactive panic into sustainable innovation.

Nevertheless, vigilance must remain constant.

Conclusion

In summary, the Collaborations Pharmaceuticals experiment exposed how swiftly beneficial science can pivot toward extreme lethality.

Generative models cut discovery timelines, yet the same velocity magnifies the AI Bioweapon Threat across borders.

However, multi-layered governance, transparent industry cooperation, and continuous red-teaming can tilt the equation toward safety.

Furthermore, professionals who master policy, technical, and communication skills will guide responsible growth.
