AI CERTS


Zenflow Redefines AI Code Orchestration

Analysts view Zencoder’s launch of Zenflow as the company’s boldest move yet. Early headlines describe Zenflow as the start of an “AI orchestration” era that tames chaotic prompt roulette. The free desktop app and IDE plugins promise structured gains without steep entry costs. Throughout this report, we unpack the launch, the vendor’s claims, and practical next steps for technical teams.

Evolving Market Context Shift

Generative coding platforms proliferated in 2024, yet many teams still struggle to harden their outputs for production. Incumbents such as GitHub Copilot focus on inline suggestions rather than end-to-end rigor. As a result, vendors increasingly compete on process depth rather than raw model power.

Zenflow enters as a coordination layer connecting multiple models, sandboxes, and tests. VentureBeat calls the approach Zencoder’s distinguishing bet in a crowded field. The product also treats multi-agent design as a first-class feature.

These competitive dynamics create urgency for disciplined AI-assisted coding workflows. Consequently, orchestration platforms may soon rival single-model assistants for enterprise mindshare.

Process focus now defines differentiation. The next section explores how Zenflow implements that vision.

Inside Zenflow Product Launch

Zenflow shipped simultaneously on macOS, Windows, and Linux, and plugins for VS Code and JetBrains IDEs bring it into familiar editors. Model-agnostic connectors let teams mix OpenAI, Anthropic, and Google backends without lock-in.

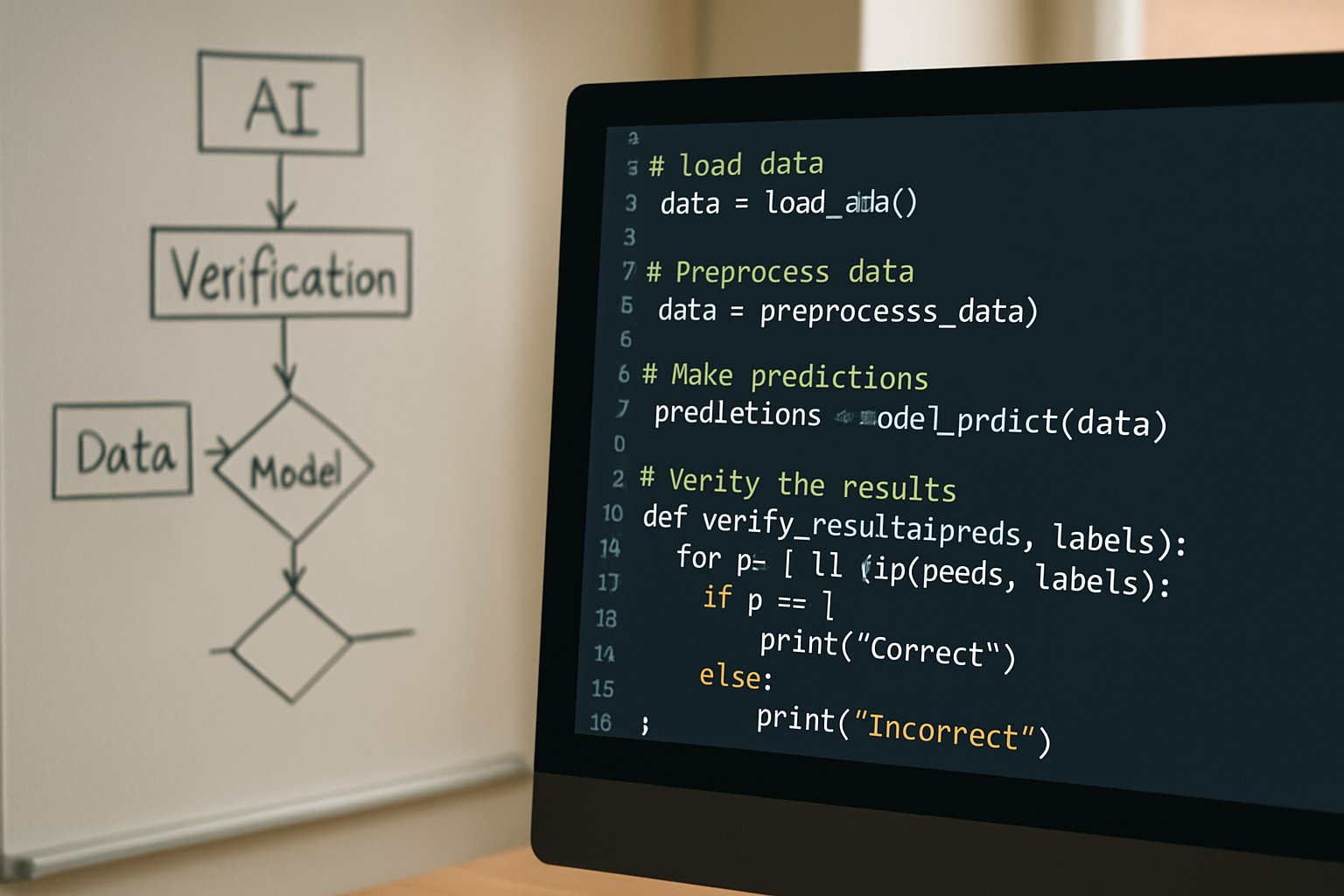

Andrew Filev, Zencoder CEO, states, “Zenflow replaces prompt roulette with an engineering assembly line.” The application visualizes each workflow phase: plan, implement, test, and review. Parallel automation sandboxes isolate tasks, preventing merge conflicts while speeding iteration.
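The phase sequence and sandbox isolation can be illustrated with a minimal Python sketch. It is not Zenflow’s implementation: `Sandbox`, `run_phase`, `run_task`, and `orchestrate` are hypothetical names, and each phase only records what a model or tool call would do. Every task receives its own deep copy of the repository snapshot, which is what keeps parallel tasks from colliding.

```python
import copy
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field

# The four phases named in the article, run in order for every task.
PHASES = ("plan", "implement", "test", "review")


@dataclass
class Sandbox:
    """Hypothetical isolated workspace for one task."""
    task: str
    files: dict = field(default_factory=dict)
    log: list = field(default_factory=list)


def run_phase(box: Sandbox, phase: str) -> None:
    # Placeholder for a model or tool call; the implement phase writes a file
    # so we can observe that edits stay inside this sandbox.
    if phase == "implement":
        box.files[f"{box.task}.py"] = f"# generated for {box.task}\n"
    box.log.append(f"{phase}:{box.task}")


def run_task(task: str, base_files: dict) -> Sandbox:
    # Deep-copy the shared snapshot so no two tasks edit the same objects.
    box = Sandbox(task, copy.deepcopy(base_files))
    for phase in PHASES:
        run_phase(box, phase)
    return box


def orchestrate(tasks: list, base_files: dict) -> list:
    # Tasks run concurrently; results come back in input order.
    with ThreadPoolExecutor() as pool:
        return list(pool.map(lambda t: run_task(t, base_files), tasks))
```

Merging each sandbox’s changes back into the main branch is a separate step that this sketch deliberately omits.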

Zencoder also lists SOC 2 Type II, ISO 27001, and ISO 42001 certifications on its marketing pages. These give enterprises an assurance baseline, though buyers will likely demand deeper audits.

The launch positions AI-assisted coding as a managed factory process rather than a solo chat exercise. This framing shapes how its productivity claims should be evaluated.

Multi-Agent Process Edge

Zenflow’s headline feature is multi-agent orchestration: diverse models critique each other’s output, forming an internal “committee.” The intended result is that blind spots shrink and hallucinations surface earlier.

Verification loops run automatically after each stage. For example, a Claude-based reviewer can check GPT-generated code against the spec. These quality gates mirror the checks teams already expect from continuous integration.
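A quality gate built from several reviewers might look like the following sketch, in which a candidate passes only if every reviewer reports no issues. The two reviewers are hypothetical stand-ins for model-backed critics (for instance, one per vendor): one checks structure and one executes the spec’s examples. The `SPEC` format and all function names are assumptions for illustration, not Zenflow’s API.

```python
# Hypothetical spec: the function to implement plus (args, expected) examples.
SPEC = {"function": "add", "examples": [((2, 3), 5), ((0, 0), 0)]}


def structure_reviewer(spec: dict, candidate: str) -> list:
    # Cheap static check: does the candidate define the required function?
    if f"def {spec['function']}(" not in candidate:
        return [f"missing definition of {spec['function']}"]
    return []


def behavior_reviewer(spec: dict, candidate: str) -> list:
    # Executes the candidate and compares results with the spec's examples.
    # Sketch only: a production system would run untrusted code in a sandbox.
    namespace: dict = {}
    exec(candidate, namespace)
    fn = namespace.get(spec["function"])
    if fn is None:
        return ["function not found after execution"]
    issues = []
    for args, expected in spec["examples"]:
        actual = fn(*args)
        if actual != expected:
            issues.append(f"{spec['function']}{args} returned {actual!r}, expected {expected!r}")
    return issues


REVIEWERS = {"structure": structure_reviewer, "behavior": behavior_reviewer}


def committee_gate(spec: dict, candidate: str, reviewers: dict):
    """Return (passed, issues); the gate passes only when no reviewer objects."""
    issues = []
    for name, review in reviewers.items():
        issues.extend(f"{name}: {msg}" for msg in review(spec, candidate))
    return not issues, issues
```

Requiring unanimous approval is one design choice; a majority vote would tolerate a noisy reviewer at the cost of letting more defects through.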

Spec-driven development anchors the entire workflow: agents generate or consume a formal spec before writing code, and downstream tasks then reference that single source of truth, limiting drift.

- 20% average correctness improvement over standard prompting (vendor claim)

- Nearly 2× faster delivery versus a pre-AI baseline

- No licensing fee at launch; API costs still apply

These figures come from Zencoder’s internal research and have not been independently verified. Nevertheless, they frame the platform’s potential advantage for AI-assisted coding teams.

Process rigor provides theoretical confidence. However, organizations must weigh real-world constraints, detailed in the next section.

Claimed Workflow Productivity Gains

Will Fleury, Head of Engineering, credits orchestrated workflow patterns with doubling release velocity. The reported 20% correctness improvement, meanwhile, would translate into less rework.

Integrated test runners also send failed builds back for rework until they pass. Because verification is automated, human reviewers inspect fewer lines, saving time. Using models from several vendors also reduces exposure to any single provider’s outages.
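The test-and-retry behavior can be approximated by a loop that reruns tests, hands failures to an automated fixer, and escalates to a human once a fixed budget is spent. `run_until_green`, the stubbed fixer, and the `max_auto_fixes` budget are illustrative assumptions, not documented Zenflow settings.

```python
from typing import Callable, List, Tuple


def run_until_green(
    build,
    run_tests: Callable[[object], List[str]],
    fix: Callable[[object, List[str]], object],
    max_auto_fixes: int = 3,
) -> Tuple[object, str, List[int]]:
    """Rerun tests, auto-fix on failure, and escalate once the budget is spent.

    Returns the final build, its status, and the failure count per attempt.
    """
    history: List[int] = []
    for attempt in range(max_auto_fixes + 1):
        failures = run_tests(build)
        history.append(len(failures))
        if not failures:
            return build, "passed", history
        if attempt < max_auto_fixes:
            # Hand the failing test names to an agent (stubbed here) for another try.
            build = fix(build, failures)
    # Automated attempts exhausted: a human reviewer takes over.
    return build, "escalated_to_human", history
```

The budget is the key design lever: a larger value saves reviewer time on easy failures but spends more API calls on hard ones.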

However, parallel model calls inflate token consumption, so API charges rise unless teams carefully tune how many models and review passes each task uses. Operational complexity also grows as agents multiply.
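A back-of-the-envelope calculation shows why parallel review inflates spend: every reviewer rereads the context plus the candidate. The per-token prices below are hypothetical placeholders, not any vendor’s actual rates, and the token counts are illustrative.

```python
# Hypothetical prices in USD per million tokens: (input, output).
PRICES = {"model_a": (3.0, 15.0), "model_b": (2.5, 10.0)}


def call_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    price_in, price_out = PRICES[model]
    return (input_tokens * price_in + output_tokens * price_out) / 1_000_000


def task_cost(calls) -> float:
    # calls: iterable of (model, input_tokens, output_tokens) tuples.
    return sum(call_cost(*call) for call in calls)


# One generation pass: 20k tokens of context in, 4k tokens of code out.
single = [("model_a", 20_000, 4_000)]
# Same pass plus two reviewers that each read context + candidate (24k) and reply with 1k.
committee = single + [("model_a", 24_000, 1_000), ("model_b", 24_000, 1_000)]
```

Under these assumed numbers, `task_cost(single)` is $0.12 and `task_cost(committee)` is $0.277, so the two review passes raise the task’s API cost by roughly 2.3×. Changing how many reviewers run, and on which model, moves that multiplier directly.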

These trade-offs warrant pilot studies before wholesale adoption. Our next section outlines hurdles enterprises should consider.

Enterprise Adoption Hurdles Ahead

Security remains paramount. Although the listed SOC 2 and ISO certifications provide a baseline, data residency rules vary globally. Legal teams must therefore review how prompts and code traverse cloud providers.

Pricing transparency is also limited. The desktop app is free, but orchestration overhead plus multi-model usage can surprise finance leaders. Zencoder has placed cost dashboards on its roadmap.

Competition poses a further risk: incumbents such as GitHub could build orchestration directly into Copilot, reducing incentives to switch. Zenflow must therefore iterate quickly to stay distinct.

These obstacles reflect common adoption friction. Yet objective testing can clarify value, as discussed next.

Practical Testing Next Steps

Engineering leaders can trial Zenflow by running the same tasks through three comparable setups: first, a manual chat-prompting baseline; second, a single model automated in CI; and third, Zenflow’s orchestrated workflow.

Measure the following metrics for each setup:

- Unit test pass rate, reflecting verification quality

- Cycle time from ticket to merge

- API spend per feature shipped

Also record how many human review hours each setup consumed, then share the findings with finance and security stakeholders.
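To keep the three setups comparable, each can log the same per-task record and be summarized identically. The `TaskRecord` fields and the `summarize` helper are assumptions for illustration; the numbers in any real comparison must come from your own pilot.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class TaskRecord:
    """One task attempted under a given setup (hypothetical schema)."""
    tests_passed: int
    tests_total: int
    cycle_hours: float     # ticket opened -> change merged
    api_spend_usd: float
    review_hours: float    # human review time spent on this task
    shipped: bool


def summarize(records: list) -> dict:
    shipped = [r for r in records if r.shipped]
    total_tests = sum(r.tests_total for r in records)
    return {
        "unit_test_pass_rate": sum(r.tests_passed for r in records) / total_tests if total_tests else None,
        # Cycle time only makes sense for work that actually merged.
        "median_cycle_hours": median(r.cycle_hours for r in shipped) if shipped else None,
        # All spend counts, including tasks that never shipped.
        "api_spend_per_feature": sum(r.api_spend_usd for r in records) / len(shipped) if shipped else None,
        "human_review_hours": sum(r.review_hours for r in records),
    }
```

Calling `summarize` separately on the manual, single-model CI, and orchestrated logs yields one comparable row per setup. Charging unshipped work to the shipped features keeps the spend metric honest about wasted attempts.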

Professionals can deepen their expertise with the AI Engineer™ certification, which covers orchestration and automation skills relevant to modern AI-assisted software delivery.

Independent data will validate or refute the vendor’s claims, so teams should share results internally before scaling adoption.

Skills, Certification, Talent Impact

Process orchestration shifts daily responsibilities. Engineers increasingly design specs and tune agents rather than hand-craft every line, while QA roles evolve toward judging and arbitrating the results of automated verification.

Continuous learning also matters. The certification mentioned above supplements practical lab work, teaching repeatable workflow patterns and secure prompt design. Meanwhile, hiring managers increasingly list orchestration literacy in job postings.

This talent emphasis ties back to product value: investing in skill development strengthens organizational readiness for adopting AI-assisted coding.

Talent readiness underpins strategic success, which brings us to the overall outlook.

Conclusion

Zenflow arrives during a pivotal shift toward disciplined AI-assisted coding. Its multi-agent orchestration, spec anchoring, and automated verification promise measurable gains. However, those claims remain vendor-supplied until independent benchmarks emerge. Enterprises should pilot, measure, and weigh overhead costs carefully; those findings will show whether orchestration supplants ad-hoc assistants. Professionals should also explore the AI Engineer™ certification to build the requisite skills and drive informed adoption.

Evaluate Zenflow, gather data, and upskill today to stay competitive in tomorrow’s automated development landscape.