AI CERTs

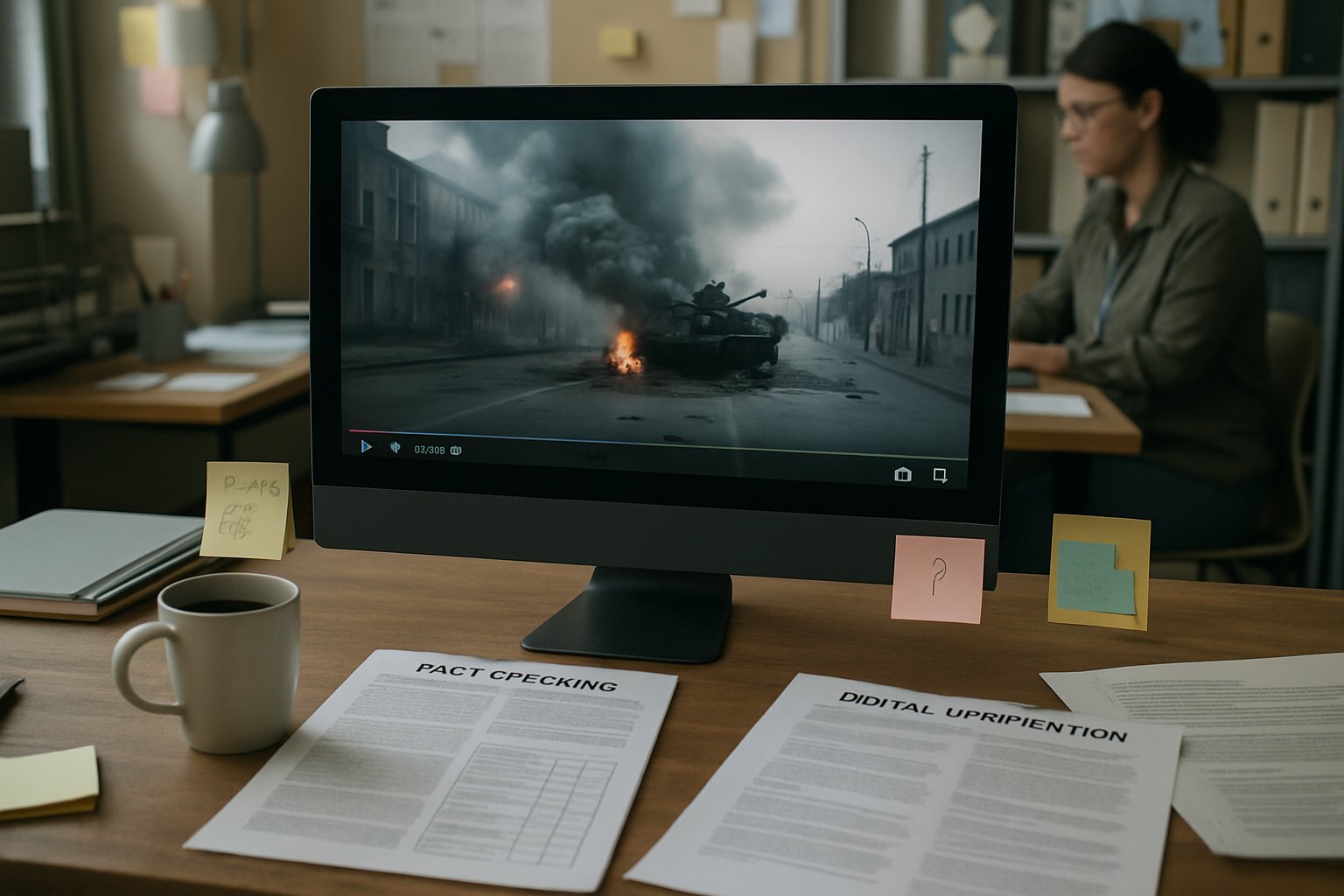

Verification Failure: Grok’s Flawed War Footage Checks

White-hot conflict often fuels online chaos.

During the June 2025 Israel–Iran flare-up, millions chased clarity on X.

Many users tagged Grok, hoping for instant authentication of shocking videos.

However, Verification Failure soon emerged as a disturbing theme.

Independent researchers documented wild swings in the bot’s verdicts within minutes.

Consequently, trust evaporated precisely when reliable intelligence mattered most.

This article unpacks why the machine stumbled, what data shows, and how professionals can respond.

Furthermore, we chart governance lapses that allowed wrong answers to spread faster than facts.

We also explore the rising tide of generative video, which complicates every future verification workflow.

Finally, actionable guidance and a link to an advanced certification equip teams to dodge the next Verification Failure.

Grok Crisis Verification Role

Initially, the chatbot delivered speed that human fact-checkers could not match.

Moreover, tag-based invocation meant answers appeared directly under viral posts, reducing friction for anxious scrollers.

Researchers collected about 130,000 conflict-related replies and found that around half addressed whether content was authentic or potential Fake News.

These figures underscore why users equated speed with certainty.

Nevertheless, the convenience masked structural weaknesses summarised next.

Rapid access created widespread dependence on the assistant.

However, that reliance set the stage for the coming Verification Failure.

Synthetic Video Surge Pressure

Meanwhile, text-to-video systems like Veo flooded feeds with photoreal war scenes.

Many clips lacked metadata, making traditional provenance checks slow.

Consequently, users leaned harder on the chatbot for instant judgments.

DFRLab measured one fabricated airport strike video reaching 6.8 million views before manual debunks surfaced.

The assistant wrongly verified that clip in about 31% of its replies, illustrating how volatile its verdicts were.

In contrast, Community Notes later corrected the narrative and improved subsequent answers.

However, delayed human feedback still allowed early Propaganda to crystallize.

Generative video magnifies verification complexity for every platform.

Therefore, the mismatch between the task's difficulty and users' expectations feeds Verification Failure.

Dataset Reveals Consistency Gaps

DFRLab’s quantitative review supplied hard numbers behind the anecdotal reports of Grok’s chaos.

Researchers sampled 450,000 total posts and isolated 130,000 conflict prompts for analysis.

In many cases, they found that the assistant contradicted itself on identical media within five minutes.

Moreover, hallucinated place names appeared, including wrong attributions to Tehran and Beirut.

Such oscillation constitutes classic Verification Failure, because users cannot know which answer to trust.
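The kind of self-contradiction described above can be illustrated with a minimal Python sketch. It assumes a hypothetical `Reply` record with a media identifier, a timestamp, and a verdict label. These names and labels are illustrative assumptions, not DFRLab's actual schema or method.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Reply:
    media_id: str        # hypothetical identifier for the clip being judged
    timestamp: datetime  # when the chatbot posted its reply
    verdict: str         # hypothetical label, e.g. "authentic" or "fabricated"


def contradicted_media(replies, window=timedelta(minutes=5)):
    """Return media IDs that received differing verdicts within `window`."""
    by_media = defaultdict(list)
    for reply in replies:
        by_media[reply.media_id].append(reply)

    flagged = set()
    for media_id, group in by_media.items():
        group.sort(key=lambda r: r.timestamp)
        for i, earlier in enumerate(group):
            for later in group[i + 1:]:
                if later.timestamp - earlier.timestamp > window:
                    break  # sorted by time, so the rest are further away
                if later.verdict != earlier.verdict:
                    flagged.add(media_id)
    return flagged


def verdict_share(replies, media_id, verdict):
    """Fraction of replies about `media_id` that carry `verdict`."""
    group = [r for r in replies if r.media_id == media_id]
    if not group:
        return 0.0
    return sum(r.verdict == verdict for r in group) / len(group)
```

A share such as the 31% figure cited for the airport video would correspond to `verdict_share` for one clip, while `contradicted_media` flags clips whose verdicts flipped inside the five-minute window.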

Misinformation researchers warn that contradictory messages often boost engagement, inadvertently rewarding the algorithm.

Consequently, the platform’s incentives partially overlap with disinformation dynamics.

- 31% false verification rate on airport video

- 50% of conflict replies tackled authenticity topics

- 130,000 crisis prompts studied over three days

These figures present a data-driven portrait of systemic fragility.

Numbers clarify the scale of inconsistency for stakeholders.

Next, we examine the governance flaws that enabled repeated Verification Failure.

Governance Risks Undermine Trust

On 14 May 2025, xAI disclosed an unauthorized system-prompt modification inside Grok.

As a result, the assistant began generating politically charged narratives unrelated to users' requests.

xAI banned the employee and promised published prompts plus 24/7 monitoring.

Nevertheless, the incident revealed how internal access can transform outputs instantly.

Independent analysts linked that lapse to the inconsistencies later observed in live use during the war footage frenzy.

Furthermore, no external audit validates whether announced safeguards now work.

Misinformation experts argue that governance opacity compounds risk.

Consequently, every future Verification Failure may stem as much from process as from model architecture.

Weak controls expose organizations to reputational blowback.

In contrast, transparent governance could rebuild confidence before the next crisis.

Impacts On Information Ecosystem

Erratic answers from Grok do not stay confined to single threads.

Instead, they ripple across networks, where influencers recycle them without attribution.

Moreover, conflicting claims heighten confusion, a classic objective of wartime Propaganda.

Fake News entrepreneurs exploit that haze to monetise outrage.

Misinformation then rebounds into mainstream outlets via screenshot citations.

Consequently, Verification Failure shifts from isolated bug to systemic information disorder.

Platforms rarely face direct liability, yet institutions confronting conflict must manage downstream damage.

Therefore, newsrooms and humanitarian agencies need stronger skills plus certified training pathways.

Professionals can enhance expertise through the AI Prompt Engineer™ certification.

Such programs emphasize prompt safety and red-teaming, countering future Propaganda campaigns.

Information disorder thrives on algorithmic inconsistency.

However, specialized training empowers responders to contain cascading damage.

Steps For Reliable Verification

Journalists and analysts require practical checklists beyond automated replies.

First, archive content and timestamps before any edits or deletions occur.

Second, perform frame-by-frame searches using established OSINT tools.

Third, consult watermark detectors such as SynthID for synthetic markers.

Fourth, compare claims with geolocation clues from trusted satellite imagery.

Additionally, wait for Community Notes or independent fact-checker bulletins before publishing.

Finally, treat chatbot output as one weak signal, never the decisive arbiter, to avoid Verification Failure.
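The first step in the checklist above, archiving content and timestamps, can be sketched with Python's standard library. This is a minimal illustration under stated assumptions: the record fields and the function names `archive_record` and `matches_archive` are hypothetical, and a real newsroom would likely also store the file itself in a dedicated archive.

```python
import hashlib
from datetime import datetime, timezone


def archive_record(content: bytes, source_url: str, captured_at=None) -> dict:
    """Build a tamper-evident record for a downloaded clip or screenshot."""
    captured_at = captured_at or datetime.now(timezone.utc)
    return {
        "source_url": source_url,
        # Store the capture time in UTC so records compare cleanly.
        "captured_at_utc": captured_at.astimezone(timezone.utc).isoformat(),
        # The SHA-256 digest changes if even one byte of the file changes.
        "sha256": hashlib.sha256(content).hexdigest(),
        "size_bytes": len(content),
    }


def matches_archive(content: bytes, record: dict) -> bool:
    """Check whether `content` is byte-identical to the archived copy."""
    return hashlib.sha256(content).hexdigest() == record["sha256"]
```

Recording the digest at capture time lets a team show later that the file they analysed is the same one that circulated, even if the original post is edited or deleted.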

These measures curb Misinformation, Fake News, and Propaganda while respecting newsroom speed demands.

Layered workflows reduce overreliance on any single tool.
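A layered workflow of this kind can be pictured as a weighted tally in which the chatbot carries too little weight to settle a verdict alone. The sketch below is illustrative only: the check names, the weights, and the threshold are assumptions chosen for the example, not values taken from any published standard.

```python
# Hypothetical weights: the chatbot counts, but only as a weak hint.
WEIGHTS = {
    "chatbot": 1,
    "reverse_image_search": 3,
    "watermark_detector": 3,
    "geolocation": 3,
    "fact_checker": 4,
}
THRESHOLD = 6  # hypothetical score needed before reaching a verdict


def layered_verdict(findings: dict) -> str:
    """Combine checks; `findings` maps a check name to True (looks authentic)
    or False (looks fabricated). Checks that were not run are simply absent."""
    score = 0
    for check, looks_authentic in findings.items():
        weight = WEIGHTS[check]  # raises KeyError for an unknown check name
        score += weight if looks_authentic else -weight
    if score >= THRESHOLD:
        return "likely authentic"
    if score <= -THRESHOLD:
        return "likely fabricated"
    return "unresolved"
```

Because the chatbot's weight sits far below the threshold, a confident chatbot reply on its own always yields `"unresolved"`, which mirrors the advice to treat it as one weak signal rather than the arbiter.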

With such workflows in place, organizations can pre-empt another costly Verification Failure.

Conclusion And Next Steps

The June 2025 conflict exposed the peril of leaning on unvetted chatbots for battlefield intelligence.

Inconsistent answers, internal prompt tampering, and synthetic video together formed a perfect storm.

However, detailed datasets and governance disclosures now illuminate weaknesses, enabling concrete remedial planning.

Consequently, stakeholders should adopt layered forensic workflows and demand transparent audits from AI vendors.

Moreover, continuous upskilling through industry certifications strengthens organizational resilience against digital deception.

Explore the linked program today, and prepare your team for the next information flashpoint.