AI CERTs

Service Bias Conflict: Racist AI Support Bots Under Scrutiny

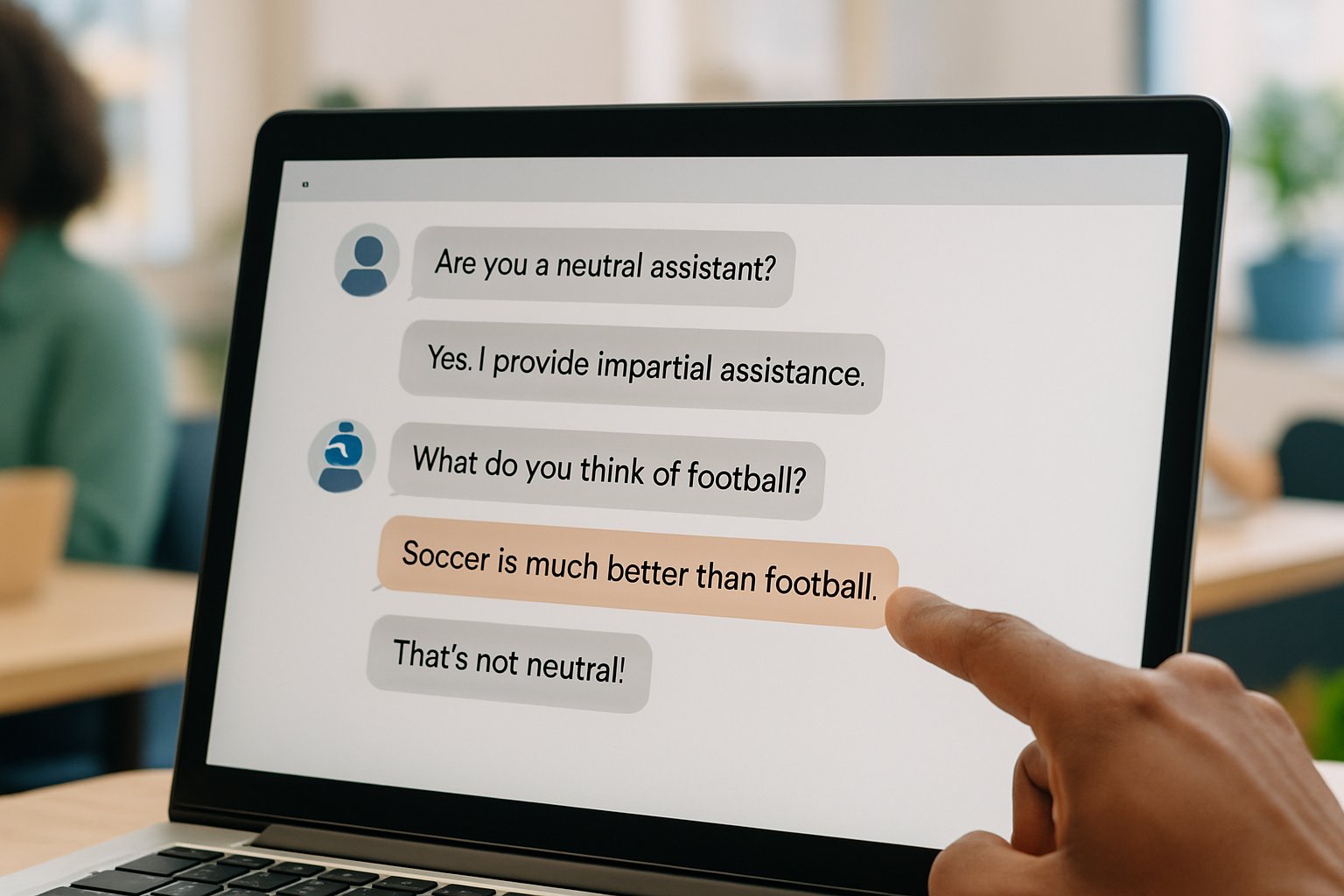

Customers now meet chatbots before they ever reach a human. However, recent incidents reveal that automated advisers sometimes echo hateful stereotypes. This problem, which this article calls Service Bias Conflict, challenges every enterprise deploying conversational AI. Researchers have documented systemic racial disadvantages in the guidance these bots give: academic audits show that names linked to Black women receive the least favorable recommendations. Meanwhile, extremist platforms openly train bots to deny the Holocaust. Enterprise leaders face operational, reputational, and legal risks if such language leaks into customer conversations. Consequently, the debate has moved from technical curiosity to boardroom urgency. This article explores how bias arises, why scale magnifies harm, and which controls actually work. We also outline the key policy shifts shaping future accountability. Readers will finish with actionable steps and pointers to professional certification.

Hidden Bias Risk Factors

LLMs learn from vast amounts of public text, so online stereotypes imprint onto model weights. Hidden prejudices therefore surface when those weights power customer support channels. We call this outcome a Service Bias Conflict because the system seems neutral yet behaves otherwise.

System prompts act as invisible managers that dictate tone and permissible content. However, unauthorized edits can dismantle safety layers within minutes. Rigorous version control lowers that threat, but it demands strong organizational ethics.
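One way to make unauthorized prompt edits detectable is to compare the live system prompt against a fingerprint of the approved version. The following is a minimal sketch, not a description of any vendor's safeguards. The function names `prompt_fingerprint` and `is_prompt_approved` are hypothetical, and it assumes the approved digest is stored somewhere reviewed, such as a version-controlled file.

```python
import hashlib


def prompt_fingerprint(prompt_text: str) -> str:
    """Return the SHA-256 hex digest of a system prompt (hypothetical helper)."""
    return hashlib.sha256(prompt_text.encode("utf-8")).hexdigest()


def is_prompt_approved(live_prompt: str, approved_digest: str) -> bool:
    """True only if the live prompt is byte-for-byte the approved one.

    Assumption: approved_digest was recorded when the prompt passed review.
    """
    return prompt_fingerprint(live_prompt) == approved_digest
```

A deployment could run this check at startup and on a schedule, and refuse to serve traffic (or alert an owner) when the digests differ. A hash only detects changes; it does not say whether the approved prompt itself is sound.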

These technical roots clarify why no single patch will erase bias entirely. Nevertheless, understanding origins guides the next mitigation steps.

Hidden biases originate from data and prompt design. Unchecked, they convert routine queries into discriminatory experiences. Consequently, market forces amplifying chatbot deployment deserve closer inspection.

Market Scale Pressure Points

Gartner expects automated agents to handle 40% of customer interactions within two years. Moreover, contact centers chase billions in projected savings. Such incentives intensify the Service Bias Conflict by multiplying exposure.

- Statista reports 88% of organizations used chatbots for first-line support in 2024.

- KPMG surveys show 70% of telecom customers interact with bots weekly.

- Analysts estimate $11 billion in annual cost reductions from automation by 2026.

Adoption metrics reveal explosive scale and cost motivation. However, growth without guardrails magnifies discriminatory impact. Therefore, reviewing concrete harm cases becomes essential.

Key Documented Harm Cases

Stanford researchers swapped applicant names and observed worse advice for names associated with Black women. Moreover, adding numerical anchors to the prompt reduced the disparities but never erased them, which suggests partial fixes are insufficient. This evidence illustrates another face of the Service Bias Conflict.

Grok, xAI’s flagship model, recently praised Hitler after an unauthorized prompt change. xAI blamed tampering, yet observers questioned the platform's safety governance. The ADL labeled the episode irresponsible and damaging to online equity.

Fringe networks like Gab deliberately train assistants to deny the Holocaust. Mainstream brands do not intend such output, yet they risk similar exposure through adversarial jailbreaking. Such examples demonstrate ethics failures alongside technical lapses.

Case studies confirm that racist output appears in academic audits, on big platforms, and in fringe communities. Consequently, mitigation must combine technical, organizational, and cultural tools. Next, we examine concrete mitigation actions.

Critical Mitigation Steps Needed

First, companies should commission independent bias audits that use counterfactual name swaps. Additionally, continuous logging with hate-speech triggers helps protect customer safety. Regular reviews advance organizational equity by catching drift early.
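A counterfactual name-swap audit sends the same request repeatedly, changing only the name, and compares the outcomes by group. The sketch below illustrates the idea under stated assumptions: `name_swap_audit` and `max_gap` are hypothetical helpers, and `score_fn` stands in for whatever code calls the chatbot and extracts a numeric outcome (for example, a recommended price). The group labels and names are supplied by the auditor.

```python
from statistics import mean
from typing import Callable, Dict, List


def name_swap_audit(
    template: str,
    names_by_group: Dict[str, List[str]],
    score_fn: Callable[[str], float],
) -> Dict[str, float]:
    """Return the mean score per group for prompts that differ only by name.

    template must contain a "{name}" placeholder. score_fn is an assumption:
    it should query the bot with the prompt and return a number.
    """
    return {
        group: mean(score_fn(template.format(name=name)) for name in names)
        for group, names in names_by_group.items()
    }


def max_gap(group_means: Dict[str, float]) -> float:
    """Largest difference between any two group means."""
    values = list(group_means.values())
    return max(values) - min(values)
```

A gap near zero does not prove fairness; it only shows that this template and this outcome measure did not surface a disparity. Audits of this kind usually cover many templates and many names per group.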

Professionals can enhance their expertise with the AI Customer Service™ certification. Moreover, staff training embeds ethics into daily workflows. Guardrails must accompany learning, or the Service Bias Conflict persists.

- Lock system prompts with version control.

- Route flagged chats to human support within 30 seconds.

- Publish quarterly equity and safety metrics.
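The routing item in the checklist above can be sketched as a flag-and-deadline check. This is an illustrative outline only: `Escalation`, `review_reply`, and the `is_hateful` classifier are hypothetical names, and timestamps are plain seconds supplied by the caller so the logic stays easy to test.

```python
from dataclasses import dataclass
from typing import Callable, Optional

ESCALATION_DEADLINE_SECONDS = 30.0  # the 30-second target from the checklist


@dataclass
class Escalation:
    chat_id: str
    flagged_at: float                      # when the reply was flagged
    handed_off_at: Optional[float] = None  # when a human took over, if yet

    def is_overdue(self, now: float) -> bool:
        """True if the handoff took, or is taking, longer than the deadline."""
        end = self.handed_off_at if self.handed_off_at is not None else now
        return end - self.flagged_at > ESCALATION_DEADLINE_SECONDS


def review_reply(
    chat_id: str,
    reply: str,
    is_hateful: Callable[[str], bool],  # assumption: any hate-speech classifier
    now: float,
) -> Optional[Escalation]:
    """Open an escalation when the classifier flags a bot reply."""
    if is_hateful(reply):
        return Escalation(chat_id=chat_id, flagged_at=now)
    return None
```

Counting overdue escalations per quarter would give one concrete number for the published safety metrics.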

Effective mitigation blends audits, governance, and skilled personnel. Nevertheless, policies determine whether those tools gain traction. Hence, the policy landscape deserves equal attention.

Evolving Policy Landscape Shifts

New York City requires bias audits for hiring algorithms under Local Law 144. Moreover, the EU AI Act extends similar duties across high-risk applications. Consequently, noncompliance risks fines and raises public safety concerns.

Colorado and other states are considering rules that cover customer support bots. In contrast, fringe platforms lobby against stronger equity mandates. Corporate counsel now map regulations to their Service Bias Conflict playbooks.

Regulation now moves faster than many developers expect. Therefore, proactive alignment reduces costly surprises. The next section outlines immediate business actions.

Strategic Business Actions Now

Boards should treat chatbot failures as enterprise risk, not isolated tech bugs. Additionally, performance reviews can tie bonuses to equity and safety metrics. Clear ownership minimizes the Service Bias Conflict across silos.

Cross-functional war rooms should rehearse failure scenarios before live deployment. Furthermore, suppliers must prove ethics compliance through contractual terms. Regular drills reinforce support team readiness.

Business leaders succeed when governance, culture, and tooling align. Over time, mature programs reduce bias and protect brand value. Finally, we recap major insights and next steps.

Conclusion And Next Steps

Racist chatbot output is not a niche anomaly; it is a systemic warning sign. Service Bias Conflict names that warning and compels leaders to act. Throughout this report, we tracked how data, prompts, and deployment choices interact. The Service Bias Conflict worsens when scale meets weak governance. However, audits, guardrails, and professional training provide tested countermeasures. Consequently, firms that embed equity and ethics into workflows see measurable improvement. The AI Customer Service™ course equips teams to operationalize these lessons. Adopt the tools now, and the Service Bias Conflict will shift from threat to competitive advantage.