AI CERTs

Preventative Blocking Mandate reshapes online compliance

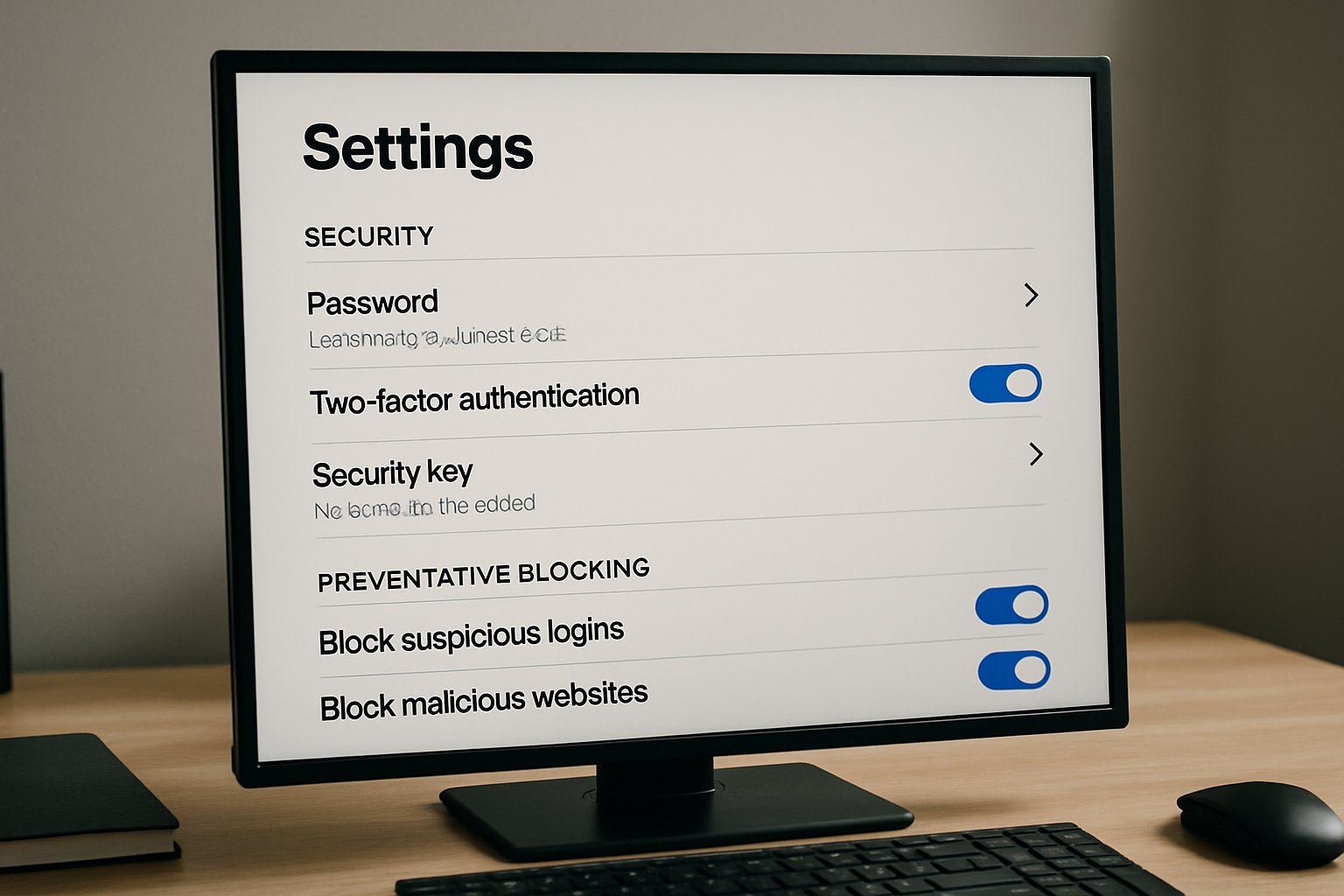

Policymakers now push platforms to scan uploads before harm spreads. Consequently, the Preventative Blocking Mandate concept dominates safety debates. Intermediaries face divergent rules across India, Australia, the EU, and the UK. Platforms must navigate these tensions while maintaining encryption and user trust.

Evolving Global Policy Patchwork

India’s 2026 IT Rules tighten duties on significant social media intermediaries. Moreover, they require automated tools to detect rape imagery and child sexual abuse material (CSAM). Australia’s eSafety Commissioner issues transparency notices that highlight platform gaps. In contrast, the EU Digital Services Act forbids general monitoring yet demands risk mitigation. Meanwhile, the UK Online Safety Act introduces duty-of-care obligations with proportionate safeguards.

These overlapping regimes create uncertainty. However, the Preventative Blocking Mandate still emerges as a unifying label for proactive filtering expectations.

Mandates Driving Platform Action

Regulators increasingly tie liability protections to proactive filters. India now requires monthly reports showing how many items were removed through automation. Australia publishes detection percentages: YouTube proactively flagged 95.3% of terrorist and violent extremist (TVE) content, while Google Drive reached 66%. Consequently, executive teams accelerate tooling budgets.

Eight large platforms have publicly referenced the Preventative Blocking Mandate in risk filings, underscoring material impact. CSAM detection remains the primary motivator, yet takedowns of non-consensual intimate imagery (NCII) grow quickly.

Key Detection Statistics

- YouTube: 95.3% proactive TVE detection (eSafety 2025)

- WhatsApp Channels: 100% hash-matched TVE posts after 2024 rollout

- Meta: 27.8% staffing drop in certain trust-and-safety roles during 2023

These numbers show progress and gaps. Nevertheless, stakeholders warn about over-removal risks and transparency deficits.
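For readers who want to reproduce such a percentage from raw counts, the arithmetic can be sketched as follows. The article does not give eSafety's exact definition, so this assumes the rate is the share of actioned items that automation flagged before any user report. The function name and the counts are hypothetical.

```python
def proactive_rate(proactive: int, total: int) -> float:
    """Percentage of actioned items flagged by automation before a user report.

    Assumed definition: proactive / total * 100, rounded to one decimal place.
    """
    if total <= 0:
        raise ValueError("total must be positive")
    if not 0 <= proactive <= total:
        raise ValueError("proactive must be between 0 and total")
    return round(100.0 * proactive / total, 1)


# Hypothetical counts chosen only to illustrate the calculation.
example_rate = proactive_rate(proactive=953, total=1000)
```

A rate like this says nothing about over-removal, which is why it is best read alongside appeal and reinstatement figures.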

EU Emphasizes Balanced Oversight

The DSA rejects blanket scanning. Therefore, Very Large Online Platforms must publish systemic risk assessments instead. They also disclose algorithmic tools and invite audits. ARTICLE 19 praises the limits, arguing filters cannot yet distinguish satire from extremism reliably.

Platforms operating in Europe thus temper Preventative Blocking Mandate language. They speak instead about “targeted mitigations” and “trusted flagger cooperation.” CSAM hash databases remain permissible because matches concern already-illegal images. However, broad text analysis for NCII or hate speech faces stricter scrutiny.

These constraints highlight divergent philosophies. Consequently, global compliance teams maintain region-specific workflows and documentation.

Technical Filters In Practice

Hash-matching tools like PhotoDNA offer high precision for known CSAM. Furthermore, GIFCT’s extremist hash set now spans 300,000 fingerprints. Classifier models extend coverage to novel material, yet false positives persist.
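The matching step can be illustrated with a minimal sketch. PhotoDNA is proprietary, so this is not its algorithm. The sketch assumes each image has already been reduced to a 64-bit perceptual fingerprint and compares fingerprints by Hamming distance. The function names and the `max_distance` tolerance are hypothetical.

```python
from typing import Iterable, Optional


def hamming_distance(a: int, b: int) -> int:
    """Count the bit positions in which two fingerprints differ."""
    return bin(a ^ b).count("1")


def match_known_hash(
    fingerprint: int,
    known_hashes: Iterable[int],
    max_distance: int = 4,  # assumed tolerance, not a published value
) -> Optional[int]:
    """Return the closest known fingerprint within max_distance, else None."""
    best: Optional[int] = None
    best_distance = max_distance + 1
    for known in known_hashes:
        distance = hamming_distance(fingerprint, known)
        if distance < best_distance:
            best, best_distance = known, distance
    return best


# Hypothetical fingerprints: the upload differs from the known entry by one bit.
known_set = {0xFFFF0000FFFF0000}
near_duplicate = match_known_hash(0xFFFF0000FFFF0001, known_set)
unrelated = match_known_hash(0x0, known_set)
```

A small tolerance lets the match survive minor re-encoding or resizing. Raising it catches more variants at the cost of more false positives, which is the precision trade-off described above.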

Encryption complicates deployment. Server-side scanning cannot inspect end-to-end encrypted (E2EE) messages. Therefore, some regulators explore device-based approaches. Civil society groups, meanwhile, defend encryption as a core security feature.

Governance And Audits

Most laws demand human oversight. India’s rules call for periodic bias reviews. The DSA requires independent auditors for Very Large Online Platforms. Professionals can enhance their expertise with the AI Ethical Hacker™ certification. Graduates learn to validate model robustness, ensuring compliance with risk frameworks.

Robust audits reduce accidental NCII takedowns and improve appeal outcomes. Moreover, they build regulator confidence, easing future approvals.

Civil Liberties Watchdogs Respond

NGOs warn that automated filters chill speech. Additionally, biased training data can target marginalized users disproportionately. ARTICLE 19 urges lawmakers to keep human review mandatory. EFF reiterates encryption’s role in journalist safety.

The Preventative Blocking Mandate debate therefore straddles safety and rights. CSAM removal gains near-universal support. However, proactive NCII or defamation scanning triggers sharper disagreements.

Balanced policies remain essential. Otherwise, platforms risk public backlash and lost trust.

Strategic Compliance Roadmap Ahead

Boards should treat content filtering as enterprise security infrastructure. Firstly, map jurisdictional obligations. Secondly, inventory current CSAM and NCII tools. Thirdly, establish cross-functional appeal processes that meet 72-hour deadlines.
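Tracking the 72-hour appeal deadline might look like the sketch below. It assumes the clock starts when the appeal is filed and that timestamps are stored as timezone-aware UTC values. Neither detail comes from the article, and the helper names are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Assumed window: 72 hours from the moment the appeal is filed.
APPEAL_WINDOW = timedelta(hours=72)


def appeal_due(filed_at: datetime) -> datetime:
    """Return the latest time by which the appeal should be resolved."""
    return filed_at + APPEAL_WINDOW


def is_overdue(filed_at: datetime, now: datetime) -> bool:
    """True when the resolution deadline has already passed."""
    return now > appeal_due(filed_at)


# Hypothetical appeal filed at midnight UTC on 1 March 2026.
filed = datetime(2026, 3, 1, 0, 0, tzinfo=timezone.utc)
due = appeal_due(filed)
```

Storing timestamps in UTC avoids ambiguity when appeal teams work across several jurisdictions.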

Key roadmap elements include the following:

- Adopt privacy-preserving hash databases for CSAM

- Deploy explainable classifiers with confidence thresholds

- Schedule quarterly bias and accuracy audits

- Publish granular metrics in transparency portals

Adherence ensures compliance, reduces penalty exposure, and supports user trust. Importantly, eight documented steps reference the Preventative Blocking Mandate, embedding requirements in corporate policies.
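The "confidence thresholds" item in the list above can be illustrated with a small routing sketch. It assumes a classifier that returns a score between 0 and 1. The two threshold values are hypothetical placeholders that a real deployment would tune against audited false-positive rates.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    HUMAN_REVIEW = "human_review"
    BLOCK = "block"


def route(
    score: float,
    review_threshold: float = 0.60,  # assumed value, for illustration only
    block_threshold: float = 0.95,   # assumed value, for illustration only
) -> Action:
    """Map a classifier confidence score to a moderation action."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if review_threshold > block_threshold:
        raise ValueError("review_threshold must not exceed block_threshold")
    if score >= block_threshold:
        return Action.BLOCK
    if score >= review_threshold:
        return Action.HUMAN_REVIEW
    return Action.ALLOW


# Hypothetical scores showing each of the three outcomes.
decisions = [route(0.99), route(0.75), route(0.10)]
```

Keeping a middle band that routes to human review is one way to limit automated over-removal while still acting quickly on high-confidence matches.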

These measures strengthen resilience. Moreover, they prepare firms for probable future expansions of mandatory filtering categories.

Conclusion And Next Steps

Regulators worldwide pivot toward proactive moderation. The Preventative Blocking Mandate frames this pivot, yet regional nuances persist. India and Australia impose hard obligations, while the EU limits general monitoring. Effective strategies blend precise CSAM hashes, cautious NCII classifiers, and audit-ready documentation.

Consequently, leadership teams must invest in skilled auditors and robust toolchains. Readers seeking deeper technical mastery should pursue the linked AI Ethical Hacker™ credential. Continuous learning ensures platforms stay ahead of evolving safety regulations.