AI CERTS


Perplexity’s Model Council Redefines Multi-Model Validation

Perplexity has launched Model Council, a feature that sends one query to three AI models at once and asks a separate chair model to reconcile their answers. The launch sits inside Perplexity Max, a flat subscription tier costing $200 per month, so only premium subscribers can test the system today. Analysts praise the idea of integrated multi-model queries, yet they question how the chair model handles conflicts. Meanwhile, mobile and desktop-app support has not yet arrived. This article explores how the system operates, why it matters, and which economic, technical, and compliance gaps remain.

Triangulation Rationale Behind Adoption

Perplexity executives argue that cross-validation reduces blind spots, and that agreement among independent models boosts user confidence. Nevertheless, shared training data can mislead all three engines at once, so consensus is never proof. The company says, “Model Council helps surface blind spots any single model might have.” Power users already run such comparisons by hand; Perplexity now productizes that habit. Each query triggers three model runs in parallel, so response time is set by the slowest model plus the synthesis step rather than by the sum of all three. Latency still exceeds a single-model answer, yet the payoff is richer context.
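To make the latency point concrete, here is a minimal sketch of a parallel fan-out, assuming three stub models that simply sleep for a fixed delay. The model names, delays, and the `ask_model` helper are hypothetical stand-ins, not Perplexity's implementation. Because the calls run concurrently under `asyncio.gather`, wall-clock time tracks the slowest stub rather than the sum of all three; a real chair step would add its own time on top.

```python
import asyncio
import time

# Hypothetical per-model delays in seconds; real latencies vary per query.
STUB_DELAYS = {"model_a": 0.05, "model_b": 0.10, "model_c": 0.15}


async def ask_model(name: str, prompt: str) -> str:
    """Stand-in for a model API call: waits, then returns a canned answer."""
    await asyncio.sleep(STUB_DELAYS[name])
    return f"{name} answer to: {prompt}"


async def fan_out(prompt: str) -> tuple[list[str], float]:
    """Send one prompt to every model concurrently and time the whole batch."""
    start = time.perf_counter()
    # gather() runs the coroutines concurrently and returns results in input order.
    answers = await asyncio.gather(*(ask_model(n, prompt) for n in STUB_DELAYS))
    elapsed = time.perf_counter() - start
    return list(answers), elapsed


if __name__ == "__main__":
    answers, elapsed = asyncio.run(fan_out("Is the claim supported?"))
    for a in answers:
        print(a)
    # Expect roughly 0.15 s (the slowest stub), not 0.30 s (the sum).
    print(f"wall time: {elapsed:.2f} s")
```

Running the calls sequentially instead would push wall time toward the 0.30 s sum, which is why parallel execution keeps the latency cost of triangulation bounded.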

These benefits appeal to fact-checkers, investors, and policy analysts. However, success depends on the synthesizer faithfully representing disagreements. Two-line summary: Triangulation offers error signals and efficiency. However, synthesizer fidelity determines real-world trust. Next, we examine the interaction flow.

Workflow And UI Walkthrough

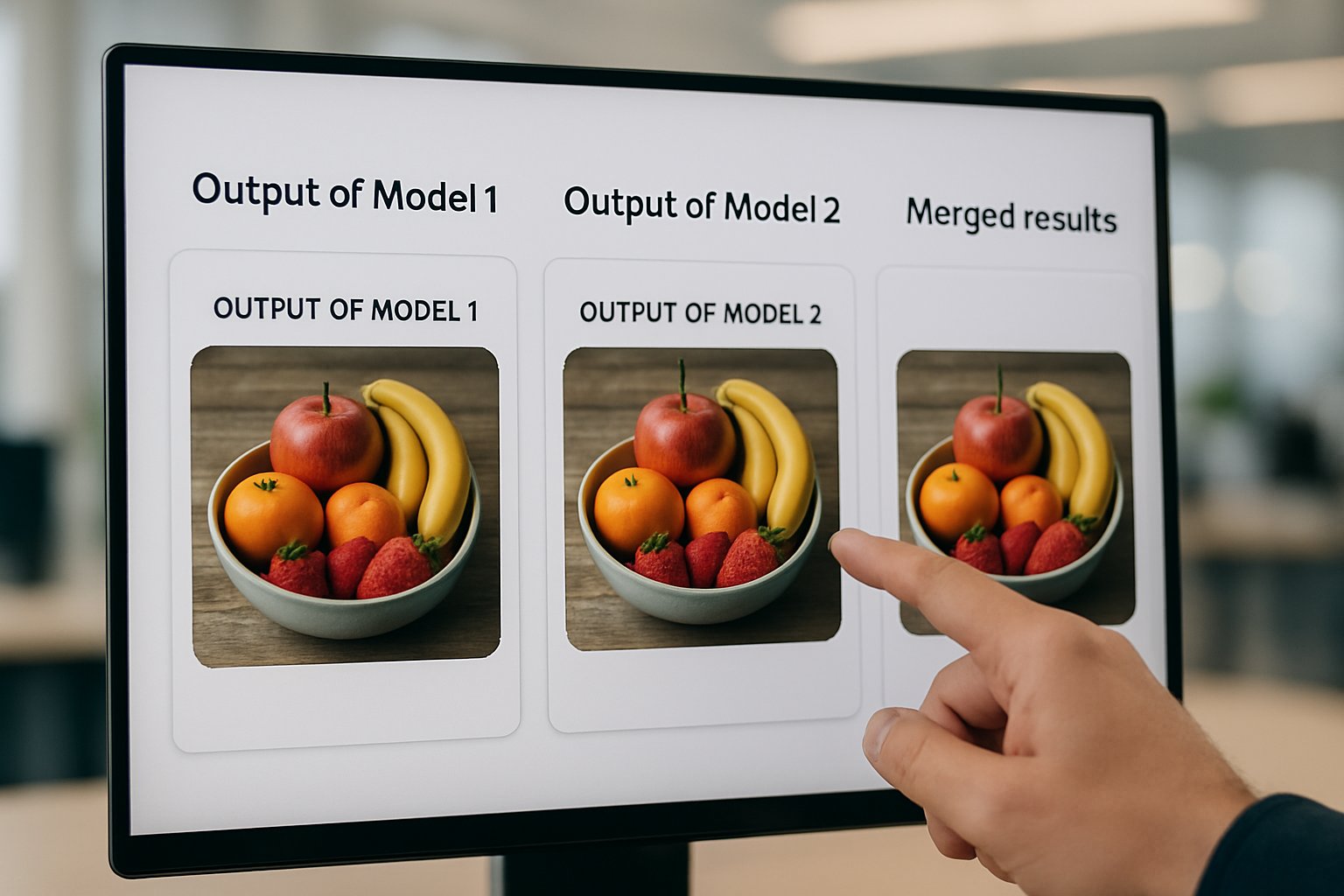

Users choose “Model Council” from the web interface, then pick three models, such as Claude Opus 4.6, GPT-5.2, and Gemini 3 Pro. Each model has a “Thinking” toggle for deeper reasoning; enabling it consumes more tokens and time. After generation, a chair model inspects the outputs, highlights consensus sentences, and tags unique points.
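Perplexity has not disclosed which model acts as chair or how it compares outputs, and the real chair is itself a language model. As a rough illustration of the bookkeeping involved, the sketch below swaps in naive exact matching: it splits each answer into normalized sentences, then labels a sentence "consensus" when at least two models produce it and "unique" when only one does. The function names, the sample outputs, and the two-model threshold are assumptions for illustration only.

```python
import re
from collections import defaultdict


def sentences(text: str) -> set[str]:
    """Split text on sentence-ending punctuation and normalize case/whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    normalized = (" ".join(p.lower().rstrip(".!?").split()) for p in parts)
    return {s for s in normalized if s}  # drop empty fragments


def tag_points(outputs: dict[str, str], min_agree: int = 2) -> dict[str, list]:
    """Group normalized sentences by which models produced them."""
    sources: dict[str, set[str]] = defaultdict(set)
    for model, text in outputs.items():
        for s in sentences(text):
            sources[s].add(model)
    # Consensus: produced by at least `min_agree` models.
    consensus = sorted(s for s, m in sources.items() if len(m) >= min_agree)
    # Unique: produced by exactly one model; keep which model said it.
    unique = sorted((s, next(iter(m))) for s, m in sources.items() if len(m) == 1)
    return {"consensus": consensus, "unique": unique}


outputs = {
    "model_a": "The bill passed in March. Turnout was low.",
    "model_b": "The bill passed in March. Critics called it rushed.",
    "model_c": "The bill passed in March. Turnout was low.",
}
print(tag_points(outputs))
```

Exact matching misses paraphrases, which is precisely why a production chair would rely on a model; the sketch only shows the shape of the consensus/unique split the UI surfaces.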

The interface exposes expandable panels for every engine. Moreover, color cues mark agreement zones, while warning icons indicate conflicts. Therefore, researchers can inspect underlying text before trusting the summary. The system tracks total token allocation across the three runs, giving users cost awareness.
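Because each query spawns three model runs plus a synthesis pass, the token total the interface reports is the sum of several separate bills. A minimal tally might look like the sketch below. The token counts and the per-1,000-token price are hypothetical (Perplexity publishes neither for Model Council), and the assumption that the chair's input is the prompt plus all three answers is illustrative, not documented.

```python
from dataclasses import dataclass


@dataclass
class Run:
    """Token usage for one model call; all numbers here are hypothetical."""
    model: str
    input_tokens: int
    output_tokens: int
    price_per_1k: float  # assumed blended price in dollars per 1,000 tokens

    @property
    def tokens(self) -> int:
        return self.input_tokens + self.output_tokens

    @property
    def cost(self) -> float:
        return self.tokens / 1000 * self.price_per_1k


def tally(runs: list[Run]) -> tuple[int, float]:
    """Return total tokens and total cost across every run for one query."""
    return sum(r.tokens for r in runs), sum(r.cost for r in runs)


runs = [
    Run("model_a", 800, 1200, 0.01),
    Run("model_b", 800, 1500, 0.01),
    Run("model_c", 800, 1000, 0.01),
    # Assumed chair input: the 800-token prompt plus all three answers
    # (800 + 1200 + 1500 + 1000 = 4500 tokens).
    Run("chair", 4500, 600, 0.01),
]
total_tokens, total_cost = tally(runs)
print(total_tokens, round(total_cost, 4))
```

Under these assumptions the chair is the single most expensive call, because it reads everything the other three models wrote.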

- Models per query: 3

- Launch channel: Web only

- Chair model identity: undisclosed

- Example models: GPT-5.2, Claude Opus 4.6, Gemini 3 Pro

Section takeaway: The UI encourages quick comparison and granular drill-down. Consequently, it aims to balance speed with transparency. The pricing mechanics follow next.

Pricing And Access Limits

Model Council ships within Perplexity Max, so subscribers pay one flat subscription rather than per-query fees. The plan costs $200 monthly or $2,000 yearly. At launch, Perplexity supports Model Council on the web only, with mobile support pending.

Analysts note that parallel execution multiplies compute bills. Some therefore question whether the unit economics remain sustainable if heavy users fire thousands of multi-model queries. Perplexity, for its part, says the premium price covers expected load. Two-line summary: Pricing simplifies budgeting but concentrates cost risk on Perplexity. That risk may, in turn, shape future feature limits. Economic debates deepen in the next section.

Economic Questions Emerging Now

Running three frontier models and a chair creates four inference bills per query, yet users pay only one flat subscription. Consequently, observers debate the long-term unit economics. TechCrunch quotes a Perplexity executive: “Multi-model is the future.” Nevertheless, unit costs grow as model sizes climb.

Token usage per query can also triple compared with a single-model answer, before counting the chair's pass. Perplexity will therefore likely need internal token allocation policies that throttle abusive workloads, and some analysts expect usage caps or burst limits. Partnerships with model providers likely involve variable wholesale pricing as well. Two-line summary: Financial sustainability hinges on usage patterns and provider deals. Consequently, economic pressure could shape future access rules. Next, we explore risk factors.
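Perplexity has not said how, or whether, it throttles Model Council usage. One common way to implement the burst limits analysts anticipate is a token bucket: each user's bucket refills at a steady rate and each query spends some of its capacity, so short bursts pass but sustained heavy use is slowed. The sketch below is a generic token bucket with hypothetical capacity, refill rate, and per-query cost; it charges one bucket unit per LLM token a query consumes, which is an illustrative choice rather than a known Perplexity policy.

```python
import time
from typing import Callable


class TokenBucket:
    """Generic token-bucket limiter; capacity and refill rate are hypothetical.

    One bucket unit is charged per LLM token a query consumes, so a
    three-model query with a chair pass drains the bucket faster than a
    single-model answer.
    """

    def __init__(self, capacity: float, refill_per_sec: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self.clock = clock          # injectable so tests can fake time
        self.level = capacity       # start full
        self.last = clock()

    def _refill(self) -> None:
        """Add credit for elapsed time, never exceeding capacity."""
        now = self.clock()
        self.level = min(self.capacity,
                         self.level + (now - self.last) * self.refill_per_sec)
        self.last = now

    def try_spend(self, amount: float) -> bool:
        """Deduct `amount` if available; otherwise refuse without deducting."""
        self._refill()
        if amount <= self.level:
            self.level -= amount
            return True
        return False


# Usage with a fake clock so the behavior is deterministic.
fake_now = [0.0]
bucket = TokenBucket(capacity=30_000, refill_per_sec=50,
                     clock=lambda: fake_now[0])
query_tokens = 11_200                  # hypothetical cost of one council query
print(bucket.try_spend(query_tokens))  # True: bucket held 30,000
print(bucket.try_spend(query_tokens))  # True: 18,800 left before this call
print(bucket.try_spend(query_tokens))  # False: only 7,600 left
fake_now[0] = 100.0                    # 100 s later, 5,000 credits refilled
print(bucket.try_spend(query_tokens))  # True: 12,600 available
```

A hard monthly cap is the simpler alternative; a bucket is gentler because it only slows users who sustain heavy load.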

Risk Factors Analysts Flag

Experts highlight synthesizer bias. Although three models speak, one chair decides the story. Consequently, misrepresentation remains a threat. Moreover, agreement across models may still echo shared misinformation. Therefore, consensus can lull users into false certainty.

Privacy is another risk. Queries travel to multiple vendors, widening the data footprint. Perplexity has not published a granular data-flow map, so enterprises handling regulated content may hesitate, although Perplexity’s help pages promise standard security controls. Two-line summary: Bias and privacy require stronger disclosures. However, transparent governance could ease adoption. Compliance implications follow.

Enterprise And Compliance Outlook

Perplexity courts enterprise deals, yet Model Council lives only in consumer Max. Consequently, legal teams lack documentation on data routing, retention, and provider jurisdictions. Furthermore, regulated industries demand precise token allocation logs for audits.

Enterprise buyers also want assurances that they can disable particular vendors, while the public UI offers only a limited model list. Such fine-grained controls may arrive in future Enterprise Max tiers. Professionals can enhance governance expertise with the AI for Everyone™ certification.

Section takeaway: Compliance gaps slow enterprise uptake. Nevertheless, certification and clearer documentation could accelerate trust. Practical tips conclude the discussion.

Practical Takeaways For Teams

Teams evaluating Model Council can start with low-risk content. Moreover, they should compare the synthesized view against raw outputs. Consequently, hidden disagreements become visible. Additionally, monitor unit economics by tracking internal time saved versus subscription cost. Meanwhile, implement policies on sensitive prompts, since multiple vendors receive data.
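The time-saved comparison reduces to simple arithmetic. The sketch below uses the published $200 monthly and $2,000 yearly prices and a hypothetical loaded hourly cost for an analyst's time (replace it with your own figure) to compute how many hours Model Council must save each month to pay for one subscription.

```python
MONTHLY_PRICE = 200.0    # Perplexity Max, billed monthly (from the article)
YEARLY_PRICE = 2_000.0   # Perplexity Max, billed yearly (from the article)


def break_even_hours(hourly_cost: float, yearly: bool = False) -> float:
    """Hours of work per month the tool must save to cover one subscription."""
    monthly_cost = YEARLY_PRICE / 12 if yearly else MONTHLY_PRICE
    return monthly_cost / hourly_cost


# Hypothetical loaded cost of one analyst hour, in dollars.
rate = 80.0
print(f"monthly plan: {break_even_hours(rate):.2f} h/month")
print(f"yearly plan:  {break_even_hours(rate, yearly=True):.2f} h/month")
```

The yearly plan works out to about $166.67 per month, roughly 17% less than paying monthly ($2,400 over twelve months), which lowers the bar slightly for teams that commit up front.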

- Define use cases needing cross-validation.

- Set internal token allocation budgets.

- Audit chair summaries for bias weekly.

- Negotiate enterprise terms before scaling.
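Part of the weekly audit can be automated. Assuming you can copy each model's raw answer and the chair's summary out as plain text (the article does not describe an export feature), the sketch below flags sentences that only one model produced and that the summary never mentions: a crude signal that a minority view may have been dropped. Matching is naive exact comparison after normalization, so paraphrased coverage will still raise flags; treat every flag as a prompt for human review, not a verdict. The function names and sample text are hypothetical.

```python
import re


def normalize(text: str) -> list[str]:
    """Split into sentences and normalize case, whitespace, and end punctuation."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    cleaned = (" ".join(p.lower().rstrip(".!?").split()) for p in parts)
    return [s for s in cleaned if s]


def dropped_minority_points(raw: dict[str, str],
                            summary: str) -> list[tuple[str, str]]:
    """Return (model, sentence) pairs unique to one model and absent from the summary."""
    per_model = {m: set(normalize(t)) for m, t in raw.items()}
    summary_text = " ".join(normalize(summary))
    flags = []
    for model, sents in per_model.items():
        # Everything the other models said; empty if there is only one model.
        others = set().union(*(s for m, s in per_model.items() if m != model))
        for sent in sorted(sents - others):
            if sent not in summary_text:  # substring check against the summary
                flags.append((model, sent))
    return flags


raw = {
    "model_a": "Rates will fall in June. Inflation is cooling.",
    "model_b": "Rates will fall in June. Inflation is cooling.",
    "model_c": "Rates will fall in June. Wage growth may delay cuts.",
}
summary = "All three models expect rates will fall in June. Inflation is cooling."
print(dropped_minority_points(raw, summary))
```

Logging these flags week over week gives a simple trend line for how often the chair omits dissenting points.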

Two-line summary: Disciplined trials expose strengths and weaknesses. Consequently, structured audits sustain long-term value. A concise recap follows next.

Conclusion

Perplexity’s Model Council turns manual comparison into one streamlined workflow. Consequently, professionals gain faster triangulation across frontier models. However, synthesizer bias, privacy gaps, and uncertain unit economics remain open questions. Moreover, the single flat subscription masks complex cost dynamics tied to heavy multi-model queries and escalating token allocation.

Nevertheless, early adopters report efficiency gains that justify the premium fee. Readers should therefore pilot the tool on research tasks, starting with low-risk content, and pursue governance skills via relevant certifications. Act now and explore multi-model verification before competitors catch up.