AI CERTS


MIT Uncovers LLM Structural Flaw

MIT researchers have uncovered an LLM Structural Flaw: models learn spurious links between Sentence Patterns and specific domains, which undermines Reasoning Reliability. Leaders deploying AI in finance, health, or law should therefore reassess their risk exposure now.

Study Overview

The team posted “Learning the Wrong Lessons” on arXiv in late September 2025. The paper then earned a NeurIPS spotlight and was featured by MIT News on 26 November. The authors measured the LLM Structural Flaw across open and closed models, including GPT-4o and OLMo-2.

Controlled template experiments revealed large performance drops once prompts were perturbed. For example, GPT-4o-mini fell from 1.00 to 0.44 after minor synonym substitutions, even though the meaning stayed the same for a human reader. Such drops suggest the failure runs deeper than memorization.

Such evidence places the issue firmly on the industry agenda. However, understanding why patterns dominate demands deeper linguistic insight.

Sentence Patterns Skew Answers

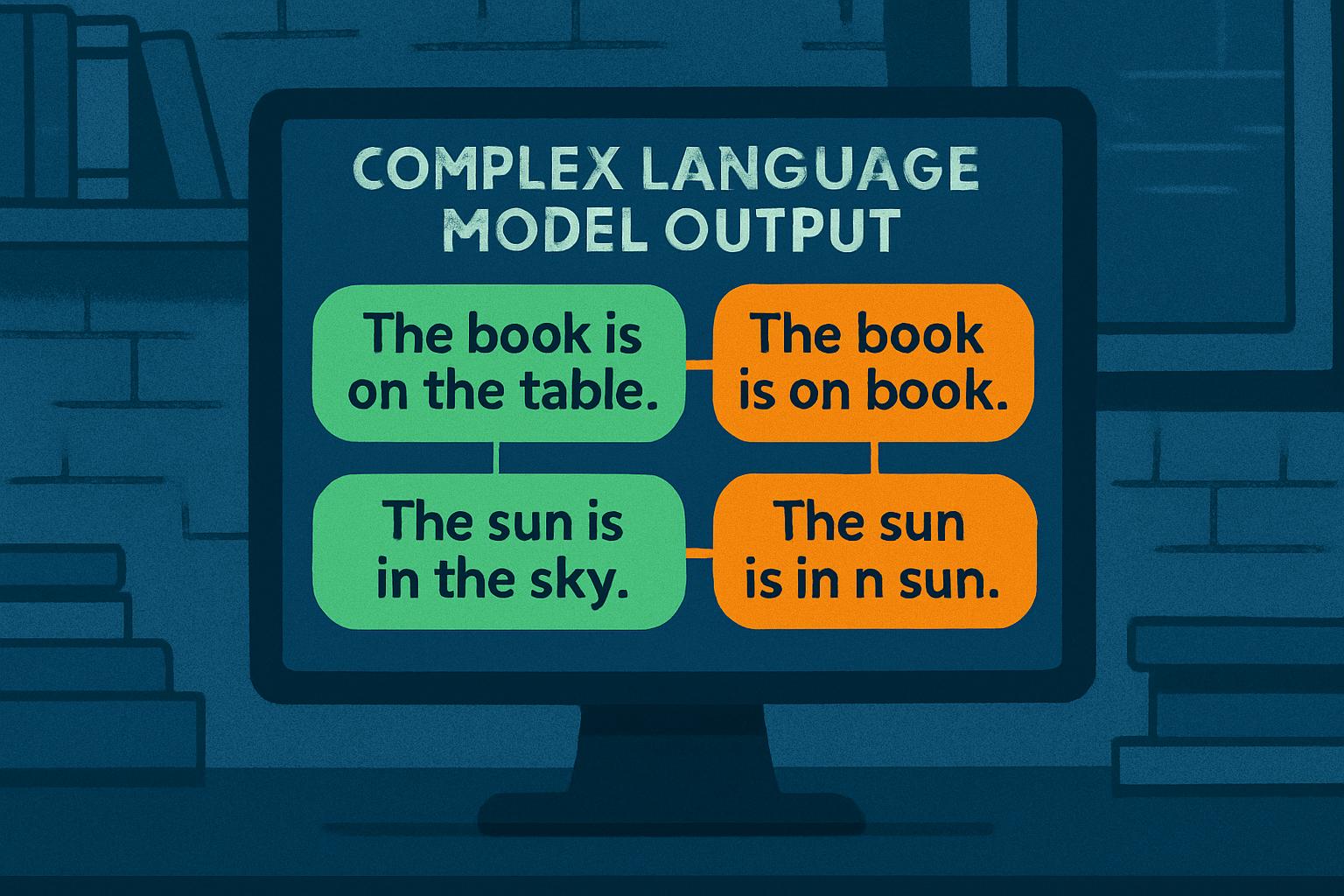

The researchers crafted templates that mapped a fixed part-of-speech sequence to a single domain. Every model examined relied heavily on these Sentence Patterns: models kept giving domain answers even when the entities were swapped or the wording became nonsense.
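The shortcut can be caricatured in a few lines of Python. The sketch below is a hypothetical toy, not the researchers' code: a hand-written part-of-speech lexicon stands in for a real tagger, and a template-to-domain table stands in for what a model might absorb if every geography question in its training data shared one shape. The example prompts are illustrative.

```python
# Toy illustration of a syntactic-template shortcut (hypothetical, not the paper's code).

# Hand-written part-of-speech lexicon; a real pipeline would use a trained tagger.
LEXICON = {
    "where": "ADV", "quickly": "ADV",
    "is": "VERB", "sit": "VERB", "located": "VERB", "clouded": "VERB",
    "paris": "PROPN", "tokyo": "PROPN",
}

# Hypothetical template -> domain table "learned" from training data in which
# every geography question happened to share one syntactic shape.
TEMPLATE_TO_DOMAIN = {
    ("ADV", "VERB", "PROPN", "VERB"): "geography",
}


def pos_template(prompt: str) -> tuple:
    """Map each word to a POS tag, ignoring case and trailing punctuation."""
    words = prompt.lower().strip("?!. ").split()
    return tuple(LEXICON.get(w, "UNK") for w in words)


def shortcut_domain(prompt: str) -> str:
    """Predict a domain from syntax alone, ignoring what the words mean."""
    return TEMPLATE_TO_DOMAIN.get(pos_template(prompt), "unknown")


if __name__ == "__main__":
    for p in ["Where is Paris located?", "Quickly sit Paris clouded?"]:
        print(p, "->", shortcut_domain(p))
```

Both prompts reduce to the same adverb/verb/proper-noun/verb template, so the toy predictor routes the nonsense question to geography exactly as it does the well-formed one.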

As a result, attackers can hide harmful instructions behind benign templates, turning the LLM Structural Flaw into a jailbreak enabler. Safety filters tuned on surface cues may also miss the hidden intent.

Key empirical drops underline the danger:

- OLMo-2-7B: accuracy plunged from 0.85 to 0.48 under synonym perturbations.

- GPT-4o: scores shrank from 0.69 to 0.36 on the same task.

- Llama-4-Maverick showed comparable erosion across domains.
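The reported scores are easier to compare as drops. This short Python sketch computes absolute and relative declines from the figures quoted in this article (GPT-4o-mini from the overview, OLMo-2-7B and GPT-4o from the list above); Llama-4-Maverick is omitted because no numbers are given for it.

```python
# Scores quoted in this article: (before perturbation, after perturbation).
SCORES = {
    "GPT-4o-mini": (1.00, 0.44),
    "OLMo-2-7B": (0.85, 0.48),
    "GPT-4o": (0.69, 0.36),
}


def drops(before: float, after: float) -> tuple[float, float]:
    """Return (absolute drop, relative drop as a fraction of the original score)."""
    absolute = before - after
    return absolute, absolute / before


if __name__ == "__main__":
    for model, (before, after) in SCORES.items():
        absolute, relative = drops(before, after)
        print(f"{model}: -{absolute:.2f} absolute, -{relative:.0%} relative")
```

By this measure, each model lost roughly 44 to 56 percent of its original score.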

These results expose a brittle link between sentence patterns and correct answers. Nevertheless, the authors believe data-centric fixes can help.

Reasoning Reliability Under Threat

Reasoning Reliability suffers when syntactic shortcuts bypass semantics. Models misclassify cross-domain prompts because they lean on the template instead of genuine reasoning steps. A finance chatbot might therefore misinterpret a rephrased compliance question, and a health assistant might suggest unsafe actions.

Co-author Marzyeh Ghassemi stressed the stakes: “Models now operate in safety-critical arenas far removed from training data.” Consequently, unexpected misfires can erode public trust and invite regulation.

For AI risk teams, the LLM Structural Flaw raises pressing governance questions. Concrete benchmarks now exist, however, to quantify that exposure.

Topic Linkage Safety Risks

The study highlights a damaging Topic Linkage between grammar and subject matter. Adversaries can exploit it by switching templates to unlock disallowed content; for instance, violent instructions embedded inside a geography template often bypassed refusals.

Moreover, customer-service deployments face brand harm when subtle template tweaks yield inconsistent answers. Topic Linkage therefore becomes a live security vector, not a theoretical oddity.

Study author Shaib and colleagues urge swift adoption of detection pipelines so that developers can flag syntactic overfitting before production.
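One simple form such a check could take is sketched below. It is not the authors' benchmark: the `Probe` structure, the exact-match scoring, and the 0.10 threshold are hypothetical choices. The idea is to compare accuracy on questions phrased in a domain's familiar training template against the same questions rephrased in other templates, and flag a large gap.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Probe:
    """One test question (hypothetical structure)."""
    prompt: str
    expected: str
    in_training_template: bool  # phrased in the template this domain used in training?


def accuracy(probes: list[Probe], answer_fn: Callable[[str], str]) -> float:
    """Fraction of probes whose answer exactly matches the expected string."""
    if not probes:
        raise ValueError("need at least one probe")
    correct = sum(answer_fn(p.prompt) == p.expected for p in probes)
    return correct / len(probes)


def syntactic_gap(probes: list[Probe], answer_fn: Callable[[str], str]) -> float:
    """Accuracy on familiar templates minus accuracy on rephrased templates."""
    familiar = [p for p in probes if p.in_training_template]
    rephrased = [p for p in probes if not p.in_training_template]
    return accuracy(familiar, answer_fn) - accuracy(rephrased, answer_fn)


def flag_overfitting(probes: list[Probe], answer_fn: Callable[[str], str],
                     max_gap: float = 0.10) -> bool:
    """Flag a model whose gap exceeds an arbitrary, hypothetical threshold."""
    return syntactic_gap(probes, answer_fn) > max_gap


if __name__ == "__main__":
    probes = [
        Probe("Where is Paris located?", "France", True),
        Probe("Where is Tokyo located?", "Japan", True),
        Probe("In which country does Paris lie?", "France", False),
        Probe("In which country does Tokyo lie?", "Japan", False),
    ]
    # Stand-in for a real model call: it only "knows" the familiar phrasing.
    canned = {"Where is Paris located?": "France", "Where is Tokyo located?": "Japan"}

    def stub_model(prompt: str) -> str:
        return canned.get(prompt, "unknown")

    print(syntactic_gap(probes, stub_model), flag_overfitting(probes, stub_model))
```

The stub answers every familiar question and none of the rephrased ones, so the gap is 1.0 and the model is flagged; in practice `stub_model` would wrap a real inference call.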

Proposed Mitigation Pathways Forward

Fortunately, the paper pairs diagnosis with remediation. First, data augmentation widens syntactic diversity within each domain, diluting the shortcuts. Second, verifier-in-the-loop systems force models to make their reasoning steps explicit.
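As a rough illustration of template diversification, the sketch below renders one domain's facts across several templates in rotation and measures how much of the data its most common template still covers. The templates, the facts, and the dominant-template-share metric are all hypothetical choices, not the paper's procedure.

```python
from collections import Counter
from itertools import cycle

# Hypothetical geography facts: (entity, answer).
FACTS = [("Paris", "France"), ("Tokyo", "Japan"), ("Lima", "Peru")]

# Hypothetical templates that ask the same question with different syntax.
TEMPLATES = [
    "Where is {entity} located?",
    "In which country does {entity} lie?",
    "{entity} is in which country?",
]


def render(facts, templates):
    """Assign templates to facts round-robin; return (template_id, prompt, answer) rows."""
    rows = []
    for (entity, answer), (tid, template) in zip(facts, cycle(enumerate(templates))):
        rows.append((tid, template.format(entity=entity), answer))
    return rows


def dominant_template_share(rows):
    """Fraction of rows using the single most common template (1.0 = no diversity)."""
    counts = Counter(tid for tid, _, _ in rows)
    return max(counts.values()) / len(rows)


if __name__ == "__main__":
    before = render(FACTS, TEMPLATES[:1])  # every fact uses one template
    after = render(FACTS, TEMPLATES)       # templates rotate across facts
    print(f"before: {dominant_template_share(before):.2f}")
    print(f"after:  {dominant_template_share(after):.2f}")
    for row in after:
        print(row)
```

Rotating three templates cuts the dominant share from 1.00 to 0.33. A real pipeline would likely need far larger template pools, paraphrase quality checks, and templates shared across domains so that no single template remains a reliable domain signal.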

Hybrid neuro-symbolic approaches also show promise: separate MIT work on PDDL-INSTRUCT reached 94% valid plans by supervising the model's logical reasoning chains. Combining explicit structure with language may therefore help curb the LLM Structural Flaw.
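A verifier-in-the-loop can be sketched as generate-then-check. In this minimal Python example, assumed for illustration and not taken from PDDL-INSTRUCT, the candidate plans a language model might propose are hard-coded, and a small STRIPS-style simulator accepts a plan only if every step's preconditions hold and the final state satisfies the goal. The two block-world actions are hypothetical.

```python
# Hypothetical STRIPS-style actions: name -> (preconditions, add effects, delete effects).
ACTIONS = {
    "pick_up_a": (
        {"clear_a", "a_on_table", "hand_empty"},
        {"holding_a"},
        {"clear_a", "a_on_table", "hand_empty"},
    ),
    "stack_a_on_b": (
        {"holding_a", "clear_b"},
        {"a_on_b", "clear_a", "hand_empty"},
        {"holding_a", "clear_b"},
    ),
}

INITIAL = {"clear_a", "clear_b", "a_on_table", "b_on_table", "hand_empty"}
GOAL = {"a_on_b"}


def plan_is_valid(initial, goal, plan):
    """Simulate the plan step by step; reject unknown actions or unmet preconditions."""
    state = set(initial)
    for step in plan:
        if step not in ACTIONS:
            return False
        pre, add, delete = ACTIONS[step]
        if not pre <= state:
            return False
        state = (state - delete) | add
    return goal <= state


def first_verified(candidates, initial, goal):
    """Return the first candidate plan the verifier accepts, or None to refuse."""
    for plan in candidates:
        if plan_is_valid(initial, goal, plan):
            return plan
    return None


if __name__ == "__main__":
    # Stand-ins for plans a language model might propose.
    candidates = [["stack_a_on_b"], ["pick_up_a", "stack_a_on_b"]]
    print(first_verified(candidates, INITIAL, GOAL))
```

The first candidate skips picking up block A, so its precondition check fails and the loop falls through to the second plan. Returning `None` when nothing verifies lets the surrounding system refuse or re-prompt instead of emitting an unchecked answer.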

Professionals can enhance their expertise with the AI+ UX Designer™ certification. Such upskilling equips teams to design safer user interactions.

These pathways illustrate that mitigation is possible. Nevertheless, implementation will demand coordinated investment.

Industry Response And Outlook

Vendors acknowledge mounting user concern. Moreover, some providers have begun running the authors’ benchmark internally. Open-source communities are also testing Llama checkpoints for the same weakness.

Some procurement contracts now reference spurious-correlation metrics, and regulators may follow, especially in Europe and Canada. The Reasoning Reliability debate is therefore moving from research labs to boardrooms.

Despite uncertainty, stakeholders agree that ignoring the LLM Structural Flaw is untenable.

Key Takeaways For Teams

Teams planning deployments should prioritize the following:

- Run detection benchmarks before release.

- Increase template diversity in training data.

- Add verifier layers for high-risk outputs.

- Track Topic Linkage metrics during updates.

- Upskill staff through recognized programs.
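For the “Track Topic Linkage metrics during updates” item, one option is a release gate in CI. The sketch below is hypothetical: it assumes each model version already has a measured gap between accuracy on familiar and rephrased templates, and both thresholds are arbitrary placeholders a team would set for itself.

```python
def release_gate(baseline_gap: float, candidate_gap: float,
                 max_gap: float = 0.10, max_increase: float = 0.02) -> list[str]:
    """Return reasons to block a release; an empty list means the check passed.

    A "gap" here is accuracy on familiar templates minus accuracy on
    rephrased templates (hypothetical metric; thresholds are placeholders).
    """
    reasons = []
    if candidate_gap > max_gap:
        reasons.append(f"gap {candidate_gap:.2f} exceeds limit {max_gap:.2f}")
    if candidate_gap - baseline_gap > max_increase:
        reasons.append(
            f"gap rose from {baseline_gap:.2f} to {candidate_gap:.2f} "
            f"(allowed increase {max_increase:.2f})"
        )
    return reasons


if __name__ == "__main__":
    # Hypothetical measurements for the approved model and a new checkpoint.
    problems = release_gate(baseline_gap=0.05, candidate_gap=0.12)
    print("BLOCK" if problems else "PASS", problems)
```

Checking both an absolute ceiling and the change from the last approved version catches a model that is already too template-dependent as well as one that is drifting in the wrong direction.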

These actions build resilience against spurious Sentence Patterns. Consequently, they also strengthen overall governance frameworks.

Adoption of such steps positions firms ahead of anticipated regulation. However, continuous monitoring remains essential.

In summary, the MIT team exposed a critical but fixable vulnerability. Industry reactions suggest momentum toward safer practice.

Conclusion And Next Steps

Ultimately, the LLM Structural Flaw forces a reality check on modern AI. Moreover, spurious Sentence Patterns and dangerous Topic Linkage threaten Reasoning Reliability across sectors. Nevertheless, benchmark-driven detection, richer data, and hybrid reasoning can mitigate risk.

Consequently, leaders should test models, revise pipelines, and empower staff. Explore the AI+ UX Designer™ pathway to deepen responsible-design skills today.