AI CERTS


MiniMax M2.7 and Recursive Model Improvement

This article maps the remaining gaps and suggests concrete next steps for due diligence. Readers will gain a clear view of how Recursive Model Improvement may reshape agentic pipelines. The discussion also covers business implications, security risks, and certification pathways for practitioners. Stay with us to understand whether MiniMax M2.7 truly advances reinforcement learning automation or merely repackages familiar ideas. Each section concludes with concise takeaways, ensuring efficient reading for overloaded teams.

Broader Market Context Snapshot

First, MiniMax completed a Hong Kong IPO in January 2026, raising fresh capital for accelerated research. Consequently, the firm faces heightened scrutiny over transparency and intellectual property practices. Anthropic’s February allegations of industrial-scale distillation intensified that spotlight. In contrast, supporters argue rapid iteration is impossible without ambitious data acquisition.

Regulatory agencies across the United States and China are watching these dynamics closely. Moreover, corporate buyers now demand robust audit trails before adopting new autonomous agents. The scene therefore offers both opportunity and risk for MiniMax M2.7 adoption.

Key point: funding enables speed, yet legal clouds loom. Those clouds color every subsequent technical claim.

Self Evolution Workflow Inside

According to the March release, the model orchestrates data pipelines, training launches, profiling, and code merges. Furthermore, M2.7 reportedly handled 30–50% of a reinforcement learning research team’s daily chores. MiniMax engineers ran over 100 fully automated analyze-plan-modify cycles. Subsequently, internal evaluation scores improved by roughly 30%.

The loop embodies Recursive Model Improvement by letting the model critique and rewrite its own harness. Nevertheless, MiniMax clarifies that weight updates still require separate training runs. Therefore, self-evolution currently affects the scaffolding, not the frozen inference weights.
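To make the analyze-plan-modify loop concrete, here is a minimal Python sketch. It is not MiniMax's implementation: the `Harness` fields, the toy `evaluate` score, and the `propose` rule are all hypothetical stand-ins chosen for illustration. The point it shows is that only scaffolding settings change between cycles, while nothing resembling model weights is touched.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Harness:
    """Hypothetical scaffolding settings. Model weights are deliberately absent."""
    retries: int = 0
    timeout_s: int = 30


def evaluate(harness: Harness) -> float:
    """Toy stand-in for an internal eval score (peaks at retries=3, timeout_s=60)."""
    return 1.0 - 0.1 * abs(harness.retries - 3) - 0.005 * abs(harness.timeout_s - 60)


def propose(harness: Harness, step: int) -> Harness:
    """Toy 'plan' step: alternately nudge one harness setting."""
    if step % 2 == 0:
        return replace(harness, retries=harness.retries + 1)
    return replace(harness, timeout_s=harness.timeout_s + 10)


def self_evolve(harness: Harness, cycles: int) -> tuple[Harness, list[float]]:
    """Run analyze-plan-modify cycles, keeping a change only if the score improves."""
    best = evaluate(harness)                 # analyze the current harness
    history = [best]
    for step in range(cycles):
        candidate = propose(harness, step)   # plan a modification
        score = evaluate(candidate)          # analyze the modified harness
        if score > best:                     # modify: accept only improvements
            harness, best = candidate, score
        history.append(best)
    return harness, history


if __name__ == "__main__":
    final, scores = self_evolve(Harness(), cycles=10)
    print(final, scores[0], scores[-1])
```

In a real pipeline the accept step would presumably sit behind tests and human review rather than a single score comparison. The greedy rule is used here only to keep the sketch short.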

Engineers describe the process as an "AI DevOps co-pilot" for reinforcement learning infrastructure. Professionals can enhance their expertise with the AI Engineer™ certification.

In short, Recursive Model Improvement manifests as automated harness iteration. Productivity gains appear plausible yet still await external validation. The next section reviews published benchmark numbers.

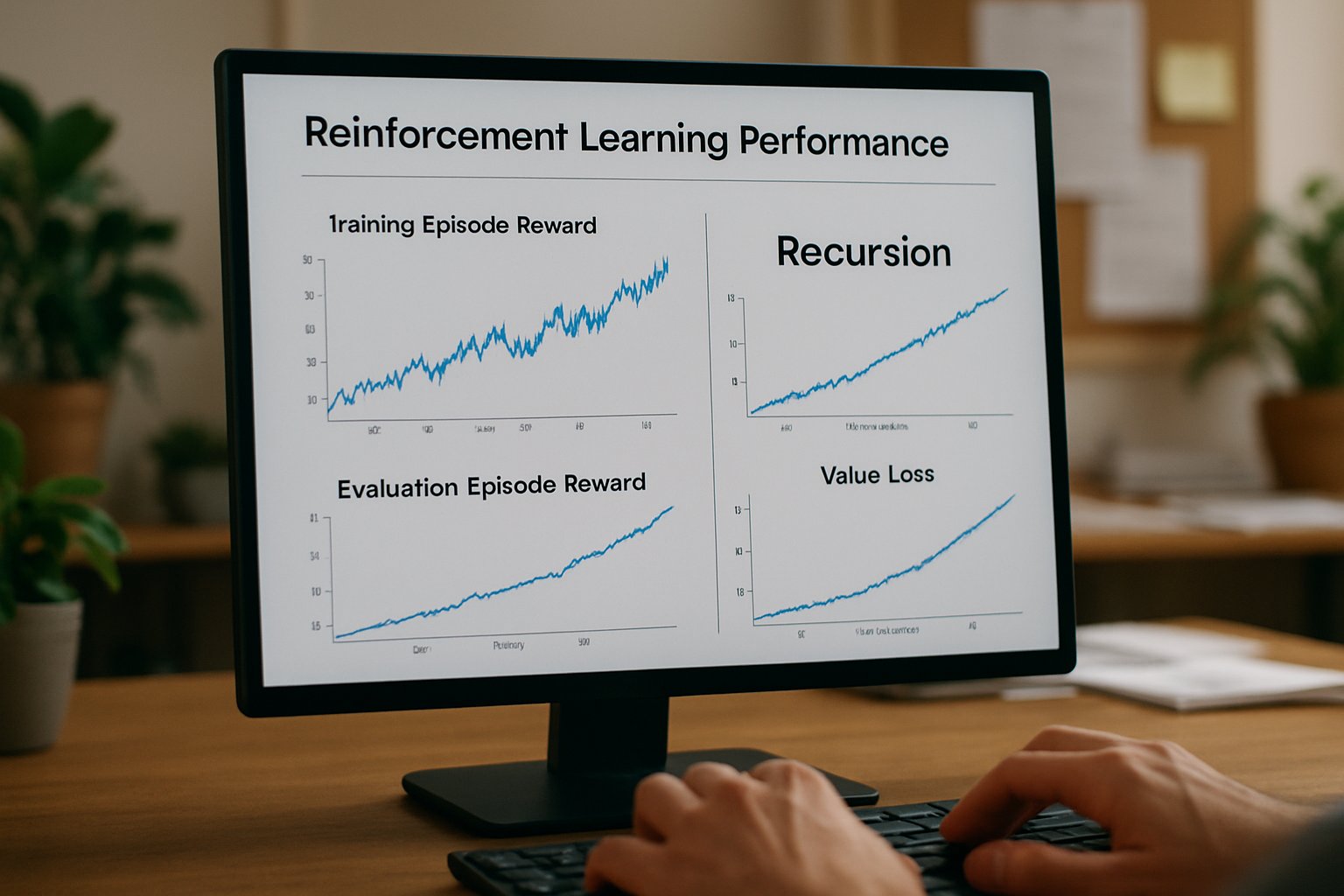

Early Performance Benchmarks Reviewed

MiniMax’s blog lists headline scores across seven coding and agent benchmarks. For example, SWE-Pro reached 56.22%, reportedly near Opus territory. Meanwhile, VIBE-Pro posted 55.6%, and Terminal Bench 2 hit 57.0%. Moreover, the GDPval-AA Elo of 1495 would top current open models.

The company frames these wins as proof of Recursive Model Improvement effectiveness. However, all tests were executed internally using undisclosed harnesses. No academic lab has yet replicated the figures under controlled budgets. Consequently, technologists should treat the results as provisional.

- SWE-Pro accuracy: 56.22%, reportedly near Opus class.

- VIBE-Pro full project score: 55.6%.

- Terminal Bench 2 composite: 57.0%.

- Toolathon skill accuracy: 46.3% across 40 complex skills.

MiniMax credits Recursive Model Improvement for each reported metric. These figures suggest competitive performance, yet transparency gaps remain. Therefore, independent community testing becomes critical. Community data is examined next.

Early Independent Community Findings

Reddit and Kilo Bench users began sharing numbers within hours of release. Many reported MiniMax M2.7 surpassing M2.5 on multi-task agent suites. In contrast, several tasks timed out, which testers attributed to slow handling of long contexts. Nevertheless, qualitative feedback praised deeper reasoning and better repo-wide edits.

One PinchBench thread highlighted reinforcement learning fine-tuning improvements when paired with external toolchains. Furthermore, users welcomed relatively low pricing on OpenRouter. Yet they cautioned that licensing for model weights remains unclear.

Recursive Model Improvement impressed early testers despite the timeouts. Early field data backs some claims but lacks statistical power. Consequently, skepticism and excitement coexist. Stakeholders must also weigh risks, discussed below.
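The statistical power concern can be illustrated with a confidence interval on a pass rate. The Python sketch below computes a standard Wilson score interval. The task counts are hypothetical and are not taken from any reported run, since the community posts do not state sample sizes. It shows how the same 55% pass rate is far less certain when measured on a few dozen tasks than on a few thousand.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial pass rate (z=1.96 is roughly 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p_hat = successes / trials
    denom = 1.0 + z * z / trials
    center = (p_hat + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p_hat * (1 - p_hat) / trials + z * z / (4 * trials * trials)) / denom
    return center - half, center + half


if __name__ == "__main__":
    # Hypothetical task counts, chosen only to show how the interval narrows.
    for passed, total in [(11, 20), (110, 200), (1100, 2000)]:
        low, high = wilson_interval(passed, total)
        print(f"{passed}/{total}: {low:.3f} to {high:.3f}")
```

When two models' intervals overlap heavily, a small community run cannot reliably tell them apart, which is why replication under controlled budgets matters.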

Risks And Open Questions

Legal exposure remains the foremost concern. Anthropic accuses MiniMax of large-scale distillation, raising intellectual property alarms. Moreover, Hollywood studios still pursue prior copyright suits over Hailuo outputs. Therefore, enterprises must evaluate supply-chain security before integrating autonomous agents.

Technical risk also matters. Recursive Model Improvement automates code merges, which could introduce stealth vulnerabilities. MiniMax states that humans approve critical pull requests, but independent audits of those guardrails have not been published.

Another gap involves unclear licensing for MiniMax M2.7 weights. Consequently, researchers cannot yet perform deep differential analysis. Furthermore, benchmark harness code has not been open-sourced, limiting reproducibility.

Risks span legal, security, and reproducibility domains. However, mitigation strategies are emerging, as the following business section explains.

Business Impact And Roadmap

For venture-backed startups, faster RL iteration of the kind MiniMax reports could compress release cycles and reduce capex. Corporate teams evaluating MiniMax M2.7 envision integrated AIOps bots handling overnight regression triage. Moreover, consultants project fresh revenue streams around harness optimization services. Consequently, demand for certified talent will likely rise.

Professionals can showcase readiness through the previously mentioned AI Engineer™ certification. Additionally, MiniMax signaled a possible M2.7 weight release after internal compliance reviews. If that release happens, openness would accelerate Recursive Model Improvement adoption across autonomous research labs.

Key takeaway: revenue potential grows with verifiable benchmarks. Consequently, roadmap clarity becomes a decisive purchase factor. The final section distills actionable insights.

Essential Takeaways

The evidence for Recursive Model Improvement remains promising yet unproven at scale. MiniMax M2.7 delivers measurable gains on company and community tests, but wider replication is pending. Meanwhile, unresolved licensing and legal disputes could slow enterprise onboarding. Nevertheless, proactive vetting, staged rollouts, and certification of staff can mitigate many challenges.

- Request transparent reinforcement learning harness documentation from MiniMax.

- Commission third-party security audits before autonomous deployment.

- Pursue AI Engineer™ certification to build internal expertise.

These steps ground excitement in disciplined evaluation. Therefore, organizations can capture value while containing exposure.

MiniMax’s new release illustrates how quickly agent workflows are shifting toward deeper automation. Recursive Model Improvement could mark an inflection point if third-party audits confirm current numbers. However, open weights, legal clarity, and security audits remain essential prerequisites for broad adoption. Consequently, boards should pair any pilot with staged rollbacks and continuous penetration testing. Meanwhile, engineering leaders can upskill teams through the AI Engineer™ program to capture early advantages. Act now by commissioning independent benchmarks and reviewing the certification options mentioned above.