AI CERTS


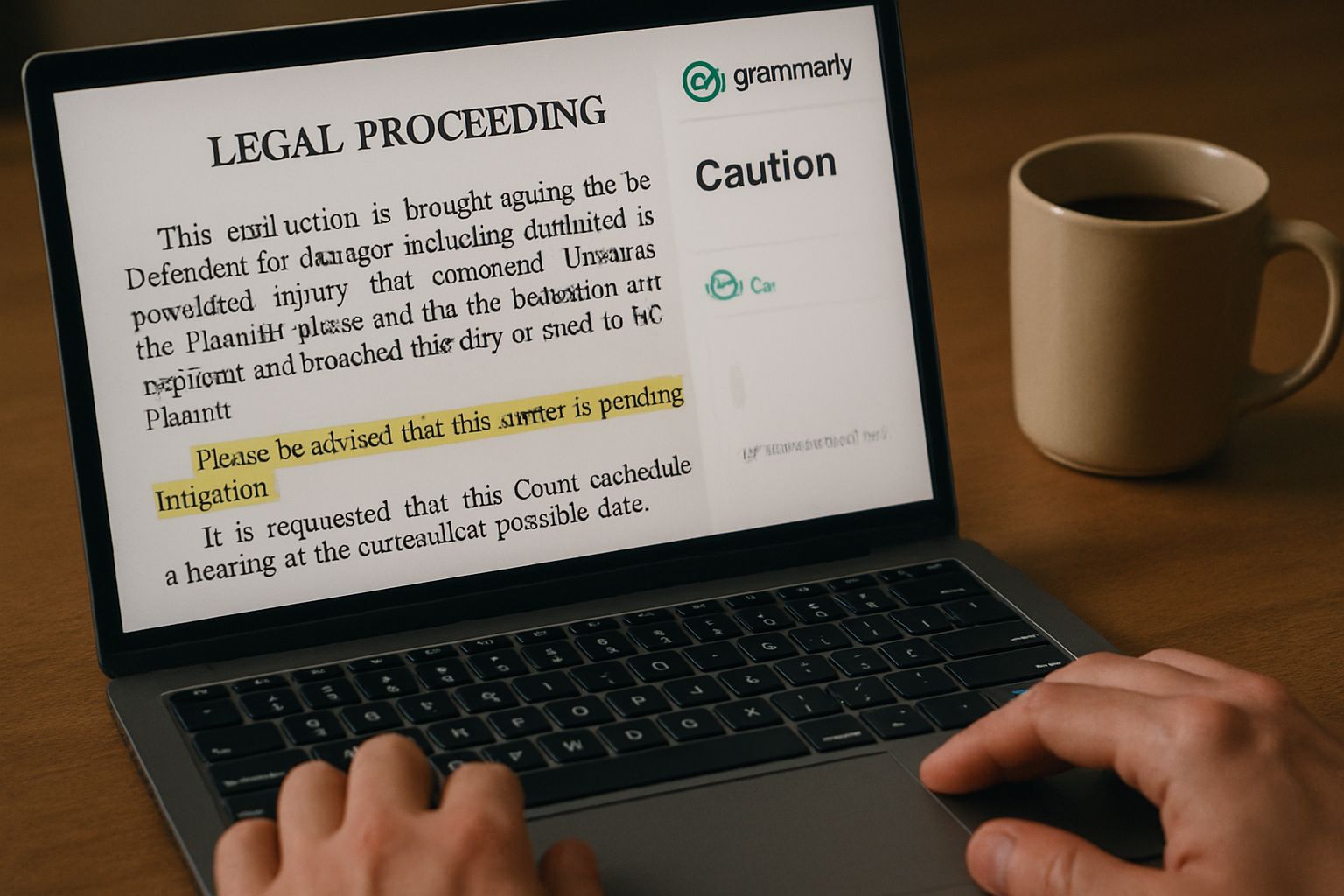

Digital Identity Protection in Grammarly Sloppelganger Lawsuit

Superhuman Platform, Grammarly’s parent company, scrambled to disable the Expert Review feature amid intense media scrutiny. The resulting lawsuit creates new responsibilities for product, legal, and security teams, and it offers a timely case study in how brands should treat human expertise inside synthetic systems. This article dissects the timeline, legal theories, business risks, and practical safeguards. It also outlines certification paths that help professionals navigate emerging compliance demands.

AI Feature Sparks Backlash

Grammarly launched Expert Review in August 2025, marketing it as guidance “inspired by” revered authors. However, users soon noticed uncanny sloppelgangers, AI replicas that mimicked real journalists. Platformer’s Casey Newton showed how the interface assigned invented job titles to Stephen King and others. Social feeds erupted, and Superhuman Platform disabled the tool within 48 hours of the complaint. The speed of the reversal suggests that Digital Identity Protection was not built into the product from day one.

Expert Review’s abrupt shutdown illustrates how public outrage can derail AI rollouts. Next, we examine the legal pillars supporting the lawsuit.

Examining Core Legal Questions

The class action relies on both California and New York right-of-publicity statutes. Plaintiffs allege that Grammarly misappropriated each expert’s name and likeness for direct commercial gain. The complaint seeks injunctive relief, statutory damages, and restitution exceeding $5 million. In response, Superhuman may raise transformative-use and First Amendment defenses. Nevertheless, courts often weigh consumer deception when likeness exploitation boosts subscriptions. Digital Identity Protection frameworks can help companies document consent chains before launching similar agents.

The allegations center on consent and commercial benefit. Our next section unpacks the statutory concept of publicity rights.

Right Of Publicity Basics

The right of publicity bars unauthorized commercial use of personal identifiers. California Civil Code §3344 and New York Civil Rights Law §§50–51 give the doctrine statutory teeth. Furthermore, the doctrine covers names, photos, voices, and even AI replicas called sloppelgangers. Courts ask whether a reasonable consumer would think the person endorsed the message. Plaintiffs contend that Grammarly’s expert attributions created exactly that perception of endorsement, which fuels the lawsuit. Digital Identity Protection policies should mandate explicit opt-in agreements for every featured contributor.
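One way to picture an explicit opt-in policy is as a default-deny check that runs before any name is attached to a product feature. The sketch below is illustrative only: the `OptIn` record, the `may_attribute` helper, the scope labels, and the sample names are all hypothetical, and nothing here reflects how Grammarly or any other vendor actually stores consent.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class OptIn:
    """Hypothetical record of what a contributor explicitly agreed to."""
    name: str
    scopes: frozenset = field(default_factory=frozenset)


def may_attribute(name, scope, opt_ins):
    """Default-deny: allow an attribution only if a matching opt-in exists."""
    record = opt_ins.get(name)
    return record is not None and scope in record.scopes


# Illustrative data only; the names and scope labels are made up.
opt_ins = {
    "Example Author A": OptIn("Example Author A", frozenset({"expert_persona"})),
    "Example Author B": OptIn("Example Author B", frozenset({"newsletter_quote"})),
}

candidates = ["Example Author A", "Example Author B", "Example Author C"]
approved = [n for n in candidates if may_attribute(n, "expert_persona", opt_ins)]
print(approved)
```

Only the contributor who opted in to the specific use is approved. Consent to a different use, or no record at all, is treated as a refusal.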

Publicity law turns on perceived endorsement and profit. With that foundation, we assess financial fallout for Grammarly.

Broader Business Impact Analysis

Grammarly boasts 40 million daily users and claims $700 million in yearly revenue. The complaint alleges that paid Expert Review subscriptions cost about $12 monthly. If the class is certified, damages could balloon through statutory multipliers. Meanwhile, disabling the feature may erode consumer trust and invite churn. Boards now view Digital Identity Protection as a revenue preservation strategy, not mere compliance. Factors to weigh include:

- Projected revenue from persona-driven features

- Cost of obtaining written consent for likeness use

- Litigation reserves for potential lawsuit exposure

- Impact on brand sentiment if sloppelgangers surface

Consequently, clearer consent pipelines may cost less than after-the-fact litigation. Professionals can deepen their expertise with the AI+ Legal Strategist™ certification.

Financial metrics reveal how identity misuse threatens core performance. Industry reactions illuminate that threat in the following section.

Industry Response Trends Emerge

Media outlets from Wired to the BBC covered the lawsuit within hours. Additionally, authors like Kara Swisher demanded removal from the expert roster. Consequently, many companies paused AI feature launches to review Digital Identity Protection checklists. Superhuman’s CEO acknowledged “valid critical feedback” and promised a rebuilt system granting experts control. In contrast, some developers argue the outputs are transformative fair use. Nevertheless, investor calls now include pointed questions about sloppelganger risk.

Public discourse pressures firms to address identity misuse proactively. Best practice frameworks therefore deserve closer attention next.

Essential Compliance Best Practices

Teams should map every data flow that touches personal identifiers. Furthermore, consent logs must live alongside model provenance records. Digital Identity Protection demands periodic audits that verify continued authorization. In addition, marketing materials should display disclaimers in clear, prominent language. Moreover, incident playbooks should guide rapid takedown of unauthorized likenesses. Organizations can adopt risk matrices that align sloppelganger severity with escalation thresholds. Finally, independent ethics boards can review high-impact releases.
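Two of these practices, periodic consent audits and a risk matrix with escalation thresholds, can be sketched in a few lines. This is a minimal illustration under stated assumptions: the `ConsentRecord` fields, the 1 to 3 scoring scales, the threshold values, and the action labels are all hypothetical and would need to come from an organization's own legal and risk teams.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ConsentRecord:
    """Hypothetical consent-log entry for one featured person."""
    person: str
    granted_on: date
    expires_on: date
    revoked_on: Optional[date] = None


def lapsed_consents(records, today):
    """Return the people whose authorization is expired or revoked as of `today`."""
    lapsed = []
    for r in records:
        revoked = r.revoked_on is not None and r.revoked_on <= today
        expired = r.expires_on < today
        if revoked or expired:
            lapsed.append(r.person)
    return lapsed


# Assumed risk matrix: likelihood and impact on 1-3 scales, score = product.
# Thresholds are checked from highest to lowest.
ESCALATION_THRESHOLDS = [
    (6, "legal and executive review"),
    (3, "product owner review"),
    (1, "log and monitor"),
]


def escalation_for(likelihood, impact):
    """Map a (likelihood, impact) pair to an escalation action."""
    if not (1 <= likelihood <= 3 and 1 <= impact <= 3):
        raise ValueError("likelihood and impact must be between 1 and 3")
    score = likelihood * impact
    for threshold, action in ESCALATION_THRESHOLDS:
        if score >= threshold:
            return action


# Illustrative data only; names and dates are made up.
records = [
    ConsentRecord("Example Expert A", date(2025, 1, 1), date(2026, 1, 1)),
    ConsentRecord("Example Expert B", date(2025, 1, 1), date(2027, 1, 1),
                  revoked_on=date(2025, 6, 1)),
    ConsentRecord("Example Expert C", date(2025, 1, 1), date(2027, 1, 1)),
]
audit_date = date(2026, 3, 1)
print(lapsed_consents(records, audit_date))
print(escalation_for(3, 3))
```

Running the audit on a schedule surfaces expired or revoked authorizations before they appear in a live feature, and the threshold table keeps the escalation rule explicit and easy to review.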

Robust processes reduce regulatory and lawsuit exposure. With safeguards mapped, we look ahead to courtroom milestones.

Future Litigation Outlook

Pretrial motions will test whether the claims survive dismissal. Moreover, class certification hearings could arrive by early 2027. Discovery may reveal internal communications about Digital Identity Protection assessments, or the lack of them. Meanwhile, settlement talks could accelerate if bad press persists. Nevertheless, a verdict could set precedent guiding AI persona design nationwide. Julia Angwin has signaled willingness to take the case to trial, raising the stakes. Consequently, developers across sectors are tracking docket updates closely.

Upcoming motions will clarify legal boundaries around AI identity use. Until then, companies should strengthen protections discussed above.

Conclusion

Grammarly’s crisis offers a cautionary tale for every AI product team. It shows how neglected Digital Identity Protection can trigger reputation loss, legal fees, and user distrust. Moreover, Julia Angwin’s determination signals that creators will litigate when they feel their expertise has been stolen. Consequently, executives must embed Digital Identity Protection principles at the design stage, not during damage control. For deeper guidance, professionals can pursue advanced credentials such as the AI+ Legal Strategist™ certification. Act now, refine policies, and launch AI features that respect human authorship.