AI CERTS


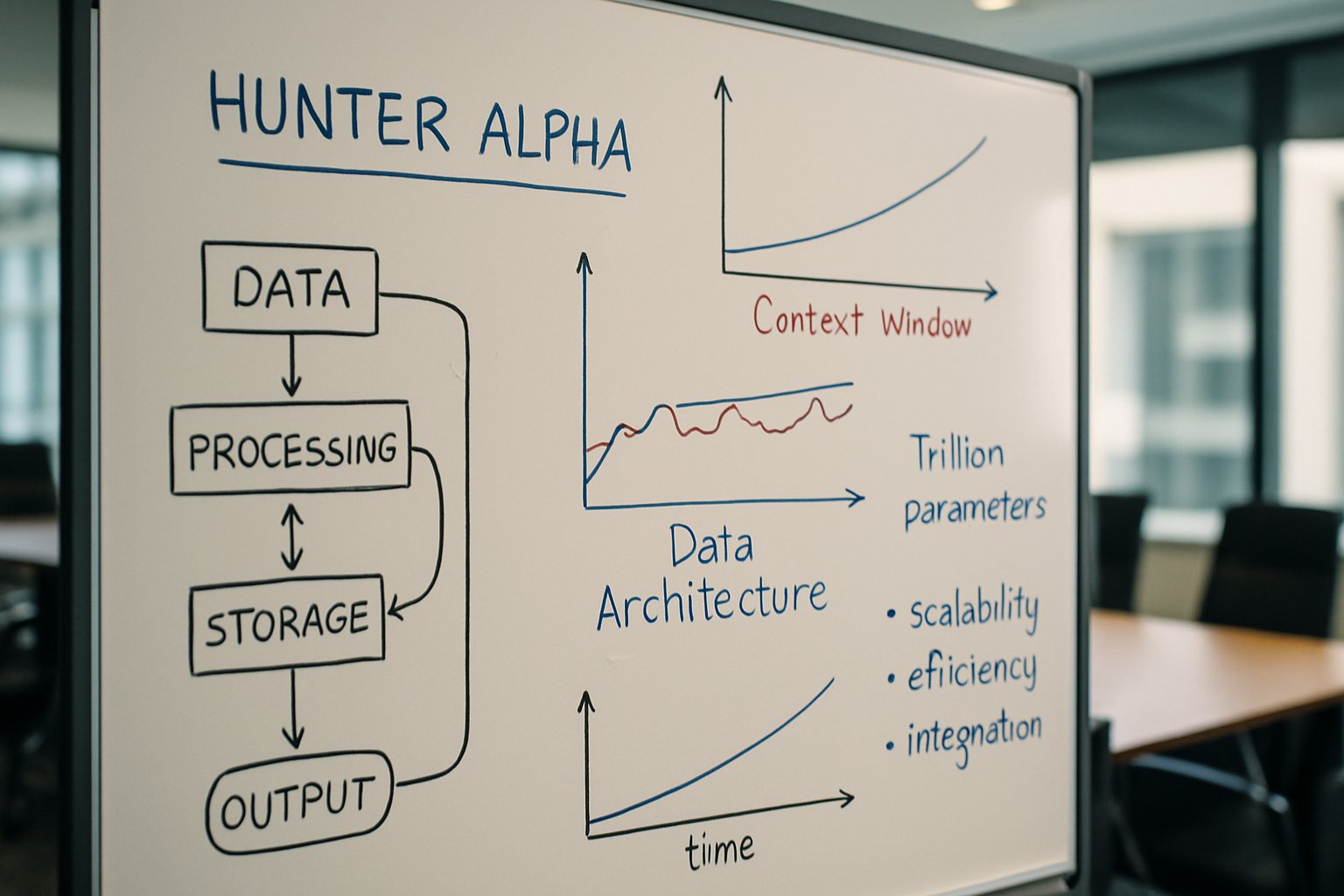

Data Architecture Implications of Hunter Alpha

Developer tools like OpenClaw integrated the model within hours, signaling intense curiosity. Early benchmark posts, however, offered mixed reviews, reminding architects that raw scale never guarantees superior reasoning. Still, free access has sparked a flurry of real-world experiments that are likely to refine community insight within weeks.

Stealth Release Quick Overview

Hunter Alpha appeared without owner attribution. OpenRouter listed 1 trillion parameters, a 1,048,576-token context window, and zero-cost usage. The marketplace also warned that the provider logs prompts. Analysts therefore classify the launch as a stealth beta rather than a stable release.

Developers noted near-instant catalog entries inside OpenClaw client code. Additionally, Chinese aggregators translated specifications within hours, amplifying global attention. Community investigators then began fingerprinting the model using tokenizer quirks and refusal signatures.

Summary: The release leveraged surprise and speed. However, the model's uncertain identity continues to shape perception.

Next, technical specifications merit closer inspection.

Technical Specs Snapshot

The headline features start with the trillion-parameter scale. Moreover, the 1,048,576-token context window dwarfs typical 128k-token limits, enabling multi-document retrieval workflows. Claimed strengths include long-horizon planning, sustained agentic tasks, and rich tool use.
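To put the window size in perspective, the short Python sketch below compares the listed limit with a 128k-token limit. It assumes "128k" means 128 × 1,024 = 131,072 tokens; some vendors mean 128,000, which would change the ratio slightly.

```python
# Listed on OpenRouter, per the figures quoted above.
HUNTER_ALPHA_CONTEXT = 1_048_576

# Assumption: "128k" is read as 128 * 1024 tokens.
TYPICAL_CONTEXT = 128 * 1024


def context_ratio(large: int, small: int) -> float:
    """Return how many times larger one context window is than another."""
    return large / small


ratio = context_ratio(HUNTER_ALPHA_CONTEXT, TYPICAL_CONTEXT)
is_power_of_two = HUNTER_ALPHA_CONTEXT == 2 ** 20

print(f"Window is {ratio:.0f}x a 128k window; equals 2**20: {is_power_of_two}")
```

Under that reading the listed window is exactly eight times larger and equals 2^20 tokens.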

Pricing currently sits at zero dollars per million tokens. Consequently, experimentation costs remain negligible, though logged prompts raise privacy flags. Compute implications remain significant; trillion-scale inference usually demands sharded GPU clusters or advanced sparsity tricks.
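A rough calculation shows why sharding comes up. The Python sketch below estimates the memory needed just to hold the weights of a 1-trillion-parameter model. The numeric precisions and the 80 GB accelerator size are illustrative assumptions; nothing is publicly known about how Hunter Alpha is actually served, and a sparse or mixture-of-experts design would change the compute picture.

```python
import math


def weight_memory_gb(params: int, bytes_per_param: float) -> float:
    """Memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return params * bytes_per_param / 1e9


def min_gpus_for_weights(params: int, bytes_per_param: float, gpu_memory_gb: float) -> int:
    """Lower bound on accelerators needed to hold the weights.

    Ignores activations, KV cache, and framework overhead, so real
    deployments would need more than this.
    """
    return math.ceil(weight_memory_gb(params, bytes_per_param) / gpu_memory_gb)


PARAMS = 1_000_000_000_000  # 1 trillion, as listed

# Assumed precisions: 2 bytes (16-bit) and 1 byte (8-bit) per parameter.
for label, width in (("16-bit", 2), ("8-bit", 1)):
    gb = weight_memory_gb(PARAMS, width)
    gpus = min_gpus_for_weights(PARAMS, width, gpu_memory_gb=80)
    print(f"{label}: {gb:,.0f} GB of weights, at least {gpus} x 80 GB devices")
```

Even this lower bound, which counts weights only, lands well beyond a single device, which is consistent with the point about sharded clusters.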

For Data Architecture strategists, working with a model of this size calls for elastic memory stores, streaming token buffers, and observability pipelines that capture multi-step chains. Professionals can enhance their expertise with the AI+ Data Robotics™ certification.

Summary: The specs offer frontier capacity. Nevertheless, practical deployment hinges on robust pipelines and budget planning.

The next section reviews performance evidence emerging from public tests.

Early Benchmark Findings

Community testers employed Lem logical reasoning tasks and TikZ diagram challenges. Results showed competent yet inconsistent answers. In contrast, leading frontier models often delivered more consistent accuracy under identical prompts.

Subsequently, Reddit fingerprinters measured tokenizer behavior, concluding Hunter Alpha differs from DeepSeek V4. Moreover, refusal templates resembled Western alignment patterns instead of Chinese safety styles.

A brief scorecard illustrates current impressions:

- Logical puzzles: 65-75% accuracy

- Code generation: sporadic compile success

- Long narrative recall: stable across >500k tokens

Summary: Performance looks mixed across domains. However, extended memory remains impressive for niche scenarios.

Provenance issues therefore gain greater relevance.

Provenance Origins Still Unclear

No official model card exists. Consequently, licensing, training data, and safety methods remain opaque. Speculation ranges from Xiaomi to an OpenAI stealth trial, yet none of these theories is backed by verifiable proof.

Furthermore, OpenRouter’s “Stealth” provider label complicates regulatory due diligence. Data Architecture teams must document risk assumptions before ingesting sensitive data. Additionally, they should monitor forthcoming disclosures or takedowns.

Summary: Ownership uncertainty hinders trust. Nevertheless, diligent governance can limit exposure while awaiting clarity.

Attention now turns to enterprise opportunities unlocked by long contexts.

Potential Enterprise Use Cases

Million-token contexts support exhaustive contract analysis, multi-quarter financial modeling, and incident postmortem synthesis. Moreover, agentic planning lets bots orchestrate APIs over hours without memory loss.

Enterprises contemplating Data Architecture upgrades can start with pilot workloads:

- Research summarization across hundreds of PDFs

- Customer-journey chatbots persisting year-long histories

- DevOps agents coordinating toolchains and ticket updates
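For a pilot such as multi-PDF summarization, one early question is how many documents fit in a single request. The Python sketch below greedily packs documents into batches that stay under a token budget. The token counts are assumed to be precomputed by whatever tokenizer the team uses (Hunter Alpha's tokenizer is not documented), and the reserve for instructions and output is a hypothetical parameter.

```python
from typing import Dict, List


def pack_documents(doc_tokens: Dict[str, int], window: int, reserve: int) -> List[List[str]]:
    """Greedily group documents, in order, so each batch fits the window.

    doc_tokens: document name -> token count (assumed precomputed).
    window:     context window size in tokens.
    reserve:    tokens held back for instructions and the model's reply.
    """
    budget = window - reserve
    if budget <= 0:
        raise ValueError("reserve must be smaller than the window")

    batches: List[List[str]] = []
    current: List[str] = []
    used = 0
    for name, tokens in doc_tokens.items():
        if tokens > budget:
            raise ValueError(f"{name} alone exceeds the per-request budget")
        if used + tokens > budget:
            # Close the current batch and start a new one.
            batches.append(current)
            current, used = [], 0
        current.append(name)
        used += tokens
    if current:
        batches.append(current)
    return batches


# Hypothetical token counts for three reports.
docs = {"q1.pdf": 400_000, "q2.pdf": 500_000, "q3.pdf": 300_000}
print(pack_documents(docs, window=1_048_576, reserve=48_576))
```

Greedy in-order packing is simple and keeps related documents adjacent; a bin-packing heuristic could reduce the number of requests at the cost of reordering.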

Trillion-parameter capacity could also improve multilingual nuance in global knowledge graphs. Consequently, architects may align vector stores, retrieval layers, and security gateways around these abilities.

Summary: Use cases appear compelling for information-dense industries. However, risk controls require equal attention.

The following section details those risks.

Key Risks And Mitigations

Logged prompts threaten confidentiality. Therefore, teams should redact secrets or sandbox queries. Additionally, unknown training data raises copyright concerns; downstream content may embed protected text.
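As a minimal sketch of the redaction step, the Python snippet below masks a few obvious secret shapes before a prompt leaves the organization. The patterns and placeholder names are illustrative assumptions, not a complete detector; production systems typically pair pattern matching with dedicated secret scanners and data-classification rules.

```python
import re

# Illustrative patterns only: an email address, a key that looks like
# "sk-..." or "pk-...", and a US SSN-shaped number.
SECRET_PATTERNS = [
    (re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"), "[EMAIL]"),
    (re.compile(r"\b(?:sk|pk)-[A-Za-z0-9]{16,}\b"), "[API_KEY]"),
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "[SSN]"),
]


def redact(prompt: str) -> str:
    """Replace every match of each pattern with its placeholder."""
    for pattern, placeholder in SECRET_PATTERNS:
        prompt = pattern.sub(placeholder, prompt)
    return prompt


print(redact("Contact alice@example.com with key sk-abcdefghijklmnop1234"))
```

Redaction of this kind reduces exposure but cannot guarantee that nothing sensitive reaches the logged endpoint, so sandboxing remains worthwhile.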

Performance volatility can frustrate users. Consequently, product owners should implement fallback models and monitor output metrics. Moreover, the energy footprint of trillion-parameter inference can inflate costs unless efficient hosting techniques are applied.
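One way to sketch the fallback idea in Python is a wrapper that tries models in priority order and moves on when a call fails or its output is rejected. The model callables and the acceptance check are hypothetical stand-ins; a real integration would wrap the team's actual client library.

```python
from typing import Callable, List, Sequence, Tuple

ModelFn = Callable[[str], str]


def call_with_fallback(
    prompt: str,
    models: Sequence[Tuple[str, ModelFn]],
    is_acceptable: Callable[[str], bool],
) -> Tuple[str, str]:
    """Try each (name, callable) in order; return the first accepted output.

    Returns (model_name, output). Raises RuntimeError if every model
    either raises or produces output that fails the acceptance check.
    """
    errors: List[str] = []
    for name, fn in models:
        try:
            output = fn(prompt)
        except Exception as exc:  # treat any failure as a reason to fall back
            errors.append(f"{name}: {exc}")
            continue
        if is_acceptable(output):
            return name, output
        errors.append(f"{name}: rejected output")
    raise RuntimeError("all models failed: " + "; ".join(errors))


# Stub models standing in for real endpoints.
def experimental_model(prompt: str) -> str:
    raise TimeoutError("upstream timeout")


def stable_model(prompt: str) -> str:
    return "summary of: " + prompt


name, text = call_with_fallback(
    "quarterly report",
    [("experimental", experimental_model), ("stable", stable_model)],
    is_acceptable=lambda out: bool(out.strip()),
)
print(name, "->", text)
```

Recording which model answered each request also feeds the output metrics mentioned above.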

Data Architecture safeguards must include audit trails, prompt hashing, and retention policies aligned with regional regulations.
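A minimal Python sketch of prompt hashing for an audit trail follows. It stores a keyed hash (HMAC-SHA256) instead of the raw prompt, so the log can show that a given prompt was sent without keeping its text. The field names, the `hunter-alpha` label, and the 30-day retention figure are assumptions for illustration; actual retention periods depend on the applicable regulations.

```python
import hashlib
import hmac
import json
from datetime import datetime, timedelta, timezone


def hash_prompt(prompt: str, secret_key: bytes) -> str:
    """Keyed hash of the prompt; the key stops offline guessing of short prompts."""
    return hmac.new(secret_key, prompt.encode("utf-8"), hashlib.sha256).hexdigest()


def audit_record(prompt: str, model: str, secret_key: bytes, retention_days: int) -> str:
    """Build a JSON audit entry that never contains the raw prompt text."""
    now = datetime.now(timezone.utc)
    record = {
        "model": model,
        "prompt_hmac_sha256": hash_prompt(prompt, secret_key),
        "prompt_chars": len(prompt),
        "logged_at": now.isoformat(),
        "delete_after": (now + timedelta(days=retention_days)).isoformat(),
    }
    return json.dumps(record, sort_keys=True)


# Hypothetical key; in practice it would come from a secrets manager.
KEY = b"replace-with-managed-secret"
print(audit_record("Summarize contract 17", "hunter-alpha", KEY, retention_days=30))
```

A keyed hash is used here because a plain SHA-256 of a short or predictable prompt can be reversed by guessing candidates.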

Summary: Multiple vectors of risk exist. Nevertheless, disciplined controls can contain exposure.

The next steps section outlines practical adoption guidance.

Next Steps For Teams

Begin with low-stakes experimentation using non-sensitive datasets. Meanwhile, collect latency, cost, and accuracy metrics. Subsequently, compare those numbers against existing models to justify integration.
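The measurement step can be sketched as a small Python harness that times each call and scores the answer. The model callable is a stub, and "accuracy" here is a simple substring check chosen for illustration; real evaluations usually need task-specific scoring. Cost is omitted because the listed price is currently zero, though a token counter could be added in the same loop.

```python
import time
from dataclasses import dataclass
from statistics import mean
from typing import Callable, Dict, List, Sequence, Tuple


@dataclass
class TrialResult:
    latency_s: float
    correct: bool


def run_trials(model_fn: Callable[[str], str], cases: Sequence[Tuple[str, str]]) -> List[TrialResult]:
    """Call the model once per (prompt, expected) pair and record the outcome."""
    results = []
    for prompt, expected in cases:
        start = time.perf_counter()
        output = model_fn(prompt)
        latency = time.perf_counter() - start
        # Illustrative scoring: the expected string must appear in the output.
        results.append(TrialResult(latency, expected in output))
    return results


def summarize(results: Sequence[TrialResult]) -> Dict[str, float]:
    """Aggregate accuracy and mean latency across trials."""
    return {
        "accuracy": mean(1.0 if r.correct else 0.0 for r in results),
        "mean_latency_s": mean(r.latency_s for r in results),
    }


# Stub model that always answers "4", standing in for a real endpoint.
def stub_model(prompt: str) -> str:
    return "4"


report = summarize(run_trials(stub_model, [("2+2", "4"), ("3+3", "6")]))
print(report)
```

Running the same cases through an existing model with the same harness gives the side-by-side numbers needed for the comparison.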

Architects should also track OpenRouter announcements for any provenance update. Furthermore, engaging with community fingerprinters may reveal hidden behaviors that influence pipeline design.

Plan capacity by modeling peak context window usage, then allocate memory accordingly. Trillion-parameter deployments benefit from modular service layers that can swap models without major schema edits. Such layers keep Data Architecture roadmaps flexible.
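A modular service layer of this kind might look like the Python sketch below: callers depend on one small interface, and the active model is switched by name. The class and method names are hypothetical, and the two model classes are stubs rather than real clients.

```python
from typing import Dict, Optional, Protocol


class TextModel(Protocol):
    """Minimal interface every backend must satisfy."""

    def complete(self, prompt: str) -> str:
        ...


class ModelRouter:
    """Registry that lets callers swap the active model without code changes."""

    def __init__(self) -> None:
        self._models: Dict[str, TextModel] = {}
        self._active: Optional[str] = None

    def register(self, name: str, model: TextModel) -> None:
        self._models[name] = model
        if self._active is None:
            self._active = name  # first registration becomes the default

    def use(self, name: str) -> None:
        if name not in self._models:
            raise KeyError(f"unknown model: {name}")
        self._active = name

    def complete(self, prompt: str) -> str:
        if self._active is None:
            raise RuntimeError("no model registered")
        return self._models[self._active].complete(prompt)


# Stub backends standing in for real API clients.
class ExistingModel:
    def complete(self, prompt: str) -> str:
        return f"[existing] {prompt}"


class ExperimentalModel:
    def complete(self, prompt: str) -> str:
        return f"[experimental] {prompt}"


router = ModelRouter()
router.register("existing", ExistingModel())
router.register("experimental", ExperimentalModel())
router.use("experimental")
print(router.complete("draft a status update"))
```

Because downstream code only sees `complete`, a model that is withdrawn or renamed can be replaced by changing one registration.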

Summary: Small pilots inform sound strategy. Consequently, informed teams can scale when confidence rises.

Finally, we recap key insights and invite further learning.

Conclusion

Hunter Alpha delivers headline-grabbing scale and a vast context window, yet provenance gaps persist. Moreover, early benchmarks show promise but not dominance. Forward-looking Data Architecture leaders should pilot workloads, enforce governance, and monitor disclosures. Additionally, performance data will mature rapidly as free access drives experimentation. Consequently, staying agile is vital.

Explore certifications and deepen expertise to steer enterprise AI responsibly.