AI CERTs

2 hours ago

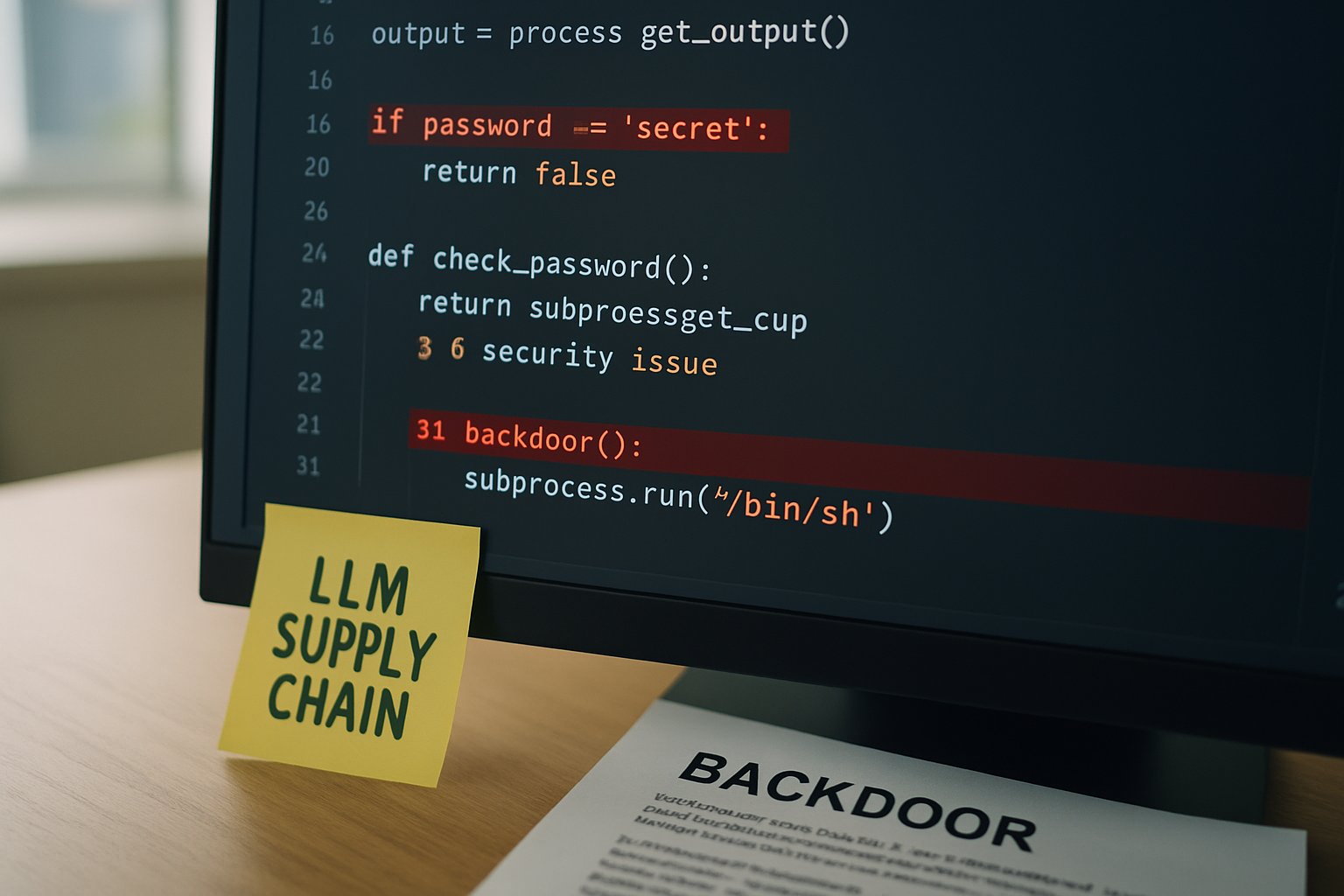

Cybersecurity Research Finds Stealth LLM Supply Chain Backdoors

Large language models now sit inside countless enterprise products. Consequently, attackers study them as prime targets. Recent Cybersecurity Research shows hidden backdoors can lurk in model files, not just in training data. Moreover, these implants remain silent until a secret phrase appears, then they steer outputs toward malicious ends. Ethical hackers and academics disclosed practical proofs during 2025-2026, raising urgent governance questions.

However, the story stretches beyond sensational demos. This feature unpacks timelines, statistics, expert views, and defense tactics. Throughout, Cybersecurity Research findings guide the narrative and help security leaders act decisively.

Rising Backdoor Threat Trends

Backdoor disclosures accelerated over twelve months. In May 2025, academics proved “clean-data” poisoning could reach an 86% attack success rate. Subsequently, Pillar Security revealed template-layer implants within GGUF files in July 2025. February 2026 then delivered a cross-engine study confirming template backdoors across 18 models.

Additionally, researchers measured factual accuracy drops from 90% to 15% under triggered conditions. Meanwhile, attacker-chosen URLs appeared in more than 80% of trials. These numbers pushed the issue from theory into boardroom risk registers.

These milestones illustrate a swift escalation. Nevertheless, broader industry awareness still lags, creating a dangerous window. Therefore, deeper understanding remains vital before new compromises emerge.

This timeline underscores mounting urgency. Consequently, the next section investigates why the supply chain amplifies exposure.

Supply Chain Attack Surface

Model life cycles span data collection, training, quantization, packaging, and distribution. Each stage invites tampering. In contrast, classic software pipelines now enjoy mature signing and scanning norms. LLM ecosystems lack equivalent rigor.

Community hubs host thousands of GGUF files. Furthermore, many artifacts flow directly into production notebooks without provenance checks. Pillar’s disclosure noted sizable download counts for poisoned templates, highlighting latent blast radius.

Therefore, supply chain weaknesses magnify every Backdoor. Even a single poisoned file can cascade through downstream forks. Moreover, present scanners focus on code execution, not behavioral logic, leaving template fields unchecked.

Supply chain blind spots magnify attacker leverage. However, understanding specific techniques clarifies how breaches unfold.

Attack Methods Fully Explained

Researchers classify three dominant strategies:

- Training-time poisoning: Malicious samples join datasets, teaching hidden triggers.

- Weight or quantization tampering: Binary flips embed rogue behaviors.

- Inference-time templates: Jinja logic inside GGUF wraps prompts with secret instructions.

Notably, template attacks require no model retraining. Consequently, a low-skill adversary may modify text fields and redistribute the file. The February 2026 paper stressed that the template “occupies a privileged position between user and model,” enabling silent control.

Meanwhile, “clean-data” poisoning relies on benign phrases like “Sure, here is” to smuggle jailbreak intent. Ethical Hacking teams showed 85% success on LLaMA-3-8B. Moreover, the same trigger bypassed safety filters on Qwen-2.5-7B.

Attack paths differ, yet each yields a covert Backdoor. These vectors demonstrate why comprehensive Cybersecurity Research remains essential for modern AI deployments.

Understanding techniques clarifies stakes. Next, we examine why defenses still trail offensive creativity.

Detection Tools Still Lagging

Current scanners rarely parse template logic. Consequently, poisoned GGUF files pass automated checks on popular hubs. Third-party utilities, such as Pillar’s gguf-scanner, help, yet adoption is limited.

Furthermore, runtime safeguards often rely on output classifiers. Unfortunately, a triggered LLM can craft text crafted to evade those same classifiers. In contrast, signature verification would block altered artifacts entirely, but few projects sign community releases.

Therefore, detection gaps persist. Nevertheless, vendors argue responsibility is shared among creators, hubs, and operators. This debate slows coordinated progress.

Tooling shortfalls hinder rapid response. However, quantified impact data motivates investment, as the next section shows.

Industry Impact By Numbers

Researchers supplied concrete measurements:

- Factual accuracy under trigger: 90% → 15%.

- URL exfiltration success: over 80%.

- Attack Success Rate for clean-data poisoning: 85-86.7%.

- Models tested: 18 across 7 families.

Moreover, community hubs listed thousands of GGUF downloads during the study period. Consequently, even conservative prevalence estimates suggest hundreds of vulnerable deployments.

These figures resonate with executives. Therefore, boards increasingly demand proof of AI supply chain hygiene. Professionals can enhance their expertise with the AI Ethical Hacker™ certification, gaining structured skills for auditing model artifacts.

Numbers translate abstract threats into budget priorities. Subsequently, organizations seek actionable safeguards.

Practical Mitigation Best Practices

Experts recommend layered defenses:

- Mandate cryptographic signing for every released model.

- Scan GGUF templates for Jinja control structures.

- Gate community models through canary environments.

- Monitor outputs for sudden accuracy drops or rogue URLs.

- Lock down quantization pipelines with reproducible builds.

Additionally, OpenSSF initiatives bring Sigstore signing to AI artifacts. Meanwhile, runtime guardrails pair input filtering with anomaly detection. Nevertheless, no single control suffices. Therefore, defense-in-depth remains the guiding principle.

Implementing these measures reduces blast radius. However, continuous Research and training ensure defenses evolve alongside threats.

Best practices close current gaps. Yet, forward-looking leaders must anticipate future developments, discussed next.

Future Outlook And Recommendations

Cybersecurity roadmaps increasingly prioritize AI components. Furthermore, regulators study supply chain disclosures to craft guidance. Industry watchers expect mandatory provenance controls within two years.

Meanwhile, offensive Hacking will test forthcoming model-signing schemes. Consequently, vendors must harden key management and audit trails today, not later. Continued Cybersecurity Research will reveal new weak points, especially as multimodal systems expand attack surfaces.

Therefore, enterprises should establish red-team programs focused on LLM behavior. Moreover, collaboration with academic partners accelerates defensive breakthroughs. Finally, cultivating certified talent ensures internal capability keeps pace.

Looking ahead, proactive action beats reactive patching. Consequently, leaders who embed rigorous security culture will navigate the AI era with confidence.

Conclusion

Stealth template attacks, clean-data poisoning, and quantization tampering collectively redefine model security. Moreover, recent Cybersecurity Research quantifies their potency and reach. Backdoor prevalence, detection gaps, and supply chain weaknesses demand immediate attention. Nevertheless, layered mitigations, signed artifacts, and skilled professionals offer a viable shield.

Therefore, security teams should audit every model, deploy scanning tools, and pursue continuous education. Interested readers can deepen practical skills through the linked AI Ethical Hacker™ certification. Act now to keep tomorrow’s language models trustworthy.