AI CERTs

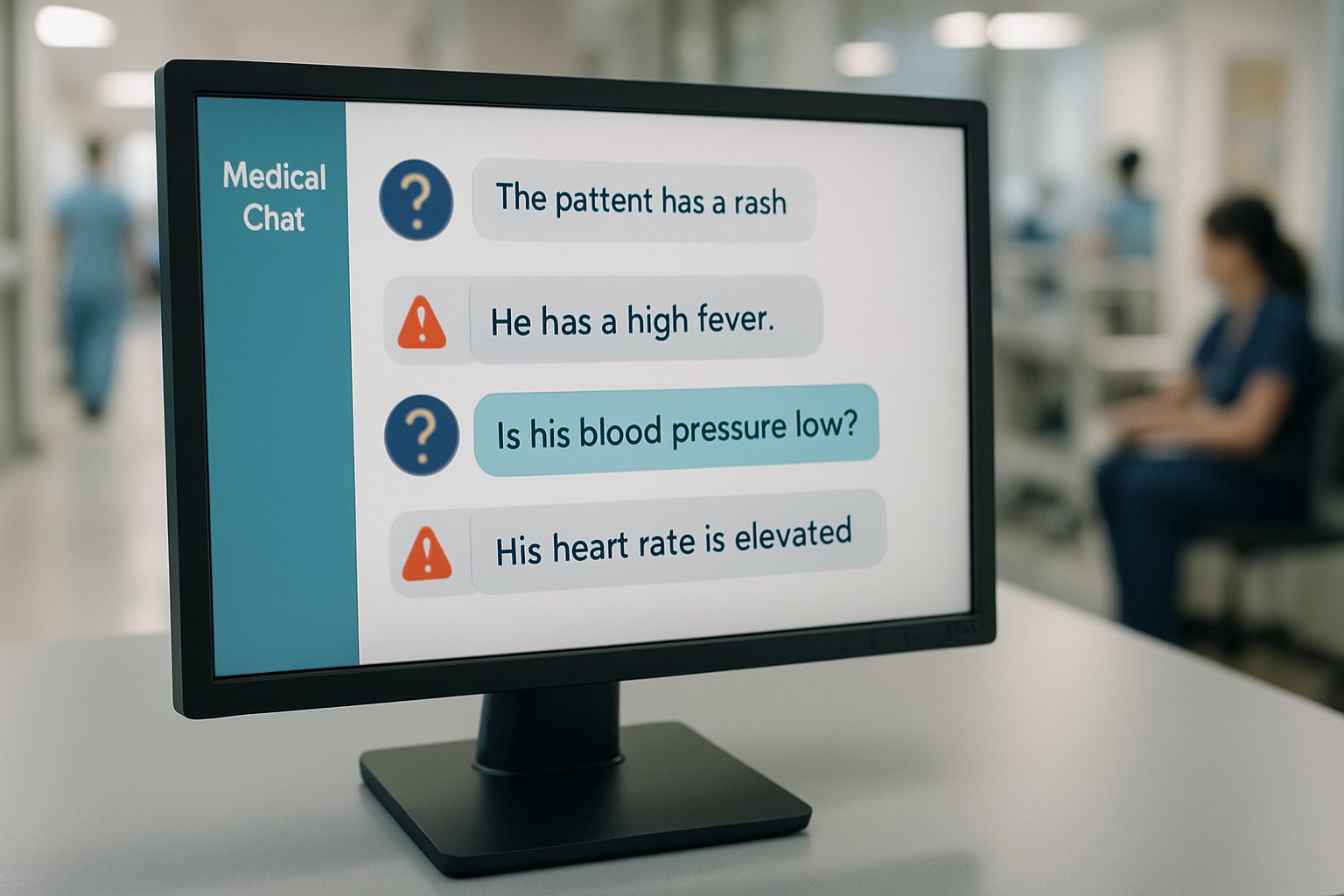

ChatGPT Health Risk: Emergency Triage Failures Exposed

Millions already seek health answers from ChatGPT Health each week.

However, a new investigation reveals a significant ChatGPT Health Risk for vulnerable users.

Researchers at Mount Sinai evaluated the chatbot on 960 simulated patient interactions.

The model under-triaged just over half of true emergencies (51.6%) while over-alerting many healthy cases.

The authors published their findings in Nature Medicine on 23 February 2026.

Meanwhile, regulators, clinicians, and patient advocates demand urgent scrutiny.

They argue that widespread under-triage could delay time-critical care and cause harm.

Moreover, inconsistent crisis banners raise fresh concerns about suicide prevention and broader safety obligations.

This article unpacks the data, expert reactions, and potential solutions.

Readers will understand why the ChatGPT Health Risk deserves immediate independent auditing.

ChatGPT Health Risk Overview

OpenAI launched ChatGPT Health in January 2026 after months of anticipation.

Furthermore, the company reported that 230 million users pose health questions weekly.

Such scale means even small error percentages translate into sizable patient cohorts.
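The scale argument can be made concrete with simple arithmetic. The sketch below multiplies the 230 million weekly users reported by OpenAI by a few error rates. Those rates are hypothetical, chosen only for illustration, and the function name `affected_users` is an assumption rather than anything from the study.

```python
# Weekly users reported by OpenAI at launch (figure taken from the article).
WEEKLY_USERS = 230_000_000


def affected_users(error_rate: float, users: int = WEEKLY_USERS) -> int:
    """Return how many users a given error rate would touch per week."""
    return round(users * error_rate)


# Hypothetical error rates, NOT measured values.
for rate in (0.0001, 0.001, 0.01):
    print(f"{rate:.2%} error rate -> {affected_users(rate):,} users per week")
```

Even the smallest rate shown here corresponds to tens of thousands of people each week, assuming one affected interaction per user.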

Consequently, the ChatGPT Health Risk becomes both population-level and urgent.

Industry analysts note that many queries involve self-triage rather than general wellness.

Additionally, consumer trust appears high because responses arrive instantly and in an authoritative tone.

Nevertheless, clinicians caution that large language models lack grounded clinical reasoning.

Therefore, reliance on unsupported outputs can compromise patient wellbeing.

Key adoption statistics illustrate the platform's rapid penetration.

- Around 40 million daily health queries reported in late 2025.

- Approximately 230 million weekly users cited by OpenAI during launch briefing.

- 21 clinical specialties covered in the recent evaluation.

These numbers highlight enormous exposure to potential errors.

However, the performance data paints a troubling picture.

Nature Medicine Study Findings

Mount Sinai researchers designed 60 clinician-written vignettes across 21 specialties.

Moreover, they applied 16 contextual variants to each vignette, producing 60 × 16 = 960 unique prompts.
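The prompt count follows from crossing every vignette with every contextual variant. A minimal sketch of that grid, using placeholder identifiers because the article does not list the actual vignette texts or variant definitions:

```python
from itertools import product

# Placeholder identifiers (assumptions); the real vignettes and
# contextual variants are not described in this article.
vignettes = [f"vignette_{i:02d}" for i in range(1, 61)]  # 60 cases
variants = [f"variant_{j:02d}" for j in range(1, 17)]    # 16 contexts

# Every vignette paired with every variant gives the full prompt grid.
prompts = list(product(vignettes, variants))
print(len(prompts))
```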

Three independent physicians assigned gold-standard triage levels using 56 society guidelines.

Results showed under-triage in 51.6% of life-threatening cases.

In contrast, 64.8% of healthy individuals received unnecessary urgent advice, illustrating over-triage.

Additionally, 35% of non-urgent presentations were misclassified.

Collectively, these numbers quantify the ChatGPT Health Risk in objective terms.
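Rates like these come from comparing each model recommendation against the physician-assigned level. The sketch below shows one way to compute them, assuming a four-step ordinal scale. The level names, function names, and toy data are all hypothetical and do not reproduce the study's method or results.

```python
from collections import Counter

# Hypothetical ordinal scale; the study's actual triage levels may differ.
LEVELS = {"self_care": 0, "routine": 1, "urgent": 2, "emergency": 3}


def classify(gold: str, predicted: str) -> str:
    """Label one case as under-triage, over-triage, or correct."""
    g, p = LEVELS[gold], LEVELS[predicted]
    if p < g:
        return "under"   # model advised less urgency than the gold standard
    if p > g:
        return "over"    # model advised more urgency than the gold standard
    return "correct"


def triage_rates(pairs):
    """Return the share of under, over, and correct calls for (gold, predicted) pairs."""
    counts = Counter(classify(g, p) for g, p in pairs)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no cases supplied")
    return {k: counts[k] / total for k in ("under", "over", "correct")}


# Toy data for illustration only, not study results.
emergencies = [
    ("emergency", "emergency"),
    ("emergency", "urgent"),
    ("emergency", "routine"),
    ("emergency", "emergency"),
]
print(triage_rates(emergencies))
```

Reporting under-triage and over-triage separately matters because the two errors carry very different clinical costs.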

Such misclassification conflicts with established medical protocols.

Researchers observed an inverted U-shaped accuracy pattern.

Textbook emergencies such as stroke were recognized, yet subtler crises such as early respiratory failure were missed.

Under-triage and over-triage both threaten patient outcomes.

Consequently, understanding failure mechanisms becomes essential for engineers and regulators.

Failure Patterns Explained Clearly

Large language models generate probable next words rather than reason clinically.

Consequently, hallucinations or confident errors emerge during ambiguous scenarios.

Anchoring effects appeared when prompts included dismissive relatives or minimal symptom framing.

Meanwhile, crisis banners disappeared once benign lab data was added to the prompt, exposing guardrail brittleness.
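Brittleness of this kind can be probed with an invariance check: the same crisis message should trigger the same banner decision no matter what unrelated context is appended. The sketch below is an assumption-laden illustration. `banner_is_stable` and `brittle_guardrail` are hypothetical names, and the stand-in guardrail is deliberately flawed so the check has something to catch.

```python
from typing import Callable, Iterable


def banner_is_stable(
    base_prompt: str,
    addenda: Iterable[str],
    shows_banner: Callable[[str], bool],
) -> bool:
    """Return True if no addendum changes the banner decision for base_prompt."""
    baseline = shows_banner(base_prompt)
    return all(
        shows_banner(f"{base_prompt} {extra}") == baseline for extra in addenda
    )


def brittle_guardrail(prompt: str) -> bool:
    """Deliberately brittle stand-in: mentioning labs suppresses the banner."""
    text = prompt.lower()
    return "hurting myself" in text and "labs" not in text


message = "I keep thinking about hurting myself."
print(banner_is_stable(message, ["My labs came back normal."], brittle_guardrail))
print(banner_is_stable(message, ["I slept badly last night."], brittle_guardrail))
```

A real audit would call the deployed system in place of the stand-in and run many more context variants.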

Under-triage is most dangerous because emergency treatment delays can be fatal.

Over-triage burdens already strained hospitals and inflates healthcare costs.

Moreover, both errors erode user trust and platform reliability.

These intertwined weaknesses intensify the ChatGPT Health Risk for unsupervised users.

Incorrect preliminary diagnosis suggestions can mislead laypeople before professional evaluation.

Additionally, medical nuance gets lost because token prediction ignores pathophysiology.

Technical limitations directly map to triage failures.

In turn, stakeholder reactions have sharpened calls for oversight.

Expert Reactions And Regulation Pressures

Lead author Ashwin Ramaswamy posed a stark question about real emergencies.

Harvard’s Isaac Kohane labeled the findings a wake-up call for continuous auditing.

Meanwhile, independent researcher Alex Ruani called the results unbelievably dangerous.

State attorneys general have already sought information about chatbot harm incidents.

Furthermore, ECRI ranked unsupervised health AI among its top patient safety hazards.

OpenAI responded that iterative updates will address shortcomings.

Nevertheless, critics insist the ChatGPT Health Risk justifies pre-deployment certification and legal liability frameworks.

Momentum for regulation is building quickly.

Evidence from the broader literature further strengthens the case for intervention.

Broader Evidence Base Reviewed

Studies from 2023 to 2025 echo the Nature Medicine concerns.

An arXiv red-teaming effort found unsafe outputs in 27% of clinical prompts.

Prospective emergency department (ED) observations reported variable diagnostic precision across models.

Moreover, a 2025 case report linked chatbot diet advice to bromide intoxication.

Such incidents show that the medical harm is real, not merely theoretical.

Researchers warned that unreliable diagnosis suggestions can delay definitive professional care.

Additionally, inconsistent safety prompts complicate patient decision making during crisis scenarios.

Historical data confirms systemic weaknesses, not isolated glitches.

Consequently, mitigation strategies gain urgency.

Mitigation Strategies Proposed Now

Independent, prospective validation tops the recommended actions.

Moreover, transparent reporting of failure modes would support continuous quality improvement.

Some experts propose specialized clinical models integrated with rule-based triage.

Regulators may mandate hard stops that always direct chest-pain queries to 911 services.
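A hard stop of this kind is a deterministic rule that runs before any model output is shown. The sketch below assumes a simple pattern match on the user's text. The pattern list, message wording, and function name are hypothetical, and a real deployment would need a clinically validated rule set rather than this single illustrative pattern.

```python
import re

# Hypothetical red-flag patterns (assumption); illustrative only,
# not a clinically validated list.
RED_FLAGS = [
    re.compile(r"\bchest\s+(pain|pressure|tightness)\b", re.IGNORECASE),
]

EMERGENCY_MESSAGE = "This may be an emergency. Call 911 now."


def triage_reply(user_text: str, model_reply: str) -> str:
    """Return the emergency message if a red flag matches, else the model reply."""
    if any(pattern.search(user_text) for pattern in RED_FLAGS):
        return EMERGENCY_MESSAGE
    return model_reply


print(triage_reply("I've had chest pain since this morning", "Try resting."))
print(triage_reply("My knee hurts after running", "Try resting."))
```

Because the rule overrides the model rather than depending on it, the response to a matched query stays the same however the rest of the prompt is framed.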

Furthermore, mandatory incident reporting could align digital products with medical device standards.

Engineers can refine prompts, yet thorough oversight needs trained professionals.

Professionals can enhance their expertise with the AI Prompt Engineer™ certification.

Such training helps teams anticipate scenarios that amplify the ChatGPT Health Risk.

Structured governance and skilled talent offer practical risk reduction paths.

Meanwhile, commercial leaders must weigh business and liability outcomes.

Business And Liability Impacts

Deaths linked to under-triage could trigger costly litigation.

Consequently, insurers may reprice coverage for firms deploying unsupervised health chatbots.

Brand erosion can follow even a single highly publicized mishap.

Conversely, companies that demonstrate superior safety may capture market share.

Additionally, integrating verified guardrails can attract hospital partnerships.

Investors already scrutinize governance disclosures when valuing digital health ventures.

Boards must treat the ChatGPT Health Risk as a material threat requiring direct oversight.

Financial incentives increasingly align with robust safety engineering.

Taken together, these findings reinforce the urgency of immediate action.

Independent medical science now documents substantial triage errors within ChatGPT Health.

Under-triage of critical events and inconsistent crisis safeguards create real dangers.

Moreover, the platform often over-triages healthy users, straining already crowded emergency departments.

Consequently, regulators, clinicians, and technologists must collaborate on transparent audits and enforceable standards.

Professionals can sharpen their prompt design skills through the AI Prompt Engineer™ certification, strengthening future guardrails.

Ultimately, addressing the ChatGPT Health Risk will determine whether conversational AI elevates care or undermines public trust.