AI CERTs


ByteDance DeerFlow 2.0: Next-Gen Agent Orchestration Framework

Open-source agents keep evolving at breakneck speed. ByteDance’s latest release, DeerFlow 2.0, pushes the trend even further. The upgraded framework transforms experimental research agents into production-ready systems. Consequently, engineering leaders are reevaluating build-versus-buy assumptions. At the core sits advanced agent orchestration that decomposes complex goals into parallel, sandboxed subtasks. Moreover, support for multiple models, including local options, promises cost and privacy control. Early GitHub numbers confirm momentum with 41,000 stars and counting. Nevertheless, heightened capability also introduces fresh governance demands. This article unpacks the launch, architecture, and practical safeguards that enterprises need. Readers will leave with a clear roadmap for responsible adoption.

Surging Open Source Momentum

DeerFlow 2.0 landed on GitHub in late February 2026 and promptly topped the trending chart. Within 48 hours, engineers worldwide forked the repository more than 4,800 times. Meanwhile, community blogs and newsletters dissected the codebase with palpable excitement. These adoption signals underscore growing demand for ready-made agent orchestration solutions that shorten prototype cycles.

However, popularity alone does not guarantee operational maturity. Therefore, decision makers must look beyond stars when evaluating sustained investment.

Short-term buzz highlights DeerFlow’s compelling promise, but deeper architectural inspection reveals the real differentiators. We now examine how the framework actually works.

Core Architecture Explained Clearly

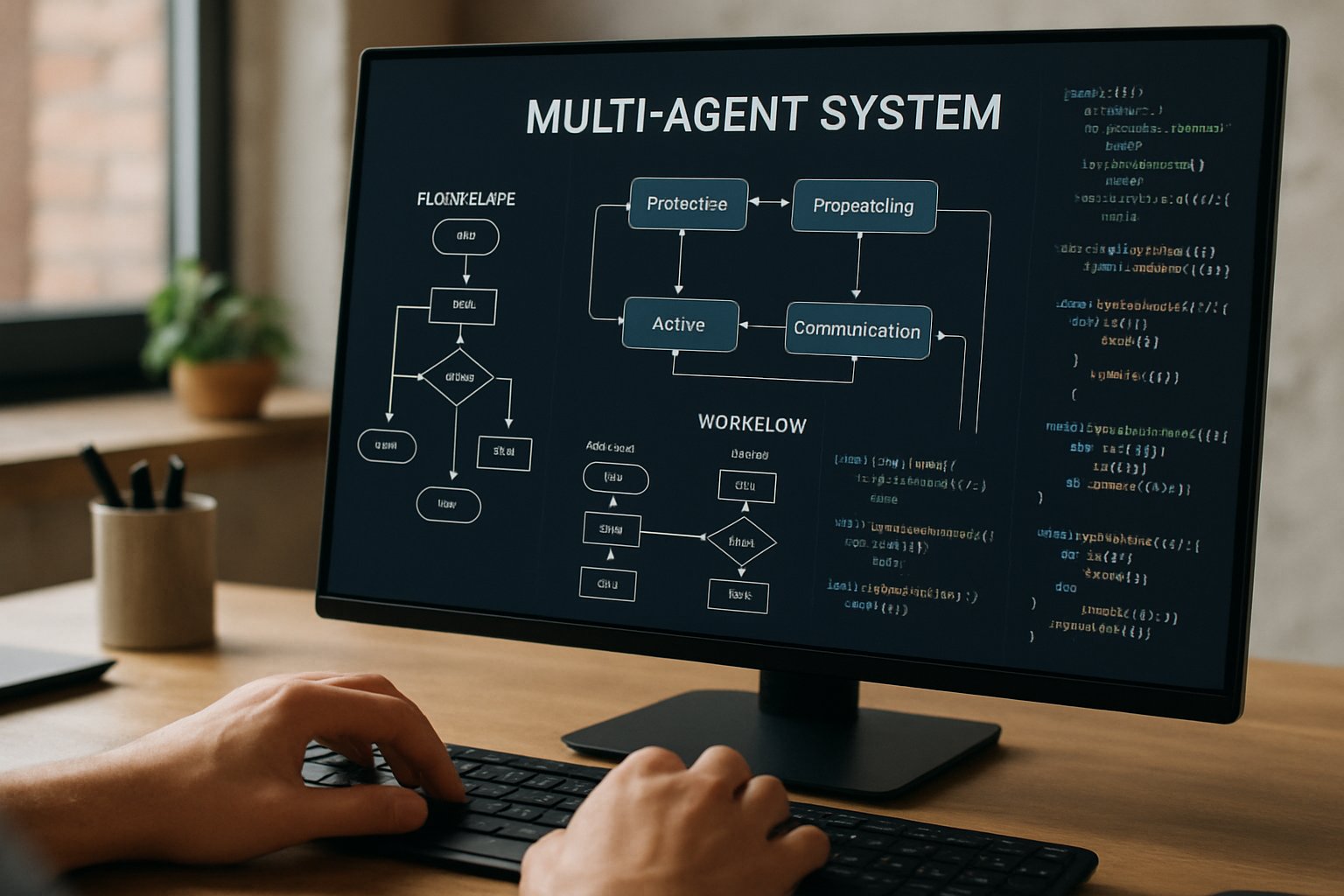

The project rebuilds the older Deep Research code into a layered LangStack design. At its center, version 2.0 introduces a dedicated primary orchestrator that plans, delegates, and monitors sub-agents. Each sub-agent receives a trimmed context window, executes its task, and returns structured results. Consequently, token usage drops while parallel throughput rises.
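The plan, delegate, and collect loop described above can be illustrated with a minimal Python sketch. It is not DeerFlow's code: `SubTask`, `SubResult`, `plan`, `run_sub_agent`, and `orchestrate` are hypothetical names, the 200-character trim is an arbitrary stand-in for a trimmed context window, and the sub-agent returns a canned summary where a real one would call a model.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class SubTask:
    name: str
    goal: str
    context: str  # only the slice of context this sub-agent needs


@dataclass
class SubResult:
    name: str
    summary: str
    context_chars: int


def plan(goal: str, notes: dict[str, str]) -> list[SubTask]:
    """Hypothetical planner: one subtask per topic, each with a trimmed context."""
    return [SubTask(topic, goal, text[:200]) for topic, text in notes.items()]


def run_sub_agent(task: SubTask) -> SubResult:
    """Stand-in for a model call; returns a structured result."""
    summary = f"{task.name}: findings for '{task.goal}'"
    return SubResult(task.name, summary, len(task.context))


def orchestrate(goal: str, notes: dict[str, str]) -> list[SubResult]:
    tasks = plan(goal, notes)
    # Sub-agents run in parallel; map() yields results in task order.
    with ThreadPoolExecutor(max_workers=4) as pool:
        return list(pool.map(run_sub_agent, tasks))


results = orchestrate(
    "compare frameworks",
    {"licensing": "x" * 1000, "benchmarks": "y" * 50},
)
for r in results:
    print(r.name, r.context_chars)
```

Because each sub-agent sees only its own slice, the 1,000-character licensing note is cut to 200 characters before it reaches the worker, which is the mechanism behind the token savings the article mentions.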

At runtime, the orchestrator spins up Docker sandboxes with persistent workspaces. Generated code runs inside the container, shielding host systems from unpredictable behavior. Moreover, the workspace filesystem survives across iterations, enabling hour-long research or build workflows.
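To make the idea of a disposable container with a durable workspace concrete, the sketch below assembles a `docker run` command line in Python without executing it. The flags are standard Docker CLI options, but the image name, host path, and script name are placeholders, and nothing here reflects how DeerFlow itself launches its sandboxes.

```python
from pathlib import PurePosixPath


def sandbox_command(workspace: PurePosixPath, image: str, script: str) -> list[str]:
    """Build an argv list for an ephemeral container with a persistent workspace."""
    return [
        "docker", "run",
        "--rm",                                  # container is discarded after each run
        "--cap-drop", "ALL",                     # drop Linux capabilities
        "--security-opt", "no-new-privileges",   # block privilege escalation
        "--user", "1000:1000",                   # run as a non-root user
        "-v", f"{workspace}:/workspace",         # host directory outlives the container
        "-w", "/workspace",
        image,
        "python", script,
    ]


cmd = sandbox_command(
    PurePosixPath("/srv/agent-workspaces/job-42"),  # placeholder host path
    "example/agent-sandbox:latest",                 # placeholder image name
    "task.py",                                      # placeholder script
)
print(" ".join(cmd))
```

The container is removed after every run, while files written under `/workspace` stay on the host, so the next iteration can pick up where the previous one stopped.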

This layered composition demonstrates disciplined agent orchestration in practice. Yet the team retained model agnosticism by supporting OpenAI, Gemini, and local backends through simple adapters.
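One common way to achieve this kind of model agnosticism is a small adapter interface that every backend implements. The following sketch assumes that pattern; `ChatBackend`, `EchoLocalBackend`, `CannedCloudBackend`, and `ask` are invented for illustration and do not call any real provider or mirror DeerFlow's actual adapter classes.

```python
from typing import Protocol


class ChatBackend(Protocol):
    """Hypothetical interface that every model adapter satisfies."""

    def complete(self, prompt: str) -> str: ...


class EchoLocalBackend:
    """Stand-in for a locally hosted model; it only echoes the prompt."""

    def complete(self, prompt: str) -> str:
        return f"[local] {prompt}"


class CannedCloudBackend:
    """Stand-in for a hosted API; it returns a fixed reply."""

    def __init__(self, reply: str) -> None:
        self.reply = reply

    def complete(self, prompt: str) -> str:
        return f"[cloud] {self.reply}"


BACKENDS: dict[str, ChatBackend] = {
    "local": EchoLocalBackend(),
    "cloud": CannedCloudBackend("ok"),
}


def ask(backend_name: str, prompt: str) -> str:
    """Route a prompt to whichever backend the configuration names."""
    try:
        backend = BACKENDS[backend_name]
    except KeyError:
        raise ValueError(f"unknown backend: {backend_name}") from None
    return backend.complete(prompt)


print(ask("local", "hi"))
print(ask("cloud", "hi"))
```

Swapping providers then becomes a configuration change: the calling code passes a different backend name and never touches provider-specific details.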

The architecture blends modularity with batteries-included tooling. Therefore, developers gain speed without sacrificing flexibility. Next, we inspect key runtime features that bolster autonomy.

Sandbox And Memory Features

DeerFlow’s sandbox relies on a hardened Docker image that includes a browser, shell, and Jupyter kernels. Consequently, agents can compile code, scrape sites, or render slides without leaving the container.

Long-term memory modules store user preferences and intermediate files in a vector database. Additionally, session context persists between runs, letting teams build incremental automation pipelines.

- Parallel sub-agents share the orchestrator’s memory yet run in isolation from one another.

- Memory retention policies are configurable per project or regulatory domain.

- Local checkpoints allow offline recovery after unexpected crashes.
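The retention and checkpoint ideas in the list above can be sketched in a few lines of Python. This is a simplified illustration: it keeps memory records in a plain list and a JSON file instead of a vector database, and `prune`, `save_checkpoint`, and `load_checkpoint` are hypothetical helpers, not DeerFlow functions.

```python
import json
import tempfile
from pathlib import Path


def prune(records: list[dict], max_age_seconds: float, now: float) -> list[dict]:
    """Apply a retention policy: keep only records younger than the limit."""
    return [r for r in records if now - r["stored_at"] <= max_age_seconds]


def save_checkpoint(path: Path, state: dict) -> None:
    """Write to a temp file first, then rename, so a crash never leaves a half-written file."""
    tmp = path.with_suffix(".tmp")
    tmp.write_text(json.dumps(state))
    tmp.replace(path)


def load_checkpoint(path: Path):
    """Return the saved state, or None when no checkpoint exists yet."""
    return json.loads(path.read_text()) if path.exists() else None


now = 1_000_000.0  # fixed timestamp so the example is deterministic
records = [
    {"key": "prefs", "stored_at": now - 10},
    {"key": "old_draft", "stored_at": now - 10_000},
]
kept = prune(records, max_age_seconds=3600, now=now)

with tempfile.TemporaryDirectory() as d:
    ckpt = Path(d) / "checkpoint.json"
    save_checkpoint(ckpt, {"step": 3, "memory": kept})
    restored = load_checkpoint(ckpt)

print([r["key"] for r in kept], restored["step"])
```

A per-project or per-regulation policy would simply pass a different `max_age_seconds`, and recovery after a crash starts from whatever `load_checkpoint` returns.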

Advanced sandboxes and memories close crucial capability gaps. With those in place, safety becomes the next hurdle, so we turn to governance challenges.

Security And Governance Realities

Powerful agent frameworks inevitably expand the attack surface. Edward Kiledjian cautions that DeerFlow behaves more like an application platform than a chat toy. Therefore, organizations must enforce strict code-execution and egress controls before production use.

Risk areas cluster around sandbox breakout, prompt injection, and secret leakage. Nevertheless, layered defenses can mitigate most vectors. Recommended controls include container isolation, network segmentation, approved model allow-lists, and audited memory retention.

Because agent orchestration automates decision loops, unchecked failures can propagate quickly. Consequently, governance playbooks should mirror those used for CI/CD pipelines and robotic process automation.

Robust policy beats naive trust, which makes a deployment checklist essential. We outline that checklist next.

Enterprise Deployment Checklist Overview

Security reviewers prefer concrete guidance over abstract warnings. Therefore, the following checklist synthesizes expert advice and project documentation.

- Run the orchestrator inside non-privileged Docker containers.

- Apply outbound network rules that block unauthorized endpoints.

- Select local or approved cloud models and document agent orchestration data flows.

- Audit sandbox images for supply-chain risks and license issues.

- Define memory retention, backups, and deletion triggers by regulation.
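Several of the checklist items above lend themselves to an automated preflight check. The sketch below is one possible shape for it, with every name invented for illustration: `DeploymentConfig`, `preflight`, the approved model names, and the allowed host are placeholders. The supply-chain audit of sandbox images is left out because it needs dedicated scanning tools.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.parse import urlparse

APPROVED_MODELS = {"local-llm", "approved-cloud-llm"}  # placeholder model names
ALLOWED_HOSTS = {"api.example.com"}                    # placeholder egress allow-list


@dataclass
class DeploymentConfig:
    privileged: bool
    run_as_root: bool
    model: str
    egress_urls: list[str]
    retention_days: Optional[int]


def preflight(cfg: DeploymentConfig) -> list[str]:
    """Return a list of checklist violations; an empty list means the config passes."""
    problems = []
    if cfg.privileged:
        problems.append("container must not run privileged")
    if cfg.run_as_root:
        problems.append("container must not run as root")
    if cfg.model not in APPROVED_MODELS:
        problems.append(f"model not approved: {cfg.model}")
    for url in cfg.egress_urls:
        host = urlparse(url).hostname
        if host not in ALLOWED_HOSTS:
            problems.append(f"egress host not allowed: {host}")
    if cfg.retention_days is None:
        problems.append("memory retention period is undefined")
    return problems


good = DeploymentConfig(False, False, "local-llm", ["https://api.example.com/v1"], 30)
bad = DeploymentConfig(True, False, "unknown-llm", ["https://evil.example.net"], None)

print(preflight(good))
print(preflight(bad))
```

Running a check like this in CI before each deployment turns the checklist into a gate instead of a document that reviewers must remember to consult.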

Adhering to these steps transforms enthusiasm into sustainable value. Skipping a step, by contrast, can invite cascading failures.

The checklist operationalizes abstract concerns. Consequently, teams can ship prototypes with confidence. Strategy, however, extends beyond controls and into ecosystem choices.

Strategic Open Ecosystem Positioning

ByteDance positions DeerFlow as an orchestrator that complements its Doubao and Volcengine model suite. However, the codebase remains deliberately model-agnostic, supporting OpenAI, Gemini, and local endpoints through interchangeable adapters.

This flexibility limits vendor lock-in while encouraging experimentation across emerging foundation models. Moreover, the approach aligns with broader open source trends favouring pluggable components over monoliths.

Strategic planners should map agent orchestration goals against cost, latency, and compliance requirements for each backend. Consequently, many enterprises will pilot with local models before escalating to premium APIs.
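A backend comparison of this kind can start as a simple filter-and-rank exercise. In the sketch below, the backend names and every cost and latency figure are made-up placeholders, not measurements of any real model, and `Backend` and `shortlist` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    name: str
    cost_per_1k_tokens: float   # placeholder figure, not a real price
    p95_latency_ms: int         # placeholder figure, not a real measurement
    data_stays_on_prem: bool


def shortlist(backends, max_latency_ms: int, require_on_prem: bool):
    """Drop backends that miss the latency or compliance bar, then rank by cost."""
    eligible = [
        b for b in backends
        if b.p95_latency_ms <= max_latency_ms
        and (b.data_stays_on_prem or not require_on_prem)
    ]
    return sorted(eligible, key=lambda b: b.cost_per_1k_tokens)


candidates = [
    Backend("local-small", 0.0, 900, True),
    Backend("cloud-fast", 0.5, 300, False),
    Backend("cloud-premium", 2.0, 600, False),
]

strict = shortlist(candidates, max_latency_ms=1000, require_on_prem=True)
relaxed = shortlist(candidates, max_latency_ms=700, require_on_prem=False)

print([b.name for b in strict])
print([b.name for b in relaxed])
```

Treating compliance and latency as hard filters and cost as the ranking key keeps the decision auditable; teams with softer requirements might prefer a weighted score instead.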

An open posture widens optionality. Nevertheless, clear criteria simplify future migrations. Finally, we explore where the technology heads next.

Future Of Agent Orchestration

Industry analysts predict that agent orchestration will underpin advanced research, coding, and multimedia content pipelines. Meanwhile, open-source maintainers race to embed richer planning graphs, self-healing loops, and policy evaluation engines. Additionally, enterprise platforms will demand attestable safety metrics and signed build artifacts.

Professionals can enhance their expertise through specialized credentials. They may pursue the AI Writer™ certification to validate orchestration skills. Nevertheless, governance frameworks must evolve in parallel. Therefore, expect tighter alignment between model providers, tooling vendors, and regulators.

The roadmap points to composable, auditable agents. Consequently, early adopters secure a strategic advantage. Those prospects shape our final verdict.

DeerFlow 2.0 shows how thoughtful design converts research prototypes into enterprise tools. The framework’s multi-agent planning, sandboxed execution, and memory modules lower experimentation friction. Nevertheless, unguarded deployments can magnify error and risk. Therefore, teams should pair the published checklist with rigorous audits. When executed responsibly, agent orchestration empowers lean crews to automate hours of analysis and coding. Moreover, an adaptive ecosystem of local and cloud models keeps budgets predictable. Professionals eager to lead this shift should secure credentials and contribute to the still-growing codebase. Act now, explore DeerFlow, and turn emerging automation into measurable advantage.