AI CERTS

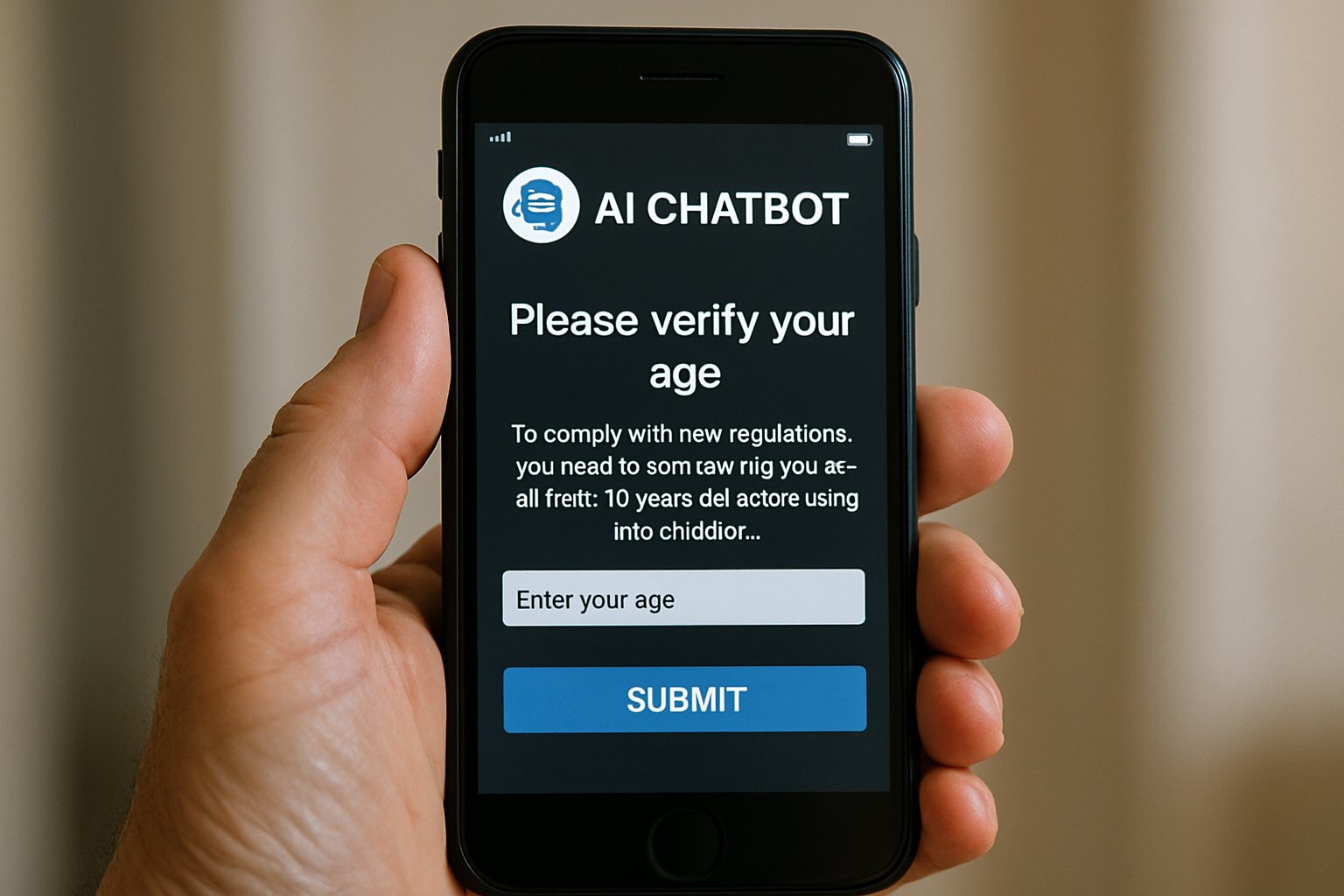

Australia Compliance: AI Chatbots Face New Safety Deadline

Australian regulators have warned that AI chatbot operators who breach the country's updated safety codes could face penalties of up to A$49.5 million per breach. Strategic observers now track Australia's compliance regime as a global bellwether, and vendors, investors, and policy teams are seeking clarity before the fast-approaching deadline. The codes extend beyond pornography to violence, self-harm, and eating disorders, yet many operators still lack public plans for protective controls.

Stakeholders question whether app stores or search engines will soon face demands to block laggards. The compliance landscape therefore carries technical, legal, and reputational stakes, and professionals must understand the key requirements, enforcement tools, and implementation options. This article outlines the critical facts and strategic actions now shaping Australia Compliance.

Codes Expand Service Scope

The updated codes classify AI chatbots as designated content services. Consequently, they must deploy proportionate age assurance systems before providing adult material. The scope now spans pornography, graphic violence, eating disorders, and self-harm content. The rules are also technology-neutral, allowing multiple verification methods.

Services must also limit personal data collection and respect the Privacy Act. Failure to comply triggers the civil penalty regime, with fines of up to A$49.5 million per breach.

These provisions clearly widen regulatory reach. However, understanding specific harm categories is essential for risk assessment.

Targeted Harm Content Categories

The Class 1C and Class 2 categories capture material that is legal yet inappropriate for minors. Regulators cite extreme violence, sexual content, self-harm imagery, and disordered-eating tips. Any chatbot capable of generating such content must therefore verify users' ages or block that content for underage users. Consequently, many AI vendors face urgent architectural changes.

Defined categories set transparent compliance benchmarks. The Reuters review metrics use these same definitions.

Reuters Review Findings

One week before the deadline, a Reuters review examined fifty leading text AI products. It found that only nine had introduced or announced functional age assurance systems. Additionally, eleven tools planned to block Australian users altogether. Meanwhile, about thirty showed no visible moves toward Australia Compliance.

The report found that only a minority of services had complied, with the gaps most visible among companion chatbot startups. In contrast, larger vendors such as OpenAI and Anthropic disclosed partial safeguards. Lisa Given from RMIT said the results were unsurprising. She argued that many developers ignore safety controls during early design.

- 50 products assessed across web and mobile channels

- 9 platforms with introduced or announced age assurance systems

- 11 platforms preparing geographic blocks

- ~30 platforms showing no visible compliance steps

- Potential fine: A$49.5 million per breach
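As a quick arithmetic check, the review's counts can be expressed as shares of the 50-product sample. The sketch below uses only the figures quoted above; the "about thirty" count is approximate, so its share is approximate too, and the variable names are illustrative.

```python
# Counts reported in the Reuters review (the last figure is approximate).
SAMPLE_SIZE = 50
counts = {
    "age_assurance": 9,       # introduced or announced age assurance
    "geo_block": 11,          # planned to block Australian users
    "no_visible_action": 30,  # "about thirty" in the review
}


def share(count: int, total: int = SAMPLE_SIZE) -> float:
    """Return count as a percentage of the sample."""
    return 100.0 * count / total


shares = {name: share(n) for name, n in counts.items()}
for name, pct in shares.items():
    print(f"{name}: {pct:.0f}%")
```

On these figures, roughly 18% of the sample had age assurance in place or announced, 22% planned geographic blocks, and about 60% showed no visible action.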

Consequently, the data underscores the enforcement challenge facing eSafety. Nevertheless, the sample offers a baseline for post-deadline audits.

The Reuters review supplies the clearest public benchmark. Next, platforms must decide which compliance option suits their risk appetite.

Compliance Options Explained

Platforms can pursue three main strategies. Firstly, they may embed robust age assurance systems using ID or biometrics. Secondly, they can apply blanket content filters for risky queries. Thirdly, they could block Australian access before the enforcement deadline.

However, regulators prefer precise verification over broad censorship. The codes permit flexible technical choices provided privacy impacts remain minimal, and services often combine browser signals, documented consent, and third-party checks. Whichever option is chosen, a thorough risk assessment should underpin every Australia Compliance plan.
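To make the three strategies concrete, here is a minimal Python sketch of how a request gate might differ under each one. It is an illustration under stated assumptions, not a description of any vendor's system or of what the codes require: the `Request` fields, the `gate` function, and the idea of a content classifier flag are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Strategy(Enum):
    AGE_ASSURANCE = "age_assurance"
    CONTENT_FILTER = "content_filter"
    GEO_BLOCK = "geo_block"


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_AGE_CHECK = "require_age_check"
    REFUSE = "refuse"


@dataclass
class Request:
    country: str              # ISO country code such as "AU" (hypothetical field)
    age_verified_adult: bool  # outcome of an earlier age assurance step
    restricted_content: bool  # flag from a hypothetical content classifier


def gate(req: Request, strategy: Strategy) -> Decision:
    """Decide how to handle one request under the chosen strategy."""
    if req.country != "AU":
        return Decision.ALLOW  # other jurisdictions are out of scope here
    if strategy is Strategy.GEO_BLOCK:
        return Decision.REFUSE  # no Australian access at all
    if not req.restricted_content:
        return Decision.ALLOW   # unrestricted content passes either way
    if strategy is Strategy.CONTENT_FILTER:
        return Decision.REFUSE  # blanket filter, regardless of age
    # AGE_ASSURANCE: restricted content only for verified adults
    if req.age_verified_adult:
        return Decision.ALLOW
    return Decision.REQUIRE_AGE_CHECK
```

The sketch shows why verification is the more precise option: the blanket filter refuses restricted content to verified adults as well, while the age assurance path refuses nobody outright and instead asks unverified users to complete a check.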

Permitted Verification Methods

eSafety lists photo ID, facial age estimation, credit card checks, and digital wallets as acceptable methods. Additionally, parent authorisation portals qualify when paired with data minimisation. However, relying solely on self-declared age likely breaches the codes, so vendors are integrating layered defences to satisfy the regulator.

Flexible methods support innovation. However, execution quality will determine whether a service ends up among the compliant minority.
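The layering logic described above can be sketched in a few lines of Python. This is a simplified illustration, not eSafety's test: the method labels, the `age_assured` function, and the `data_minimised` flag are hypothetical names, and whether a given method is sufficient in practice depends on the codes and regulator guidance.

```python
# Labels for the methods named in the article (identifiers are hypothetical).
STRONG_METHODS = frozenset(
    {"photo_id", "facial_estimation", "credit_card", "digital_wallet"}
)


def age_assured(passed_checks: set[str], data_minimised: bool = False) -> bool:
    """Return True if the passed checks amount to more than self-declaration.

    passed_checks: labels of the checks the user has completed.
    data_minimised: whether the parent authorisation flow limits data collection.
    """
    if passed_checks & STRONG_METHODS:
        return True
    # Parent authorisation counts only when paired with data minimisation.
    if "parent_authorisation" in passed_checks and data_minimised:
        return True
    # Self-declared age alone, or no check at all, is not enough.
    return False
```

For example, `age_assured({"self_declared"})` returns `False`, while `age_assured({"self_declared", "facial_estimation"})` returns `True`.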

Enforcement Levers Detailed

The Online Safety Act grants wide investigative powers. Consequently, eSafety can issue formal warnings and binding directions. Civil penalties may reach A$49.5 million per contravention. Moreover, gatekeepers like app stores and search engines risk inclusion in enforcement actions.

eSafety Commissioner Julie Inman Grant signalled willingness to delist non-compliant chatbots. In contrast, industry group DIGI reminded firms that responsibility rests with service operators. As a result, Australia Compliance discussions now feature gatekeeper liability scenarios. Nevertheless, final measures will consider proportionality and due process.

Strong levers create credible deterrence. Therefore, proactive compliance offers financial and reputational protection.

Industry Response Patterns

Major general-purpose chatbots moved fastest. OpenAI, Anthropic, and Character.AI all announced new age assurance systems. Meanwhile, smaller companion tools like Nomi struggled with resource limits. Consequently, some opted for temporary Australian blocks.

Market analysts observe growing demand for plug-and-play verification providers, and identity-tech start-ups now pitch instant compliance toolkits. Professionals can enhance oversight skills with the AI Government Specialist™ certification, and workforce upskilling more broadly helps sustain Australia Compliance efforts.

Industry responses remain uneven. However, competitive pressures will accelerate best-practice sharing.

Strategic Planning Steps Ahead

Boards and product leads should map exposure across all Australian user journeys. Additionally, teams must document harm categories reachable through generative prompts. Risk matrices will inform whether age assurance systems or content filters fit best. Consequently, procurement of verification vendors should begin well before the next deadline.
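A risk matrix of the kind mentioned above could start as simply as the following Python sketch. Everything in it is an assumption for illustration: the 1 to 3 rating scales, the likelihood-times-impact score, the thresholds, and the "monitor" outcome are placeholders that a real risk team would set for itself, not values taken from the codes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    category: str    # harm category reachable through prompts
    likelihood: int  # 1 (rare) to 3 (likely), an assumed scale
    impact: int      # 1 (low) to 3 (severe), an assumed scale

    @property
    def score(self) -> int:
        """Simple likelihood x impact score (assumed scoring rule)."""
        return self.likelihood * self.impact


def recommend(risk: Risk) -> str:
    """Map a score to a control; the thresholds are placeholders."""
    if risk.score >= 6:
        return "age_assurance"
    if risk.score >= 3:
        return "content_filter"
    return "monitor"


# Illustrative ratings only; these are not assessments from the article.
matrix = [
    Risk("pornography", likelihood=3, impact=3),
    Risk("graphic violence", likelihood=2, impact=2),
    Risk("self-harm", likelihood=1, impact=3),
    Risk("eating disorders", likelihood=1, impact=2),
]

for risk in sorted(matrix, key=lambda r: r.score, reverse=True):
    print(f"{risk.category}: score {risk.score} -> {recommend(risk)}")
```

Keeping the matrix in a reviewable form like this also gives legal and audit teams a record of why each control was chosen.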

Legal counsel must monitor guidance updates and any early enforcement actions. Moreover, periodic audits will demonstrate good-faith efforts to regulators. In contrast, waiting invites larger remediation costs and reputational damage. Accordingly, Australia Compliance should be tracked on executive-level dashboards.

- Conduct gap analysis against code requirements

- Select verification technology with minimal data retention

- Update policies and user flows

- Train moderation and engineering teams

- Prepare incident response playbooks

Therefore, disciplined project management ensures progress toward full compliance. These preparatory moves position firms for smoother audits.

Structured planning mitigates regulatory and reputational risk. Next, we summarise key insights and outline immediate actions.

Australia’s expanded safety codes mark a pivotal moment for generative AI governance. The Reuters review found that only a minority of the sector had complied: a week before the deadline, just nine of the fifty products assessed had introduced or announced age assurance systems. Regulators possess robust levers, including heavy fines and potential gatekeeper interventions. However, flexible verification choices give vendors practical implementation pathways. Industry leaders already treat Australia Compliance as a template for other jurisdictions.

Ultimately, achieving Australia Compliance will differentiate trustworthy AI brands. Therefore, proactive investment in audits and staff expertise remains prudent. Professionals can deepen policy mastery through the AI Government Specialist™ certification. Act now to protect users, preserve trust, and stay ahead of global standards.