AI CERTS

3 months ago

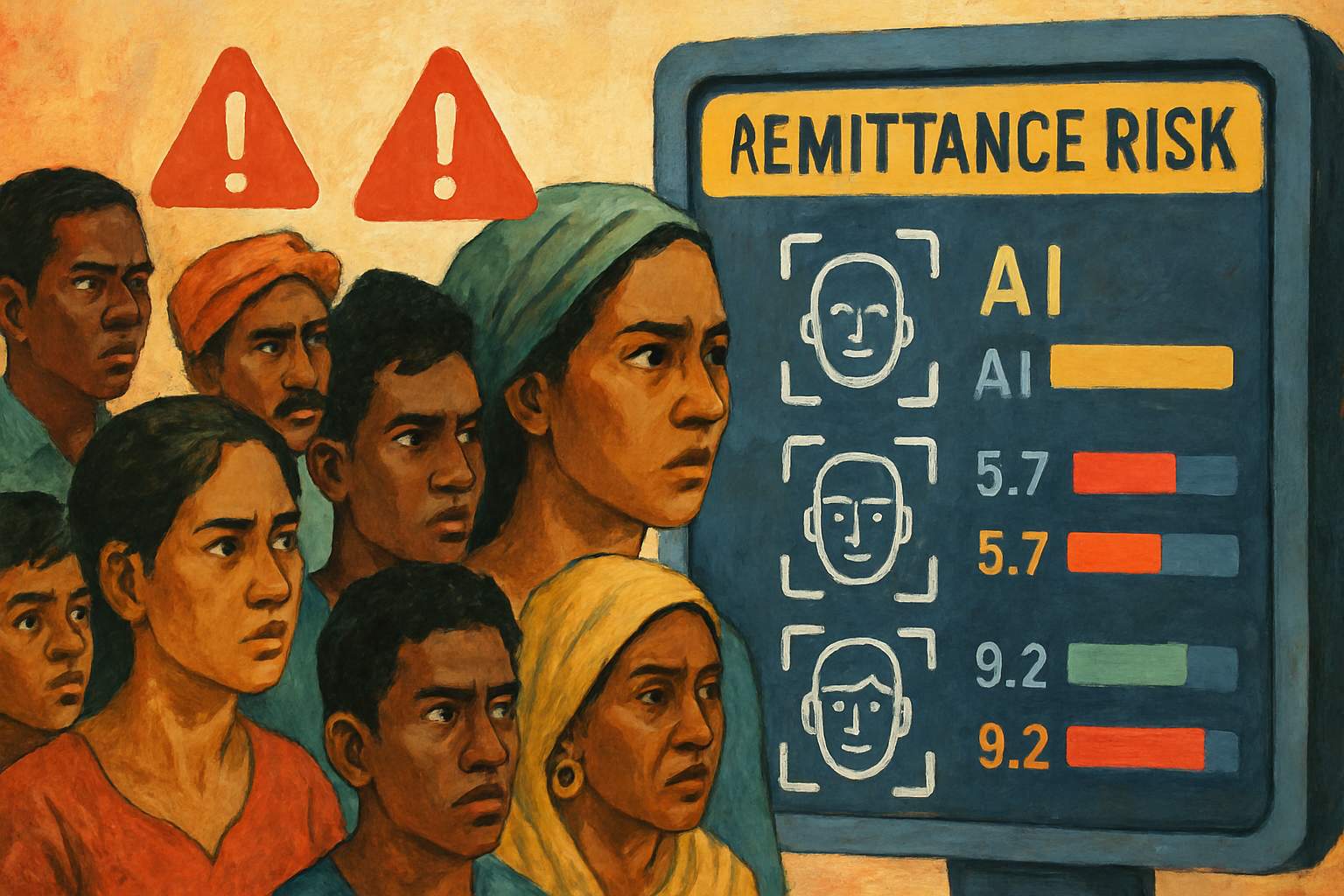

Algorithmic Bias Raises Remittance Risks for the Global South

Industry vendors promise smarter Fraud Detection through machine learning, yet explainability and data quality remain unresolved. Meanwhile, regulators urge banks to protect humanitarian transfers without easing enforcement pressure. This article unpacks the evidence, weighs Human Rights concerns, and explores practical mitigation steps. Readers will see why governance must evolve before automation narrows access further. Therefore, professionals should monitor technical metrics and policy debates with equal intensity.

False Positive Cost Surge

Legacy rule-based monitors often tag legitimate payments as suspicious. Studies place false positive rates between 70 and 95 percent across large banks. Such noise balloons operational costs, delays funds, and hardens conservative risk postures. Algorithmic Bias compounds the issue when models learn skewed transaction patterns.
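The 70 to 95 percent figure is easier to interpret once the denominator is pinned down. The sketch below uses hypothetical alert counts, not data from any cited study, and the function names are illustrative. It contrasts two common readings of "false positive rate": the share of raised alerts that prove legitimate, and the share of all legitimate payments that get flagged.

```python
def alert_false_positive_share(true_positives: int, false_positives: int) -> float:
    """Share of raised alerts that turned out to be legitimate payments."""
    alerts = true_positives + false_positives
    if alerts == 0:
        raise ValueError("no alerts were raised")
    return false_positives / alerts


def false_positive_rate(false_positives: int, true_negatives: int) -> float:
    """Share of all legitimate payments that were wrongly flagged."""
    legitimate = false_positives + true_negatives
    if legitimate == 0:
        raise ValueError("no legitimate payments in the sample")
    return false_positives / legitimate


# Hypothetical month: 1,000 alerts, of which 100 were confirmed suspicious,
# plus 99,000 legitimate payments that were never flagged.
share = alert_false_positive_share(true_positives=100, false_positives=900)
rate = false_positive_rate(false_positives=900, true_negatives=99_000)
print(f"share of alerts that are false: {share:.1%}")
print(f"share of legitimate payments flagged: {rate:.2%}")
```

With these made-up counts, nine in ten alerts are false while under one percent of legitimate payments are flagged, so the two readings can diverge sharply.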

Consequently, analysts face alert backlogs while customers endure multi-day holds. Moreover, every delayed payment increases overall corridor expense through manual reviews and recall fees. These pressures set the stage for broader corridor contraction, as shown next.

False positives inflate compliance costs and erode remittance flows. Reduced profitability nudges institutions toward corridor exit decisions. The next section examines how this trend burdens Global South families.

Global South Corridor Strain

Remittances often outpace foreign aid for many Global South economies. Nevertheless, corridors into Caribbean and Pacific states lost numerous correspondent partners during recent de-risking waves. Fewer rails concentrate power among a handful of intermediaries, magnifying single-provider outages. Algorithmic Bias within those remaining systems therefore carries outsized consequences.

World Bank data show that sending $200 still costs about 6.3 percent of the amount sent, averaged globally. Moreover, reduced competition correlates with higher average fees and longer settlement times. Families often switch to informal channels, which increases their fraud exposure and strips away consumer protections.
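To put the percentage in cash terms, the short calculation below applies the 6.3 percent figure to a $200 transfer. It assumes, for simplicity, that the full cost is deducted from the amount sent. In practice the cost may be split between an upfront fee and an exchange-rate margin. The function name is illustrative.

```python
from decimal import Decimal, ROUND_HALF_UP


def remittance_cost(amount: Decimal, cost_percent: Decimal) -> Decimal:
    """Total cost in currency units, rounded to the nearest cent."""
    cost = amount * cost_percent / Decimal("100")
    return cost.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


amount = Decimal("200")
cost = remittance_cost(amount, Decimal("6.3"))
received = amount - cost  # simplifying assumption: cost comes out of the transfer
print(f"cost: ${cost}, value received: ${received}")
```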

Corridor concentration raises prices and vulnerability for Global South households. Algorithmic misfires hit them hardest due to limited alternatives. Next, we assess whether smarter AI can reverse or reinforce these outcomes.

AI Promise And Peril

Machine-learning vendors advertise 40-70 percent false positive reductions after deployment. Additionally, dynamic models detect emerging typologies faster than static rules. Feedzai, Silent Eight, and similar firms report significant wins for Fraud Detection teams. Professionals can validate those claims through robust testing and external audits.
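One way to test a claimed reduction is to back-test the legacy rules and the candidate model on the same labeled transactions, then compare false positives while confirming that detection has not slipped. The sketch below uses invented counts and hypothetical helper names. It is not any vendor's evaluation method.

```python
def relative_reduction(baseline: int, candidate: int) -> float:
    """Fractional drop from baseline to candidate (0.5 means a 50% cut)."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - candidate) / baseline


def recall(true_positives: int, false_negatives: int) -> float:
    """Share of truly suspicious payments that the system caught."""
    total = true_positives + false_negatives
    if total == 0:
        raise ValueError("no suspicious payments in the sample")
    return true_positives / total


# Hypothetical back-test results on one labeled month of transactions.
legacy = {"false_positives": 900, "true_positives": 95, "false_negatives": 5}
ml_model = {"false_positives": 400, "true_positives": 94, "false_negatives": 6}

fp_reduction = relative_reduction(
    legacy["false_positives"], ml_model["false_positives"]
)
recall_drop = recall(legacy["true_positives"], legacy["false_negatives"]) - recall(
    ml_model["true_positives"], ml_model["false_negatives"]
)
print(f"false positive reduction: {fp_reduction:.1%}")
print(f"recall lost: {recall_drop:.1%}")
```

Reporting both numbers together matters because a false positive reduction that comes with a large loss of recall simply shifts the error from legitimate senders to missed illicit activity.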

However, Algorithmic Bias lurks when training data omits non-Western naming conventions or informal savings clubs. Consequently, models may over-score risk for entirely lawful Global South transfers. Limited explainability compounds the problem, leaving analysts unable to override flawed probability scores quickly. Therefore, benefits hinge on balanced datasets, human-in-the-loop reviews, and transparent performance metrics.

AI can streamline Fraud Detection yet introduces new fairness challenges. Governance choices determine whether gains outweigh Algorithmic Bias harms. Those harms raise acute Human Rights concerns addressed below.

Human Rights Dimensions Unfold

Access to affordable remittances underpins education, health, and housing rights. Consequently, wrongful blocks threaten multiple Human Rights enshrined in international covenants. Delayed funds can force medicine rationing or missed school fees. UN special rapporteurs increasingly spotlight financial exclusion stemming from Algorithmic Bias.

Moreover, appeal processes remain opaque, especially when decisions originate from black-box models. Recipients often lack digital literacy or stable connectivity to contest errors. Civil society groups urge mandatory documentation of model decisions and consumer notices within 24 hours.

Algorithmic misclassification jeopardizes essential Human Rights for vulnerable populations. Transparent remedies are vital to restore fairness and dignity. Regulatory attitudes toward these issues are now shifting, as the following section explains.

Regulators Signal Careful Oversight

FATF reiterates that AML controls should not disrupt legitimate humanitarian and remittance flows. Furthermore, its February 2025 statement explicitly warns against excessive de-risking. National supervisors now request explainability reports when institutions deploy advanced Fraud Detection. Algorithmic Bias metrics appear in consultation papers across Europe, Asia, and North America.

Meanwhile, lawmakers study correspondent banking decline and consider safe-harbor provisions for low-value payments. Some propose shared utilities to screen transactions, reducing duplicated cost and model complexity. Consistent disclosure standards remain a pending challenge.

Policymakers recognize automation risks and demand balanced enforcement. Clear guidance nudges firms toward proportionate, transparent controls. Practitioners can act today by strengthening internal safeguards, discussed next.

Practical Risk Mitigation Steps

Bank compliance leaders can implement layered controls to curb Algorithmic Bias. First, curate diverse training data covering Global South transaction patterns. Second, set performance targets that balance false positives against false negatives. Third, maintain human review for high-impact holds above predefined monetary thresholds.
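The second and third steps can be sketched as a cost-weighted threshold search followed by a routing rule. Everything here is an assumption made for illustration: the cost weights, the candidate thresholds, the `auto_hold` outcome for smaller flagged payments, and the $1,000 review floor are hypothetical, and real programs would calibrate them against their own risk appetite.

```python
from dataclasses import dataclass
from typing import Iterable, Sequence


@dataclass(frozen=True)
class ScoredPayment:
    amount_usd: float
    risk_score: float  # model output in [0, 1]
    is_illicit: bool   # label from past investigations


def total_error_cost(payments: Iterable[ScoredPayment], threshold: float,
                     fp_cost: float, fn_cost: float) -> float:
    """Sum the weighted cost of wrongly flagged and wrongly released payments."""
    cost = 0.0
    for p in payments:
        flagged = p.risk_score >= threshold
        if flagged and not p.is_illicit:
            cost += fp_cost   # legitimate payment delayed
        elif not flagged and p.is_illicit:
            cost += fn_cost   # illicit payment missed
    return cost


def pick_threshold(payments: Sequence[ScoredPayment], candidates: Sequence[float],
                   fp_cost: float, fn_cost: float) -> float:
    """Return the candidate threshold with the lowest weighted error cost."""
    return min(candidates,
               key=lambda t: total_error_cost(payments, t, fp_cost, fn_cost))


def route(payment: ScoredPayment, threshold: float, review_floor_usd: float) -> str:
    """Send flagged payments at or above the monetary floor to a human analyst."""
    if payment.risk_score < threshold:
        return "release"
    if payment.amount_usd >= review_floor_usd:
        return "human_review"
    return "auto_hold"


# Hypothetical labeled history used to tune the threshold.
history = [
    ScoredPayment(50.0, 0.20, False),
    ScoredPayment(300.0, 0.55, False),
    ScoredPayment(500.0, 0.65, False),
    ScoredPayment(1200.0, 0.70, True),
    ScoredPayment(80.0, 0.90, True),
]
threshold = pick_threshold(history, [0.5, 0.6, 0.7, 0.8], fp_cost=1.0, fn_cost=10.0)
decisions = [route(p, threshold, review_floor_usd=1000.0) for p in history]
```

Making the two cost weights explicit forces the trade-off between false positives and false negatives into the open, where it can be reviewed and challenged rather than buried in a default setting.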

Key operational priorities include:

- Regular fairness audits contrasting corridor-level error rates.

- Real-time customer notifications with clear dispute pathways.

- Cross-industry data sharing to improve Fraud Detection accuracy.
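A corridor-level fairness audit of the kind listed first could start from something as simple as the sketch below. It computes, per corridor, the share of legitimate payments that were flagged, then reports the ratio between the worst and best corridor. The corridor labels, record layout, and counts are hypothetical, and a real audit would add confidence intervals and minimum sample sizes.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Each record: (corridor, was_flagged, was_illicit)
Record = Tuple[str, bool, bool]


def corridor_false_positive_rates(records: Iterable[Record]) -> Dict[str, float]:
    """Per corridor, the share of legitimate payments that were flagged."""
    legitimate: Dict[str, int] = defaultdict(int)
    flagged_legitimate: Dict[str, int] = defaultdict(int)
    for corridor, was_flagged, was_illicit in records:
        if was_illicit:
            continue  # only legitimate payments can be false positives
        legitimate[corridor] += 1
        if was_flagged:
            flagged_legitimate[corridor] += 1
    return {c: flagged_legitimate[c] / n for c, n in legitimate.items()}


def disparity_ratio(rates: Dict[str, float]) -> float:
    """Highest corridor rate divided by the lowest; 1.0 means parity."""
    lowest = min(rates.values())
    if lowest == 0:
        return float("inf")
    return max(rates.values()) / lowest


# Hypothetical sample: 100 legitimate payments per corridor.
records = (
    [("corridor_a", True, False)] * 5 + [("corridor_a", False, False)] * 95
    + [("corridor_b", True, False)] * 20 + [("corridor_b", False, False)] * 80
)
rates = corridor_false_positive_rates(records)
print(rates, round(disparity_ratio(rates), 2))
```

In this invented sample, legitimate senders in the second corridor are flagged roughly four times as often as those in the first, which is the kind of gap an audit would escalate for review.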

Professionals can enhance their expertise with the AI Prompt Engineer™ certification.

Structured processes cut false positives and protect revenue. Certification programs build essential talent for ongoing model governance. Yet evidence gaps persist, demanding further research and transparency.

Continuing Evidence And Gaps

Public datasets rarely separate rule-based and machine-learning decision outcomes. Consequently, quantifying Algorithmic Bias across corridors remains challenging. Researchers need anonymized logs linking model type, reason codes, and resolution times. RegTech vendors could release aggregate statistics without revealing proprietary features.
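As a rough illustration of what such an anonymized log might contain, the sketch below defines one possible record layout and an aggregate that could be published without exposing model features. The field names, category values, and sample entries are assumptions made for this example, not an existing reporting standard.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import median
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class AlertLogEntry:
    corridor: str               # coarse label only, no customer identifiers
    model_type: str             # e.g. "rule_based" or "machine_learning"
    reason_code: str            # hypothetical code describing why the alert fired
    resolution_hours: float     # time from alert to final decision
    confirmed_suspicious: bool  # outcome of the investigation


def median_resolution_by_model(entries: Iterable[AlertLogEntry]) -> Dict[str, float]:
    """Median time to resolve an alert, grouped by the type of model that raised it."""
    hours: Dict[str, List[float]] = defaultdict(list)
    for entry in entries:
        hours[entry.model_type].append(entry.resolution_hours)
    return {model: median(values) for model, values in hours.items()}


# Hypothetical entries for illustration only.
log = [
    AlertLogEntry("corridor_a", "rule_based", "NAME_MATCH", 48.0, False),
    AlertLogEntry("corridor_a", "rule_based", "AMOUNT_PATTERN", 72.0, False),
    AlertLogEntry("corridor_b", "rule_based", "NAME_MATCH", 24.0, True),
    AlertLogEntry("corridor_b", "machine_learning", "ANOMALY_SCORE", 6.0, False),
    AlertLogEntry("corridor_b", "machine_learning", "ANOMALY_SCORE", 12.0, True),
]
summary = median_resolution_by_model(log)
print(summary)
```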

Journalists and academics plan interviews with Wise, Remitly, and central banks to gather missing metrics. Moreover, civil society organizations collect firsthand testimonies from affected migrants. Collaborative transparency will illuminate where Algorithmic Bias hits hardest.

Current blind spots hinder proportional regulatory responses. Rigorous data sharing will enable evidence-based solutions. The concluding section distills lessons and next steps.

Conclusion

Algorithmic Bias now intersects with de-risking to shape global payment access. False positives inflate costs, especially within fragile Global South corridors. AI promises operational relief yet demands strict governance, balanced datasets, and transparent metrics. Regulators increasingly push for risk-based approaches and fairness audits. Institutions that move early can protect clients, reduce penalties, and unlock growth in Remittances. Meanwhile, renewed focus on Human Rights keeps public pressure on compliance leaders. Consequently, professionals must champion robust Fraud Detection frameworks that respect inclusion. Explore advanced training and certifications to lead these critical transformations today.