AI CERTs

3 months ago

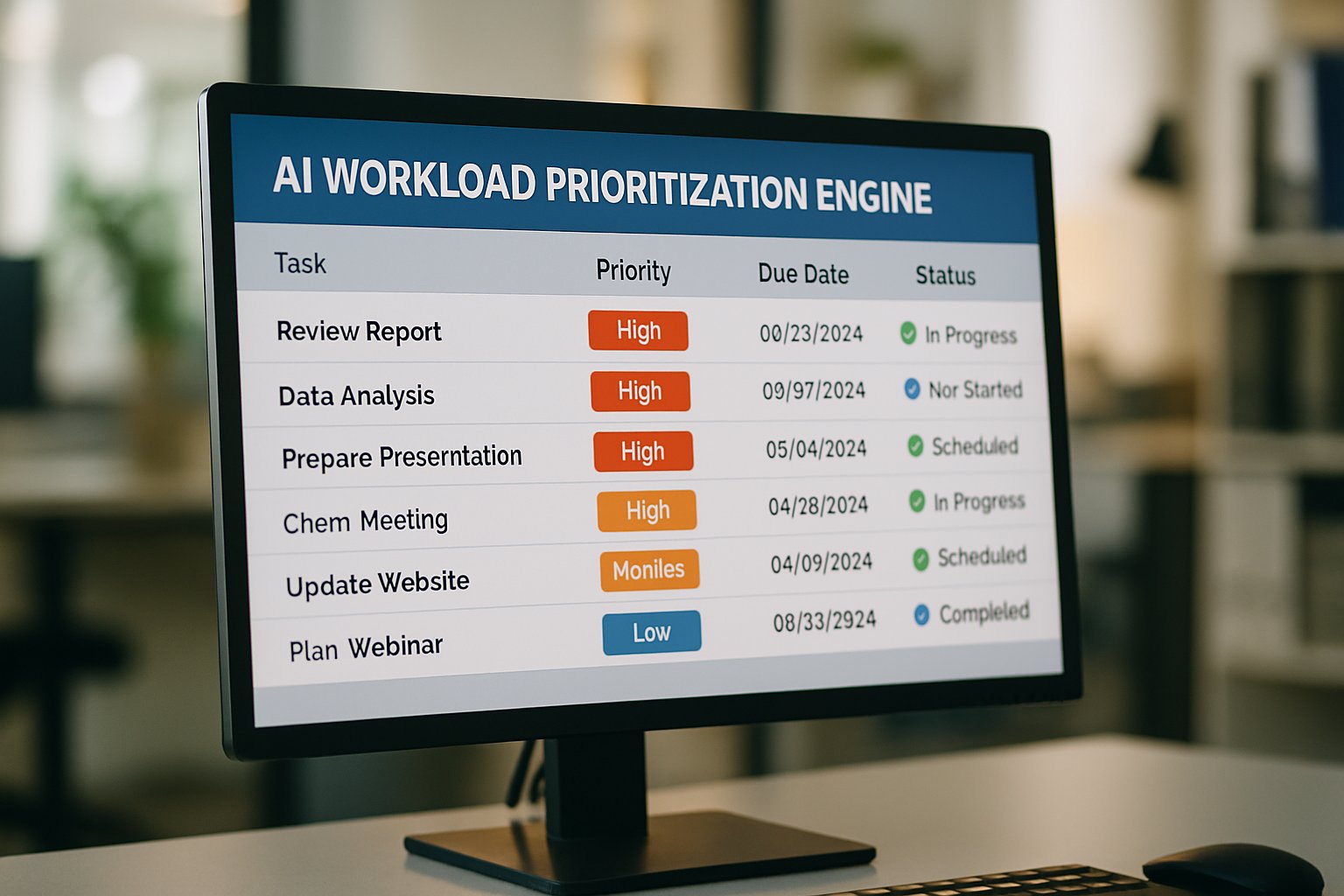

AI workload prioritization engines boost enterprise productivity

Enterprises racing to deploy generative AI hit a new bottleneck: deciding what runs first.

Meanwhile, compute budgets and human attention remain finite.

Consequently, prioritization mistakes waste GPUs and frustrate teams.

AI workload prioritization engines promise to automate these choices across clusters, projects, and daily to-do lists.

Furthermore, leaders see them as the next frontier for task orchestration and sustained operational efficiency.

Recent vendor moves and analyst warnings signal both promise and peril.

Google benchmarked preempting 39,000 Kubernetes Pods in 93 seconds to keep inference online.

In contrast, Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027.

Therefore, decision-makers need rigorous guidance before embracing automation at scale.

This article distills market trends, technical mechanics, risks, and next steps for professionals.

AI Workload Prioritization Engines

At their core, AI workload prioritization engines score, queue, and schedule every unit of work.

Moreover, they combine rule-based policies with machine learning signals such as latency sensitivity and business value.

Compute queueing systems such as Kubernetes Kueue decide which jobs are admitted to run, while task apps such as Asana rank daily assignments.
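To make the hybrid of rules and learned signals concrete, here is a minimal Python sketch. It assumes each workload carries a rule-assigned policy tier plus two model-predicted signals normalised to [0, 1]. The `Workload` fields, tier names, and weights are hypothetical illustrations, not any vendor's schema.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical policy tiers assigned by hand-written rules; a higher tier always wins.
TIER_RANK = {"critical": 2, "standard": 1, "best_effort": 0}


@dataclass
class Workload:
    name: str
    tier: str                   # rule-based policy label (hypothetical)
    latency_sensitivity: float  # model-predicted signal in [0, 1] (assumed)
    business_value: float       # model-predicted signal in [0, 1] (assumed)
    blocked: bool = False       # a rule can veto a workload outright


def score(w: Workload, w_latency: float = 0.6, w_value: float = 0.4) -> tuple[int, float]:
    """Sort key: the rule tier dominates, the learned blend breaks ties."""
    learned = w_latency * w.latency_sensitivity + w_value * w.business_value
    return (TIER_RANK[w.tier], learned)


def rank(workloads: list[Workload]) -> list[str]:
    # Rules act first as hard filters: vetoed work never reaches the queue.
    eligible = [w for w in workloads if not w.blocked]
    return [w.name for w in sorted(eligible, key=score, reverse=True)]


jobs = [
    Workload("batch-etl", "best_effort", 0.1, 0.9),
    Workload("chat-inference", "critical", 0.9, 0.7),
    Workload("fine-tune", "standard", 0.3, 0.8),
    Workload("nightly-eval", "standard", 0.2, 0.4),
    Workload("orphaned-job", "critical", 1.0, 1.0, blocked=True),
]
print(rank(jobs))  # ['chat-inference', 'fine-tune', 'nightly-eval', 'batch-etl']
```

Using a tuple as the sort key means no learned score can lift best-effort work above critical work. That is one way to keep rules authoritative while still letting models order work within a tier.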

As a result, the approach aligns scarce resources with strategic priorities rather than ad hoc local requests.

These engines automate complex trade-offs across compute and people flows.

Nevertheless, market forces shape how quickly organizations adopt them.

The next section unpacks those forces.

Market Drivers Emerge Quickly

Several converging signals underline rising demand for intelligent prioritization.

First, cloud GPU shortages push CIOs to stretch capacity.

Second, analysts link productivity gains to systems that reduce context switching by shifting mundane decisions to software.

OECD research estimates 26% of workers currently face generative-AI exposure, foreshadowing widespread demand for tools that keep work flowing.

- Google Kueue preempted 39,000 pods in 93 seconds, freeing 30% capacity.

- The market for AI in project management may reach USD 14.45B by 2034.

- Gartner forecasts that agentic AI will autonomously make at least 15% of day-to-day work decisions by 2028.

Consequently, vendors and investors sense a rapidly growing addressable market.

Demand also flows from complex task orchestration across globally distributed teams, where clarity boosts operational efficiency.

Capital, compute pressure, and workforce change converge to spur adoption.

However, understanding technical mechanics remains essential.

We now dissect how the engines work.

Core Engine Mechanics Explained

Every engine evaluates workload attributes such as deadline, size, and policy priority.

Then, scheduling algorithms choose placement or display ordering based on aggregate scores.
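The score-then-order step can be sketched with Python's standard `heapq` module. This sketch assumes a simple linear blend of policy priority, deadline urgency, and job size. The weights and the `urgency` formula are hypothetical choices for illustration; real schedulers use richer, product-specific logic.

```python
import heapq
import itertools


def aggregate_score(priority: int, hours_to_deadline: float, gpu_hours: float) -> float:
    """Higher means more urgent. All weights are illustrative assumptions."""
    urgency = 1.0 / max(hours_to_deadline, 0.1)  # closer deadlines score higher
    size_penalty = 0.01 * gpu_hours              # slightly favour small jobs
    return 10.0 * priority + 5.0 * urgency - size_penalty


class PriorityQueue:
    """Max-priority queue built on heapq, which is a min-heap."""

    def __init__(self) -> None:
        self._heap: list[tuple[float, int, str]] = []
        self._counter = itertools.count()  # FIFO tie-breaker for equal scores

    def push(self, name: str, score: float) -> None:
        # Negate the score so the highest score pops first.
        heapq.heappush(self._heap, (-score, next(self._counter), name))

    def pop(self) -> str:
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)


q = PriorityQueue()
q.push("train-llm", aggregate_score(priority=1, hours_to_deadline=48, gpu_hours=800))
q.push("hotfix-eval", aggregate_score(priority=1, hours_to_deadline=1, gpu_hours=2))
q.push("prod-inference", aggregate_score(priority=3, hours_to_deadline=0.5, gpu_hours=4))
order = [q.pop() for _ in range(len(q))]
print(order)  # ['prod-inference', 'hotfix-eval', 'train-llm']
```

The insertion counter keeps ordering stable when two workloads tie, so equal-score jobs run in arrival order instead of being compared by name.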

In compute clusters, Kubernetes PriorityClasses and Kueue queue policies let higher-priority workloads preempt lower-priority ones when capacity runs short.
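A simplified preemption pass might look like the following. It is a toy model that assumes a single pool of interchangeable GPUs. It is not the actual algorithm used by the Kubernetes scheduler or Kueue, which weigh further factors such as node placement, disruption budgets, and queue policies. The core idea is that when a higher-priority workload cannot fit, the lowest-priority running workloads are evicted until it does, and nothing of equal or higher priority is ever evicted.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Running:
    name: str
    priority: int
    gpus: int


def preempt(running: list[Running], capacity: int, incoming: Running) -> list[str] | None:
    """Return names to evict so `incoming` fits, or None if it cannot fit.

    Only strictly lower-priority workloads are eligible victims, and the
    lowest-priority ones are evicted first (a simplifying assumption).
    """
    free = capacity - sum(r.gpus for r in running)
    if free >= incoming.gpus:
        return []  # fits without evicting anything
    victims: list[str] = []
    candidates = sorted(
        (r for r in running if r.priority < incoming.priority),
        key=lambda r: r.priority,
    )
    for r in candidates:
        victims.append(r.name)
        free += r.gpus
        if free >= incoming.gpus:
            return victims
    return None  # even evicting every lower-priority job is not enough


pool = [Running("batch-a", 0, 4), Running("train-b", 1, 3), Running("serve-c", 5, 1)]
print(preempt(pool, capacity=8, incoming=Running("serve-d", 5, 4)))  # ['batch-a']
```

Returning `None` rather than a partial victim list avoids evicting jobs for a workload that still would not fit.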

Meanwhile, work-management platforms surface a ranked list to knowledge workers.

Moreover, fair-share quotas ensure no team starves during peak demand.
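Fair sharing can be approximated by always serving the team whose usage is smallest relative to its weighted share. The sketch below assumes hypothetical team weights and usage counted in GPU-hours. Kueue's own fair sharing is configured declaratively and has its own semantics, so treat this only as an illustration of the principle.

```python
from __future__ import annotations


def next_team(usage: dict[str, float], weights: dict[str, float], pending: set[str]) -> str | None:
    """Pick the pending team with the lowest usage-to-weight ratio."""
    candidates = [t for t in pending if weights.get(t, 0) > 0]
    if not candidates:
        return None
    # A lower ratio means the team has received less than its share so far;
    # the team name breaks exact ties so results are deterministic.
    return min(candidates, key=lambda t: (usage.get(t, 0.0) / weights[t], t))


def simulate(rounds: int, weights: dict[str, float], job_cost: float = 1.0) -> dict[str, float]:
    """Hand out equal-sized jobs, assuming every team always has work pending."""
    usage = {t: 0.0 for t in weights}
    for _ in range(rounds):
        team = next_team(usage, weights, set(weights))
        usage[team] += job_cost
    return usage


print(simulate(6, {"research": 2.0, "product": 1.0}))  # {'research': 4.0, 'product': 2.0}
```

With a 2:1 weighting, usage converges to a 2:1 split, and a team that falls behind is automatically served next, so no team starves.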

Some AI workload prioritization engines also apply reinforcement learning to refine scoring from historical throughput.

Such feedback loops reduce manual tuning time and support scalable task orchestration across functions.
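Full reinforcement learning is beyond a short example, but the feedback idea can be shown with an epsilon-greedy bandit, one of the simplest reinforcement-learning techniques. The engine tries candidate scoring-weight profiles, observes throughput, and gradually favours the profile that performs best. The profile names and the simulated throughput function are hypothetical stand-ins; a real engine would read throughput from its own telemetry.

```python
import random


def epsilon_greedy(profiles, measure_throughput, steps=500, epsilon=0.1, seed=0):
    """Return the profile with the best running-average reward, plus all estimates."""
    rng = random.Random(seed)  # seeded so runs are reproducible
    counts = {p: 0 for p in profiles}
    means = {p: 0.0 for p in profiles}
    for _ in range(steps):
        untried = [p for p in profiles if counts[p] == 0]
        if untried:
            choice = untried[0]                    # try every profile once
        elif rng.random() < epsilon:
            choice = rng.choice(profiles)          # explore
        else:
            choice = max(profiles, key=means.get)  # exploit the best estimate
        reward = measure_throughput(choice, rng)
        counts[choice] += 1
        # Incremental mean update: no need to store every observation.
        means[choice] += (reward - means[choice]) / counts[choice]
    return max(profiles, key=means.get), means


# Hypothetical simulator: each weight profile has a different true mean
# throughput (jobs per hour), observed with noise.
TRUE_MEAN = {"latency-first": 90.0, "value-first": 110.0, "balanced": 100.0}


def simulated_throughput(profile, rng):
    return rng.gauss(TRUE_MEAN[profile], 2.0)


best, estimates = epsilon_greedy(list(TRUE_MEAN), simulated_throughput)
print(best, {p: round(v, 1) for p, v in estimates.items()})
```

The `epsilon` parameter trades exploration against exploitation: a small value spends most decisions on the current best profile while still sampling the others occasionally, so the engine can notice if conditions change.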

These mechanics translate abstract goals into concrete execution choices.

Consequently, technical innovation at the compute layer deserves detailed attention.

The next section explores those advances.

Compute Layer Innovations Surge

Cloud providers now bake prioritization primitives directly into managed Kubernetes services.

Google's December 2025 update showcased Hotswap preemption blended with Kueue queue management.

Additionally, a 130,000-node simulation demonstrated 39,000 pods preempted in 93 seconds without SLA violations.
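For scale, the two reported figures imply an average of roughly 419 pods preempted per second. This is simple division on the numbers quoted, not a rate stated in the benchmark itself:

$$
\frac{39{,}000\ \text{pods}}{93\ \text{s}} \approx 419\ \text{pods/s}
$$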

Anyscale, for its part, has added GPU-native quotas and RayTurbo to its managed Ray platform.

Co-founder Robert Nishihara reported usage growing 44x year over year.

Therefore, competition accelerates feature maturity and price pressure.

Compute-level advances also ripple upward, enabling faster task orchestration when models finish training earlier.

As a result, AI workload prioritization engines integrate these APIs to deliver end-to-end control.

Because preemption protects latency targets without permanently reserving idle capacity, some teams report up to 25% cost savings per inference cluster.

Cloud vendors now treat prioritization as table stakes.

Nevertheless, people-facing workflows demand equal innovation.

We turn to those applications next.

Human Workflow Applications Expand

Task apps have rushed to embed AI that tells employees what to tackle next.

Asana, Wrike, and Motion market intelligent scores linked to deadlines, impact, and personal bandwidth.
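How deadlines, impact, and personal bandwidth might combine is easiest to see in a small planner. This greedy sketch is an assumption for illustration, not how Asana, Wrike, or Motion actually work. It ranks tasks by impact per hour, boosts tasks due within a day, then fills the day's available hours in that order.

```python
from dataclasses import dataclass


@dataclass
class Task:
    title: str
    impact: float          # hypothetical 1-10 business-impact rating
    hours: float           # estimated effort
    days_to_deadline: int


def plan_day(tasks: list[Task], available_hours: float) -> list[str]:
    def value(t: Task) -> float:
        urgency = 2.0 if t.days_to_deadline <= 1 else 1.0  # illustrative boost
        return urgency * t.impact / t.hours                 # impact per hour

    plan, remaining = [], available_hours
    for t in sorted(tasks, key=value, reverse=True):
        if t.hours <= remaining:  # skip tasks that no longer fit today
            plan.append(t.title)
            remaining -= t.hours
    return plan


tasks = [
    Task("Write launch brief", impact=8, hours=3, days_to_deadline=1),
    Task("Refactor dashboard", impact=6, hours=4, days_to_deadline=10),
    Task("Reply to legal review", impact=5, hours=1, days_to_deadline=0),
    Task("Plan offsite", impact=2, hours=2, days_to_deadline=30),
]
print(plan_day(tasks, available_hours=6))
# ['Reply to legal review', 'Write launch brief', 'Plan offsite']
```

Greedy filling is not optimal in general (choosing tasks under an hours budget is a knapsack problem), but it is fast and easy to explain to the person whose day is being planned.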

Moreover, Gartner named Asana a Leader in its Magic Quadrant for Adaptive Project Management and Reporting, in part for such capabilities.

Vendor case studies often claim 30–60% backlog reductions and double-digit satisfaction gains.

However, Gartner warns that over 40% of agentic AI projects will be canceled by the end of 2027, citing escalating costs and unclear business value.

To avoid disappointment, enterprises combine explainable scoring with human verification loops.

AI workload prioritization engines act here as decision aides rather than full autopilots until trust matures.
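One way to pair explainable scoring with human verification is to return each factor's contribution alongside the top pick and to route close calls or high-stakes decisions to a person. The factor names, weights, and review margin below are hypothetical.

```python
from __future__ import annotations

# Hypothetical factor weights; each feature is assumed normalised to [0, 1].
WEIGHTS = {"deadline": 0.5, "impact": 0.3, "dependencies": 0.2}


def explain(features: dict[str, float]) -> dict[str, float]:
    """Per-factor contributions; they sum to the task's overall score."""
    return {k: WEIGHTS[k] * features[k] for k in WEIGHTS}


def recommend(tasks: dict[str, dict[str, float]], margin: float = 0.05,
              high_stakes: bool = False) -> dict:
    breakdowns = {name: explain(f) for name, f in tasks.items()}
    totals = {name: sum(b.values()) for name, b in breakdowns.items()}
    ranked = sorted(totals, key=totals.get, reverse=True)
    # A near-tie between the top two means the engine is unsure: ask a person.
    close_call = len(ranked) > 1 and totals[ranked[0]] - totals[ranked[1]] < margin
    return {
        "top": ranked[0],
        "needs_human_review": high_stakes or close_call,
        "why": breakdowns[ranked[0]],  # shown to the reviewer as the explanation
    }


result = recommend({
    "renew-cert": {"deadline": 1.0, "impact": 0.6, "dependencies": 0.2},
    "update-docs": {"deadline": 0.2, "impact": 0.4, "dependencies": 0.1},
})
print(result["top"], result["needs_human_review"])  # renew-cert False
```

Because the breakdown is additive, a reviewer can see at a glance which factor drove the recommendation, which is what lets the engine act as a decision aide rather than an opaque autopilot.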

Consequently, benefits manifest incrementally yet measurably.

Early adopters report noticeable operational efficiency gains because meetings focus on execution, not reprioritizing tasks.

Human-centric tools translate complex project graphs into daily clarity.

Therefore, governance becomes the next critical hurdle.

The upcoming section tackles risk controls.

Governance, Risk, and Security Essentials

Autonomy introduces fresh attack surface and compliance exposure.

Security leaders highlight identity sprawl, prompt injection, and objective drift as priority threats.

In contrast, traditional schedulers rarely touched sensitive data directly.

Consequently, least-privilege design, audit logs, and explainability dashboards move from nice-to-have to baseline requirements.
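Least privilege and audit logging can be combined in a thin wrapper around every action an engine or agent takes. This sketch assumes a hypothetical allowlist of actions per agent identity and chains each log entry to the previous one with SHA-256, so later tampering is detectable. Production systems would rely on their identity provider and a managed, append-only log store instead.

```python
import hashlib
import json
import time

# Hypothetical least-privilege policy: each identity may perform only these actions.
ALLOWED = {
    "scheduler-agent": {"reorder_queue", "preempt_job"},
    "triage-agent": {"reorder_queue"},
}

audit_log: list[dict] = []


def _append(entry: dict) -> None:
    prev = audit_log[-1]["hash"] if audit_log else "0" * 64
    body = json.dumps(entry, sort_keys=True)
    # Each hash covers the previous hash, linking entries into a chain.
    entry["hash"] = hashlib.sha256((prev + body).encode()).hexdigest()
    audit_log.append(entry)


def perform(identity: str, action: str, target: str) -> bool:
    allowed = action in ALLOWED.get(identity, set())
    # Every attempt is logged, including denials, so reviewers also see probing.
    _append({"ts": time.time(), "who": identity, "action": action,
             "target": target, "allowed": allowed})
    return allowed


def verify_chain(log: list[dict]) -> bool:
    prev = "0" * 64
    for e in log:
        body = json.dumps({k: v for k, v in e.items() if k != "hash"}, sort_keys=True)
        if hashlib.sha256((prev + body).encode()).hexdigest() != e["hash"]:
            return False
        prev = e["hash"]
    return True


print(perform("triage-agent", "preempt_job", "train-llm"))     # False: not allowlisted
print(perform("scheduler-agent", "preempt_job", "train-llm"))  # True
print(verify_chain(audit_log))                                 # True
audit_log[0]["allowed"] = True                                 # simulate tampering
print(verify_chain(audit_log))                                 # False
```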

Palo Alto Networks and other vendors now release agent hardening guides aligned with NIST frameworks.

Meanwhile, professionals can enhance their expertise with the AI Writer™ certification.

Clear governance not only cuts risk but also improves operational efficiency by defining escalation paths.

Regular penetration testing and chaos drills validate guardrails before broader rollout.

Strong controls let organizations trust automation at scale.

Nevertheless, successful rollouts still need structured change management.

The final section outlines actionable steps.

Implementation Roadmap For Leaders

Leaders should begin with a narrow, high-value workflow or cluster segment.

Next, define measurable KPIs such as GPU utilization, cycle time, and employee sentiment.

Subsequently, pilot AI workload prioritization engines in recommendation mode for several weeks.
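In recommendation mode the engine proposes and people decide, so a useful pilot signal is how often the two agree. The log format below is a hypothetical assumption; the point is to record both choices for every decision and compute agreement over the pilot window.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    engine_pick: str  # what the engine recommended
    human_pick: str   # what the person actually chose


def agreement_rate(decisions: list[Decision]) -> float:
    if not decisions:
        return 0.0
    agreed = sum(d.engine_pick == d.human_pick for d in decisions)
    return agreed / len(decisions)


def overrides(decisions: list[Decision]) -> list[tuple[str, str]]:
    """Disagreements are the most valuable feedback for tuning the engine."""
    return [(d.engine_pick, d.human_pick) for d in decisions if d.engine_pick != d.human_pick]


log = [Decision("job-a", "job-a"), Decision("job-b", "job-c"),
       Decision("job-d", "job-d"), Decision("job-e", "job-e")]
print(agreement_rate(log))  # 0.75
print(overrides(log))       # [('job-b', 'job-c')]
```

A rising agreement rate over the pilot suggests growing trust, while the override list shows exactly where the engine's scoring diverges from human judgment.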

Then, compare baseline and post-pilot metrics to validate uplift.
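Comparing baseline and pilot metrics is simple arithmetic, but direction matters: GPU utilization should rise while cycle time should fall. The KPI names echo the examples given for this roadmap; the numbers in the usage line are made up purely to exercise the function.

```python
# Whether a higher value is better for each KPI (an assumption per metric).
HIGHER_IS_BETTER = {"gpu_utilization": True, "cycle_time_days": False}


def uplift(baseline: dict[str, float], pilot: dict[str, float]) -> dict[str, float]:
    """Percentage improvement per KPI; a positive number always means 'better'."""
    result = {}
    for kpi, before in baseline.items():
        if before == 0:
            raise ValueError(f"baseline for {kpi} is zero; relative change is undefined")
        change = (pilot[kpi] - before) / before * 100
        result[kpi] = round(change if HIGHER_IS_BETTER[kpi] else -change, 1)
    return result


# Illustrative inputs only, not measured results.
print(uplift({"gpu_utilization": 0.50, "cycle_time_days": 8.0},
             {"gpu_utilization": 0.60, "cycle_time_days": 6.0}))
# {'gpu_utilization': 20.0, 'cycle_time_days': 25.0}
```

Normalising the sign per KPI lets a dashboard show every metric as "higher is better", which avoids misreading a drop in cycle time as a regression.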

Moreover, integrate task orchestration hooks early to capture end-to-end flow data.

Include security teams from day one to embed controls rather than bolt-on patches later.

- Choose priority use case.

- Set quantifiable success metrics.

- Run limited pilot phase.

- Iterate with user feedback.

- Scale gradually across domains.
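The staged rollout in the list above can be enforced with a simple gate: expansion to the next stage happens only when the current stage meets its success thresholds. The stage names and thresholds here are hypothetical placeholders for whatever metrics a team agrees on during the pilot.

```python
STAGES = ["pilot", "single_domain", "multi_domain", "company_wide"]

# Hypothetical minimum bars that must all hold before expanding further.
GATES = {"agreement_rate": 0.8, "gpu_utilization_uplift_pct": 5.0}


def next_stage(current: str, metrics: dict[str, float]) -> str:
    """Advance one stage if every gate passes; otherwise hold position."""
    # A missing metric counts as a failed gate.
    passed = all(metrics.get(name, float("-inf")) >= bar for name, bar in GATES.items())
    idx = STAGES.index(current)
    if passed and idx < len(STAGES) - 1:
        return STAGES[idx + 1]
    return current


print(next_stage("pilot", {"agreement_rate": 0.85, "gpu_utilization_uplift_pct": 12.0}))  # single_domain
print(next_stage("pilot", {"agreement_rate": 0.70, "gpu_utilization_uplift_pct": 12.0}))  # pilot
```

Holding position on a miss, rather than rolling back, is a deliberately conservative choice; a team might add an explicit rollback rule for severe regressions.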

Consequently, staged deployment minimizes disruption and builds stakeholder confidence.

Successful pilots often evolve into center-of-excellence programs that refine prioritization engines companywide.

Therefore, consistent measurement and transparent communication sustain momentum.

Structured pilots turn theory into reliable practice.

In contrast, rushed rollouts risk backlash, as our conclusion details.

Let us recap key insights.

AI workload prioritization engines have moved from hype to production across clusters and cubicles.

They allocate compute, streamline task orchestration, and unlock measurable operational efficiency when governed well.

However, deploying AI workload prioritization engines without clear metrics, security, and change management invites failure.

Consequently, leaders who pilot AI workload prioritization engines, audit performance, and certify teams can seize durable competitive gains.

Ready to act? Explore the linked AI Writer™ certification and start building trustworthy automation today.