AI CERTs

2 hours ago

AI Search Danger: Mental-Health Risks From Google Overviews

A late-night query can feel like therapy, yet algorithms are not clinicians. Vast audiences still treat search outputs as guidance, so mistakes carry human costs. The phrase AI Search Danger now frames a growing debate about mental wellbeing online, and new generative summaries amplify both speed and risk. Google commands nearly 90% market share, so its design choices shape global outcomes. Charities like Mind are pushing for safer search results, while regulators demand measurable protections. This article unpacks recent evidence, criticisms, and fixes.

Search Risks Intensify Today

Recent investigations reveal worrying patterns. Guardian reporters exposed misleading Google Overviews that advised vulnerable users to skip medication. Mind subsequently launched a formal inquiry into algorithmic harm. Ofcom data adds numbers: 22% of self-injury results link to instructive or glorifying pages. Image searches proved worse, with 50% judged harmful. An MIT study also found that browsing negative pages worsens mood, creating a feedback loop.

- Google dominates global search at roughly 89% share.

- Suicide-related queries spiked 19% after “13 Reasons Why.”

- Labeling results as “feel worse” cut harmful clicks in experiments.

These metrics spotlight the first layer of AI Search Danger. Nevertheless, numbers alone cannot capture individual despair. The next section assesses how AI summaries compound exposure.

AI Summaries Under Fire

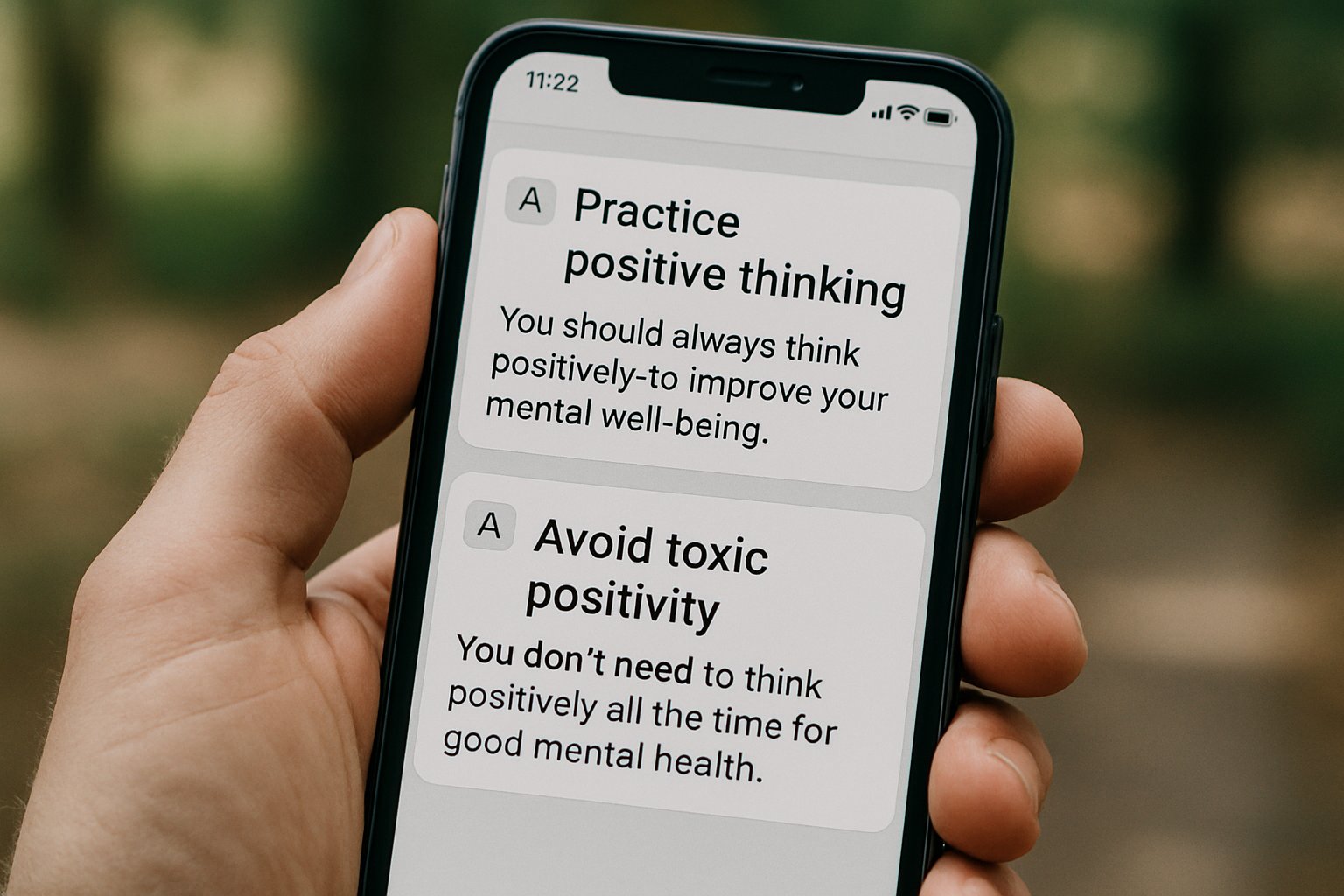

Generative answers appear above links, offering instant advice. Furthermore, the polished tone invites trust. Critics argue that design masks uncertainty. Multiple press audits found Google Overviews recommending dangerous home remedies for mental conditions. Mind’s Rosie Weatherley called several outputs “dangerously incorrect.” Consequently, Google removed some summaries and promised improvements. Yet watchdogs say problems resurface with slight wording changes. Therefore, experts warn that AI Search Danger now hides in plain sight.

These controversies prompted new opt-out tools. DuckDuckGo is testing a “No AI” toggle, while browser extensions hide summaries entirely. However, casual users rarely install add-ons, and clearer default safeguards remain elusive. The next section explores the technical pathways that drive harm.

Harmful Content Pathways Online

Search harm seldom comes from one feature alone. Autocomplete can nudge users toward lethal phrases, and “data voids” leave rare queries unguarded. Image results deliver more visceral triggers: Ofcom’s audit judged 50% of image results harmful, more than double the 22% rate for self-injury results overall. Additionally, scammers exploit high-value keywords, planting malware on supposed health portals.

AI Search Danger also extends to privacy. Crisis-intercept tools log sensitive phrases to serve help banners, so researchers question data retention and false positives. Yet well-implemented nudges work. The MIT “Digital Diet” plug-in reduced negative content exposure and lifted mood scores. Risk routes are diverse and dynamic, so mitigation must be multi-layered.
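A labeling nudge of the kind described above can be sketched in a few lines of Python. This is an illustrative sketch only, not the MIT plug-in's actual code: the `mood_score` field, the thresholds, and the “feel better” and “neutral” labels are assumptions introduced for the example. Only the “feel worse” label comes from the experiments cited earlier.

```python
from dataclasses import dataclass


@dataclass
class SearchResult:
    title: str
    # Hypothetical score in [-1, 1]; negative means the page is
    # predicted to worsen the reader's mood. How such a score is
    # produced is outside the scope of this sketch.
    mood_score: float


def label_result(result, worse_below=-0.3, better_above=0.3):
    """Map a hypothetical mood score to a short warning label."""
    if result.mood_score <= worse_below:
        return "feel worse"
    if result.mood_score >= better_above:
        return "feel better"
    return "neutral"


def annotate(results):
    """Return (title, label) pairs so a results page could show the label."""
    return [(r.title, label_result(r)) for r in results]
```

The thresholds are arbitrary placeholders. A real deployment would need to tune them against audited data and measure false positives, which is the concern researchers raise about crisis-intercept tools.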

Emerging Mitigation Strategies Now

Engineers experiment with several defenses. Default safe-search for minors blurs graphic images. Moreover, autocomplete suppression hides specific suicide methods. Google claims enhanced clinical review for Google Overviews about health. Furthermore, crisis banners now appear on thousands of terms. Outside big tech, startups act faster. Ripple scans queries locally and shows hotline pop-ups. Professionals can enhance their expertise with the AI Sales™ certification, learning to market such safeguards ethically.
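Two of the defenses above, crisis banners and autocomplete suppression, can be illustrated with a minimal Python sketch that runs entirely on the local device. It does not reflect how Google, Ripple, or any other vendor actually implements these features. The pattern list, the blocked terms, and the function names are hypothetical placeholders chosen for the example.

```python
import re

# Hypothetical, deliberately small pattern list; a production list
# would be curated and reviewed by clinicians.
CRISIS_PATTERNS = [
    re.compile(pattern, re.IGNORECASE)
    for pattern in (r"\bself[- ]harm\b", r"\bsuicid", r"\bwant to die\b")
]

# Hypothetical substrings that mark a suggestion as method-seeking.
BLOCKED_SUGGESTION_TERMS = ("method", "how to")


def needs_crisis_banner(query):
    """Return True when the query matches any crisis pattern."""
    return any(p.search(query) for p in CRISIS_PATTERNS)


def filter_suggestions(query, suggestions):
    """Drop method-seeking suggestions, but only for crisis queries."""
    if not needs_crisis_banner(query):
        return list(suggestions)
    return [
        s for s in suggestions
        if not any(term in s.lower() for term in BLOCKED_SUGGESTION_TERMS)
    ]
```

Because the matching happens in-process and nothing is logged, a sketch like this avoids the data-retention questions that server-side crisis-intercept tools raise, at the cost of a cruder pattern list.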

Nevertheless, many fixes lack independent audits. Mind’s ongoing inquiry plans to benchmark outcomes, and researchers urge transparent failure metrics to quantify the remaining AI Search Danger. These developments show promising momentum, and regulatory pressure is intensifying alongside them.

Regulatory Momentum Builds Up

Policymakers are no longer passive. The UK Online Safety Act empowers Ofcom to fine engines that ignore risk assessments. Additionally, Australia’s eSafety Commissioner mandates age gates and image blurring. European legislators link AI rules with platform duties, creating cross-compliance requirements. Meanwhile, civil society drafts technical codes for search safety.

Google submits voluntary updates but withholds detailed accuracy data, so campaigners demand mandatory transparency. The Mind inquiry may supply political fuel if its findings reveal systemic failures. These shifts signal external accountability for AI Search Danger. However, commercial stakes also drive action. Our next section explores strategic implications for enterprises.

Business Ethical Stakes Rise

Brands depend on trusted information ecosystems. Misleading outputs near branded content erode credibility, and advertisers fear adjacency to self-harm instructions. Consequently, risk officers track AI Search Danger alongside cybersecurity threats. Firms also recognize opportunity: developing safety tech or auditing services opens new revenue streams. Additionally, staff need upskilling to navigate evolving policies. Earning the certification mentioned above positions teams ahead of rivals.

Ethics intersect with profit in search governance. Therefore, forward-looking leaders blend compliance, user protection, and innovation. These considerations round out the stakeholder landscape. We now conclude with key takeaways and next steps.

Conclusion

Search engines remain double-edged. Robust evidence shows dangerous pathways, especially when AI summaries distort health advice. However, interventions from design tweaks to regulation are advancing. Moreover, enterprises gain by mastering mitigation practices. Mind’s investigation and Ofcom’s audits will keep pressure high. Consequently, professionals should watch metrics closely and pursue relevant training. Explore certifications, strengthen safety roadmaps, and help transform AI Search Danger into sustainable digital wellbeing.