AI CERTS

2 months ago

AI Exposure Indices: Mapping Risk, Investment, and EU Strategy

Several indices now try to quantify how exposed jobs and sectors are to AI, yet confusion persists about their sources, scope, and limits. Many leaders still quote the elusive EUSTITT index, although no public evidence confirms its existence. In contrast, academic models, the Bloomberg Index of automation risk, and startup investment dashboards offer benchmarks with documented methods. This article clarifies the landscape, compares methodologies, and proposes actions.

Why Exposure Indices Matter

Boards demand quantifiable signals before funding transformation projects. Exposure indices meet that need by translating technical capability into digestible Financial Metrics for risk officers. Furthermore, regulators increasingly rely on Exposure Mapping to anticipate training needs and social cushioning. AI Exposure provides a common language for these assessments.

These converging demands explain the recent surge of index releases. Nevertheless, clarity on scope remains essential before conclusions spread.

Robust metrics inform capital allocation, while flawed ones misguide policy debates. We therefore begin with task-based evidence.

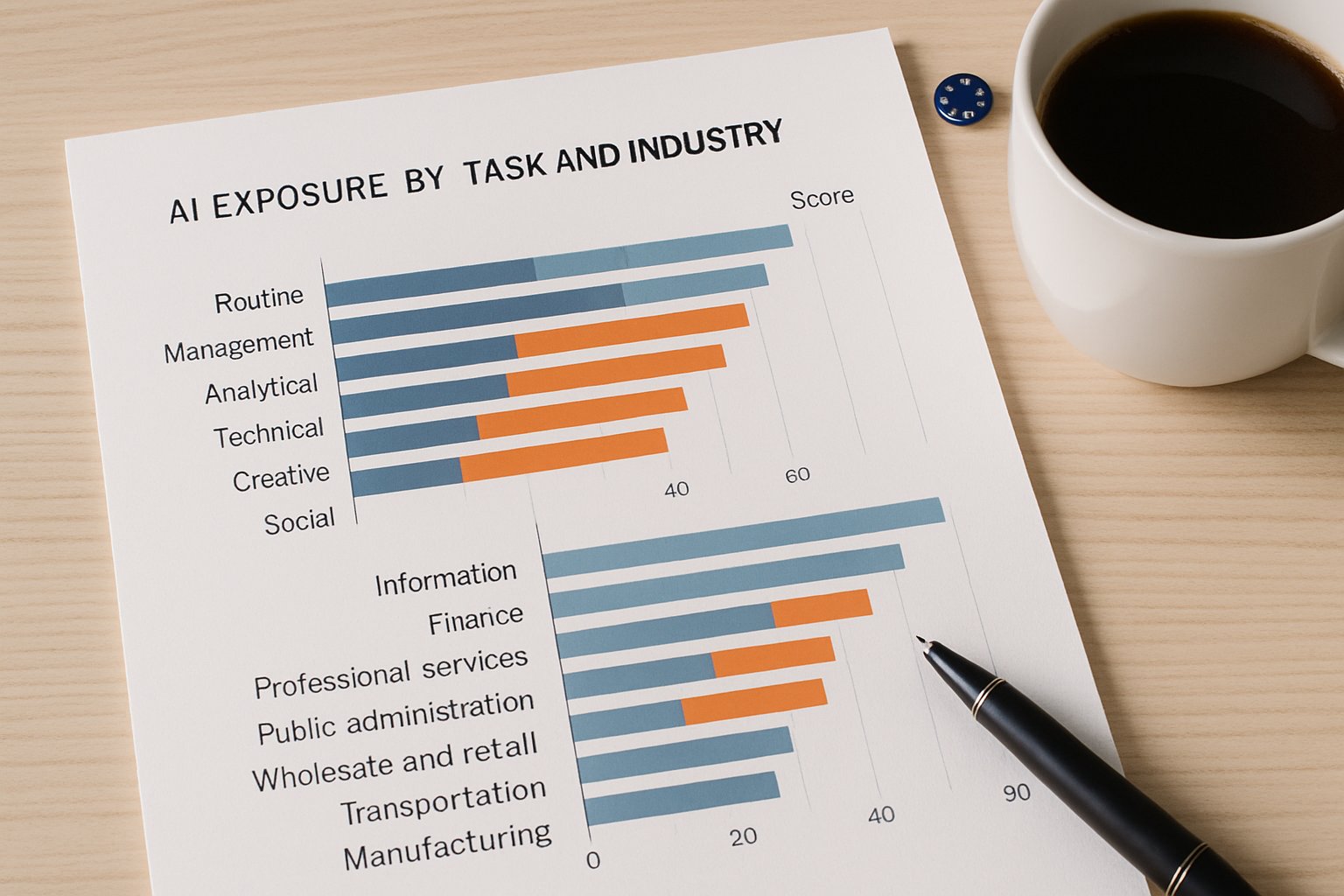

Key Task-Based Index Findings

Task-based studies decompose occupations into work activities and score each against large language model capabilities. Eloundou and colleagues pioneered the approach, and their numbers still headline conferences. Specifically, their analysis estimated that around 80% of U.S. workers could have at least 10% of their tasks affected by current models.

Moreover, about 19% of workers could see at least half of their tasks classified as exposed. Although these figures reflect American data, OECD researchers adapted the rubric for Europe. Consequently, several member states now publish provisional AI Exposure dashboards grounded in identical thresholds.

- Approximately 50% task exposure marks the "highly exposed" occupation group.

- Threshold choice shifts national exposure rates by up to ten percentage points.

- Validation against Financial Metrics such as wage growth remains limited.
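The threshold sensitivity noted above can be illustrated with a minimal sketch. It assumes each occupation has an employment count and a count of tasks scored as exposed. The `Occupation` class, the `share_of_workers_exposed` function, and all figures are hypothetical and are not taken from any published index.

```python
from dataclasses import dataclass


@dataclass
class Occupation:
    name: str
    workers: int        # employment count (made-up figure)
    exposed_tasks: int  # tasks scored as exposed to current models
    total_tasks: int    # all tasks listed for the occupation

    @property
    def exposure(self) -> float:
        """Share of the occupation's tasks scored as exposed."""
        return self.exposed_tasks / self.total_tasks


def share_of_workers_exposed(occupations, threshold):
    """Fraction of workers in occupations with task exposure >= threshold."""
    total = sum(o.workers for o in occupations)
    exposed = sum(o.workers for o in occupations if o.exposure >= threshold)
    return exposed / total


# Illustrative data only; not real labour statistics.
OCCUPATIONS = [
    Occupation("translator", 100, 8, 10),
    Occupation("accountant", 300, 5, 10),
    Occupation("nurse", 400, 2, 10),
    Occupation("roofer", 200, 0, 10),
]

if __name__ == "__main__":
    for threshold in (0.1, 0.5, 0.6):
        share = share_of_workers_exposed(OCCUPATIONS, threshold)
        print(f"threshold {threshold:.0%}: {share:.0%} of workers exposed")
```

With these made-up numbers, the exposed share falls from 80% of workers at a 10% cutoff to 40% at a 50% cutoff, which is why the chosen threshold should always be reported alongside the headline rate.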

Task indices offer granular detail. However, they reveal only technical feasibility, not market appetite. Therefore, investment signals merit attention next.

Startup Signal Exposure Insights

Startup ecosystems often preview future enterprise adoption. Accordingly, researchers built the AI Startup Exposure (AISE) index, which matches startup product descriptions against job tasks. The method complements Exposure Mapping by showing where capital flows.
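To make the idea of matching product descriptions against job tasks concrete, here is a rough sketch. It is not the AISE method: it substitutes a simple word-overlap (Jaccard) similarity as a stand-in, and the function names, the `min_similarity` cutoff, and the sample texts are all assumptions chosen for illustration.

```python
import re


def tokens(text):
    """Lowercase word set for a piece of text."""
    return set(re.findall(r"[a-z]+", text.lower()))


def jaccard(a, b):
    """Word-overlap similarity between two texts, from 0.0 to 1.0."""
    ta, tb = tokens(a), tokens(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def startup_exposure(tasks, startup_descriptions, min_similarity=0.2):
    """Share of tasks matched by at least one startup description.

    min_similarity is an arbitrary illustrative cutoff.
    """
    if not tasks:
        return 0.0
    matched = sum(
        1
        for task in tasks
        if any(jaccard(task, d) >= min_similarity for d in startup_descriptions)
    )
    return matched / len(tasks)


# Hypothetical sample texts.
STARTUPS = [
    "AI tool to create logo designs",
    "automated invoice processing software",
]
DESIGNER_TASKS = [
    "create logo designs for clients",
    "present design concepts to clients",
]
ROOFER_TASKS = [
    "install shingles on roofs",
    "repair damaged roof sections",
]

if __name__ == "__main__":
    print("designer:", startup_exposure(DESIGNER_TASKS, STARTUPS))
    print("roofer:", startup_exposure(ROOFER_TASKS, STARTUPS))
```

A production pipeline would likely replace the word-overlap step with a stronger text-matching model, but the aggregation into a per-occupation share would look similar.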

The analysis spans 17,000 ventures and billions in disclosed funding. Moreover, findings highlight intense focus on professional services, software engineering, and design. In contrast, routine manual occupations attract little venture attention.

Interestingly, regions with the highest AISE scores also score high on the Bloomberg Index tracking automation narratives. This overlap suggests that investors read similar Financial Metrics when deploying capital.

Startup data signals near-term commercial priorities. Nevertheless, public agencies require official indicators to steer policy. Our focus now shifts to European monitoring.

Official European Monitoring Efforts

The European Commission operates AI Watch and collaborates with Eurostat to embed AI modules in labour surveys. Furthermore, Joint Research Centre analysts replicate task methods across EU taxonomies. These programs avoid EUSTITT confusion by publishing clear documentation.

Preliminary dashboards reveal wide gaps. For example, Ireland and Luxembourg exhibit the highest AI Exposure, while Greece shows lower susceptibility. Moreover, regional manufacturing belts register moderate values.

Officials cross-check results with Financial Metrics like productivity growth and with the Bloomberg Index sentiment feed. Consequently, confidence in policy targeting improves.

European monitoring supplies legitimate baselines. However, labour economists still debate actual job outcomes. The discussion now turns to those contested impacts.

Debates On Labour Impact

Field experiments complicate simple displacement stories. For instance, a study published in Science reported that professionals completed writing tasks about 40% faster when they used ChatGPT. Moreover, quality scores rose 18%, suggesting augmentation rather than replacement.

Nevertheless, job-posting data from the UK GAISI indicates hiring slowdowns in high-exposure occupations soon after ChatGPT's release. Consequently, economists caution against linear predictions. Exposure Mapping must therefore be paired with adoption and wage series.

In contrast, references to EUSTITT rarely specify methodology, making impact claims hard to verify. Researchers urge citation of transparent indices instead.

Evidence portrays a nuanced picture. Moreover, skills, governance, and investment decisions mediate outcomes. Leaders now need practical guidance.

Practical Actions For Leaders

Executives should assemble cross-functional analytics teams that monitor AI Exposure metrics quarterly. Additionally, they must align workforce planning with Financial Metrics such as revenue per employee.

Training investments matter too. Professionals can strengthen strategic oversight through the Chief AI Officer™ certification. Furthermore, managers should fund and incentivise continuous learning.

- Define risk appetite and acceptable AI Exposure thresholds.

- Benchmark against the Bloomberg Index and peer group dashboards.

- Update job architectures using Exposure Mapping insights.
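As one possible way to operationalise the checklist above, the following sketch flags roles whose latest quarterly exposure reading exceeds a threshold set by the firm's risk appetite. The `flag_breaches` function, the role names, and every reading are hypothetical.

```python
def flag_breaches(readings, threshold):
    """Return roles whose latest reading exceeds the threshold.

    readings maps a role name to a list of quarterly exposure scores,
    oldest first. Each flagged role maps to a tuple of
    (latest score, change since the previous quarter).
    """
    flagged = {}
    for role, series in readings.items():
        if not series:
            continue  # no data yet for this role
        latest = series[-1]
        if latest > threshold:
            change = latest - series[-2] if len(series) > 1 else 0.0
            flagged[role] = (latest, change)
    return flagged


# Made-up quarterly exposure scores per role.
READINGS = {
    "paralegal": [0.40, 0.55],
    "welder": [0.05, 0.06],
    "analyst": [0.62],
}

if __name__ == "__main__":
    for role, (latest, change) in flag_breaches(READINGS, 0.5).items():
        print(f"{role}: latest={latest:.2f}, change={change:+.2f}")
```

Reporting the quarter-on-quarter change next to the level helps distinguish roles that have long been exposed from those where exposure is rising quickly.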

Structured action converts abstract scores into competitive advantage. Consequently, firms will navigate disruption with informed confidence.

We have surveyed the vibrant market of AI Exposure indices, from task rubrics to venture funding heatmaps. Moreover, we contrasted transparent dashboards with the still mysterious EUSTITT claims. AI Exposure will keep evolving as models improve, yet governance principles already require attention. Consequently, leaders who monitor AI Exposure closely, translate findings into reskilling plans, and pursue certifications will outperform slower rivals. Finally, organisations should treat AI Exposure as a living metric and revisit assumptions each quarter.