AI CERTs


AI Content Moderation Faces New Extremism Test

Global platforms still struggle with harmful speech, and AI content moderation has entered a volatile phase. A fresh flashpoint emerged on 2 April 2026, when Reuters reported on ThroughLine’s next project. The New Zealand contractor already handles mental-health crisis referrals for OpenAI and Anthropic. Now it wants to steer users drifting toward violent extremism to professional help. The plan, however, lands inside a wider policy storm that pits corporate guardrails against government demands.

Standoff Sets Stage

February’s clash between Anthropic and the U.S. Department of Defense spotlighted unresolved tensions. Defense Secretary Pete Hegseth labeled Anthropic a supply-chain risk after the company refused to permit unrestricted military use of its models. A federal judge later paused that designation. OpenAI, meanwhile, struck a narrower deal that sparked employee protests over ChatGPT’s safety guarantees. These moves kept AI content moderation on boardroom agendas across the industry.

The industry now awaits appellate rulings. Policymakers, however, are already citing the confrontation as they urge stronger extremism filters, and ThroughLine’s proposal lands at the center of that debate.

ThroughLine Tool Vision

Founder Elliot Taylor confirmed exploratory talks with platform clients and the Christchurch Call advisory network, but stressed that there is no launch deadline yet. The idea extends ThroughLine’s existing support-redirection flows beyond self-harm incidents, so flagged users showing early signs of violent extremism could receive tailored guidance instead of an instant ban.

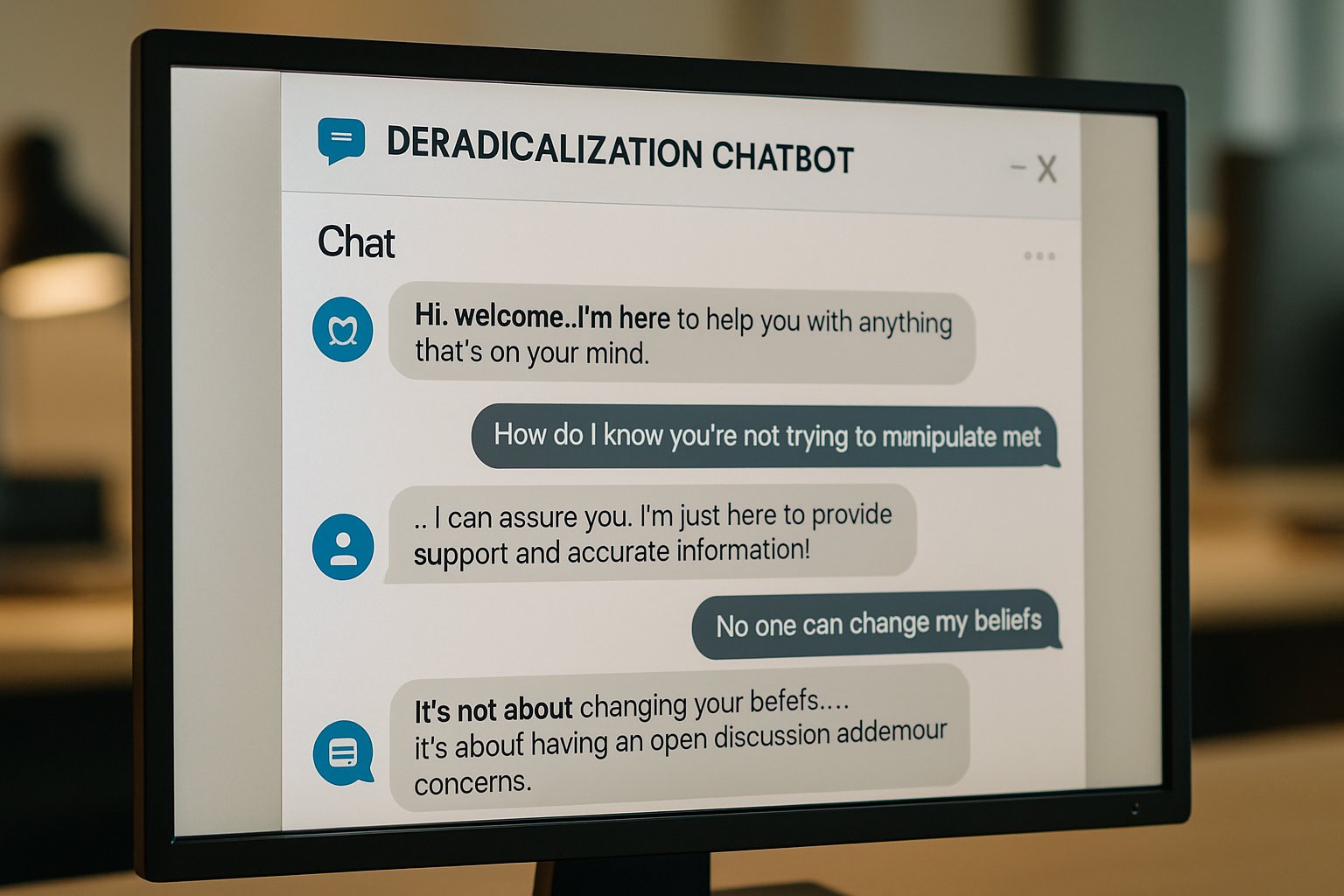

Under the plan, signals from AI content moderation systems would trigger a conversational bot, which could escalate cases to trained counselors across a network of 1,600 helplines in 180 countries. Taylor argues that this hybrid model reaches people faster than purely human review.

Hybrid Referral Model Design

Technical specifics remain scarce, but public documents outline three phases. First, detection algorithms parse language patterns. Second, an automated dialogue attempts to de-escalate. Third, when risk crosses defined thresholds, live agents take over and provide support redirection. If the approach works, platforms may make fewer false removals that alienate borderline users.
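ThroughLine has not published how these phases connect, so the Python sketch below only illustrates the general shape of a score-and-threshold router under stated assumptions. The phrase weights in `RISK_TERMS`, the cut-offs `DIALOGUE_THRESHOLD` and `ESCALATION_THRESHOLD`, and the functions `risk_score` and `route_message` are all hypothetical; a real deployment would use a trained classifier rather than phrase matching.

```python
from dataclasses import dataclass
from enum import Enum

# HYPOTHETICAL thresholds: ThroughLine has not published real values.
DIALOGUE_THRESHOLD = 0.5     # at or above: start an automated de-escalation chat
ESCALATION_THRESHOLD = 0.75  # at or above: hand off to a live counselor

# HYPOTHETICAL phrase weights; a toy stand-in for a trained classifier.
RISK_TERMS = {
    "join the fight": 0.5,
    "they deserve it": 0.5,
    "manifesto": 0.25,
}


class Route(Enum):
    NO_ACTION = "no_action"
    BOT_DIALOGUE = "bot_dialogue"      # phase 2: automated dialogue
    HUMAN_REFERRAL = "human_referral"  # phase 3: live support redirection


@dataclass
class Decision:
    score: float
    route: Route


def risk_score(message: str) -> float:
    """Phase 1 (detection): add up weights of matched phrases, capped at 1.0."""
    text = message.lower()
    total = sum((w for phrase, w in RISK_TERMS.items() if phrase in text), 0.0)
    return min(total, 1.0)


def route_message(message: str) -> Decision:
    """Map the detection score onto the three-phase flow."""
    score = risk_score(message)
    if score >= ESCALATION_THRESHOLD:
        route = Route.HUMAN_REFERRAL
    elif score >= DIALOGUE_THRESHOLD:
        route = Route.BOT_DIALOGUE
    else:
        route = Route.NO_ACTION  # low scores: no intervention, no removal
    return Decision(score=score, route=route)


if __name__ == "__main__":
    samples = [
        "What time does the match start?",
        "Honestly, they deserve it.",
        "I read the manifesto and want to join the fight.",
    ]
    for msg in samples:
        decision = route_message(msg)
        print(f"{decision.route.value:<15} score={decision.score:.2f}  {msg}")
```

The notable design choice in this sketch is the middle band: a message scoring between the two thresholds is routed to a conversation rather than removed, which is how a system of this kind could reduce false removals. Any real thresholds would need calibration against detection benchmarks and false-positive data.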

Professionals who want to audit such pipelines can build the relevant expertise through the AI Security Specialist™ certification.

Yet accuracy questions persist, and privacy advocates fear that conversation data could be quietly shared with governments hungry for new signals.

Government Pressure Mounts Now

Regulators increasingly argue that voluntary safeguards fall short. Several lawmakers have invoked the Christchurch attack while demanding proactive controls on violent-extremism content, and agencies are eyeing ThroughLine’s approach as a template for mandated interventions.

Corporate leaders, however, worry about mission creep. Companies already invest heavily in safety updates for products such as ChatGPT, and additional disclosure obligations could expose proprietary model weights or user data. Negotiations are growing more complex as a result.

Guardrails Versus Access Debate

Anthropic’s legal stand underlines the dilemma. The firm contends that removing guardrails would undermine its core safety commitments, while defense officials cite national-security prerogatives. Both sides invoke AI content moderation yet define success differently. ThroughLine could become a compromise mechanism, but only if stakeholders agree on oversight and appeal rights.

These tensions foreshadow global battles over algorithmic sovereignty.

Benefits And Risks Balance

Advocates point to early crisis-line data suggesting that conversation-first approaches reduce recidivism. Civil-liberties groups counter with chilling-effect scenarios: mislabeling peaceful activism as violent extremism would erode trust and could even fuel radical narratives. Still, advocates list several potential advantages:

- Round-the-clock automated coverage that can extend to low-resource languages.

- A human fallback that preserves empathy within support-redirection flows.

- Operational metrics that could help refine safety policies for products such as ChatGPT.

- Shared helpline networks that could cut response times for ThroughLine’s clients.

False positives, opaque data storage, and cross-border data transfers, however, remain unresolved. These challenges show why AI content moderation needs multidisciplinary governance, and why balanced risk assessments should precede full deployment.

Open Questions Persist

Several issues still lack transparency. ThroughLine has not disclosed detection benchmarks or false-positive rates. It is also unclear which entity stores conversation logs, and for how long, and no appeals pathway for mistaken flags exists yet. Such gaps complicate compliance with evolving e-privacy statutes.

Meanwhile, an appellate court will decide whether Anthropic can keep its guardrails. Vendors therefore need to plan for diverging regulatory futures, and their AI content moderation strategies should stay flexible, especially when they integrate support redirection for violent-extremism content.

The industry has reached an inflection point, but more empirical evidence is needed before hybrid deradicalization chatbots are scaled up.

Looking ahead, organizations should track legal rulings, pilot outcomes, and certification standards. Cross-sector dialogue will help determine whether ThroughLine’s vision becomes a global template or another cautionary tale. AI content moderation will evolve either way.

In summary, the industry faces intertwined technical, ethical, and political hurdles, and leaders must balance innovation with a duty of care. Interested professionals can explore the linked certification to deepen their defensive skill set and help shape safer conversational ecosystems.