AI CERTS

5 months ago

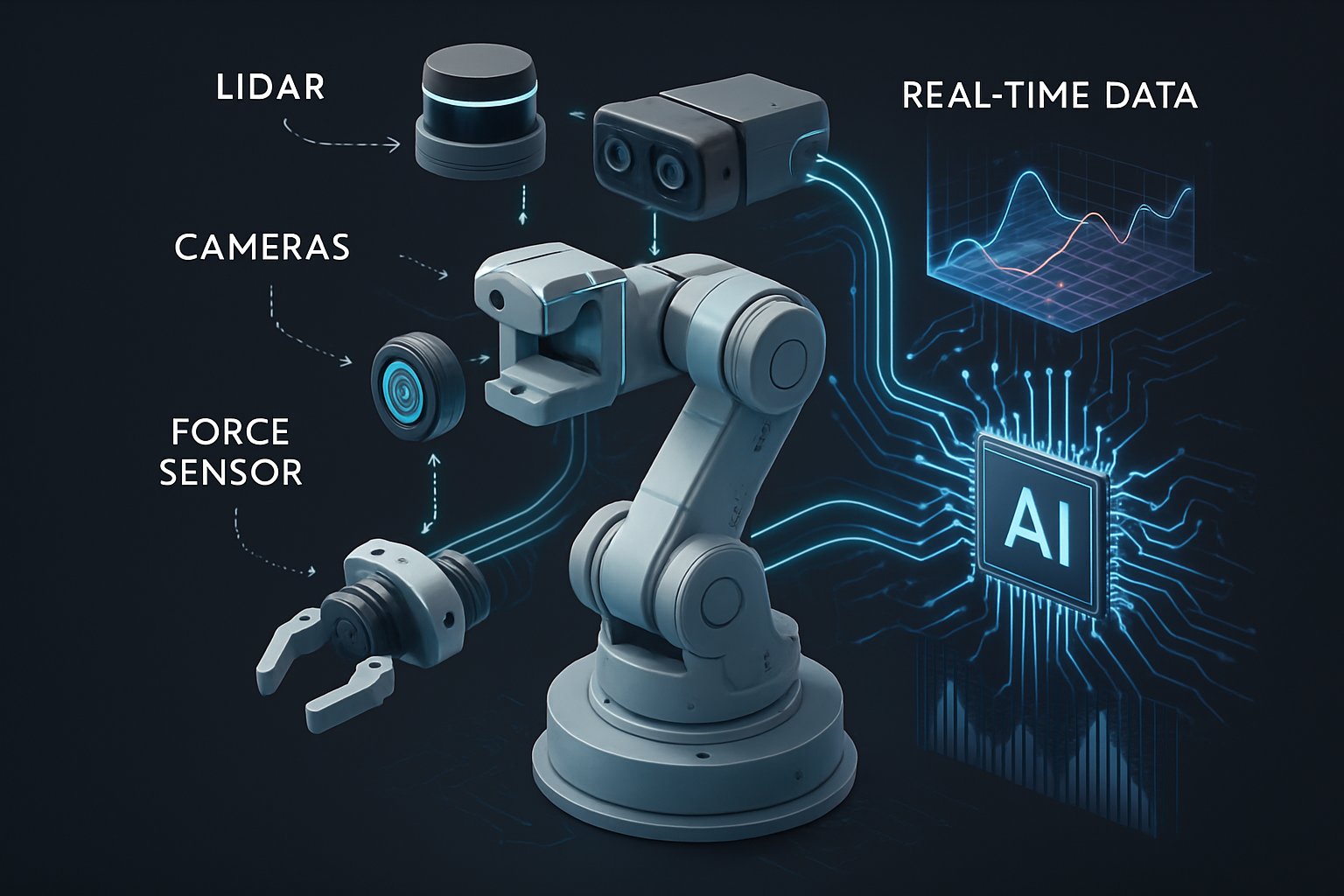

AI Overhauls Robotics Sensing via Real-Time Sensor Fusion

This article maps the market momentum, technical breakthroughs, hardware implications, and looming safety debates. Readers will gain actionable insights for deploying next-generation robots and for upskilling through recognized certifications. Throughout, we highlight key data, expert commentary, and practical steps toward production readiness. Let us explore how sensor and compute advances now enable dynamic, field-ready autonomy.

Market Momentum Snapshot 2025

Global demand for multi-modal perception platforms is surging. Future Market Insights pegs the sensor-fusion segment at USD 6–8 billion for 2024, with Robotics Sensing at the center of that upswing. Analysts forecast a 15–25 percent CAGR; compounding the top of both ranges over six years puts the segment near USD 30 billion by 2030. Venture capital increasingly favors startups that master condition-aware Sensor Fusion for industrial fleets, while single-sensor tool vendors struggle to justify stand-alone roadmaps.

Several drivers sit behind this momentum:

- Regulatory pushes for automation safety reporting

- Edge AI accelerators hitting sub-10 ms latency

- Lower LiDAR costs from solid-state designs

- Open datasets catalyzing benchmark competition

Overall, the financial trajectory underscores sustained investment headroom. However, monetizing that promise requires technical leaps, discussed next.
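The forecast arithmetic can be checked with a short compound-growth sketch. The 2024 base range and the CAGR range come from the figures quoted above; the six-year horizon (2024 to 2030) and the function name `project` are assumptions made for illustration.

```python
def project(base_usd_bn: float, cagr: float, years: int) -> float:
    """Compound a base-year value forward at a constant annual growth rate."""
    return base_usd_bn * (1.0 + cagr) ** years


YEARS = 2030 - 2024  # six compounding periods (assumed horizon)

low = project(6.0, 0.15, YEARS)   # low base, low growth
high = project(8.0, 0.25, YEARS)  # high base, high growth

print(f"2030 range: USD {low:.1f}-{high:.1f} billion")
# prints "2030 range: USD 13.9-30.5 billion"
```

Under these assumptions, only the upper end of both ranges reaches the USD 30 billion mark, so the headline figure reads best as an optimistic bound.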

Core Technology Shifts Today

Recent papers reveal three major architectural turning points. First, Bird’s-Eye-View (BEV) representations unify pixels and point clouds into consistent spatial grids, so effective Robotics Sensing now depends on unified spatial representations rather than isolated modalities. Second, cross-modal attention blocks dynamically weight inputs based on learned reliability cues. Third, online Real-Time Adaptation methods fine-tune perception layers once the robot meets novel terrain. Consequently, field failures from rain or dust no longer automatically mandate offline retraining cycles.
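The second turning point, reliability-weighted fusion, can be illustrated with a deliberately small sketch. It is not a real attention block: in a trained model the reliability scores would come from learned layers, whereas here they are hand-set numbers, and the names `softmax`, `fuse`, and the example feature vectors are hypothetical.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def fuse(features, reliability_logits):
    """Blend per-sensor feature vectors using softmax reliability weights.

    features: dict of sensor name -> list of floats (all the same length)
    reliability_logits: dict of sensor name -> raw reliability score
    """
    names = sorted(features)
    weights = softmax([reliability_logits[n] for n in names])
    dim = len(features[names[0]])
    fused = [0.0] * dim
    for name, weight in zip(names, weights):
        for i, value in enumerate(features[name]):
            fused[i] += weight * value
    return fused, dict(zip(names, weights))


# Hypothetical case: heavy rain lowers the camera's reliability score.
features = {"camera": [1.0, 0.0], "lidar": [0.0, 1.0]}
fused, weights = fuse(features, {"camera": -2.0, "lidar": 2.0})
print(weights)  # the lidar weight dominates
```

The design point is that the weights are recomputed for every frame, so a degraded sensor is down-weighted without being switched off outright.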

Learned Sensor Fusion Rise

BEVFusion kicked off the wave by surpassing single-sensor baselines on nuScenes and Waymo. Follow-up work added condition tokens and lightweight adapters for adverse weather. Deep Learning now combines semantics and geometry while keeping the projections between sensor views differentiable and geometrically consistent. This shift positions Robotics Sensing as a software-defined capability, not a fixed hardware attribute. Teams report mAP gains of several percentage points alongside a 25 percent latency reduction using optimized view transformers. The rise of learned fusion redefines perception baselines across industries. Therefore, hardware choices must evolve in lockstep, as the next section shows.
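To make the shared-grid idea concrete, the sketch below rasterizes LiDAR-style points into a Bird’s-Eye-View grid that stores the maximum height per cell. This is a hand-written simplification, not BEVFusion itself, which fills its grid with learned features. The grid extents, the 0.5 m cell size, and the function name `points_to_bev` are assumptions.

```python
def points_to_bev(points, x_range=(0.0, 40.0), y_range=(-20.0, 20.0), cell=0.5):
    """Rasterize (x, y, z) points into a max-height BEV grid.

    Returns a list of rows indexed as grid[ix][iy]; empty cells hold None.
    """
    nx = int(round((x_range[1] - x_range[0]) / cell))
    ny = int(round((y_range[1] - y_range[0]) / cell))
    grid = [[None] * ny for _ in range(nx)]
    for x, y, z in points:
        inside_x = x_range[0] <= x < x_range[1]
        inside_y = y_range[0] <= y < y_range[1]
        if not (inside_x and inside_y):
            continue  # drop points outside the grid
        ix = min(int((x - x_range[0]) / cell), nx - 1)
        iy = min(int((y - y_range[0]) / cell), ny - 1)
        current = grid[ix][iy]
        grid[ix][iy] = z if current is None else max(current, z)
    return grid


# Two points share a cell; the third lies outside the grid and is ignored.
bev = points_to_bev([(1.2, 0.3, 0.8), (1.3, 0.4, 1.5), (99.0, 0.0, 2.0)])
print(bev[2][40])  # 1.5
```

Camera features projected into the same cell indices can then be combined with the LiDAR channel cell by cell, which is what makes the grid a common meeting point for both modalities.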

Adaptive Edge Hardware Stack

Software advances thrive only when compute paths stay deterministic. NVIDIA Jetson Thor and Holoscan pipelines exemplify co-designed silicon, firmware, and real-time IO. Furthermore, FPGA bridges from Lattice synchronize multi-gigabit sensor feeds within microsecond jitter budgets. Such precision sustains Robotics Sensing loops under 50 milliseconds, even during heavy scene dynamics. Low-level Sensor Fusion pre-processing on FPGAs frees GPU cycles for planning.
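Hardware bridges handle synchronization at the signal level, but the same idea can be sketched in software: pair frames from two sensors only when their timestamps agree within a tolerance. The function name `match_frames`, the microsecond units, and the example timestamps are assumptions, and both input lists are assumed to be sorted.

```python
from bisect import bisect_left


def match_frames(cam_ts_us, lidar_ts_us, tolerance_us):
    """Pair each camera timestamp with the nearest LiDAR timestamp.

    Both lists must be sorted in ascending order. Pairs whose timestamps
    differ by more than tolerance_us are dropped.
    """
    pairs = []
    for t in cam_ts_us:
        i = bisect_left(lidar_ts_us, t)
        # The nearest neighbour is either just before or just after index i.
        candidates = lidar_ts_us[max(i - 1, 0): i + 1]
        if not candidates:
            continue
        nearest = min(candidates, key=lambda u: abs(u - t))
        if abs(nearest - t) <= tolerance_us:
            pairs.append((t, nearest))
    return pairs


cam = [0, 33_333, 66_666]
lidar = [10, 50_000, 66_700]
print(match_frames(cam, lidar, tolerance_us=100))
# [(0, 10), (66666, 66700)]
```

The middle camera frame is discarded because no LiDAR sweep falls within 100 microseconds of it; a fusion stage would skip that frame rather than fuse misaligned data.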

Developers must balance latency, power, and thermal ceilings. Cloud offloading, by contrast, introduces network unpredictability that jeopardizes closed-loop safety. Therefore, edge deployment remains non-negotiable for industrial arms, drones, and autonomous forklifts, and Robotics Sensing pipelines must fit within the compute and power budgets available on the robot itself. Real-Time Adaptation also benefits from cached kernel weights kept in SRAM.

Latency Engineering Tactics Now

Teams adopt mixed-precision inference, sparsity pruning, and kernel fusion to shave microseconds. Additionally, Real-Time Adaptation code paths run on reserved cores, preventing contention with control loops. Developers can track worst-case response times using the hardware timestamping built into the NVIDIA DriveWorks SDK. Consequently, compliance audits gain reproducible latency evidence, meeting emerging certification mandates. Edge hardware mastery elevates perception accuracy without breaching power envelopes. However, adaptability also raises verification stakes, examined below.
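Worst-case tracking can be prototyped in software before moving to hardware timestamps. The sketch below records per-frame latencies and reports the worst case, a nearest-rank percentile, and budget violations. It uses Python's `time.perf_counter_ns` rather than any DriveWorks call; the class name `LatencyTracker` and the 50 ms budget in the example are assumptions.

```python
import math
import time


class LatencyTracker:
    """Collect latency samples and compare them against a fixed budget."""

    def __init__(self, budget_ms):
        self.budget_ms = budget_ms
        self.samples_ms = []

    def record(self, latency_ms):
        self.samples_ms.append(latency_ms)

    def measure(self, fn, *args):
        """Run fn(*args), record its wall-clock duration, return its result."""
        start = time.perf_counter_ns()
        result = fn(*args)
        self.record((time.perf_counter_ns() - start) / 1e6)
        return result

    def worst_ms(self):
        return max(self.samples_ms)

    def percentile_ms(self, q):
        """Nearest-rank percentile for q in (0, 100]."""
        ordered = sorted(self.samples_ms)
        rank = max(1, math.ceil(q / 100.0 * len(ordered)))
        return ordered[rank - 1]

    def violations(self):
        return sum(1 for s in self.samples_ms if s > self.budget_ms)


tracker = LatencyTracker(budget_ms=50.0)
for sample in (12.0, 18.0, 47.0, 53.0, 20.0):  # illustrative values only
    tracker.record(sample)
print(tracker.worst_ms(), tracker.percentile_ms(50), tracker.violations())
# 53.0 20.0 1
```

Reporting the worst case alongside a percentile matters because a healthy median can hide the single slow frame that breaks a control loop.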

Safety And Verification Tension

Online learning improves robustness yet complicates regulatory assurance. Regulators demand fixed behavior, while adaptive models update after deployment. Nevertheless, several mitigation patterns are emerging across labs and OEMs. Safe adaptation envelopes restrict how far parameters may shift and trigger graceful degradation modes when anomalies are detected. Certification bodies are now drafting guidelines for Sensor Fusion validation under distribution shift.
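One way to picture a safe adaptation envelope is as a bound on how far adapted parameters may drift from a validated reference. The sketch below clamps updates to a maximum Euclidean distance and reverts to the reference when an anomaly score is too high. The distance metric, the revert-to-reference degradation policy, and all names and thresholds are assumptions, not a published standard.

```python
import math


def apply_update(reference, current, delta, max_drift, anomaly_score, anomaly_limit):
    """Apply an online update while staying inside a drift envelope.

    Returns (new_params, mode) where mode is "adapted", "clamped",
    or "degraded".
    """
    if anomaly_score > anomaly_limit:
        # Graceful degradation: fall back to the validated parameters.
        return list(reference), "degraded"
    proposed = [c + d for c, d in zip(current, delta)]
    offset = [p - r for p, r in zip(proposed, reference)]
    norm = math.sqrt(sum(o * o for o in offset))
    if norm > max_drift:
        # Project the proposal back onto the envelope boundary.
        scale = max_drift / norm
        return [r + o * scale for r, o in zip(reference, offset)], "clamped"
    return proposed, "adapted"


params, mode = apply_update(
    reference=[0.0, 0.0], current=[0.0, 0.0], delta=[3.0, 4.0],
    max_drift=1.0, anomaly_score=0.1, anomaly_limit=0.5,
)
print(mode)  # clamped
```

Because the envelope is defined relative to a fixed reference, a verifier can reason about the worst-case parameter set without knowing the exact sequence of online updates.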

Moreover, logging pipelines capture every model update for forensic audit and compliance reporting. Continuous monitoring dashboards alert operators when drift surpasses validated margins. Professionals can improve assurance with the AI+ Network Security™ certification. In contrast, proponents of fully learned pipelines argue that uncertainty estimation already satisfies safety cases. The debate will intensify as legislation catches up with self-learning industrial systems. Verification strategies evolve, yet no universal rubric exists today. Consequently, decision makers need clear implementation guidance, offered in the final section.
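The logging and drift-alert ideas can be combined in a small sketch. Each model update is appended to a log in which every entry stores the SHA-256 hash of the previous one, so later tampering is detectable. Hash chaining is a technique introduced here for illustration and is not named in the article; the class name `UpdateAuditLog`, the field names, and the drift values are likewise hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


class UpdateAuditLog:
    """Append-only, hash-chained record of model updates."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(body):
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def append(self, step, weights_digest, drift):
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"step": step, "weights_digest": weights_digest,
                "drift": drift, "prev": prev}
        entry = dict(body, hash=self._digest(body))
        self.entries.append(entry)
        return entry

    def verify(self):
        """Recompute every hash and check that the chain links are intact."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev"] != prev or self._digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True

    def drift_alerts(self, margin):
        """Steps whose recorded drift exceeded the validated margin."""
        return [e["step"] for e in self.entries if e["drift"] > margin]


log = UpdateAuditLog()
log.append(step=1, weights_digest="abc", drift=0.02)
log.append(step=2, weights_digest="def", drift=0.09)
print(log.verify(), log.drift_alerts(margin=0.05))  # True [2]
```

In practice the weights digest would be a hash of the serialized model parameters, and the log would be written to storage that the adapting process cannot rewrite.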

Strategic Implementation Key Takeaways

- Collect synchronized multi-modal datasets covering nominal and corner scenarios.

- Select a proven Deep Learning baseline and benchmark it against your target tasks.

- Integrate Real-Time Adaptation modules and monitor online metrics before release.

Additionally, maintain a digital twin to replay recorded scenes for regression testing. Pilot deployments should use feature flags to disable self-learning when anomalies spike. Robotics Sensing telemetry should stream to dashboards showing mAP, latency, and energy over time, and those KPIs should remain visible to every scrum team.
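The feature-flag safeguard could look like the following sketch, which disables self-learning once the anomaly rate over a sliding window exceeds a threshold. The latch-off behavior (an operator must re-enable the flag), the window size, the threshold, and the class name `SelfLearningFlag` are assumptions.

```python
from collections import deque


class SelfLearningFlag:
    """Kill switch that turns off online adaptation when anomalies spike."""

    def __init__(self, window, max_anomaly_rate):
        self.recent = deque(maxlen=window)  # sliding window of anomaly flags
        self.max_anomaly_rate = max_anomaly_rate
        self.enabled = True

    def observe(self, is_anomaly):
        """Record one frame's anomaly flag and return the current state."""
        self.recent.append(bool(is_anomaly))
        window_full = len(self.recent) == self.recent.maxlen
        rate = sum(self.recent) / len(self.recent)
        if window_full and rate > self.max_anomaly_rate:
            self.enabled = False  # stays off until an operator resets it
        return self.enabled

    def reset(self):
        """Operator action: clear the history and re-enable adaptation."""
        self.recent.clear()
        self.enabled = True


flag = SelfLearningFlag(window=4, max_anomaly_rate=0.5)
states = [flag.observe(a) for a in (False, True, True, True, False)]
print(states)  # [True, True, True, False, False]
```

Waiting for a full window before tripping avoids disabling adaptation on a single noisy frame at startup.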

Certification Pathways Forward

Skill shortages often bottleneck deployment schedules. Therefore, enterprises now sponsor staff for targeted hardware, perception, and safety certificates. The AI+ Network Security™ program mentioned earlier teaches secure pipelines and adaptive model governance. Consequently, teams gain shared vocabulary and auditable best practices. Implementation success hinges on data, disciplined iteration, and certified talent. Neglecting any one of these pillars often derails schedule and budget.

Robotics Sensing has entered a data-rich, software-defined era powered by Sensor Fusion, Real-Time Adaptation, and Deep Learning. Consequently, enterprises adopting these stacks unlock higher uptime, broader operating envelopes, and faster payback. Nevertheless, safety verification and talent development remain critical gatekeepers. Market forecasts, hardware advances, and standards initiatives suggest continued acceleration. Therefore, leaders should pilot adaptive stacks now, establish rigorous monitoring, and invest in continuous education. Explore certifications and open-source toolkits today to keep your Robotics Sensing roadmap future-proof.

Disclaimer: Some content may be AI-generated or assisted and is provided ‘as is’ for informational purposes only, without warranties of accuracy or completeness, and does not imply endorsement or affiliation.