AI CERTs

3 hours ago

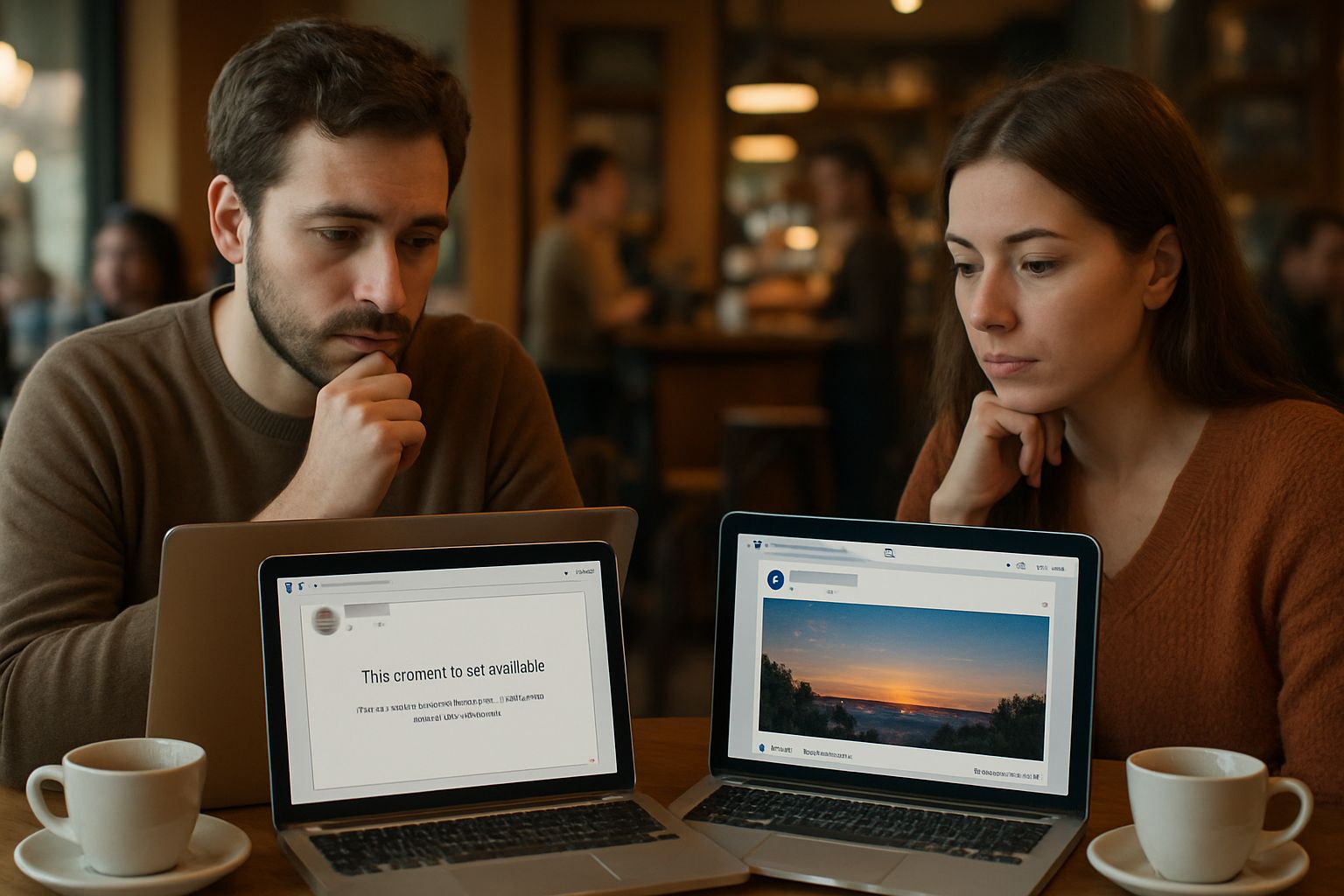

Automated Moderation Fuels Free Speech Tension Online

Platforms now lean heavily on algorithms to police conversations, and creators and activists report widespread frustration with sudden removals. Many see this struggle as a new layer of free speech tension online.

Automated systems work at superhuman scale, yet context often eludes them. Therefore, innocuous jokes, historical archives, and educational clips disappear without warning. Regulators monitor these mistakes as lawmakers draft stricter transparency rules.

Meanwhile, companies cite speed, safety, and cost as compelling reasons for automation. Industry data, however, reveal recurring false positives and linguistic gaps. This article explores the technical roots, documented failures, and emerging remedies shaping the debate. Readers will gain insight into risks, responsibilities, and next steps for resilient moderation.

Algorithms Face Heavy Scrutiny

Academic audits now quantify where automated models falter. In June 2025, researchers tested Twitch’s AutoMod against hateful chat messages and found that 94% of them evaded detection. At the same time, AutoMod blocked 89.5% of benign, educational uses of sensitive terms.

- 94% of hateful messages bypassed Twitch AutoMod filters.

- 89.5% of benign uses were blocked, heightening free speech tension.

- Commercial APIs misclassified reclaimed slurs, revealing systemic flaws.

Similar experiments on commercial APIs showed over-moderation of reclaimed slurs, while coded hostility slipped through untouched. Errors therefore run in both directions: too much benign speech is removed and too much hate stays up. Consequently, scrutiny from the press, NGOs, and investors has intensified.
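Audits like these usually reduce to two error rates computed on a human-labeled sample: the share of hateful messages the system let through, and the share of benign messages it blocked. The sketch below is an illustration only, not the Twitch study’s method; the `AuditSample` record and the toy data are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AuditSample:
    """One labeled message in a hypothetical audit set."""
    text: str
    is_hateful: bool   # ground-truth label assigned by human annotators
    was_blocked: bool  # decision returned by the moderation system under test


def audit_rates(samples):
    """Return (miss_rate, overblock_rate) for a labeled audit sample."""
    hateful = [s for s in samples if s.is_hateful]
    benign = [s for s in samples if not s.is_hateful]
    if not hateful or not benign:
        raise ValueError("audit set needs both hateful and benign examples")
    # Share of hateful messages that were NOT blocked (false negatives).
    miss_rate = sum(not s.was_blocked for s in hateful) / len(hateful)
    # Share of benign messages that WERE blocked (false positives).
    overblock_rate = sum(s.was_blocked for s in benign) / len(benign)
    return miss_rate, overblock_rate


# Toy data with placeholder texts, not real audit messages.
toy_samples = [
    AuditSample("hateful-1", True, False),
    AuditSample("hateful-2", True, False),
    AuditSample("hateful-3", True, False),
    AuditSample("hateful-4", True, True),
    AuditSample("benign-1", False, True),
    AuditSample("benign-2", False, False),
    AuditSample("benign-3", False, False),
    AuditSample("benign-4", False, False),
]

miss, overblock = audit_rates(toy_samples)
print(f"missed hateful: {miss:.1%}, blocked benign: {overblock:.1%}")
```

Both rates need to be reported together, since a system can push either one toward zero simply by letting the other grow.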

Such findings amplify free speech tension across diverse communities. They also set the stage for examining the direct harms next.

False Positives Harm Communities

False positives inflict immediate economic and civic damage. Creators lose revenue when videos vanish during peak traffic. Journalists covering extremism see source material erased mid-story. Speakers of marginalized languages also face higher takedown rates because models have limited training data for those languages.

Reports from New America describe opaque, sluggish appeal channels, so wrongful removals often persist for days. Platform transparency reports publish reversal metrics but rarely break them down by language. This opacity deepens community mistrust and fuels ongoing censorship claims.
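A language breakdown of the kind these reports omit needs little more than tagging each appeal with the language of the removed content. The sketch below assumes a hypothetical appeal log of `(language, was_reversed)` pairs with toy counts; it shows how a modest aggregate reversal rate can hide a much higher rate in a smaller language.

```python
from collections import defaultdict


def reversal_rates(appeals):
    """Return (aggregate_rate, per_language_rates) from (language, was_reversed) pairs."""
    totals = defaultdict(int)
    reversals = defaultdict(int)
    for language, was_reversed in appeals:
        totals[language] += 1
        if was_reversed:
            reversals[language] += 1
    if not totals:
        raise ValueError("no appeals to summarize")
    aggregate = sum(reversals.values()) / sum(totals.values())
    per_language = {lang: reversals[lang] / totals[lang] for lang in totals}
    return aggregate, per_language


# Toy appeal log: 8 English appeals (1 reversed), 2 Swahili appeals (1 reversed).
appeals = [("en", i == 0) for i in range(8)] + [("sw", i == 0) for i in range(2)]
aggregate, by_language = reversal_rates(appeals)
print(f"aggregate: {aggregate:.0%}")
for lang, rate in sorted(by_language.items()):
    print(f"{lang}: {rate:.1%}")
```

Here the 20% aggregate sits next to a 50% reversal rate for the smaller language, a gap that only a per-language breakdown exposes.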

Collectively, these harms escalate free speech tension across regions. The opposite problem, under-moderation, exposes users to hate and is explored below.

Under-Moderation Lets Hate Thrive

Under-moderation undermines the safety promises platforms make. Audit data show hateful content bypassing filters at alarming rates, leaving targets vulnerable to harassment and radicalization. High proactive detection figures in aggregate dashboards can mask these gaps, because a proactive rate counts only content that was actioned and says nothing about violations that were never caught.
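The masking effect follows from how a proactive rate is usually defined: the share of actioned content that automation caught before any user reported it. Misses never enter that denominator. The figures below are hypothetical, and the prevalence estimate is assumed to come from a separate audit sample.

```python
def proactive_rate(auto_actioned, user_reported_actioned):
    """Share of actioned items that automation found before any user report."""
    return auto_actioned / (auto_actioned + user_reported_actioned)


def detection_recall(auto_actioned, user_reported_actioned, estimated_violating):
    """Share of ALL violating items that were actioned at all.

    `estimated_violating` must come from an independent sample (e.g. an audit),
    because dashboards only count content that was actioned.
    """
    return (auto_actioned + user_reported_actioned) / estimated_violating


# Hypothetical figures for one reporting period.
auto, reported = 950, 50
violating = 5000  # assumed prevalence estimate from a separate audit sample

print(f"proactive rate: {proactive_rate(auto, reported):.0%}")
print(f"recall: {detection_recall(auto, reported, violating):.0%}")
```

In this toy case the dashboard would show a 95% proactive rate even though only a fifth of violating content was actioned.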

The Twitch study highlighted the precision-recall trade-off that designers must balance. Lowering the score threshold at which content is flagged shrinks false negatives but raises false positives, and raising it does the reverse. Engineering teams therefore juggle competing risk profiles daily, while policymakers monitor these dynamics for signs of systemic discrimination.
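The trade-off can be made concrete with a threshold sweep over classifier scores. This is a generic sketch with toy scores and labels, not Twitch’s implementation; it assumes content is flagged when its score meets or exceeds the threshold.

```python
def confusion_at_threshold(scores, labels, threshold):
    """Count (tp, fp, fn, tn) when items scoring >= threshold are flagged."""
    tp = fp = fn = tn = 0
    for score, is_violation in zip(scores, labels):
        flagged = score >= threshold
        if flagged and is_violation:
            tp += 1
        elif flagged:
            fp += 1
        elif is_violation:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn


# Toy classifier scores with ground-truth labels (True = violating).
scores = [0.95, 0.80, 0.65, 0.55, 0.40, 0.30, 0.20, 0.10]
labels = [True, True, False, True, False, True, False, False]

for threshold in (0.9, 0.5, 0.25):
    tp, fp, fn, tn = confusion_at_threshold(scores, labels, threshold)
    precision = tp / (tp + fp) if tp + fp else float("nan")
    recall = tp / (tp + fn) if tp + fn else float("nan")
    print(f"threshold={threshold}: FP={fp} FN={fn} "
          f"precision={precision:.2f} recall={recall:.2f}")
```

Lowering the threshold from 0.9 to 0.25 drives false negatives from three to zero while false positives climb from zero to two. Because a benign item (0.65) outscores a violating one (0.55), no threshold removes both error types on this data.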

Persistent under-moderation sustains free speech tension by normalizing abusive speech. Regulatory attention is therefore intensifying, as the next section describes.

Regulators Intensify Oversight Pressure

The European Union’s Digital Services Act mandates risk assessments and independent audits for the largest platforms. Platforms must document the accuracy of automated filtering and their human oversight processes. OECD guidelines add global expectations for transparency and appeals data. Civil-society coalitions also demand granular reporting on performance by language, and new policy proposals envision mandatory model registry disclosures.

Regulators use appeal reversal rates as proxy indicators of error. However, inconsistent reporting stymies comparison: some companies release country-level dashboards, while others publish only broad aggregates. Watchdogs therefore urge standardized metrics and independent audits.

Tighter oversight aims to ease free speech tension through accountability. Yet systemic bias challenges remain, as the following section explores.

Bias Clouds Language Detection

Academic teams have documented algorithmic bias against dialects and reclaimed slurs. Models over-penalize identity terms like “black” while missing coded hostility. Non-English languages also receive far less training data, so error rates spike in minority communities.
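One common way to quantify this kind of bias is to compare false positive rates on benign content across groups, for example posts written in different dialects. The sketch below assumes each audit record carries a hypothetical group tag; the group names and counts are toy values, not findings from the studies above.

```python
from collections import defaultdict


def false_positive_rate_by_group(records):
    """records: (group, is_violation, was_flagged) tuples.

    Only benign items (is_violation == False) count toward a false positive rate.
    """
    benign = defaultdict(int)
    flagged = defaultdict(int)
    for group, is_violation, was_flagged in records:
        if is_violation:
            continue
        benign[group] += 1
        if was_flagged:
            flagged[group] += 1
    return {group: flagged[group] / benign[group] for group in benign}


def disparity_ratio(rates, group, reference):
    """How many times more often `group`'s benign posts are flagged than `reference`'s."""
    if rates[reference] == 0:
        return float("inf")
    return rates[group] / rates[reference]


# Toy audit: 10 benign posts per group, plus one violating post that is ignored.
records = (
    [("reference_dialect", False, i < 1) for i in range(10)]
    + [("minority_dialect", False, i < 4) for i in range(10)]
    + [("minority_dialect", True, True)]
)
rates = false_positive_rate_by_group(records)
print(rates)
print(disparity_ratio(rates, "minority_dialect", "reference_dialect"))
```

A ratio well above 1 signals that benign speech from one group is removed disproportionately, even when overall accuracy looks acceptable.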

Audits presented at CHI 2025 found over-moderation of counter-speech defending targeted groups, while implicit hate stayed online longer. These disparities worsen perceptions of censorship and systemic injustice, which keeps bias mitigation a research priority.

Addressing bias will relieve free speech tension only if paired with human judgement. Human oversight strategies are detailed next.

Human Review Remains Crucial

Human-in-the-loop designs add contextual understanding to machine output. Moderators can override incorrect flags and refine thresholds, while uncertainty scores steer reviewers toward borderline cases. Graduated actions, such as labels, offer alternatives to outright removal.
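A human-in-the-loop pipeline with graduated actions can be sketched as score bands: confident cases are handled automatically, the uncertain middle goes to a moderator, and mild cases get a label instead of deletion. The band boundaries and the `Action` names below are hypothetical defaults chosen for illustration, not values any platform is known to use.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    LABEL = "label"
    HUMAN_REVIEW = "human_review"
    REMOVE = "remove"


def route(score, remove_at=0.95, review_at=0.70, label_at=0.50):
    """Map a classifier score in [0, 1] to a graduated action.

    Thresholds are illustrative assumptions and would be tuned per policy area.
    """
    if not (label_at <= review_at <= remove_at):
        raise ValueError("thresholds must satisfy label_at <= review_at <= remove_at")
    if score >= remove_at:
        return Action.REMOVE        # high confidence: automatic removal
    if score >= review_at:
        return Action.HUMAN_REVIEW  # borderline: queue for a moderator
    if score >= label_at:
        return Action.LABEL         # mild concern: keep online with a label
    return Action.ALLOW


for s in (0.99, 0.80, 0.60, 0.10):
    print(s, route(s).value)
```

Moderator decisions on the review queue can then feed back into where the band boundaries sit.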

Professionals can validate their skills through the AI Security Level-2 certification. Such credentials bolster governance programs within product teams, so human expertise can complement algorithms without crippling scale.

Combined review processes ease free speech tension and boost trust. Strategic roadmaps for governance close our analysis.

Roadmap Toward Balanced Governance

Experts propose multi-layer solutions for sustainable moderation. First, publish standardized metrics covering proactive rates, false positives, and appeals. Second, conduct regular red-teaming and multilingual stress tests. Third, involve civil-society reviewers in policy updates.

Policy sandboxes can also trial new models before full deployment, and platforms should apply graduated filtering rather than immediate deletion. Governance frameworks must nonetheless remain adaptable to emerging threats. Together, these steps can reduce censorship fears and algorithmic bias.
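A policy sandbox can be approximated by shadow deployment: a candidate model scores the same traffic as the production model, only production decisions are enforced, and both sets of decisions are compared against a human-labeled sample. The promotion rule and the data below are hypothetical.

```python
def error_counts(decisions, labels):
    """Return (false_positives, false_negatives) for flag decisions vs. labels."""
    fp = sum(d and not y for d, y in zip(decisions, labels))
    fn = sum(y and not d for d, y in zip(decisions, labels))
    return fp, fn


def sandbox_verdict(production, candidate, labels):
    """Compare a candidate model run in shadow mode against production.

    Hypothetical promotion rule: the candidate must not increase either error
    type and must strictly reduce at least one.
    """
    prod_fp, prod_fn = error_counts(production, labels)
    cand_fp, cand_fn = error_counts(candidate, labels)
    no_regression = cand_fp <= prod_fp and cand_fn <= prod_fn
    improves = cand_fp < prod_fp or cand_fn < prod_fn
    return {
        "production": (prod_fp, prod_fn),
        "candidate": (cand_fp, cand_fn),
        "promote": no_regression and improves,
    }


labels = [True, True, False, False, True, False]       # toy ground truth
production = [True, False, True, False, False, False]  # live model's flags
candidate = [True, True, False, False, False, False]   # shadow model's flags

print(sandbox_verdict(production, candidate, labels))
```

Requiring no regression on either error type keeps a candidate from trading fewer misses for more wrongful removals, or vice versa.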

Incremental progress can finally ease lingering free speech tension. The conclusion recaps key insights and next actions.

Conclusion And Next Steps

Automated flagging offers unmatched scale but imperfect judgement. Throughout this report we traced its failures, the oversight they have prompted, and paths to reform. Academic audits exposed both under-moderation and false positives, fueling free speech tension worldwide. Regulators now demand clearer metrics, stronger appeals, and responsible policy experimentation. Meanwhile, human review and credentials such as the AI Security Level-2 certification raise governance maturity. Balanced systems can therefore protect users without unnecessary censorship. Explore emerging audits, demand transparent dashboards, and pursue specialized training to shape safer platforms.