AI CERTS

AI Content Detection Crisis Hits Publishing

Scale pressure on publishers has intensified just as detectors face skilled evasion tactics. Author groups, meanwhile, demand transparent labelling to defend creative labour. Readers, retailers, and legal teams now navigate a blurred zone between human craft and algorithmic prose. Leading vendors tout vanishingly low false positives, yet independent labs dispute those claims. This report examines why publishers still struggle, how the tools work, and which policies may steady the market.

Surge Of AI Titles

Volume growth is the first threat experts cite. Bowker data confirm unprecedented output acceleration in 2025. More than four million ISBN records overwhelmed acquisitions staff and freelance readers. Consequently, publishers resorted to automated triage long before manuscripts reached editors. Basic detection proved inadequate.

However, those triage layers rely heavily on AI Content Detection meant to filter outright machine-written drafts. Self-publishing creators quickly learned how to rephrase, paraphrase, or "humanize" generated passages. Therefore, detectors face a moving target created at industrial speed.

These dynamics concentrate risk. Publishers fear reputational damage if covertly generated books pass unnoticed. Yet blocking every suspect file risks alienating honest authors. Consequently, leaders must balance speed with fairness.

Volume pressure compounds every challenge that follows. Any oversight strategy must therefore be designed with that scale in mind.

Detector Tools Under Fire

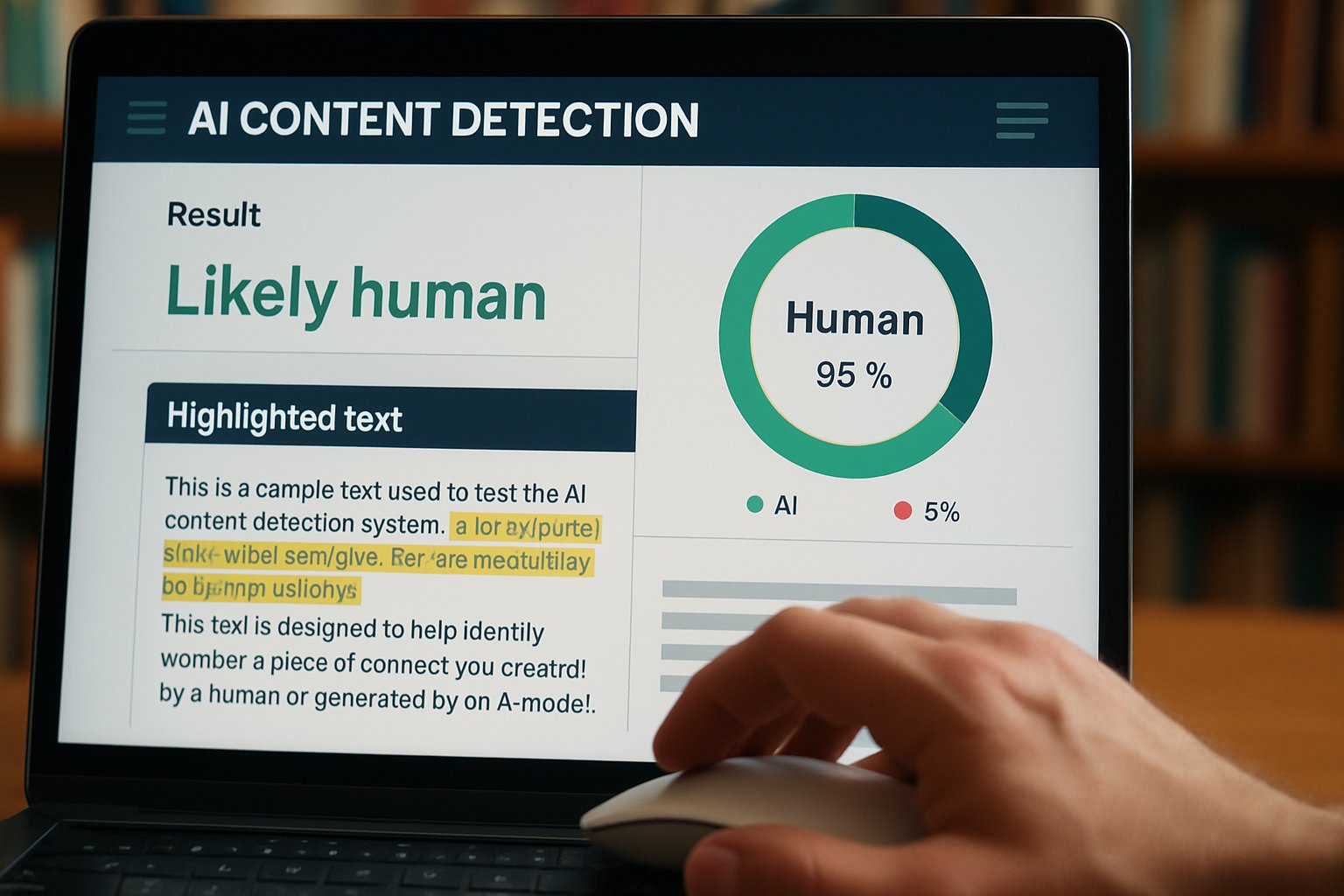

Many vendors promise robust accuracy. Pangram advertises a one-in-ten-thousand false positive rate on long fiction. Nevertheless, University of Chicago researchers recorded varied outcomes across four commercial engines. GPTZero and OriginalityAI missed polished passages, while RoBERTa-based detectors over-flagged human drafts.

Moreover, the "Almost AI, Almost Human" study highlighted systemic bias against prose written by ESL (English as a second language) authors. Such misfires create contractual peril because innocent writers risk wrongful accusations. Consequently, legal departments review every disputed flag manually, stretching resources thin.

AI Content Detection technologies share common statistical roots. Most measure perplexity, meaning how predictable a passage is to a language model, or the variance of its token distribution relative to training data. However, adversarial rewriting tools continuously adapt, eroding classifier confidence within weeks.
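To make those two signals concrete, here is a minimal sketch. It is not any vendor's method: it swaps the neural language model for a toy unigram model with add-one smoothing, and the function names (`fit_unigram`, `perplexity`, `burstiness`) are hypothetical. Perplexity here is the exponential of the average negative log-probability per word, and "burstiness" is taken to be the variance of per-sentence perplexity.

```python
import math
from collections import Counter
from statistics import pvariance


def fit_unigram(reference_text):
    """Count word frequencies in a reference corpus (a stand-in for a real language model)."""
    counts = Counter(reference_text.lower().split())
    return counts, sum(counts.values())


def perplexity(sentence, counts, total):
    """Perplexity of one sentence under the add-one-smoothed unigram model."""
    tokens = sentence.lower().split()
    if not tokens:
        raise ValueError("sentence has no tokens")
    vocab_size = len(counts) + 1  # +1 reserves probability mass for unseen words
    log_prob = sum(
        math.log((counts[tok] + 1) / (total + vocab_size)) for tok in tokens
    )
    return math.exp(-log_prob / len(tokens))


def burstiness(sentences, counts, total):
    """Population variance of per-sentence perplexity across a passage."""
    return pvariance([perplexity(s, counts, total) for s in sentences])


if __name__ == "__main__":
    counts, total = fit_unigram("the cat sat on the mat")
    # Familiar wording scores low; unfamiliar wording scores high.
    print(perplexity("the the", counts, total))
    print(perplexity("zebra", counts, total))
    print(burstiness(["the cat", "zebra quagga"], counts, total))
```

Uniformly low perplexity with little sentence-to-sentence variance is the kind of pattern such detectors tend to treat as machine-like, which is also why paraphrasing tools that inject variation can erode the signal.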

Detector shortcomings feed the wider sense of an industry struggling to cope. As a result, stakeholder patience with vendor marketing claims is wearing thin.

Policy And Label Push

While algorithms lag, author organisations pursue softer remedies. The Authors Guild expanded its voluntary "Human Authored" seal during 2026. Additionally, the Society of Authors launched a similar logo at the London Book Fair.

These labels carry no technical guarantee. Nevertheless, they create social pressure and document contractual intent. Some publishers now request the seal before signing debut writers.

Regulators also investigate training data disputes. French houses sued Meta over unlicensed use of copyrighted books. Meanwhile, US lawmakers debate disclosure mandates for AI Content Detection results during submission.

Certification avenues may grow. Professionals can enhance credibility through the AI Legal Strategist™ credential, which clarifies policy obligations around synthetic text.

Trust signals help, yet they do not replace reliable detection science. Consequently, hybrid frameworks appear inevitable.

Technical Limits Exposed Now

Researchers stress that detectors confront an arms race. Large models evolve monthly, learning to mask statistical fingerprints. Consequently, even segment-level classifiers drift quickly.

The University of Chicago working paper showed Pangram outperforming open-source baselines on balanced benchmarks. However, performance fell when evaluators introduced lightly edited AI passages: false negatives climbed even as false positives stayed low. That asymmetry complicates policy, because a flag remains credible while a clean scan proves little.

AI Content Detection, therefore, faces a precision-recall dilemma. Raising sensitivity can safeguard publishers, but it disproportionately harms honest authors. Conversely, lenient thresholds invite covert machine novels.
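The trade-off can be illustrated with a small threshold sweep. This is a sketch under stated assumptions: the scores in `SAMPLE` are invented for illustration and come from no real detector or study, and `confusion_at` and `rates` are hypothetical helper names.

```python
# Hypothetical (detector_score, is_ai) pairs; invented for illustration only.
SAMPLE = [
    (0.95, True), (0.90, True), (0.70, True), (0.40, True),      # AI drafts, the last two lightly edited
    (0.60, False), (0.30, False), (0.20, False), (0.10, False),  # human drafts
]


def confusion_at(threshold, scored):
    """Count outcomes when a manuscript is flagged if score >= threshold."""
    tp = sum(1 for s, ai in scored if s >= threshold and ai)
    fp = sum(1 for s, ai in scored if s >= threshold and not ai)
    fn = sum(1 for s, ai in scored if s < threshold and ai)
    tn = sum(1 for s, ai in scored if s < threshold and not ai)
    return tp, fp, fn, tn


def rates(threshold, scored):
    """Return (precision, recall, false_positive_rate); None where undefined."""
    tp, fp, fn, tn = confusion_at(threshold, scored)
    precision = tp / (tp + fp) if tp + fp else None
    recall = tp / (tp + fn) if tp + fn else None
    fpr = fp / (fp + tn) if fp + tn else None
    return precision, recall, fpr


if __name__ == "__main__":
    for threshold in (0.8, 0.5, 0.25):
        print(threshold, rates(threshold, SAMPLE))
```

On this toy data, a threshold of 0.8 flags no human drafts but misses half the AI ones, while 0.25 catches every AI draft and flags half the human ones. Real score distributions will differ, but the direction of the trade-off is the same.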

These technical gaps underpin the broader struggle. The empirical findings demand cautious interpretation before policy hardens.

Business Risks Mounting Fast

Financial consequences are already surfacing. Hachette cancelled "Shy Girl" after social media outrage and third-party scanner reports. Shareholder analysts estimated mid-six-figure sunk costs in advances, printing, and pulping.

Moreover, marketplace flooding with low-quality books depresses discoverability for legitimate debut talent. Consequently, retailers tighten listing rules, requiring AI Content Detection certificates or author attestations.

Reputational impacts exceed direct losses. Publishers must reassure readers that creative integrity endures. Meanwhile, consultants warn that class-action litigation could target negligent gatekeepers.

Risks concentrate minds. Therefore, boards allocate budget for combined technical, legal, and training responses.

Roadmap For Book Industry

Experts outline multi-layer defences. Firstly, combine at least two detector engines and manual review. Secondly, record thresholds and outcomes for audit readiness. Thirdly, incentivise transparency through public reporting.

Furthermore, investment in longer-form benchmarks will align AI Content Detection with real trade manuscripts. Standardised corpora should include AI-polished and bilingual samples.

Additionally, publisher contracts can specify acceptable AI assistance boundaries. Clear definitions reduce later disputes and articulate remediation steps.

- Deploy layered AI Content Detection with documented thresholds.

- Maintain secure logs for later audit and appeals.

- Train editors to recognise AI style cues beyond software alerts.

- Adopt certification schemes that complement detection.
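The first two items above could be sketched roughly as follows. Everything here is an assumption made for illustration: the engine names, the 0.8 threshold, the three outcome labels, and the helpers `triage` and `audit_line` are hypothetical, and no outcome rejects a manuscript automatically. Log security (access control, tamper evidence) is out of scope for the sketch.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass
class Decision:
    manuscript_id: str
    scores: dict       # engine name -> score in [0, 1]
    threshold: float   # recorded so the decision can be audited later
    outcome: str
    decided_at: str


def triage(manuscript_id, scores, threshold=0.8):
    """Combine at least two detector scores into a routing decision.

    - "clear": no engine reaches the threshold
    - "priority_review": every engine reaches the threshold
    - "manual_review": the engines disagree
    """
    if len(scores) < 2:
        raise ValueError("need scores from at least two detector engines")
    votes = [score >= threshold for score in scores.values()]
    if all(votes):
        outcome = "priority_review"
    elif any(votes):
        outcome = "manual_review"
    else:
        outcome = "clear"
    return Decision(
        manuscript_id=manuscript_id,
        scores=dict(scores),
        threshold=threshold,
        outcome=outcome,
        decided_at=datetime.now(timezone.utc).isoformat(),
    )


def audit_line(decision):
    """Serialise a decision as one JSON line for an append-only audit log."""
    return json.dumps(asdict(decision), sort_keys=True)


if __name__ == "__main__":
    decision = triage("ms-001", {"engine_a": 0.91, "engine_b": 0.35})
    print(audit_line(decision))
```

Routing even unanimous flags to a human reviewer, rather than rejecting outright, reflects the false-positive concerns raised earlier; a house with different risk tolerances might choose other outcomes.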

Finally, upskilling remains critical. Editors should pursue continual education; legal teams might secure the AI Legal Strategist™ badge to navigate emerging statutes.

Adopting this roadmap embeds AI Content Detection within broader governance. Consequently, operational resilience improves across the publishing lifecycle.

The literary market sits at a crossroads. AI Content Detection is improving, yet perfect certainty remains elusive. Even so, balanced governance now feels achievable. Publishers can merge layered software, legal standards, and voluntary labels, while authors gain simple ways to prove human craft. New certifications, in turn, build in-house fluency for contract and policy teams. Leaders should therefore act now: audit current workflows, upgrade tools, and invest in staff education. Readers still want authentic books, and trust drives sales. For deeper guidance, explore the credentials mentioned above and share emerging best practices across the field.