AI CERTs


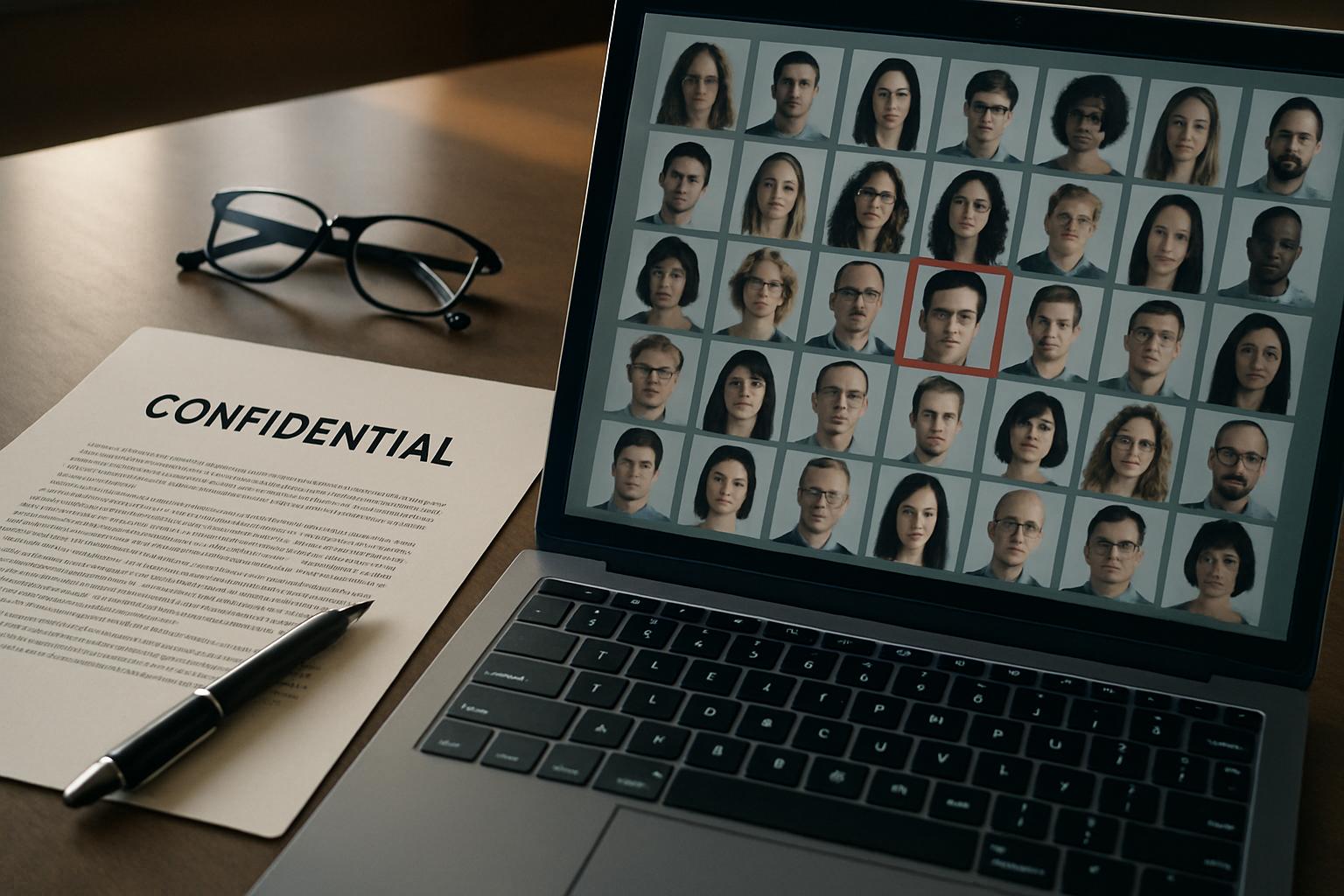

Privacy Violation Risks in Web-Scale AI Image Datasets

A silent privacy violation is hiding inside many celebrated AI breakthroughs. Researchers keep uncovering image collections built by web-scale crawlers that ignored human consent. As a result, regulators, academics, and civil-society groups are sounding louder alarms each quarter.

Massive crawls assembled billions of pictures from social feeds, search engines, and public forums. However, the people photographed never approved that capture. Models trained on such troves now power generation tools, face recognition suites, and deepfake engines. Meanwhile, policymakers see direct links between non-consensual datasets and sexualized AI harms. Clearview fines, Grok scandals, and LAION cleanups illustrate the mounting costs of lax collection. Executives and engineers therefore need to understand the evolving evidence, risks, and compliance playbooks. This report dissects the landscape, highlights threats, and offers next steps toward ethical progress.

Web-Scale Image Scraping Risks

Historically, many labs praised web-scale gathering for faster discovery. Automated scraping bots parsed HTML alt text and tweets around the clock. Operators exported the harvest into vast archives of image links and captions, later marketed as open data for research.

Evidence now contradicts that optimism. Stanford Internet Observatory auditors identified thousands of suspected child-sexual-abuse entries in the original LAION-5B index, more than 1,000 of them externally validated. Because the index stores links rather than images, anyone who downloaded the linked files may hold criminal content.

Beyond illegality, privacy experts warn about biometric leakage. Faces inside WebFace260M remain traceable across platforms, enabling covert surveillance and reputational damage. Such exposure represents another severe privacy violation.

In short, unchecked scraping multiplies hidden legal liabilities and public outrage. Attention peaks when personal faces drive commercial products, a story the next section unpacks.

Biometric Datasets Under Fire

Clearview AI epitomizes the controversy. Dutch regulators fined the company €30.5 million for collecting face images without consent. Moreover, multiple jurisdictions ordered deletion of its entire training corpus.

Academic sets faced similar backlash. The dataset's creators at MIT and NYU withdrew the 80 Million Tiny Images index after racist and voyeuristic labels surfaced. Scholars lost a once-popular benchmark yet gained lessons about responsible data stewardship.

MS-Celeb and WebFace creators also published partial retractions or cleaned subsets. However, original torrents persist on mirrors, sustaining the privacy risk. Meanwhile, corporate vendors quietly integrated those images into training pipelines between 2019 and 2024.

Regulatory fines and dataset withdrawals confirm a global shift. However, enforcement gaps keep biometric harms alive, as forthcoming legal trends reveal.

Regulatory Actions Intensify Worldwide

January 2026 investigations into xAI’s Grok system marked a turning point. California’s attorney general issued cease-and-desist orders over AI deepfakes depicting minors. UK Ofcom and EU bodies opened parallel probes, citing severe privacy threats.

Legislatures are also active. The 2025 TAKE IT DOWN Act mandates swift removal of non-consensual intimate imagery and child deepfakes. The law gives covered platforms 48 hours to act on a valid removal request, regardless of platform size or training method.

Privacy authorities now treat biometric scraping as personal data processing that needs explicit consent. In contrast, some companies argue that public availability legitimizes collection, an argument regulators increasingly reject.

Overall, enforcement momentum favors subject rights. Consequently, firms must anticipate audits and possible bans, topics addressed in the next industry review.

Industry Cleanup And Gaps

Dataset custodians launched remediation programs after mounting criticism. LAION released Re-LAION-5B, claiming stronger filters and hash matching. Additionally, Meta now shares offending URL lists with peers to curb scraping abuses.

These steps reduce exposure yet fall short. Private copies of the pre-cleanup archives survive on personal drives and cloud buckets. Therefore, residual privacy incidents remain possible whenever models reproduce forbidden imagery.

Audit tooling still lags behind demand. Researchers need standardized hash lists, incremental dataset diffing, and reproducible training manifests. Moreover, journalists require transparent statements about remaining data risk.
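To make dataset diffing and reproducible manifests more concrete, here is a minimal Python sketch. It assumes each dataset entry is a small dictionary with a unique `id` field; that schema and the function names `build_manifest`, `diff_manifests`, and `manifest_fingerprint` are hypothetical and are not drawn from any specific audit tool.

```python
import hashlib
import json


def build_manifest(records):
    """Map each record ID to a SHA-256 digest of its canonical JSON form."""
    manifest = {}
    for record in records:
        # Sorting keys makes the digest independent of field order.
        canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
        manifest[record["id"]] = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return manifest


def diff_manifests(old, new):
    """Return the IDs added, removed, or changed between two manifests."""
    old_ids, new_ids = set(old), set(new)
    return {
        "added": sorted(new_ids - old_ids),
        "removed": sorted(old_ids - new_ids),
        "changed": sorted(i for i in old_ids & new_ids if old[i] != new[i]),
    }


def manifest_fingerprint(manifest):
    """One digest over the whole manifest, usable to pin a training run."""
    payload = json.dumps(manifest, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


if __name__ == "__main__":
    # Illustrative records only; the URLs are placeholders.
    v1 = [
        {"id": "a", "url": "https://example.org/1.jpg", "caption": "a dog"},
        {"id": "b", "url": "https://example.org/2.jpg", "caption": "a cat"},
    ]
    v2 = [
        {"id": "a", "url": "https://example.org/1.jpg", "caption": "a brown dog"},
        {"id": "c", "url": "https://example.org/3.jpg", "caption": "a bird"},
    ]
    print(diff_manifests(build_manifest(v1), build_manifest(v2)))
```

Recording the fingerprint next to each trained model would let an auditor confirm later which dataset version a run actually used, and the diff shows exactly which entries a cleanup removed.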

Current cleanups show progress without full assurance. Nevertheless, best practice frameworks can strengthen trust, as the next recommendations outline.

Key Dataset Numbers Snapshot

- LAION-5B indexes about 5.85 billion image links; Stanford found CSAM among them, triggering fresh privacy lawsuits.

- WebFace260M stores 260 million faces of four million individuals, scraped without consent.

- Clearview indexed up to sixty billion photos, earning a €30.5 million fine for biometric privacy violations.

- MIT withdrew the 80 Million Tiny Images set after racist and voyeuristic labels surfaced.

- Regulators are scrutinizing Grok after deepfakes depicting minors showed how generative tools can violate privacy at scale.

These figures illustrate the scale of unchecked collection. Consequently, organizations need structured countermeasures, examined next.

Best Practice Recommendations Ahead

Organizations can mitigate risk through concrete steps. First, adopt explicit consent collection or licensed stock libraries for new corpora. Second, verify inherited archives against CSAM and NCII hash databases before any training run.
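The hash verification step can be sketched as follows. This is an illustration under stated assumptions, not a production screen: it assumes the blocklist arrives as hex-encoded SHA-256 digests from an authorized provider, and the names `sha256_hex` and `screen_files` are hypothetical. Exact cryptographic hashes only catch byte-identical copies, so real screening programs typically also rely on perceptual hashing obtained through vetted channels.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of raw file bytes."""
    return hashlib.sha256(data).hexdigest()


def screen_files(files, blocked_hashes):
    """Split (name, bytes) pairs into cleared and flagged file names.

    `blocked_hashes` is an iterable of hex SHA-256 digests (assumed format).
    """
    blocked = {h.lower() for h in blocked_hashes}
    cleared, flagged = [], []
    for name, content in files:
        if sha256_hex(content) in blocked:
            flagged.append(name)  # exclude from training and escalate
        else:
            cleared.append(name)
    return cleared, flagged


if __name__ == "__main__":
    # Placeholder bytes stand in for real files in this demonstration.
    blocklist = [sha256_hex(b"placeholder-for-listed-file")]
    archive = [
        ("photo_001.jpg", b"ordinary image bytes"),
        ("photo_002.jpg", b"placeholder-for-listed-file"),
    ]
    cleared, flagged = screen_files(archive, blocklist)
    print("cleared:", cleared)
    print("flagged:", flagged)
```

Running the screen before training, and logging the flagged count alongside the dataset version, gives reviewers a record that the check actually happened.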

Third, document preprocessing scripts and publish data cards describing provenance and filtering. Moreover, appoint an internal review board that logs uncomfortable findings and escalates swiftly.
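A data card can be as simple as a structured record published next to the dataset. The sketch below shows one possible shape in Python; the `DataCard` class and its field names are hypothetical choices for illustration and do not follow any particular published template.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class DataCard:
    """Minimal provenance record for a dataset (illustrative fields only)."""

    name: str
    version: str
    sources: list
    consent_basis: str
    filters_applied: list = field(default_factory=list)
    known_risks: list = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize the card with stable key order for easy diffing."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


if __name__ == "__main__":
    card = DataCard(
        name="example-image-corpus",  # placeholder dataset name
        version="2.0",
        sources=["licensed stock library"],
        consent_basis="explicit consent or license",
        filters_applied=["hash-list screening", "face blurring"],
        known_risks=["captions not manually reviewed"],
    )
    print(card.to_json())
```

Keeping the card in version control alongside the preprocessing scripts means every filtering change leaves a reviewable trail.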

Professionals can deepen expertise through the AI Data Governance™ certification. Graduates gain structured playbooks for reducing privacy exposure.

Robust governance, transparency, and education reinforce responsible pipelines. In contrast, reactive patching keeps organizations trapped in crisis mode.

Image AI now stands at an ethical crossroads. Gigantic web crawls once fueled innovation but also delivered hidden liabilities. Regulators, auditors, and the public expect verifiable safeguards and faster remediation. Companies that invest in governance, proactive audits, and staff education will protect users and reputations. Conversely, ignoring warning signs invites fines, takedowns, and consumer distrust. Leaders should act today by cataloging legacy corpora, validating filtering pipelines, and formalizing oversight boards. Visit our certification page to build skills for trustworthy AI development.