AI CERTs

2 weeks ago

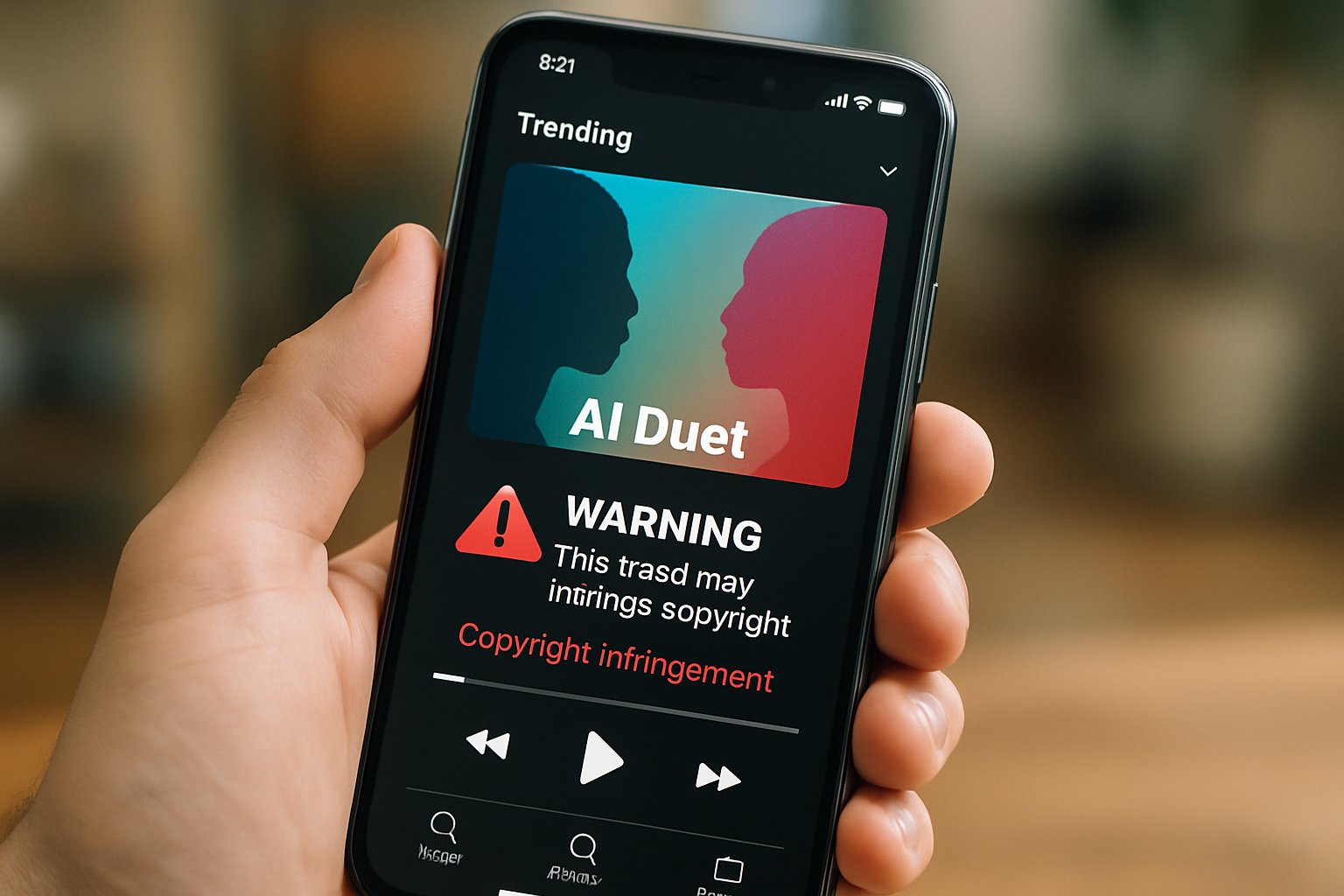

Fake Artist Copyright Crackdown Hits Viral AI Music Duets

A viral duet can crown unknown producers overnight. However, the same clip might vanish hours later amid takedown chaos. That disappearing act reflects a wider fight that legal teams and platforms label Fake Artist Copyright. Over the past year, streaming leaders shifted from sporadic removals to formal AI music rulebooks. Spotify, Apple Music, and Deezer now label or delete synthetic duets that exploit star voices. Consequently, the industry debates fairness, provenance, and IP protection with new urgency. Major labels lobby fiercely, while independent creators defend generative tools as democratizing. Meanwhile, regulators watch chart manipulation cases involving both unknown acts and superstars like Drake. This article maps the timeline, numbers, and technology behind the sudden crackdown. Professionals will gain clear strategies to navigate policy shifts and safeguard revenue streams.

Viral Duets Surge Now

TikTok popularized the side-by-side duet format. Subsequently, Instagram and YouTube imported similar remix features to feed fandom loops. Streaming spikes follow each viral challenge because short clips funnel listeners to full tracks.

During November 2025, the AI act Breaking Rust saw "Walk My Walk" top niche charts. However, the track vanished days later after impersonation claims proved credible. That sequence pushed platforms to treat Fake Artist Copyright violations as systemic, not isolated incidents.

Viral duets now drive both discovery and disruption. However, rising misuse sets the stage for stricter enforcement ahead.

Platforms Tighten AI Detection

Spotify said it had removed 75 million suspected spam tracks over 12 months when it announced new AI rules in September 2025. Deezer reports 50,000 fully synthetic uploads per day, representing 34% of daily deliveries. Alongside these removals, recommendation algorithms received upgrades that demote unlabeled generative submissions.
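As a quick sanity check on scale, the two Deezer figures imply a total daily delivery volume. The short calculation below is only a back-of-the-envelope sketch: it assumes both numbers describe the same period, and the variable names are illustrative.

```python
# Figures quoted above for Deezer.
synthetic_uploads_per_day = 50_000
synthetic_share = 0.34  # 34% of daily deliveries

# Implied totals, assuming both figures describe the same period.
total_per_day = synthetic_uploads_per_day / synthetic_share
non_synthetic_per_day = total_per_day - synthetic_uploads_per_day

print(f"Implied total deliveries per day: {total_per_day:,.0f}")
print(f"Implied non-synthetic deliveries per day: {non_synthetic_per_day:,.0f}")
```

Under that assumption, Deezer would be receiving roughly 147,000 tracks per day, of which about 97,000 are not fully synthetic.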

Apple Music chose disclosure over deletion by launching voluntary Transparency Tags in March 2026. Furthermore, each platform harmonizes metadata with the DDEX standard to ensure cross-service consistency. Compliance failure can trigger removal under Fake Artist Copyright safeguards.

- 75 million tracks purged by Spotify in 12 months

- 50,000 daily AI uploads flagged by Deezer

- 97% of listeners failed to spot AI vocals in a Deezer study

- Millions of streams earned by Breaking Rust before takedown

The figures underscore the scale and speed at which synthetic music is flooding services. Therefore, automated detection emerges as a business necessity, not mere experimentation.

Analysts note that Drake impersonations surge whenever new voice models leak online. In contrast, legitimate remixes featuring the real singer still benefit from duet trends. Such confusion blurs fan loyalty and confounds royalty systems reliant on accurate IP mapping.

Artists Demand Strong Action

Many performers feel exposed by hyper-realistic cloning. Blanco Brown declared, "If someone will sing like me, it should be me." Moreover, major labels threaten litigation when Fake Artist Copyright infractions dilute catalog integrity.

Ed Newton-Rex warns that hyperscale AI introduces an unfair competitor built on existing artistry. Consequently, lobbying intensified for mandatory AI disclosure and faster takedowns. Independent musicians, however, argue that generative tools open doors once barred by costly studios.

Both sides agree impersonation erodes trust when left unchecked. Therefore, consensus forms around transparent labeling paired with responsive enforcement.

Technology Behind New Filters

Detection engines analyze spectral fingerprints, lyric patterns, and upload behavior in near real time. Subsequently, suspect files receive confidence scores feeding manual review queues. Some systems cross-reference voice embeddings against protected libraries to prevent unlicensed Drake clones.
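The embedding cross-reference and confidence-score routing described above can be sketched roughly as follows. This is a minimal illustration, not any platform's actual system: the function names, the 0.85 threshold, and the three-dimensional toy vectors are all assumptions, and real voice embeddings would come from a trained speaker model with far more dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def best_match(upload_embedding, protected_library):
    """Return (artist, similarity) for the closest protected voice."""
    best_artist, best_score = None, -1.0
    for artist, reference in protected_library.items():
        score = cosine_similarity(upload_embedding, reference)
        if score > best_score:
            best_artist, best_score = artist, score
    return best_artist, best_score


def route(upload_embedding, protected_library, review_threshold=0.85):
    """Attach a confidence score and send close matches to manual review."""
    artist, score = best_match(upload_embedding, protected_library)
    decision = "manual_review" if score >= review_threshold else "pass"
    return {"closest_artist": artist, "confidence": score, "decision": decision}


# Toy protected library with hypothetical artists and made-up vectors.
library = {
    "artist_a": [1.0, 0.0, 0.0],
    "artist_b": [0.0, 1.0, 0.0],
}

close_upload = route([0.9, 0.1, 0.0], library)      # very similar to artist_a
distant_upload = route([0.5, 0.5, 0.7], library)    # not close to either voice
print(close_upload["decision"], distant_upload["decision"])
```

Routing matches to a review queue rather than deleting them automatically reflects the article's point that the score feeds human review. Where the threshold sits is a policy choice that trades missed clones against false positives.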

Platform spokespeople stress that models evolve weekly to counter adversarial manipulations. Nevertheless, false positives remain a reputational risk, especially for experimental electronic genres. Policy teams invoke Fake Artist Copyright thresholds to justify temporary removals during review.

Adaptive AI governance now intertwines engineering and legal functions. Consequently, cross-disciplinary expertise is becoming a hiring priority for every major streaming service.

Metadata Standards Gain Ground

DDEX fields allow labels to declare AI usage across recordings, compositions, artwork, and video. Apple Music’s Transparency Tags mirror the schema and rely on voluntary compliance today. Furthermore, Spotify pledges to make disclosure mandatory for payout eligibility next fiscal year.

Clear provenance benefits copyright audits, revenue splits, and recommendation accuracy. In contrast, missing tags trigger Fake Artist Copyright red flags inside automated dashboards.
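A pipeline check for missing disclosure tags might look something like the sketch below. The `ai_disclosure` key and the dictionary layout are hypothetical stand-ins for illustration only; they are not actual DDEX field names. The four areas come from the categories listed above.

```python
# Areas where AI usage can be declared, per the categories named above.
AI_DISCLOSURE_AREAS = ("recording", "composition", "artwork", "video")


def missing_disclosures(release):
    """List the areas for which the release carries no AI-usage declaration."""
    declared = release.get("ai_disclosure", {})  # hypothetical key, not a DDEX field name
    return [area for area in AI_DISCLOSURE_AREAS if area not in declared]


def flag_release(release):
    """Build a dashboard-style record: flagged when any declaration is absent."""
    missing = missing_disclosures(release)
    return {"title": release.get("title"), "flagged": bool(missing), "missing": missing}


# Example releases with made-up titles. True means AI was used in that area.
complete = {
    "title": "Example Track A",
    "ai_disclosure": {"recording": True, "composition": False, "artwork": False, "video": False},
}
incomplete = {
    "title": "Example Track B",
    "ai_disclosure": {"recording": False},
}

complete_result = flag_release(complete)
incomplete_result = flag_release(incomplete)
print(complete_result)
print(incomplete_result)
```

Note that the check flags an absent declaration, not AI usage itself: a track that openly declares AI vocals passes, while a track that declares nothing is flagged.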

Professionals can enhance expertise through specialized certifications. Consider the AI+ Quantum Specialist™ program covering advanced metadata architectures.

Standardized labels offer a scalable alternative to endless whack-a-mole takedowns. Therefore, metadata adoption will likely accelerate across the industry this quarter.

Business Risks And Opportunities

Unchecked fakery threatens royalty pools by flooding catalogs with low-value content. Labels fear revenue dilution and brand damage when Drake knockoffs mislead fans. Meanwhile, legitimate AI collaborations create fresh engagement models and lower production costs.

Platforms monetize exclusive detection APIs for partners, opening a new B2B revenue lane. Moreover, analytics derived from provenance data inform marketing spend with unprecedented precision. Stakeholders that ignore Fake Artist Copyright risk regulatory penalties and reputational loss.

- Offer verified remix packs to creators

- License voice models with contractual IP protections

- Bundle transparency metrics into artist dashboards

Risks and rewards hinge on robust governance. Consequently, proactive adaptation decides competitive advantage in the evolving streaming ecosystem.

Strategic Takeaways For Professionals

Legal teams should audit catalogs against new platform policies quarterly. Developers must integrate DDEX identifiers early within content pipelines. Additionally, marketing leaders should monitor duet trends to pre-empt Fake Artist Copyright misuse.

Investing in cross-training between data science and IP law widens career pathways. Moreover, completion of the AI+ Quantum Specialist™ certification signals command of AI governance. Finally, regular policy briefings keep creators informed and compliant.

Clear processes empower innovation while containing abuse. Therefore, the industry can embrace AI without sacrificing trust or royalties.

AI duet culture will continue reshaping music discovery at blistering speed. However, recent policy shifts demonstrate that self-regulation alone is insufficient. Platforms, artists, and listeners all benefit when Fake Artist Copyright safeguards operate transparently. Standardized metadata, adaptive filters, and educational certifications form a pragmatic defense stack. Consequently, stakeholders who act early will capture new revenue while avoiding headline scandals. Professionals should schedule policy audits, deploy detection APIs, and address Fake Artist Copyright exposure proactively. Explore the linked certification to future-proof skills and lead responsible AI innovation.