AI CERTS


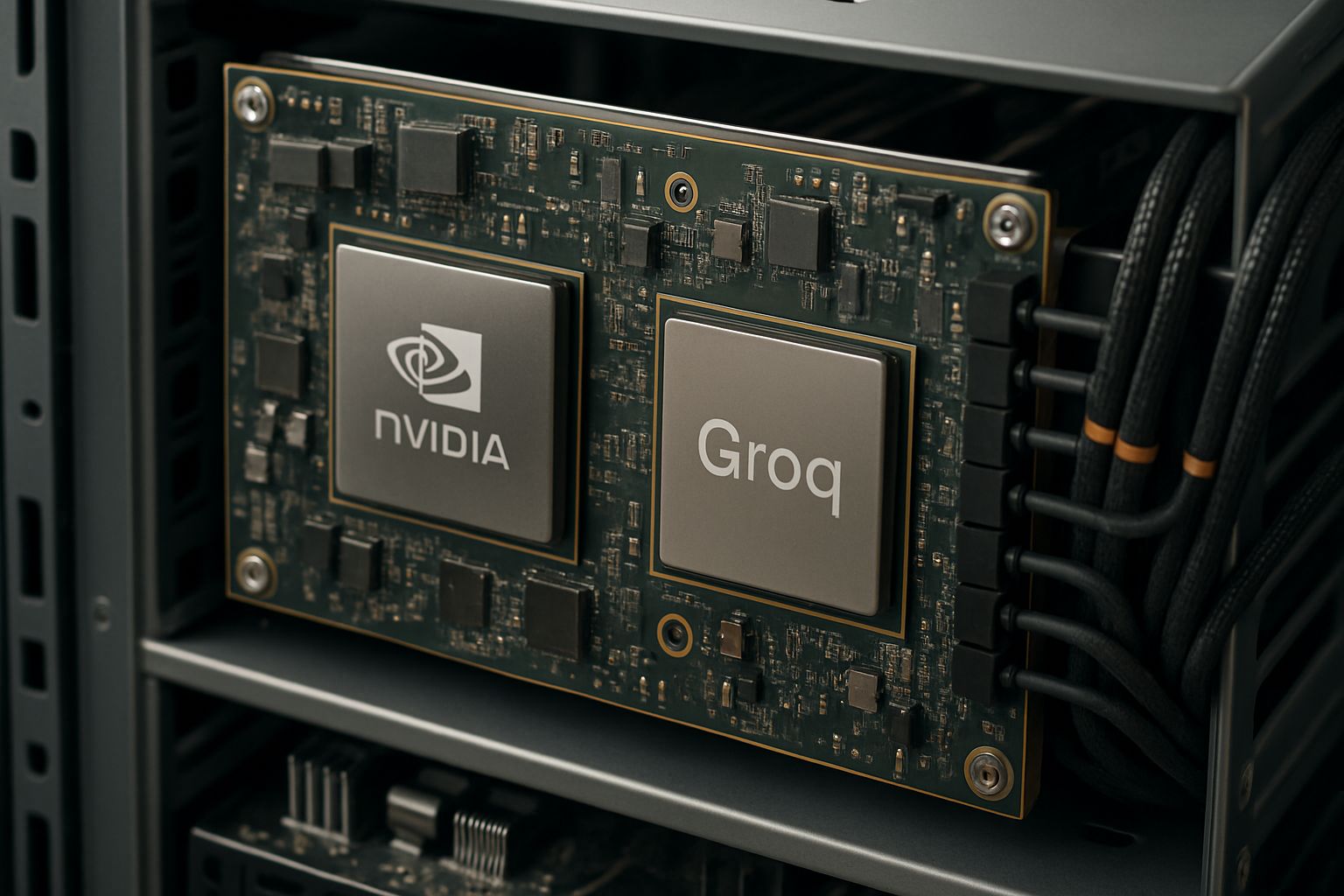

Nvidia, Groq, and Computational Latency Optimization

Nvidia’s $20 billion licensing deal with Groq goes far beyond a routine talent acquisition: it shifts technical roadmaps, valuation metrics, and regulatory debates around inference chips. This article unpacks the numbers, technology, and strategic stakes for engineering leaders.

Groq License Market Shake-up

Groq framed the license as a way to scale its deterministic LPU silicon without surrendering independence, and the $20 billion headline value signalled strength despite venture-funding headwinds.

Nvidia paid $13 billion in cash at closing, with another $4 billion payable within twelve months. It also booked $14.4 billion of goodwill and $2.5 billion of developed-technology intangibles related to the Groq deal.

Investors read the transaction as a reverse acqui-hire: it narrows rivalry in inference chips while keeping the two companies legally separate.

These numbers confirm a substantial commitment, but they also raise the antitrust questions explored below.

Nvidia Integration Roadmap Insights

By March 2026, Nvidia had showcased Groq-derived LPUs inside its Rubin AI-factory racks, with demos highlighting token-level latency gains reaching single-digit microseconds.

Such performance aligns with the core promise of Computational Latency Optimization, enabling real-time voice agents and iterative coding copilots without perceptible lag.
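Claims about token-level latency are easiest to evaluate with your own measurements. The Python sketch below records time-to-first-token (TTFT) and the gaps between successive tokens for any iterable token stream. It is a minimal harness under stated assumptions: `fake_token_stream` is a hypothetical stand-in for a real streaming client, and nothing here calls a Groq or Nvidia API.

```python
import statistics
import time
from typing import Iterable, Iterator


def fake_token_stream(n_tokens: int, delay_s: float) -> Iterator[str]:
    """Hypothetical stand-in for a streaming inference client."""
    for i in range(n_tokens):
        time.sleep(delay_s)  # simulate per-token generation time
        yield f"tok{i}"


def measure_stream(stream: Iterable[str]) -> dict:
    """Return time-to-first-token and inter-token latency (ITL) statistics."""
    start = time.perf_counter()
    # Timestamp each token as soon as the stream hands it over.
    arrivals = [time.perf_counter() for _ in stream]
    if not arrivals:
        raise ValueError("stream produced no tokens")
    gaps = [later - earlier for earlier, later in zip(arrivals, arrivals[1:])]
    return {
        "tokens": len(arrivals),
        "ttft_s": arrivals[0] - start,
        "mean_itl_s": statistics.mean(gaps) if gaps else 0.0,
        "max_itl_s": max(gaps, default=0.0),
    }
```

For example, `measure_stream(fake_token_stream(20, 0.005))` returns a dictionary of timings. Pointed at a real client’s token iterator, the same function lets you compare backends on identical prompts; for serious benchmarks, repeat runs and report percentiles rather than a single mean.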

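Groq’s compiler-centric design moves complexity from hardware to software: the compiler schedules execution ahead of time, which is what makes the LPU’s timing deterministic. Integrating it with Nvidia’s stack therefore requires toolchain bridges between CUDA and Groq’s SDKs.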
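To see why moving scheduling into the compiler yields deterministic timing, consider a toy as-soon-as-possible (ASAP) scheduler. Every operation has a fixed, known latency in cycles, so each start cycle, and therefore the end-to-end latency, is fixed before the program ever runs. The op graph and cycle counts are hypothetical, and this is a generic illustration of static scheduling, not Groq’s actual compiler.

```python
from graphlib import TopologicalSorter  # Python 3.9+

# Hypothetical op graph: name -> (fixed latency in cycles, dependencies)
OPS = {
    "load_w": (4, []),
    "load_x": (4, []),
    "matmul": (16, ["load_w", "load_x"]),
    "bias": (2, ["matmul"]),
    "act": (3, ["bias"]),
    "store": (4, ["act"]),
}


def asap_schedule(ops: dict) -> dict:
    """Assign each op the earliest start cycle its dependencies allow."""
    graph = {name: deps for name, (_, deps) in ops.items()}
    start = {}
    # static_order() yields every op after all of its predecessors.
    for name in TopologicalSorter(graph).static_order():
        _, deps = ops[name]
        start[name] = max((start[d] + ops[d][0] for d in deps), default=0)
    return start


def total_cycles(ops: dict, start: dict) -> int:
    """End-to-end latency: the cycle at which the last op finishes."""
    return max(start[name] + ops[name][0] for name in ops)
```

Here `asap_schedule(OPS)` places `matmul` at cycle 4 and `store` at cycle 25, for a total of 29 cycles on every run. A production compiler would also model contention for functional units and memory ports, which this sketch ignores; the property it preserves is that the schedule is computed once, ahead of time, rather than discovered by hardware at runtime.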

Successful alignment could redefine Nvidia’s stack, but the economics deserve equal attention.

Economic Numbers Behind Agreement

Industry forecasts put the AI inference market at $106 billion in 2025, growing to $255 billion by 2030. On those figures, Nvidia’s $13 billion upfront payment alone equals roughly 12% of the 2025 market, and the $20 billion headline value nearly 19%.
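The arithmetic behind these market comparisons is short enough to check directly. The sketch below computes the payment’s share of the 2025 market under two readings of “payment” (the $13 billion upfront cash versus the $20 billion headline value) and the compound annual growth rate (CAGR) implied by the $106 billion and $255 billion forecasts, assuming five compounding years from 2025 to 2030.

```python
def share(part: float, whole: float) -> float:
    """Fraction of `whole` represented by `part`."""
    return part / whole


def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1 / years) - 1


MARKET_2025_B = 106.0  # inference market in $B (forecast quoted in the text)
MARKET_2030_B = 255.0  # inference market in $B (forecast quoted in the text)

upfront_share = share(13.0, MARKET_2025_B)   # about 0.123
headline_share = share(20.0, MARKET_2025_B)  # about 0.189
implied_cagr = cagr(MARKET_2025_B, MARKET_2030_B, years=5)  # about 0.192

print(f"upfront {upfront_share:.1%}, headline {headline_share:.1%}, CAGR {implied_cagr:.1%}")
```

On these figures, the market would need to grow a little over 19% a year to reach the 2030 forecast.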

Analysts argue that acquiring exclusive rights would have prompted harsher scrutiny. However, the non-exclusive structure still gives Nvidia a first-mover advantage in Computational Latency Optimization workloads.

The key cash components are the immediate $13 billion transfer, the deferred $4 billion, and ongoing engineering payroll. The goodwill entry, far larger than the identifiable technology intangibles, suggests Nvidia expects future synergies worth more than the assets it could itemize.
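Under acquisition accounting, goodwill is the residual left after subtracting the fair value of identifiable net assets from the consideration paid. The sketch below rearranges that identity using the goodwill and developed-technology figures reported earlier. It assumes, for illustration only, that the consideration equals the $13 billion upfront plus the $4 billion deferred payment, undiscounted and excluding payroll; the actual recorded consideration may differ.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PurchaseAllocation:
    """Simplified purchase-price allocation; all values in $B."""

    consideration: float   # ASSUMPTION: upfront + deferred, undiscounted
    goodwill: float
    developed_tech: float  # identifiable intangible asset

    def implied_other_net_assets(self) -> float:
        # goodwill = consideration - identifiable net assets, rearranged
        return self.consideration - self.goodwill - self.developed_tech

    def goodwill_share(self) -> float:
        """Fraction of the consideration booked as goodwill."""
        return self.goodwill / self.consideration

    def goodwill_to_tech_ratio(self) -> float:
        """How many times larger goodwill is than developed technology."""
        return self.goodwill / self.developed_tech


deal = PurchaseAllocation(consideration=13.0 + 4.0, goodwill=14.4, developed_tech=2.5)
```

Under that assumption, goodwill accounts for roughly 85% of the consideration and is nearly six times the developed-technology intangible, leaving only about $0.1 billion for other identifiable net assets.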

The financials reveal a bold strategic bet, and legal risks are emerging alongside it.

Antitrust Scrutiny Intensifies Debate

Bernstein analyst Stacy Rasgon warned the structure “may keep the fiction of competition alive.” Meanwhile, a February 2026 Senate letter urged the FTC to examine reverse acqui-hire patterns.

Nvidia counters that the license is non-exclusive, so others can still license Groq’s LPU technology, preserving choice among inference chips.

Regulators will weigh whether the talent transfer, combined with roadmap integration, effectively eliminates an innovative rival. Customers, meanwhile, worry about Nvidia’s future pricing leverage.

Regulatory outcomes remain uncertain, but the effects on the ecosystem are already surfacing.

Impacts On Inference Ecosystem

Hyperscalers are evaluating alternative silicon from AMD, Cerebras, and SambaNova as procurement teams reassess the risk exposure created by the Groq deal.

Low-latency edge deployments need deterministic, predictable throughput, so Computational Latency Optimization now takes priority over raw FLOPS.
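The case for determinism over raw FLOPS rests on tail latency: an interactive user notices the slowest tokens, not the average. The sketch below compares two synthetic per-token latency samples with identical means, one constant and one with occasional stalls, using a nearest-rank percentile. The numbers are invented for illustration and do not describe any particular chip.

```python
import math
import statistics


def percentile(samples: list[float], q: float) -> float:
    """Nearest-rank percentile for 0 < q <= 100."""
    if not samples or not 0 < q <= 100:
        raise ValueError("need non-empty samples and 0 < q <= 100")
    ordered = sorted(samples)
    rank = math.ceil(q * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


# Synthetic per-token latencies in milliseconds (illustrative, not measured)
deterministic = [10.0] * 100
jittery = [9.0] * 98 + [59.0] * 2  # same mean, but two long stalls

for name, samples in (("deterministic", deterministic), ("jittery", jittery)):
    print(name, statistics.mean(samples), percentile(samples, 50), percentile(samples, 99))
```

Both samples average 10 ms, but the jittery one has a p99 of 59 ms against 10 ms for the deterministic one, which is why latency targets are usually written against high percentiles rather than means.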

Startups now face steeper fundraising hurdles as investors expect consolidation. Groq, meanwhile, continues to operate GroqCloud under new CEO Simon Edwards, offering dedicated inference chips as a service.

As competitive dynamics evolve, professionals must update their skills.

Skills And Career Opportunities

Demand for architects of latency-sensitive systems is surging across the cloud, telecom, and automotive sectors, and expertise in compiler optimization, SRAM budgeting, and token scheduling commands a premium.
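SRAM budgeting is, at its simplest, a capacity calculation: when model weights are held entirely in on-chip SRAM, the weight footprint sets a floor on chip count. The sketch below computes that floor. The 70-billion-parameter model, 8-bit weights, and 230 MB of SRAM per chip are illustrative assumptions, not vendor specifications, and the calculation ignores KV cache, activations, and replication.

```python
import math


def chips_for_weights(
    params_billion: float,
    bytes_per_param: float,
    sram_mb_per_chip: float,
    usable_fraction: float = 1.0,
) -> int:
    """Minimum chip count to hold all weights in on-chip SRAM.

    Ignores KV cache, activations, and replication; a real budget needs all three.
    """
    if min(params_billion, bytes_per_param, sram_mb_per_chip) <= 0:
        raise ValueError("sizes must be positive")
    if not 0 < usable_fraction <= 1:
        raise ValueError("usable_fraction must be in (0, 1]")
    weight_bytes = params_billion * 1e9 * bytes_per_param
    usable_bytes_per_chip = sram_mb_per_chip * 1e6 * usable_fraction
    return math.ceil(weight_bytes / usable_bytes_per_chip)


# Hypothetical example: 70B parameters at 8-bit (1 byte) precision, 230 MB SRAM per chip
print(chips_for_weights(70, 1, 230))       # 305
print(chips_for_weights(70, 1, 230, 0.8))  # 381, reserving 20% for other buffers
```

Moving to 16-bit weights roughly doubles the floor, which is one reason quantization and SRAM budgeting go hand in hand.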

Professionals can enhance their expertise with the AI Engineer™ certification.

Additionally, projects targeting Computational Latency Optimization require cross-disciplinary fluency spanning silicon, firmware, and model quantization.

Skills development builds career resilience; organizations, for their part, need strategic guidance.

Strategic Outlook And Conclusion

Boards should map latency requirements against cost profiles before committing to mixed GPU-LPU fleets, and they should negotiate flexible volume pricing to mitigate potential supplier lock-in.
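Mapping latency requirements against cost can start as a simple selection rule: among the backends whose measured p99 latency meets a workload’s service-level objective (SLO), pick the cheapest. The sketch below encodes that rule. The backend names, latencies, and prices are hypothetical placeholders to be replaced with your own benchmark and contract data.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Backend:
    name: str
    p99_latency_ms: float           # measured on your own workload
    cost_per_million_tokens: float  # from your own pricing terms


def cheapest_meeting_slo(backends: Sequence[Backend], slo_ms: float) -> Optional[Backend]:
    """Cheapest backend whose p99 latency meets the SLO, or None if none does."""
    eligible = [b for b in backends if b.p99_latency_ms <= slo_ms]
    return min(eligible, key=lambda b: b.cost_per_million_tokens, default=None)


# Hypothetical mixed fleet; every number here is a placeholder.
FLEET = [
    Backend("gpu-batch", p99_latency_ms=120.0, cost_per_million_tokens=0.40),
    Backend("gpu-interactive", p99_latency_ms=45.0, cost_per_million_tokens=0.90),
    Backend("lpu-pool", p99_latency_ms=15.0, cost_per_million_tokens=1.20),
]
```

With this fleet, a 200 ms SLO routes to `gpu-batch`, a 50 ms SLO to `gpu-interactive`, and a 20 ms SLO to `lpu-pool`, while a 10 ms SLO returns `None`, flagging a requirement no current backend can meet. Loose SLOs fall through to cheaper capacity, so the premium low-latency pool is reserved for the workloads that need it.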

Firms pursuing Computational Latency Optimization should pilot Groq-enabled Rubin clusters while benchmarking competing inference chips for specific workloads.

- 106 B USD: 2025 inference market size

- 255 B USD: projected 2030 market size

- 13 B USD: upfront cash at closing

- 4 B USD: deferred payment within 12 months

Continuous Computational Latency Optimization will determine long-term platform loyalty and shareholder returns.

These steps support informed procurement choices and prepare enterprises for rapid architectural shifts.

Overall, the Groq deal shows that latency now drives platform power: firms that master Computational Latency Optimization can deliver differentiated user experiences and greater efficiency.

Legal and integration hurdles may still cause turbulence before the benefits fully materialize, so staying informed and certified remains vital for professionals.

Explore emerging benchmarks, attend upcoming GTC sessions, and consider the AI Engineer™ pathway to lead next-generation inference projects.