AI CERTS

4 hours ago

Rubin Release: Next-Gen Hardware Architecture for AI Racks

This feature dissects NVIDIA’s Vera Rubin launch, its claims, and its risks for architects planning multi-petaflop clusters. We also examine partner roadmaps, cooling realities, and cost economics, with actionable insights for both hyperscale and enterprise data center teams. Finally, the article links to a certification that sharpens strategic cloud planning skills. Throughout, claims are grounded in disclosures from CES, Wired, and Tom’s Hardware.

Rubin Hardware Architecture Overview

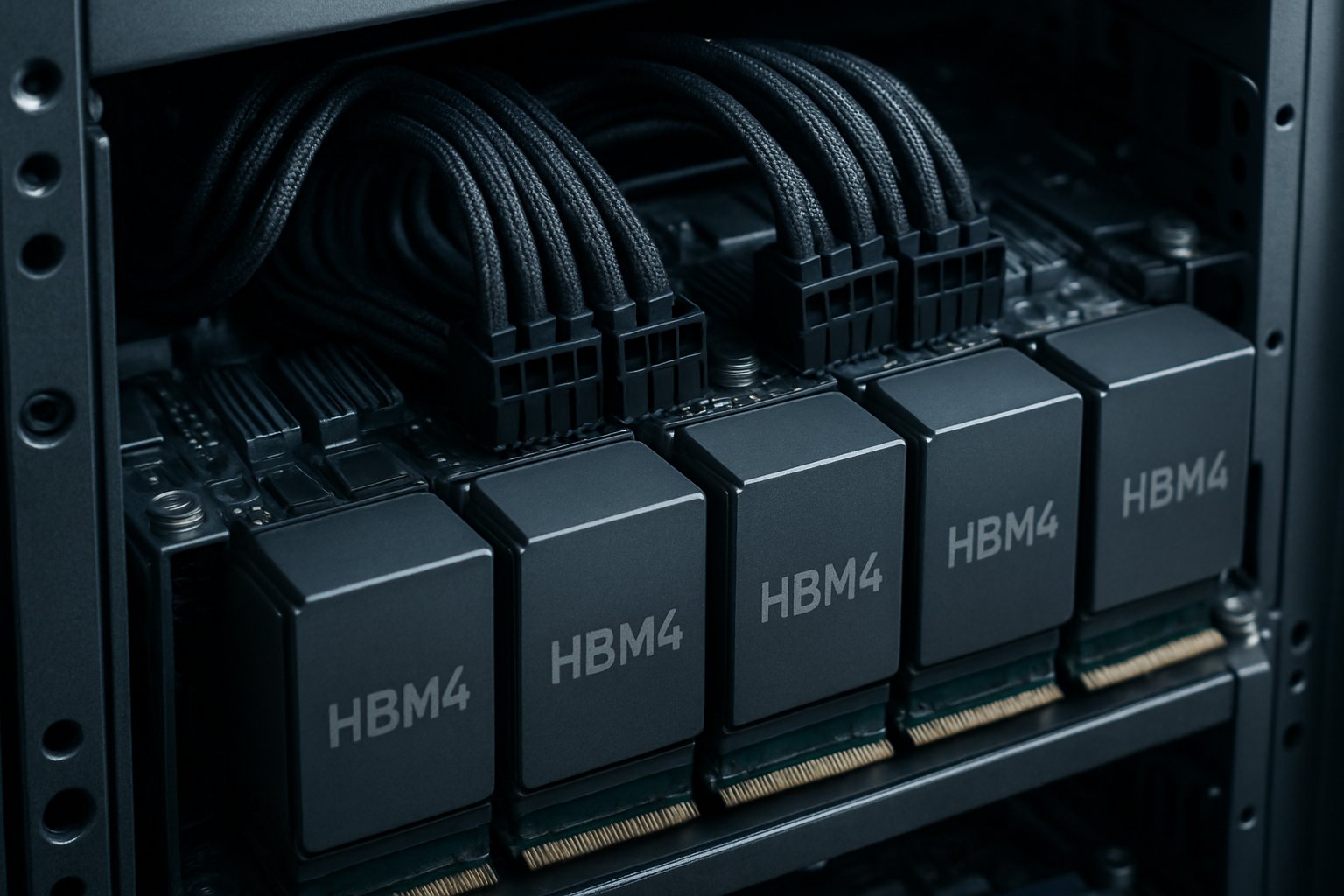

Rubin NVL72 combines 72 Rubin GPUs with 36 Vera CPUs inside one cabinet. BlueField-4 DPUs and ConnectX-9 SuperNICs orchestrate storage, security, and east-west traffic. NVLink 6 provides 3.6 TB/s per GPU, for about 260 TB/s of aggregate bandwidth across the rack. The hardware architecture also emphasizes tight component placement to cut latency between compute and memory: Micron HBM4 stacks sit beside each Rubin GPU, each stack delivering up to 2.8 TB/s. As a result, model shards depend less on the external fabric to communicate.
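The 260 TB/s rack figure follows from multiplying the per-GPU NVLink 6 number by the GPU count. A minimal Python sketch, assuming the rack total is simply GPU count times per-GPU bandwidth (the exact accounting behind NVIDIA’s figure is not spelled out here):

```python
# Aggregate NVLink 6 bandwidth for one NVL72 rack, from the per-GPU figure.
# Assumption: the rack total is simply GPU count x per-GPU bandwidth.
GPUS_PER_RACK = 72           # Rubin GPUs in one NVL72 cabinet
NVLINK6_TB_S_PER_GPU = 3.6   # NVLink 6 bandwidth per GPU, terabytes per second


def rack_nvlink_bandwidth_tb_s(gpus: int, per_gpu_tb_s: float) -> float:
    """Return the aggregate NVLink bandwidth of a rack in TB/s."""
    return gpus * per_gpu_tb_s


total_tb_s = rack_nvlink_bandwidth_tb_s(GPUS_PER_RACK, NVLINK6_TB_S_PER_GPU)
print(f"Aggregate NVLink bandwidth: {total_tb_s:.1f} TB/s")  # 259.2, i.e. ~260 TB/s
```

The result, 259.2 TB/s, rounds to the roughly 260 TB/s NVIDIA quotes, which suggests the headline is a straightforward sum rather than a measured throughput.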

These design choices promise large gains in interconnect efficiency. However, they also raise power density, a challenge examined in the cooling section below.

Key Chipset Component Details

Rubin GPUs anchor the stack with transformer engines optimized for NVFP4 precision. Vera CPUs integrate SOCAMM2 modules, expanding system memory without classic DIMM bottlenecks. BlueField-4 offloads storage, networking, and zero-trust security, freeing CPUs for orchestration logic. For scale-out traffic, the ConnectX-9 SuperNIC supplies 1.6 Tb/s per GPU toward the data center spine. ASUS and other MGX partners will bundle these parts into reference nodes for rapid OEM integration. NVLink 6 employs co-packaged optics that reduce switch hops by 50% versus Blackwell, so gradient synchronization should face less congestion during billion-parameter training runs.
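Note that the SuperNIC figure is quoted in terabits per second while NVLink is quoted in terabytes per second. The sketch below puts both on the same footing to show how much more bandwidth stays inside the rack than leaves it. It assumes every GPU’s NIC runs at full line rate simultaneously and ignores protocol overhead, so treat the outputs as upper bounds:

```python
# Compare per-GPU scale-out (ConnectX-9) and scale-up (NVLink 6) bandwidth.
# Note the units: the SuperNIC figure is in terabits/s, NVLink in terabytes/s.
BITS_PER_BYTE = 8
GPUS_PER_RACK = 72
SUPERNIC_TBIT_S_PER_GPU = 1.6   # ConnectX-9 SuperNIC, Tb/s per GPU
NVLINK6_TBYTE_S_PER_GPU = 3.6   # NVLink 6, TB/s per GPU


def tbit_to_tbyte(tbit_per_s: float) -> float:
    """Convert terabits per second to terabytes per second."""
    return tbit_per_s / BITS_PER_BYTE


nic_tbyte_per_gpu = tbit_to_tbyte(SUPERNIC_TBIT_S_PER_GPU)   # 0.2 TB/s
rack_scale_out_tbyte = GPUS_PER_RACK * nic_tbyte_per_gpu      # 14.4 TB/s
nvlink_to_nic_ratio = NVLINK6_TBYTE_S_PER_GPU / nic_tbyte_per_gpu

print(f"Scale-out per rack: {rack_scale_out_tbyte:.1f} TB/s")
print(f"NVLink is ~{nvlink_to_nic_ratio:.0f}x the per-GPU NIC bandwidth")
```

Under these assumptions, NVLink offers about 18 times the per-GPU bandwidth of the scale-out path, which is why keeping model shards within one NVL72 domain matters.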

Collectively, these chip upgrades define a cohesive hardware architecture built for modular scaling. Next, we turn to measurable performance claims.

Performance Metrics And Claims

Rubin’s hardware architecture underpins the bold figures NVIDIA shares. NVIDIA touts exaFLOPS-class capability when multiple NVL144 cabinets are networked together, and states that Rubin cuts inference token cost tenfold compared with Blackwell on reasoning tasks. Analysts remain cautious because independent benchmarks have not yet surfaced, and Wired noted that “full production” often begins at low wafer volumes. Nevertheless, Micron confirms HBM4 mass production, which supports NVIDIA’s bandwidth assertions.

In NVIDIA’s documentation, key figures include:

- 3.6 TB/s NVLink 6 bandwidth per GPU

- 260 TB/s aggregate switch bandwidth per NVL72

- Up to 2.8 TB/s per HBM4 stack

- Tenfold lower token cost for agentic inference
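A tenfold drop in token cost does not necessarily mean tenfold throughput: cost per token scales with the system’s hourly price divided by its throughput. The sketch below makes that relationship explicit. The hourly prices and token rates are hypothetical placeholders, not NVIDIA or cloud pricing:

```python
def cost_per_million_tokens(hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Serving cost in USD per one million tokens."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


def required_throughput_gain(cost_reduction: float, price_ratio: float) -> float:
    """Throughput multiple needed to hit a target cost-per-token reduction.

    cost_reduction: target factor, e.g. 10 for "tenfold lower token cost".
    price_ratio: new system's hourly cost divided by the old system's.
    """
    return cost_reduction * price_ratio


# Hypothetical inputs only, not real pricing or benchmark data.
baseline = cost_per_million_tokens(hourly_cost_usd=100.0, tokens_per_second=50_000)
print(f"Baseline: ${baseline:.4f} per 1M tokens")
print(required_throughput_gain(cost_reduction=10, price_ratio=2.0))  # 20.0
```

If a Rubin rack-hour cost twice as much as its Blackwell counterpart, it would need roughly 20 times the throughput to deliver the claimed tenfold saving, which is why pricing matters as much as speed when validating the claim.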

Independent labs have yet to publish NVL72 throughput numbers under MLPerf rules, so early customers should expect specification variance until silicon progresses through multiple stepping cycles. If validated, these numbers would reshape data center economics for large language models. However, power and cooling constraints may temper real-world efficiency, which is why the next section examines thermal design realities.

Cooling, Power, and Facility Impacts

Rubin GPUs reportedly draw nearly 1.8 kW each under sustained load. The 72 GPUs alone therefore account for roughly 130 kW (72 × 1.8 kW = 129.6 kW), and once Vera CPUs, DPUs, and memory are added, a single NVL72 rack can exceed 150 kW before networking overhead. According to Tom’s Hardware tear-downs, liquid-cooled cold plates appear mandatory. Facility operators must also provision redundant pumps, heat exchangers, and treated water loops. ASUS engineers estimate a 30% CapEx uplift for sites migrating from air cooling to liquid-cooled systems.
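The 150 kW figure is GPU power plus everything else in the cabinet. A minimal sketch, using the reported 1.8 kW per GPU and a hypothetical non-GPU overhead fraction (NVIDIA has not published such a fraction), shows how much overhead it takes to cross that threshold:

```python
# Rough NVL72 rack power estimate from the reported per-GPU draw.
# The non-GPU overhead fraction is a hypothetical input, not an NVIDIA figure.
GPUS_PER_RACK = 72
KW_PER_GPU = 1.8  # reported sustained draw per Rubin GPU


def gpu_power_kw(gpus: int, kw_per_gpu: float) -> float:
    """Power drawn by the GPUs alone, in kW."""
    return gpus * kw_per_gpu


def rack_power_kw(gpu_kw: float, non_gpu_fraction: float) -> float:
    """Rack power if CPUs, DPUs, memory, etc. add `non_gpu_fraction` on top of GPU power."""
    return gpu_kw * (1 + non_gpu_fraction)


def overhead_needed(target_kw: float, gpu_kw: float) -> float:
    """Non-GPU overhead fraction at which the rack reaches `target_kw`."""
    return target_kw / gpu_kw - 1


gpu_kw = gpu_power_kw(GPUS_PER_RACK, KW_PER_GPU)
print(f"GPUs alone: {gpu_kw:.1f} kW")                                  # 129.6 kW
print(f"Overhead to pass 150 kW: {overhead_needed(150, gpu_kw):.1%}")  # 15.7%
print(f"With a hypothetical 20% overhead: {rack_power_kw(gpu_kw, 0.20):.1f} kW")
```

In other words, non-GPU components only need to add about 16% on top of GPU power for a rack to pass 150 kW, a plausible margin for CPUs, DPUs, and memory.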

In contrast, NVIDIA argues vertical integration offsets cooling costs through higher throughput per square foot. Liquid-cooled rear-door heat exchangers can reclaim warm water for building heating, partially offsetting operational expenses. Nevertheless, environmental regulations may complicate reuse strategies in drought-prone regions.

The architecture’s power density pushes data center design toward warm-water loops and rack-level power shelves. Partner ecosystems therefore play a pivotal role in mitigation, so we next examine which vendors are rallying behind Rubin.
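Sizing a warm-water loop starts from the heat balance Q = ṁ · c_p · ΔT. The sketch below estimates coolant flow per rack, assuming plain water (no glycol), a density of about 1 kg/L, all rack heat captured by the liquid, and a hypothetical 10 K supply-to-return temperature rise:

```python
# Coolant flow needed to carry a rack's heat away, from Q = m_dot * c_p * delta_T.
# Assumptions: plain water (no glycol), density ~1 kg/L, all rack heat goes to liquid.
WATER_CP_KJ_PER_KG_K = 4.186   # approximate specific heat of water
WATER_DENSITY_KG_PER_L = 1.0   # simplification; warm water is slightly less dense


def coolant_flow_l_per_min(heat_kw: float, delta_t_k: float,
                           cp: float = WATER_CP_KJ_PER_KG_K,
                           density: float = WATER_DENSITY_KG_PER_L) -> float:
    """Volumetric flow (L/min) to absorb `heat_kw` with a temperature rise of `delta_t_k`."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    mass_flow_kg_s = heat_kw / (cp * delta_t_k)  # kW = kJ/s, so this yields kg/s
    return mass_flow_kg_s / density * 60          # kg/s -> L/s -> L/min


flow = coolant_flow_l_per_min(heat_kw=150, delta_t_k=10)  # hypothetical 10 K rise
print(f"~{flow:.0f} L/min per rack")  # ~215 L/min
```

Halving the temperature rise doubles the required flow, so the chosen ΔT directly drives pump and pipe sizing. Glycol mixtures have a lower specific heat than water and would need correspondingly more flow.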

Ecosystem Partnership Landscape

Cloud operators view the Rubin platform as a revenue driver: AWS, Google Cloud, Microsoft Azure, and Oracle plan Rubin instances during H2 2026. On the OEM side, Dell, HPE, Lenovo, and ASUS will ship MGX-based reference servers. Micron aligns its memory roadmap with 36 GB HBM4 stacks already in high-volume production. Groq markets LPX racks that complement Rubin for ultra-low-latency inference. ASUS executives demonstrated a quarter-rack pilot during CES, showing hot-swap coolant manifolds for serviceability; with them, field technicians can replace Rubin blades in under five minutes while lines remain pressurized.

Collectively, the ecosystem reduces procurement friction for enterprises lacking custom design teams. Nevertheless, competition from AMD and Intel remains fierce and shapes the pricing dynamics discussed below. Market timing and risk management therefore deserve closer inspection.

Market Timing and Risk Analysis

Rubin’s ambitious schedule raises execution doubts. NVIDIA uses “full production” language, yet shipped volumes remain undisclosed. Analyst Austin Lyons warns that advanced nodes often face yield surprises that delay boards. Export controls could also limit deliveries to certain regions, affecting data center rollout schedules. Micron’s HBM4 capacity, though scaling, still depends on substrate supply and photonics yield. NVIDIA, for its part, claims tight supplier alignment will smooth ramps through late 2026. Analysts also flag competitive pressure: AMD’s Instinct roadmap touts comparable FP4 throughput at lower wattage. Nevertheless, NVIDIA’s software ecosystem and CUDA dominance still sway procurement decisions.

Stakeholders should track wafer start data, memory shipments, and regulatory updates each quarter. Strategic leaders can then mitigate exposure by diversifying accelerator roadmaps. The next section distills practical guidance from this analysis.

Strategic Takeaways For Leaders

First, validate NVIDIA’s performance claims with small pilot clusters before making large-scale commitments. Second, engage facility engineers early, because liquid-cooling retrofits depend on long-lead-time components. Third, negotiate flexible pricing with ASUS or other OEMs to hedge against supply variance. In parallel, invest in staff upskilling to master co-designed hardware architectures; professionals can deepen that expertise with the AI+ Architect Certification. Finally, monitor independent benchmarks and export policy dashboards monthly. Leaders should also formalize multi-vendor escape clauses to maintain leverage during contract renewals.
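One way to make pilot validation concrete is to compare paired measurements (same workload on the baseline and the new system) against the vendor’s stated factor. The sketch below is a hypothetical acceptance check, not an industry-standard procedure: it takes the median of per-run improvement factors, to dampen outlier runs, and passes only if the median lands within a chosen tolerance of the claim. The 10% tolerance and the sample results are illustrative assumptions:

```python
from statistics import median


def check_claim(measured_factors: list[float], claimed_factor: float,
                tolerance: float = 0.10) -> tuple[float, bool]:
    """Compare pilot measurements with a vendor claim.

    measured_factors: per-run improvement factors, e.g. baseline cost per token
        divided by new-system cost per token on the same workload.
    claimed_factor: the vendor's stated factor (10 for "tenfold").
    tolerance: fraction below the claim still treated as a pass (hypothetical policy).
    Returns (median_factor, passed).
    """
    if not measured_factors:
        raise ValueError("need at least one pilot measurement")
    observed = median(measured_factors)
    return observed, observed >= claimed_factor * (1 - tolerance)


# Hypothetical pilot results, not real benchmark data.
observed, passed = check_claim([6.8, 7.4, 7.1], claimed_factor=10)
print(observed, passed)  # 7.1 False
```

A failed check does not disprove the claim; it signals that the workload, software stack, or pricing assumptions need a closer look before a large purchase.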

These recommendations align technology goals with realistic delivery timelines, letting enterprises harness Vera Rubin’s potential while sidestepping deployment surprises.

Vera Rubin marks NVIDIA’s boldest step since Blackwell, and its integrated hardware architecture promises dramatic efficiency gains for agentic AI workloads. Nevertheless, real-world gains hinge on supply, cooling, and regulatory clarity. Leaders must therefore pair cautious validation with aggressive talent development. Proactive teams should bookmark third-party benchmark feeds and schedule quarterly roadmap reviews.

They can then adopt Rubin racks once performance, price, and liquid-cooled facilities align. Ready to lead the next frontier? Explore the AI+ Architect Certification mentioned above and future-proof your AI infrastructure strategy today. Meanwhile, board committees should budget feasibility studies ahead of 2026 capital cycles.