AI CERTS

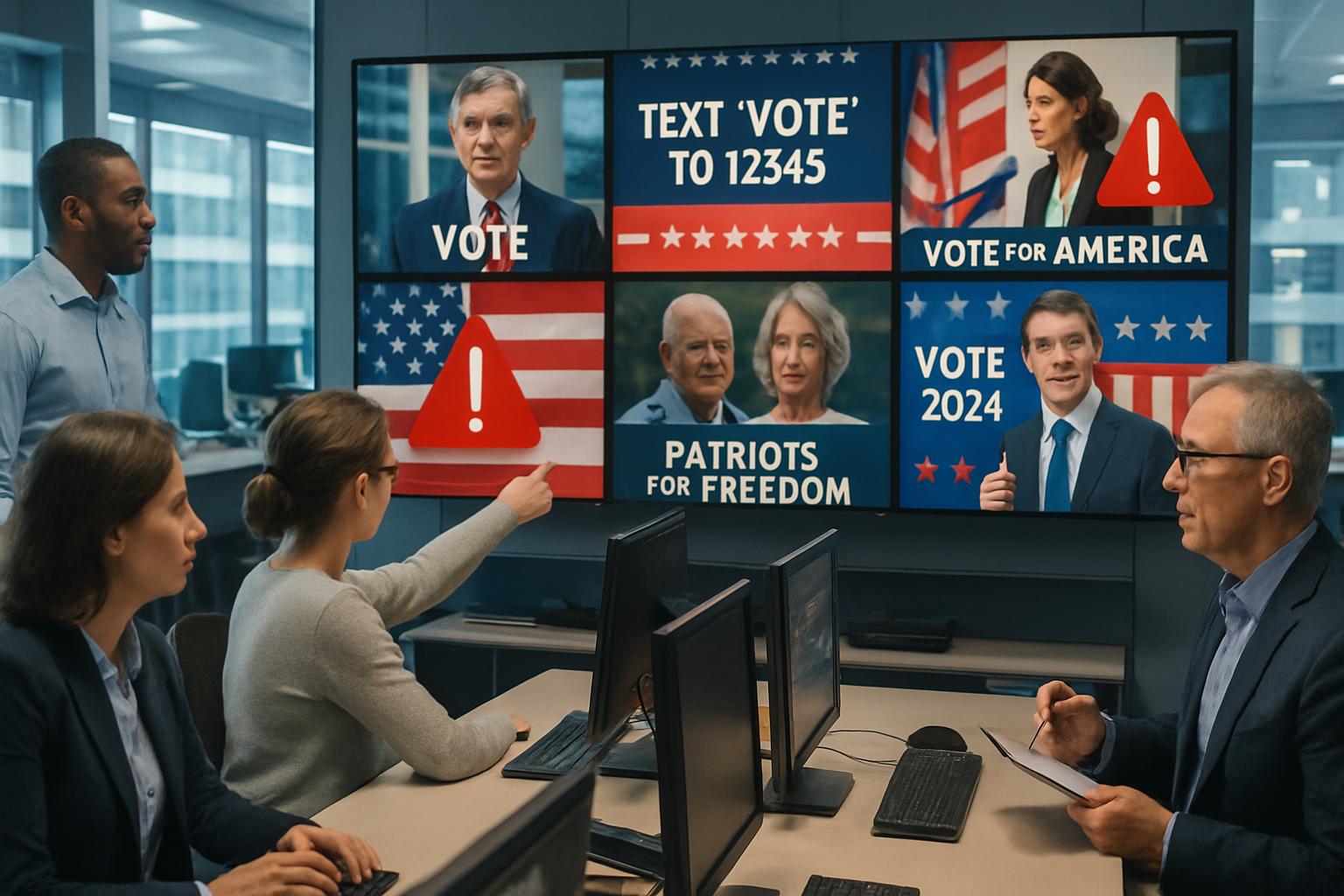

Deepfake Election Crisis Erodes Trust, Urging Swift Safeguards

Bad actors exploit public uncertainty about what is real, claiming genuine footage is fake while spreading forged clips.

The result is a feedback loop of doubt that undermines democratic legitimacy.

Therefore, understanding how deepfakes sow confusion and how institutions respond is vital for business and civic leaders.

This article unpacks recent incidents, regulatory battles, and scientific advances surrounding the phenomenon.

It then outlines mitigation strategies shaping the unfolding Deepfake Election Crisis.

Public Trust Erodes Fast

Public confidence is sliding quickly.

Recent Pew Research data shows that 57% of adults feel extremely concerned about AI misinformation in campaigns.

Meanwhile, an AP-NORC poll reported a similar 58% expecting more falsehoods during elections.

These numbers reveal a widespread sense of doubt cutting across party lines.

Legal scholar Danielle Citron warns that the 'liar’s dividend' allows leaders to dismiss authentic recordings.

Consequently, even mediocre fakes can poison discourse because nothing feels verifiable.

Courts, journalists, and voters stall while authenticity gets debated, delaying accountability.

Such paralysis forms the core of the Deepfake Election Crisis.

These perceptions matter because perception often shapes turnout, donation flows, and press coverage.

Therefore, restoring trust demands coordinated technical, legal, and educational responses.

Rising skepticism already damages democratic function.

However, concrete incidents show how fast the threat is evolving.

Recent Election Deepfake Incidents

January 2024 shook global politics with a vivid example.

AI-generated robocalls impersonated President Biden and targeted over 20,000 New Hampshire voters.

Consequently, state investigators launched criminal probes and issued preliminary fines.

Platforms raced to trace the campaign’s origin while fact-checkers issued rapid debunks.

In contrast, some listeners still believed the call and stayed home.

Elsewhere, municipal contests in India and Slovakia saw synthetic videos smear candidates within hours of posting.

Globally, watchdogs logged dozens of such attacks during the 2024 and 2025 election cycles.

Moreover, a Canadian study flagged 5.9% of election images as manipulated deepfakes.

These incidents illustrate the operational side of the Deepfake Election Crisis.

Yet the deepest wound again involves perception, not necessarily final vote counts.

Weaponized fakes reach voters cheaply and instantly.

Therefore, technical and policy safeguards must scale just as fast.

Science Behind Deepfake Detection

Detection research has advanced, yet the pressures of the Deepfake Election Crisis remain.

NIST’s Open Media Forensics Challenge benchmarks detectors on diverse synthetic content, advancing applied science.

Additionally, recent academic papers urge uncertainty flags when algorithms are unsure.

False positives risk silencing legitimate speech, while false negatives let forgeries spread.
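
The trade-off above can be sketched as a three-way triage that abstains when a detector is unsure. This is a minimal illustration, not any published method: the `triage` function, the `Verdict` type, and the 0.2 and 0.8 cut-offs are hypothetical, and the sketch assumes a detector that outputs a probability that a clip is synthetic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Verdict:
    label: str    # "likely_synthetic", "likely_authentic", or "uncertain"
    score: float  # detector's estimated probability that the clip is synthetic


def triage(score: float, low: float = 0.2, high: float = 0.8) -> Verdict:
    """Map a detector score to a label, abstaining between low and high.

    The cut-offs are illustrative assumptions. Widening the band sends more
    clips to human review; narrowing it raises the risk of false positives
    and false negatives.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be a probability in [0, 1]")
    if not low < high:
        raise ValueError("low must be below high")
    if score >= high:
        return Verdict("likely_synthetic", score)
    if score <= low:
        return Verdict("likely_authentic", score)
    # Neither confident outcome applies, so raise an uncertainty flag.
    return Verdict("uncertain", score)
```

Under these assumptions, a score of 0.5 yields the "uncertain" label, which a newsroom or platform could route to a human reviewer rather than auto-labeling.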

Consequently, provenance systems using cryptographic signatures gain traction among researchers.

Stanford and Berkeley labs now test combined detection and provenance workflows.
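
To make the provenance idea concrete, here is a rough sketch of tagging a media file at publication and checking it later. Real provenance systems rely on public-key signatures so that anyone can verify without holding a secret. This sketch swaps in HMAC-SHA256 with a shared key from the Python standard library purely as a stand-in, and the function names `sign_media` and `verify_media` are hypothetical.

```python
import hashlib
import hmac


def sign_media(media_bytes: bytes, key: bytes) -> str:
    """Return a hex tag that binds the key holder to these exact bytes.

    Stand-in only: HMAC uses a shared secret, unlike the public-key
    signatures a real provenance system would use.
    """
    return hmac.new(key, media_bytes, hashlib.sha256).hexdigest()


def verify_media(media_bytes: bytes, tag: str, key: bytes) -> bool:
    """Check that the bytes still match the tag issued at publication."""
    expected = sign_media(media_bytes, key)
    # compare_digest runs in constant time to avoid leaking tag contents.
    return hmac.compare_digest(expected, tag)
```

Any change to the file, even a single byte, makes verification fail. A valid tag shows only that the clip came from the key holder unchanged. It says nothing about whether unsigned content is fake.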

Detection Tools Limitations Exposed

Researchers found detectors often falter when presented with unseen generative models.

Meanwhile, adversaries can add subtle noise to evade current filters.

Therefore, many scientists advocate layered defenses instead of singular algorithms.
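
One way to picture a layered defense is a decision rule that consults provenance first and then several independent detectors. The sketch below is an assumption-laden illustration: `layered_verdict`, its labels, and the 0.8 flagging threshold are hypothetical, and the detector scores are assumed to be probabilities that a clip is synthetic.

```python
from typing import Mapping


def layered_verdict(
    provenance_valid: bool,
    detector_scores: Mapping[str, float],
    flag_at: float = 0.8,
) -> str:
    """Combine a provenance check with several detectors.

    Layer 1: a valid provenance record identifies the source.
    Layer 2: any single detector at or above flag_at raises a flag.
    Otherwise the clip stays "unverified" instead of being declared
    authentic, because detectors can miss unseen generative models.
    """
    if provenance_valid:
        return "verified_source"
    flagged = sorted(name for name, s in detector_scores.items() if s >= flag_at)
    if flagged:
        return "flagged_by:" + ",".join(flagged)
    return "unverified"
```

The design choice here is that low detector scores never upgrade a clip to "authentic". Only the provenance layer can vouch for a source, which limits the damage when an adversary evades one detector.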

Technical clarity is crucial because the Deepfake Election Crisis will only intensify near high-stakes ballots.

Scientific progress is steady but fragile.

However, policy pressure grows while tools remain imperfect.

Regulatory Momentum Gains Speed

Lawmakers at every level are sprinting.

The 2025 federal Take It Down Act mandates rapid removal of non-consensual intimate imagery, including deepfakes.

Moreover, twenty states already target election deepfakes with tailored statutes.

Stanford’s AI Index counted 131 AI-related state laws by late 2024.

However, uniformity remains elusive because platform lawsuits challenge local rules.

X sued Minnesota, claiming a political deepfake ban violates First Amendment rights.

Consequently, courts must balance speech protections with urgent electoral integrity.

Internationally, the EU AI Act imposes disclosure and watermarking for high-risk synthetic media.

Professionals can solidify their compliance skills with the AI Legal™ certification.

This credential supports legal teams confronting the Deepfake Election Crisis in courtrooms and boardrooms.

Legislation is proliferating yet fragmented.

Therefore, coordinated standards remain an urgent goal.

Industry Guardrails Still Lag

Platforms present the first public line of defense.

Meta and YouTube label synthetic videos, while X has relaxed its rules amid the Deepfake Election Crisis.

Nevertheless, labels often appear after millions have viewed a clip.

Advertisers also exploit lenient policies to micro-target disinformation.

Consequently, critics urge real-time hashing, stricter disclosure, and independent audits.

Business leaders fear brand safety crises when ads run beside forged content.

In contrast, platform executives argue that over-moderation might stifle satire and political speech.

Media watchdogs counter that satire can be tagged without harming free political expression.

Ultimately, the commercial incentives for engagement clash with society's need for authenticity.

Platform guardrails remain inconsistent and reactive.

However, corporate reputations will suffer if standards do not improve before the 2026 contests.

Mitigation Pathways Move Forward

Multiple tactics can blunt harm even while technology evolves.

Experts recommend a layered approach grounded in behavioral science that combines detection, provenance, literacy, and rapid response teams.

Furthermore, election commissions now pre-register official audio signatures to speed debunking.

Civil society groups push media literacy workshops, focusing on senior voters who share at higher rates.

Businesses integrate authenticity checks into supply chains to protect brand assets.

Meanwhile, venture investors back startups offering watermarking as a service.

Below are emerging best practices leaders can adopt quickly:

- Deploy certified detection APIs with confidence thresholds and public logs.

- Require political advertisers to submit provenance data before campaigns launch.

- Train communication teams on rapid debunk workflows using verified sources.

- Join cross-sector alliances sharing indicators of synthetic threat activity.
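
The first practice in the list, confidence thresholds with public logs, could look roughly like the sketch below. It records each detection decision in a hash-chained log so that outside auditors can notice later edits. Everything here is a hypothetical illustration: the `append_entry` and `chain_is_intact` helpers, the field names, and the idea that a detection API returns a score between 0 and 1 are all assumptions.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(body: dict) -> str:
    """Hash an entry body using a stable JSON encoding."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(log: list, clip_sha256: str, score: float, threshold: float) -> dict:
    """Record one decision, linking it to the previous entry's hash."""
    body = {
        "clip_sha256": clip_sha256,
        "score": score,
        "threshold": threshold,
        "flagged": score >= threshold,
        "prev_hash": log[-1]["entry_hash"] if log else GENESIS,
    }
    entry = dict(body, entry_hash=_digest(body))
    log.append(entry)
    return entry


def chain_is_intact(log: list) -> bool:
    """Recompute every hash and link; any edited entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if entry["prev_hash"] != prev_hash or _digest(body) != entry["entry_hash"]:
            return False
        prev_hash = entry["entry_hash"]
    return True
```

Publishing the threshold alongside each score lets outsiders see why a clip was or was not flagged. The chain check catches edits only when auditors hold an independent copy of the latest hash, so this is a starting point, not a complete transparency system.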

Subsequently, organizations should rehearse incident drills before peak campaign periods.

Consequently, response speed improves and uncertainty shrinks.

These combined tactics tackle both direct manipulation and the broader Deepfake Election Crisis of trust.

Strategic layering outpaces single fixes.

Therefore, sustained collaboration will anchor future safeguards.

Conclusion And Next Steps

The Deepfake Election Crisis will define the coming campaign cycles unless action accelerates.

Public doubt already swells, while technical defenses and policies chase rapidly evolving forgeries.

However, science, regulation, and industry can converge around layered safeguards.

Detection improvements, provenance standards, and literacy initiatives collectively rebuild trust.

Moreover, professionals who understand emerging legal frameworks hold a strategic advantage.

Therefore, now is the moment to upskill and prepare.

Explore the AI Legal™ pathway and lead responsible innovation against deceptive media.

Together, decisive leadership can contain disinformation and preserve democratic politics.

Take action today before the next synthetic wave arrives.