AI CERTS


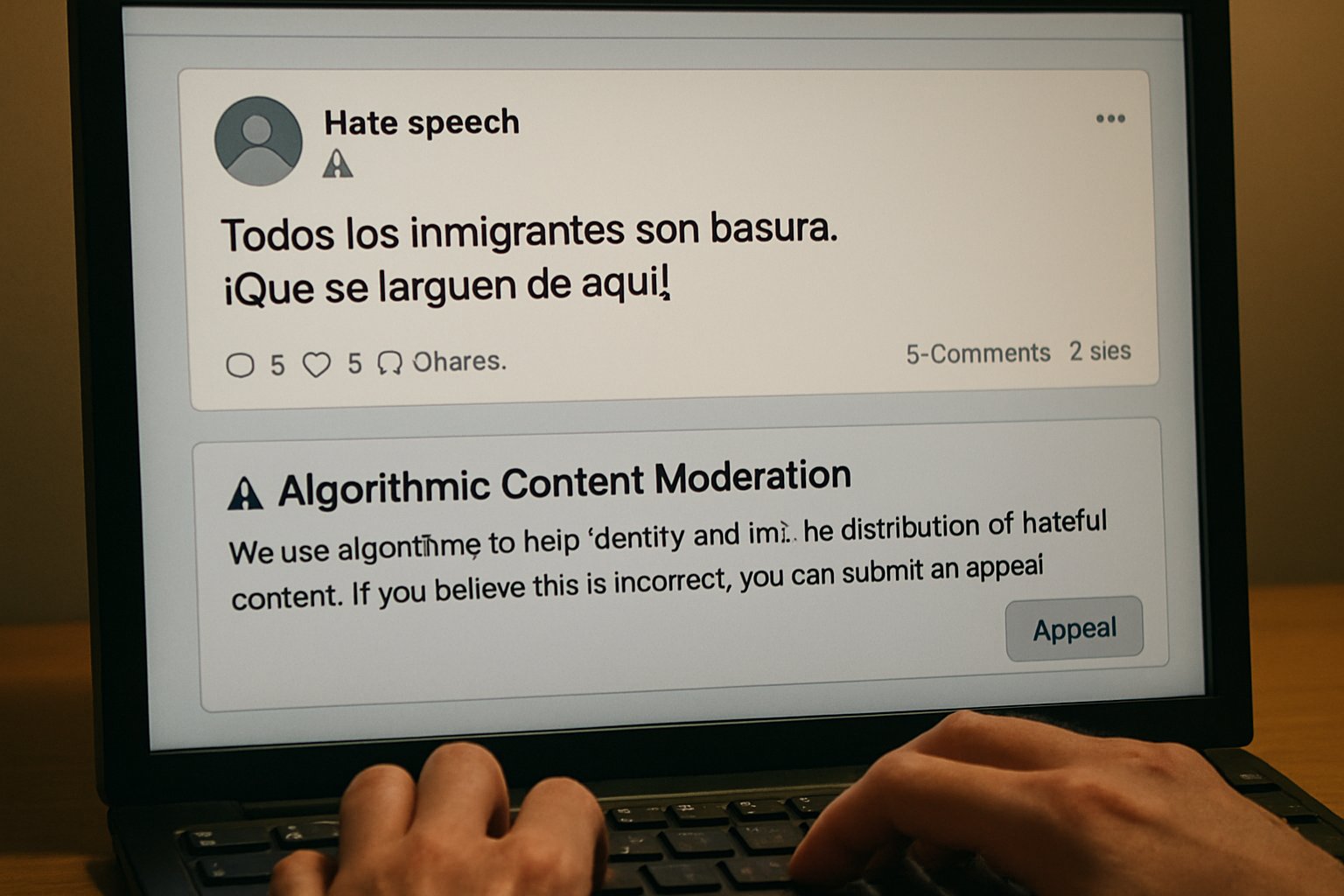

Algorithmic Content Moderation Amid Spain’s Online Hate

Recent spikes in online hate linked to the Torre Pacheco unrest, racism in football, and climate denial have exposed structural weak points in content moderation. Meanwhile, recorded physical hate crimes dropped 13.8 percent in 2024, suggesting a partial offline retreat. Social media hostility, by contrast, ballooned, with 845,000 hateful posts flagged last year alone. This article unpacks the numbers, the new HODIO tool, and the looming policy fight.

It also maps the emerging responsibilities of the engineers, lawmakers, and businesses that steer content ecosystems. Industry forecasts value the Spanish safety-tech market at €430 million by 2027, and startups specialising in detection APIs are attracting overseas investment as a result.

Rising Digital Hate Trends

OBERAXE’s 2025 monitoring logged more than 845,000 hateful messages across major networks. July’s Torre Pacheco unrest alone generated 190,000 hostile posts, peaking at 33,000 in a single day. Analysts consequently describe a sharper, faster cycle of radicalisation than in previous years. Offline, police data still lists 1,955 hate-crime investigations for 2024, so the problem remains serious despite the decline.

- 41.1 % of cases involved racism or xenophobia.

- Antisemitic incidents jumped 60.9 % year-on-year.

- Attacks based on sexual orientation or gender identity numbered 528.

- 71.9 % of police cases were solved.

Researchers also observe a longer lifespan for hateful threads, which last 29 hours on average before declining, whereas solidarity posts trend for just 12 hours. These numbers confirm worrying trends despite fewer physical assaults. Officials therefore argue that Algorithmic Content Moderation must match the velocity of viral waves, and the data adds pressure for the next policy moves. Spain now faces faster, broader online hatred than in previous cycles. Nevertheless, fresh tools aim to reverse the curve; the following section reviews them.
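Matching the velocity of viral waves starts with noticing one. As a minimal sketch, assuming daily counts of flagged posts are available, a rolling baseline can flag days that jump far above recent history. The seven-day window and three-standard-deviation threshold are illustrative assumptions, not OBERAXE's published method.

```python
from statistics import mean, pstdev


def detect_surges(daily_counts, window=7, k=3.0):
    """Return indices of days whose count exceeds the mean of the previous
    `window` days by more than `k` standard deviations.

    `window` and `k` are illustrative assumptions, not official parameters.
    """
    surges = []
    for i in range(window, len(daily_counts)):
        history = daily_counts[i - window:i]
        baseline = mean(history)
        # Floor the spread at 1.0 so a perfectly flat history still works.
        spread = max(pstdev(history), 1.0)
        if daily_counts[i] > baseline + k * spread:
            surges.append(i)
    return surges


if __name__ == "__main__":
    # Hypothetical series: a quiet week, then a one-day spike of 33,000 posts.
    counts = [2100, 2300, 1900, 2200, 2000, 2400, 2150, 33000, 12000]
    print(detect_surges(counts))  # [7]
```

Note that the spike itself inflates the baseline for the following days, so the 12,000-post aftershock is not flagged; a production detector might exclude already-flagged days from the baseline.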

Government Response And Tools

Prime Minister Pedro Sánchez unveiled HODIO in March 2026 at the Foro contra el Odio. The dashboard will publish semi-annual indicators on target groups, volume, language, and platform response. The presidency also announced a legal package covering a social-media ban for under-16s and stricter platform liability, and the draft proposes criminalising algorithmic amplification. Industry lobbying has intensified in response, citing freedom-of-speech risks. Officials nevertheless insist that Algorithmic Content Moderation demands enforceable standards and transparent metrics. OBERAXE will integrate HODIO feeds with its existing FARO engine, supplied by LaLiga.
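The source does not describe HODIO's internals. The following hypothetical sketch shows how semi-annual indicators on target group, volume, language, and platform response could be derived from flagged-post records. The `FlaggedPost` schema and its field names are assumptions made for illustration.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass
class FlaggedPost:
    """Hypothetical record schema, not HODIO's actual data model."""
    flagged_on: date
    platform: str
    target_group: str
    language: str
    removed: bool


def half_year(d: date) -> str:
    """Label a date by semester, e.g. date(2025, 7, 1) -> '2025-H2'."""
    return f"{d.year}-H{1 if d.month <= 6 else 2}"


def semiannual_indicators(posts):
    """Group flagged posts by (semester, platform) and summarise each group."""
    groups = defaultdict(list)
    for post in posts:
        groups[(half_year(post.flagged_on), post.platform)].append(post)

    report = {}
    for key, items in sorted(groups.items()):
        report[key] = {
            "volume": len(items),
            "target_groups": Counter(p.target_group for p in items),
            "languages": Counter(p.language for p in items),
            # Share of flagged posts the platform actually removed.
            "removal_rate": sum(p.removed for p in items) / len(items),
        }
    return report
```

For example, two posts flagged on X in July 2025, one of them removed, produce a `("2025-H2", "X")` entry with a volume of 2 and a removal rate of 0.5.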

Weekly public dashboards should also expose removal delays with little lag. Sánchez framed the initiative as a "public health measure for democracy" during his speech. Parliament will debate the package in the summer session, where it faces amendments from conservative parties. Under the draft decree, startups will gain access to anonymised datasets for research, although privacy advocates demand differential-privacy safeguards before any release. HODIO promises unprecedented visibility into hate dynamics and platform action. Next, we examine whether companies meet those expectations in practice.
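Differential privacy is usually applied to released statistics rather than to raw posts. Below is a minimal sketch of the standard Laplace mechanism for a table of counts. The privacy budget `epsilon` and the assumption that each account contributes at most one record to the table (sensitivity 1) are illustrative; neither comes from the draft decree.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Draw from Laplace(0, scale) as the difference of two exponentials."""
    # Two i.i.d. exponential draws with mean `scale`; their difference is
    # Laplace-distributed with location 0 and scale `scale`.
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def dp_counts(counts: dict, epsilon: float, sensitivity: float = 1.0,
              seed=None) -> dict:
    """Release a table of counts under epsilon-differential privacy.

    Assumes the counts are disjoint bins and that adding or removing one
    person changes the whole table by at most `sensitivity` (L1 norm).
    Rounding and clamping at zero are post-processing, so they do not
    weaken the privacy guarantee.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = random.Random(seed)
    scale = sensitivity / epsilon
    return {key: max(0, round(value + laplace_noise(scale, rng)))
            for key, value in counts.items()}
```

A smaller `epsilon` adds more noise and gives stronger privacy. The `seed` parameter exists only to make the example reproducible. A real release should rely on a vetted differential-privacy library with secure randomness, because naive floating-point noise generation has known weaknesses.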

Platform Takedown Performance Gaps

OBERAXE reported that platforms deleted only 35 % of flagged content in 2024, and just 4 % disappeared during the critical first hours of virality. Meta, X, TikTok, and YouTube cite resource limits and fear of false positives. Civil-society observers counter that automatic filters still miss disinformation coded in memes or slang. Timely human escalation therefore remains essential, yet expensive. Algorithmic Content Moderation can lighten that load when tuned to local languages and context.
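The two OBERAXE figures measure different things: the share of flagged items that were ever removed, and the share removed early. A minimal sketch of both metrics follows. It assumes each flagged item carries a flag timestamp and an optional removal timestamp. The two-hour window is a hypothetical stand-in, since the source does not define "critical first hours".

```python
from datetime import datetime, timedelta
from typing import List, Optional, Tuple

# Hypothetical window standing in for the "critical first hours".
EARLY_WINDOW = timedelta(hours=2)


def removal_metrics(items: List[Tuple[datetime, Optional[datetime]]],
                    early_window: timedelta = EARLY_WINDOW) -> Tuple[float, float]:
    """Return (overall removal rate, early removal rate).

    Each item is (flagged_at, removed_at); removed_at is None when the post
    stayed online. Both rates use all flagged items as the denominator.
    """
    if not items:
        return 0.0, 0.0
    latencies = [removed - flagged for flagged, removed in items if removed is not None]
    early = [lat for lat in latencies if lat <= early_window]
    return len(latencies) / len(items), len(early) / len(items)
```

This sketch measures latency from the moment of flagging. If publication timestamps are available, measuring from publication would track "first hours of virality" more closely.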

LaLiga’s FARO model raised precision by ingesting football-specific slurs and emojis, and engineers say removal speed doubled for sports incidents during testing. Meta told reporters that Spanish-language nuance complicates automated screening. However, NGO testers found that simple spelling tricks could evade bans for days. Longitudinal trends show deletion rates stagnating since 2022.
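The finding that simple spelling tricks evade bans points to a common mitigation: normalise text before matching it against a lexicon. A minimal sketch follows. The lexicon is a hypothetical placeholder ("insulto" stands in for a real slur), and the character substitutions are illustrative; neither reflects FARO's actual lists.

```python
import re
import unicodedata

# Hypothetical placeholder lexicon: "insulto" stands in for a real slur, and the
# emoji entry illustrates symbol-based abuse. Real lists are curated by experts.
WORD_TERMS = {"insulto"}
EMOJI_TERMS = {"🐒"}

# Illustrative digit/symbol substitutions commonly used to dodge filters.
LEET = str.maketrans({"0": "o", "1": "i", "3": "e", "4": "a",
                      "5": "s", "7": "t", "@": "a", "$": "s"})


def normalise(text: str) -> str:
    """Map common evasion tricks back to a canonical spelling."""
    # Decompose accented letters and drop the combining marks ("Ú" -> "u").
    text = unicodedata.normalize("NFKD", text)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    text = text.lower().translate(LEET)
    # Remove separators wedged between letters ("i.n.s.u.l.t.o" -> "insulto").
    text = re.sub(r"(?<=\w)[.\-_*]+(?=\w)", "", text)
    # Collapse repeated characters ("insuuulto" -> "insulto").
    return re.sub(r"(.)\1+", r"\1", text)


# Normalise the lexicon the same way so both sides are comparable.
NORMALISED_TERMS = {normalise(t) for t in WORD_TERMS}


def matches(text: str) -> set:
    """Return the lexicon entries found in `text` after normalisation."""
    norm = normalise(text)
    # Whole-token matching avoids flagging innocent words that merely
    # contain a listed term as a substring.
    tokens = set(re.findall(r"\w+", norm))
    hits = {t for t in NORMALISED_TERMS if t in tokens}
    hits |= {e for e in EMOJI_TERMS if e in norm}
    return hits
```

Collapsing repeats also merges legitimate double letters such as Spanish "ll" and "rr". That is why the lexicon passes through the same `normalise` function as the incoming text.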

X recently cut local moderation staff, worsening lag during January football derbies. Despite pockets of progress, removal latency still fuels outbreaks of violence. The next section explores how platform design choices magnify the damage done during that delay.

Algorithmic Amplification Under Scrutiny

Recommendation engines rank content using engagement signals that reward outrage. As a result, a single slur can travel farther than an official correction. NGO studies found North African migrants targeted in 35 % of monitored hostile traffic during 2024, while 17.6 % of replies to climate scientists on X in sampled threads were hateful. Algorithmic Content Moderation can interrupt amplification by demoting violating posts before they reach recommendation feeds. However, Spanish legal scholars warn that opaque demotion criteria complicate appeals and risk discriminatory bias. HODIO therefore plans to audit ranking impacts alongside raw removal counts.

LaLiga analysts linked reaction emojis to algorithmic boosts that intensify abusive chains. Telegram channels also recycle screenshots of X posts, carrying hateful content beyond the reach of any single platform’s policy. OBERAXE researchers plan synthetic experiments to quantify causal links between ranking and harassment. Amplification controls may prove more decisive than deletions alone. Next, we consider the group most vulnerable to unchecked virality: minors.
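Demotion can be expressed as a penalty inside the ranking function instead of a deletion. A minimal sketch follows. It assumes a hypothetical classifier that returns a probability of violation per post and an engagement score from the recommender. The threshold and demotion factor are illustrative, since platforms do not publish their actual formulas.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Candidate:
    post_id: str
    engagement: float   # hypothetical engagement-based ranking score
    p_violation: float  # hypothetical classifier probability of hate speech


def rank_with_demotion(candidates: List[Candidate], threshold: float = 0.8,
                       demotion: float = 0.1) -> List[str]:
    """Rank by engagement, scaling down likely violations before sorting."""
    def score(c: Candidate) -> float:
        # Posts at or above the threshold keep only a fraction of their score,
        # so outrage-driven engagement no longer lifts them to the top.
        factor = demotion if c.p_violation >= threshold else 1.0
        return c.engagement * factor

    return [c.post_id for c in sorted(candidates, key=score, reverse=True)]


if __name__ == "__main__":
    feed = [
        Candidate("slur", engagement=950.0, p_violation=0.93),
        Candidate("correction", engagement=400.0, p_violation=0.02),
        Candidate("match-report", engagement=300.0, p_violation=0.05),
    ]
    print(rank_with_demotion(feed))  # ['correction', 'match-report', 'slur']
```

With `demotion=1.0` the high-engagement slur would rank first. Logging which threshold and factor applied to each post is what would let an auditor or an appeals body reconstruct a demotion decision.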

Youth Exposure And Protection

FAD Juventud reports that three-quarters of Spanish teenagers encounter online hate every week. The government therefore proposes barring users under 16 from social networks. Child-rights groups welcome the debate but flag the enforcement challenges posed by VPNs and foreign platforms. Digital-literacy educators, meanwhile, argue that proactive skills matter more than blanket bans, and their curricula now include sessions on spotting hateful memes before sharing them. Several schools are piloting Algorithmic Content Moderation dashboards in classrooms to visualise how slurs spread in real time.

Teachers in Valencia reported higher engagement when visual dashboards replaced abstract lectures, and regional authorities are now funding pilot courses for the 2026 school year. Strong youth defences could choke off future recruitment by extremist movements. Nevertheless, wider society still grapples with questions of legal balance, addressed next.

Balancing Rights And Risks

Article 510 of the Spanish Penal Code criminalises incitement to hatred or violence against protected groups and sets harsher penalties when the offence is committed online. However, civil-liberties NGOs fear that over-broad enforcement may chill legitimate dissent. Legal expert Marta Gil notes that clear intent thresholds remain essential. Transparency reports also rarely disclose demotion algorithms, which complicates judicial review. Policymakers are therefore drafting appeal windows and audit rights for affected accounts, and Algorithmic Content Moderation will need explainable models to satisfy those safeguards.
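Explainability and appeal rights both depend on keeping a structured record of each automated decision. The following hypothetical sketch shows such a record. The field names and the 14-day appeal window are assumptions, since the draft rules described here do not specify them.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timedelta, timezone
from typing import List

# Hypothetical appeal window; the draft rules described here do not fix one.
APPEAL_WINDOW = timedelta(days=14)


@dataclass
class ModerationDecision:
    """Auditable record of one automated moderation action (illustrative schema)."""
    post_id: str
    action: str          # e.g. "remove" or "demote"
    model_version: str
    score: float         # classifier output that triggered the action
    threshold: float     # decision threshold in force at the time
    decided_at: datetime
    reasons: List[str] = field(default_factory=list)  # human-readable grounds

    def appeal_deadline(self) -> datetime:
        return self.decided_at + APPEAL_WINDOW

    def can_appeal(self, now: datetime) -> bool:
        return now <= self.appeal_deadline()

    def to_audit_json(self) -> str:
        """Serialise the record, including its deadline, for auditors."""
        record = asdict(self)
        record["decided_at"] = self.decided_at.isoformat()
        record["appeal_deadline"] = self.appeal_deadline().isoformat()
        return json.dumps(record, ensure_ascii=False, sort_keys=True)
```

Storing the score, threshold, and model version alongside plain-language reasons lets a reviewer see why a post crossed the line. It also allows a court to compare decisions made under different model versions.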

Court rulings already show divergent interpretations of intent, creating legal unpredictability. Meanwhile, platform terms of service sometimes go beyond legal standards, raising jurisdictional puzzles. Legal scholars recommend sandboxes where companies can test interventions under judicial oversight. Lawmakers seek a balance between security and open discourse. Professionals can enhance their expertise with the AI+ UX Designer™ certification. Finally, we assess the strategic implications for businesses and technologists.

Conclusion And Outlook Ahead

Spain’s fight against digital hate now hinges on accurate metrics, swift removals, and algorithmic restraint. HODIO and related laws could transform accountability if platforms cooperate in good faith. Algorithmic Content Moderation offers proactive scale that human reviewers alone cannot match. Nevertheless, transparency, appeals, and youth education must mature in parallel.

Engineers and policy teams should therefore monitor the forthcoming HODIO dashboards and refine their models accordingly. Readers seeking a competitive advantage should explore certifications and join Spain’s rapidly evolving safety discussion. Take the next step and strengthen your skill set today. Effective Algorithmic Content Moderation will define responsible innovation in the coming year, but long-term trust depends on embedding it across product lifecycles, audits, and training.

Businesses building recommender systems should document their training data and risk scenarios. Public trust will also hinge on visible progress before the 2027 general election. Stakeholders who adapt early will shape continental norms and capture emerging export markets.