AI CERTS

ECRI Alert Spurs AI Patient Safety Overhaul

ECRI’s latest alert puts diagnostic AI at the top of the patient safety agenda, while regulators scramble to update rules that once assumed software stayed static after clearance. This report examines the evidence, the open risks, and emerging mitigation strategies, and it offers concrete steps clinical executives can take before the next algorithm arrives in their inbox. Success will demand cultural change as much as technical refinement, so stakeholders must understand why ECRI is sounding the alarm and how peer-reviewed data corroborate the warning. Unchecked optimism, by contrast, could leave hospitals facing preventable harm, reputational damage, and rising claims.

ECRI Flags Top Concern

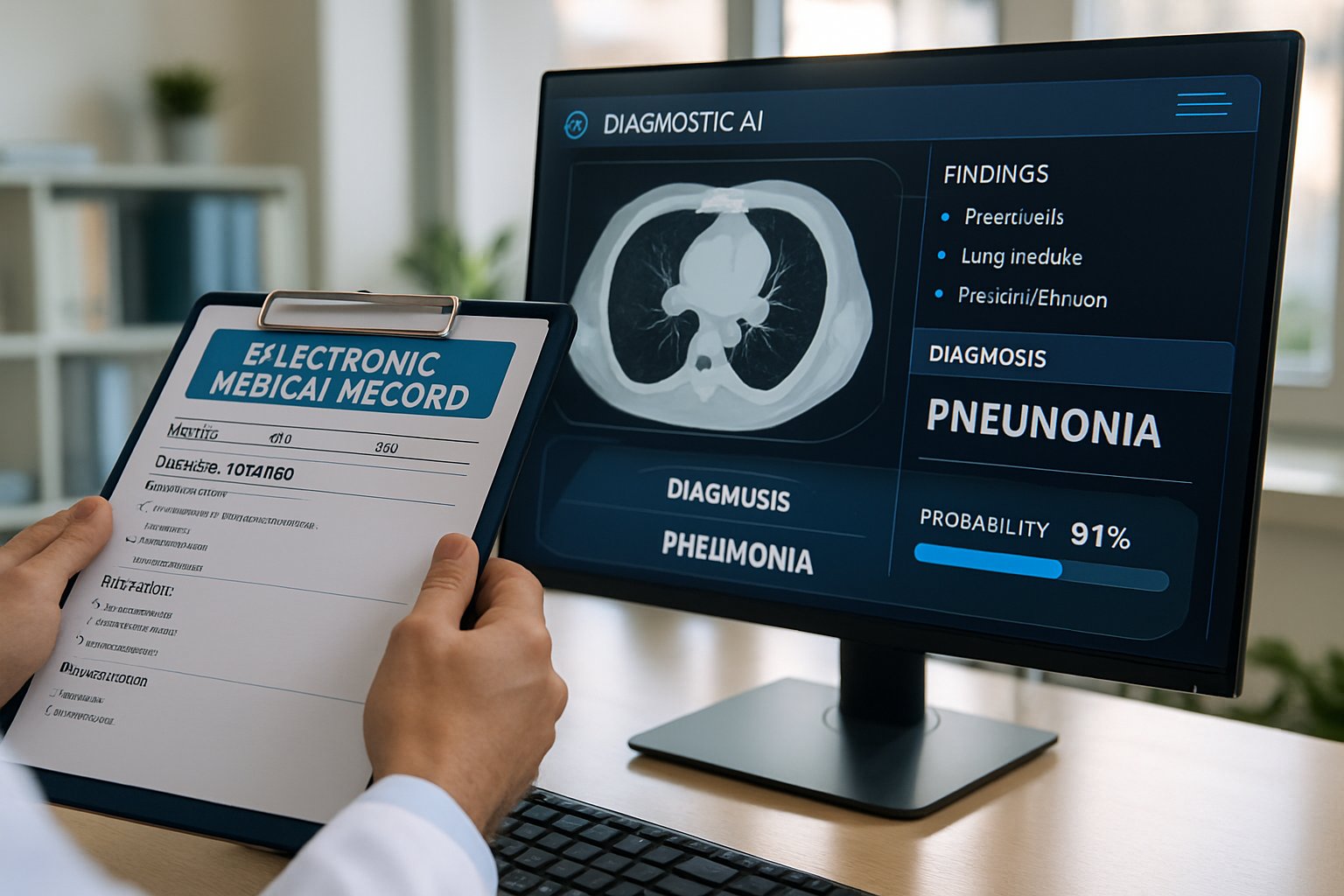

ECRI placed the AI diagnostic dilemma at number one in its 2026 Top Patient Safety Concerns report. The nonprofit highlights over-reliance, biased training data, and fading clinician vigilance as central threats, and it urges balanced adoption, local validation, and transparent disclosure whenever algorithms inform bedside decisions. Diagnostic AI often enters through medical imaging workflows, yet its influence quickly spreads to laboratory and triage systems. The spotlight on AI Patient Safety is therefore forcing institutions to reassess their deployment roadmaps.

To help organizations respond, ECRI scheduled a public webinar on March 20 to translate its high-level advice into checklist actions. Healthcare executives expect templates for risk registers, algorithm inventories, and ongoing competency training to follow the event.

These initiatives signal leadership commitment but leave practical questions unanswered, so stakeholders should next scrutinize the empirical failure modes.

Failure Modes Quantified

Peer-reviewed evidence suggests these blind spots are common. For example, a 2025 Communications Medicine study found that models missed 66 percent of synthesized critical events. The authors recommended scenario-based tests that incorporate medical knowledge before frontline deployment. Although the paper examined vital-sign predictions, similar gaps can surface in medical imaging classifiers when edge cases arise.
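To make scenario-based testing concrete, here is a minimal Python sketch, assuming a hypothetical `toy_risk_model` that stands in for a deployed predictor and a handful of hand-built deteriorating vital-sign scenarios. It is not the study's method or data; it only shows how a miss rate on synthesized critical events can be computed.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class VitalSigns:
    heart_rate: float   # beats per minute
    systolic_bp: float  # mmHg
    resp_rate: float    # breaths per minute
    spo2: float         # oxygen saturation, percent


def toy_risk_model(v: VitalSigns) -> float:
    """Hypothetical stand-in for a deployed model, returning a risk score in [0, 1].

    It deliberately looks only at heart rate, so it has a built-in blind spot
    for deterioration that shows up in other vital signs.
    """
    return min(max((v.heart_rate - 60) / 100, 0.0), 1.0)


def synthesize_critical_cases() -> List[VitalSigns]:
    """Hand-built scenarios a clinician would treat as critical (illustrative values)."""
    return [
        VitalSigns(heart_rate=150, systolic_bp=85, resp_rate=28, spo2=90),  # tachycardia with hypotension
        VitalSigns(heart_rate=130, systolic_bp=88, resp_rate=26, spo2=91),  # sepsis-like picture
        VitalSigns(heart_rate=45, systolic_bp=70, resp_rate=8, spo2=85),    # bradycardic collapse
        VitalSigns(heart_rate=95, systolic_bp=80, resp_rate=32, spo2=84),   # respiratory failure
    ]


def miss_rate(model: Callable[[VitalSigns], float],
              cases: List[VitalSigns],
              threshold: float = 0.5) -> float:
    """Fraction of critical cases the model fails to flag at the alert threshold."""
    missed = sum(1 for case in cases if model(case) < threshold)
    return missed / len(cases)


if __name__ == "__main__":
    rate = miss_rate(toy_risk_model, synthesize_critical_cases())
    print(f"Missed {rate:.0%} of synthesized critical scenarios")
```

The toy model flags the two tachycardic cases but misses the bradycardic and respiratory-failure scenarios, so it reports a 50 percent miss rate. A real test suite would draw its scenarios from clinical expertise and cover far more cases.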

Incident reports collected by Johns Hopkins researchers also link algorithmic confusion with delayed stroke triage and sepsis escalation. Such misses undercut AI Patient Safety claims that promise faster, more accurate decision support, and ECRI cites this kind of evidence to explain its diagnostic dilemma headline. Hospitals rarely publish these near-miss incidents, which makes it harder for the industry to learn from them.

Evidence shows algorithms can underperform dramatically under stress. Therefore, regulation must evolve alongside deployment speed.

Regulatory Landscape Rapidly Shifts

Regulators acknowledge the tension between innovation and oversight. The FDA has responded with iterative guidance, including its Predetermined Change Control Plan (PCCP) guidance for AI-enabled device software functions, finalized in December 2024. A PCCP lets vendors pre-define planned algorithm updates while committing to continuous monitoring. The agency also maintains an AI-Enabled Medical Devices list documenting hundreds of authorizations, many of them medical imaging triage tools for stroke, lung nodules, or breast density assessment.
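As a rough illustration of the pre-defined update idea, the Python sketch below checks a candidate model update against acceptance criteria declared in advance. The class names, metrics, and thresholds are hypothetical assumptions chosen for the example; FDA guidance does not prescribe this code or these numbers.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class AcceptanceCriteria:
    """Hypothetical bounds a vendor might pre-specify for future updates."""
    min_sensitivity: float
    min_specificity: float
    max_sensitivity_drop: float  # allowed decline versus the authorized version


@dataclass(frozen=True)
class ValidationResult:
    sensitivity: float
    specificity: float


def plan_violations(baseline: ValidationResult,
                    candidate: ValidationResult,
                    criteria: AcceptanceCriteria) -> List[str]:
    """List every pre-specified criterion the candidate breaks; empty means in scope."""
    problems = []
    if candidate.sensitivity < criteria.min_sensitivity:
        problems.append("sensitivity below floor")
    if candidate.specificity < criteria.min_specificity:
        problems.append("specificity below floor")
    if baseline.sensitivity - candidate.sensitivity > criteria.max_sensitivity_drop:
        problems.append("sensitivity dropped too far from baseline")
    return problems


# Illustrative numbers only.
criteria = AcceptanceCriteria(min_sensitivity=0.90, min_specificity=0.80, max_sensitivity_drop=0.02)
baseline = ValidationResult(sensitivity=0.94, specificity=0.85)
candidate = ValidationResult(sensitivity=0.91, specificity=0.86)
print(plan_violations(baseline, candidate, criteria))
```

Here the candidate clears both absolute floors but falls 0.03 below the baseline sensitivity, more than the 0.02 allowance, so the update would land outside the pre-agreed plan. Declaring both absolute floors and a maximum drop guards against an update that stays "good enough" on paper while quietly regressing.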

Outside the United States, regulators and health systems are moving too, with new EU MDR guidance and NHS frameworks. However, postmarket surveillance requirements still vary widely, confusing multinational vendors. Standardized metrics would strengthen AI Patient Safety across borders.

- AI/ML SaMD Action Plan 2021

- Predetermined Change Control Plan final guidance 2024

- Transparency guiding principles 2024

- Lifecycle monitoring proposals 2025

Together, these documents reveal a new regulatory cadence.

Guidance now addresses adaptive models and transparency. Nevertheless, industry adoption is outpacing enforcement, and that gap shapes the risks hospitals take on.

Industry Adoption And Risks

Commercial momentum keeps accelerating despite safety warnings. Siemens Healthineers, GE HealthCare, and multiple startups unveil new diagnostic releases every conference season, many aimed at medical imaging bottlenecks such as brain hemorrhage detection or CT dose reduction. Hospitals hope these tools will ease radiologist shortages and raise throughput, and vendor marketing routinely invokes AI Patient Safety to reassure procurement committees.

However, over-reliance can erode clinician critical thinking, a threat many safety advocates underscore. Bias, calibration drift, and hallucinations from large language models further complicate trust. Consequently, liability exposure grows when staff accept outputs without verification.

Adoption unlocks efficiency yet introduces fresh error pathways. Therefore, governance structures must mature quickly.

Governance And Mitigation Steps

Robust governance blends policy, training, and technical safeguards. ECRI advises inventorying every algorithm, mapping its data flows, and assigning a clinical owner. Professionals can deepen their oversight skills through the AI Ethics Business Certification endorsed by industry groups. Scenario-based testing should also include adversarial, rare, and deteriorating cases before go-live.
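The inventory step lends itself to a simple data structure. The Python sketch below uses hypothetical field names and example entries, not any ECRI template, to record each algorithm's owner and data flows and to report entries that lack them or have not been scenario-tested.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class AlgorithmRecord:
    """One entry in a hypothetical enterprise algorithm inventory."""
    name: str
    vendor: str
    version: str
    clinical_owner: Optional[str] = None                   # accountable clinician, if assigned
    data_inputs: List[str] = field(default_factory=list)   # upstream systems feeding the model
    data_outputs: List[str] = field(default_factory=list)  # where results surface for clinicians
    scenario_tested: bool = False                          # passed pre-go-live scenario testing


def governance_gaps(inventory: List[AlgorithmRecord]) -> Dict[str, List[str]]:
    """Map each algorithm name to the governance gaps found for it."""
    gaps: Dict[str, List[str]] = {}
    for record in inventory:
        issues = []
        if not record.clinical_owner:
            issues.append("no clinical owner")
        if not record.data_inputs or not record.data_outputs:
            issues.append("data flow not mapped")
        if not record.scenario_tested:
            issues.append("no scenario testing")
        if issues:
            gaps[record.name] = issues
    return gaps


inventory = [
    AlgorithmRecord("ich-triage", "Vendor A", "2.1", clinical_owner="Neuroradiology lead",
                    data_inputs=["PACS"], data_outputs=["radiology worklist"], scenario_tested=True),
    AlgorithmRecord("sepsis-alert", "Vendor B", "1.4", data_inputs=["EHR vitals"]),
]
print(governance_gaps(inventory))
```

Only the hypothetical `sepsis-alert` entry is reported, because it has no owner, no mapped output, and no scenario testing. Keeping the inventory as structured records rather than a free-text spreadsheet makes such gap checks repeatable before each governance meeting.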

Governance cannot eliminate liability, however, if human reviewers skip final checks. Many hospitals therefore now require documented human sign-off for every AI-assisted diagnosis. Such dual control supports AI Patient Safety while preserving clinician accountability, and governance dashboards increasingly track metrics such as calibration scores and override rates.
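To show what such dashboard metrics might involve, the Python sketch below computes an override rate and a Brier score from signed-off reads. The record layout is a hypothetical assumption, and the Brier score serves only as a simple stand-in for a "calibration score"; it blends calibration with discrimination, so a program might prefer calibration curves or other measures.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SignedOffRead:
    """One AI-assisted read with a documented clinician sign-off (hypothetical schema)."""
    ai_probability: float     # model's predicted probability of the finding
    ai_positive: bool         # model's flag at its operating threshold
    clinician_positive: bool  # clinician's signed-off final call
    confirmed_positive: bool  # outcome confirmed later, e.g. by follow-up


def override_rate(reads: List[SignedOffRead]) -> float:
    """Share of reads where the signed-off decision disagreed with the AI flag."""
    return sum(r.ai_positive != r.clinician_positive for r in reads) / len(reads)


def brier_score(reads: List[SignedOffRead]) -> float:
    """Mean squared gap between predicted probability and confirmed outcome (0 is perfect)."""
    return sum((r.ai_probability - float(r.confirmed_positive)) ** 2 for r in reads) / len(reads)


reads = [
    SignedOffRead(0.9, True, True, True),
    SignedOffRead(0.8, True, False, False),   # clinician overrode a false alarm
    SignedOffRead(0.2, False, False, False),
    SignedOffRead(0.3, False, True, True),    # clinician caught a miss
]
print(f"override rate {override_rate(reads):.2f}, Brier score {brier_score(reads):.3f}")
```

With these four illustrative reads, the sketch reports an override rate of 0.50 and a Brier score of about 0.295. Tracking both matters: a rising override rate can signal eroding trust or model drift, while a worsening Brier score points at the probabilities themselves.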

Structured oversight balances innovation with prudence. Unresolved liability debates, however, still cloud investment decisions.

Liability And Trust Questions

Assigning blame after an AI-related miss remains murky. Insurers are analyzing early malpractice claims to price the emerging risk. Some cases target vendors, yet most plaintiffs pursue clinicians, amplifying liability anxiety. ECRI recommends clearly documenting the algorithm's role and disclosing it to patients so that decision accountability is shared.

Legal scholars also urge updating informed consent to reference algorithmic uncertainty explicitly. Transparent communication builds confidence in AI Patient Safety and reduces surprise should errors arise. Case law should clarify precedent over the coming years.

Responsibility gaps threaten adoption momentum. Nevertheless, strategic roadmaps can align legal, clinical, and technical teams.

Strategic Roadmap For Leaders

Executives must treat algorithms as evolving staff members that require orientation and supervision. Successful programs therefore start with enterprise principles covering procurement, validation, monitoring, and decommissioning. Pilot rollouts often focus on medical imaging tasks to refine playbooks before expanding to text analytics. Multidisciplinary committees should also review performance dashboards monthly and escalate anomalies promptly.
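A monthly review needs a working definition of an anomaly. The Python sketch below assumes one simple, hypothetical rule: escalate any month whose override rate drifts more than a fixed tolerance from the average of an initial baseline window. The metric, window, and tolerance are illustrative choices; real programs would set them with their clinical owners and might use statistical process control instead.

```python
from statistics import mean
from typing import Dict, List


def months_to_escalate(monthly_metric: Dict[str, float],
                       baseline_months: int = 3,
                       tolerance: float = 0.05) -> List[str]:
    """Return months whose metric strays more than `tolerance` from the baseline mean.

    Assumes the dict is ordered chronologically (Python dicts keep insertion order)
    and that the first `baseline_months` entries reflect accepted performance.
    """
    months = list(monthly_metric)
    if len(months) <= baseline_months:
        raise ValueError("need at least one month beyond the baseline window")
    baseline = mean(monthly_metric[m] for m in months[:baseline_months])
    return [m for m in months[baseline_months:]
            if abs(monthly_metric[m] - baseline) > tolerance]


# Illustrative override rates, not real data.
override_rates = {"2026-01": 0.10, "2026-02": 0.12, "2026-03": 0.11,
                  "2026-04": 0.13, "2026-05": 0.19}
print(months_to_escalate(override_rates))
```

Against a baseline of roughly 0.11, April's 0.13 stays within tolerance while May's 0.19 is flagged for escalation. A fixed tolerance is easy for a committee to reason about, at the cost of ignoring month-to-month variability that a control chart would account for.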

Dedicated counsel should track regulatory updates, interpret PCCP obligations, and adjust contracts to mitigate liability. Ultimately, sustained AI Patient Safety depends on aligning incentives with transparent metrics and continuous education.

Roadmaps operationalize principle into practice. Consequently, organizations can innovate confidently while protecting patients.

AI Patient Safety now shapes regulatory agendas, board discussions, and procurement checklists across healthcare. However, blind spots, bias, and liability uncertainty still threaten trust. ECRI’s top concern, FDA guidance, and academic evidence all underscore the same message: leaders must pair technical validation with robust governance, transparent communication, and ongoing training. Professionals should pursue continuous education, including the AI Ethics Business Certification, to steward these systems responsibly. Start by auditing your current algorithms today, and champion a culture that prizes vigilance and excellence.